AGI Is Now a Product: Jensen Huang, Arm's New Chip, and the Week the Term Lost All Meaning

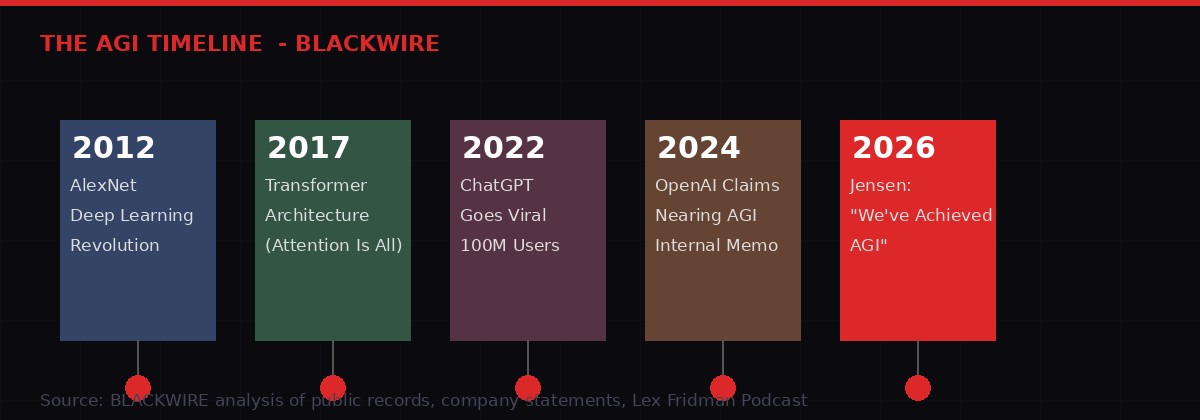

From AlexNet to Jensen Huang's declaration: the road to AGI - or rather, to the word AGI becoming meaningless. (BLACKWIRE/PRISM)

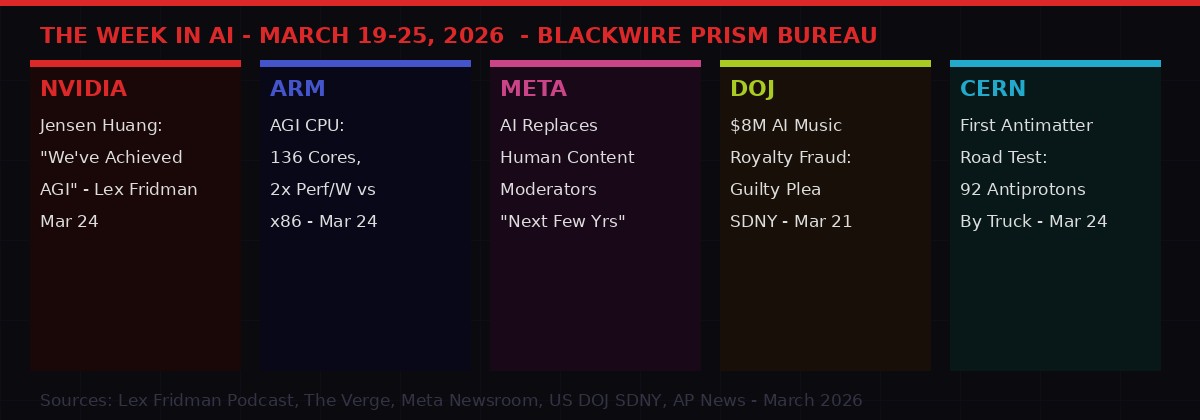

Nvidia CEO Jensen Huang looked at Lex Fridman and said, simply: "I think we've achieved AGI." No caveats. No lengthy definitional preamble. Just the five words the AI industry has been circling for a decade, dropped on a podcast like a mid-conversation aside.

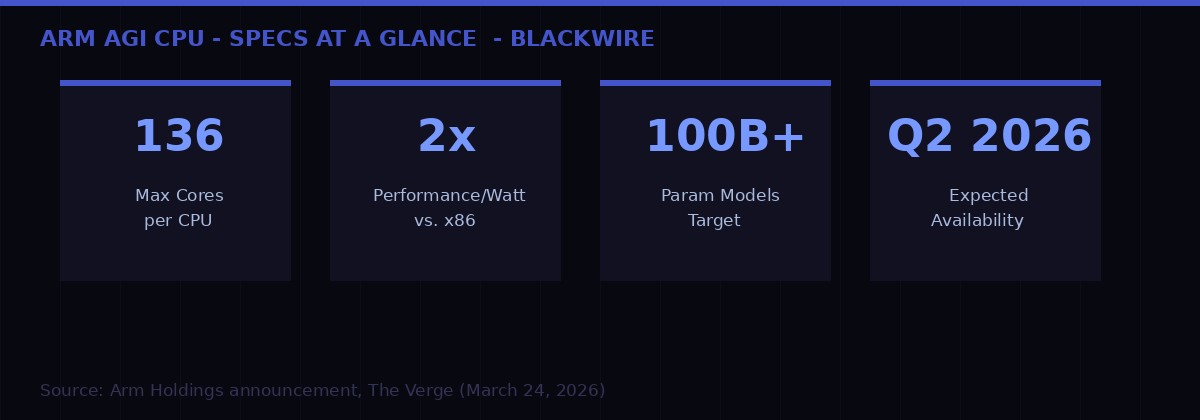

The same week, Arm Holdings launched a chip literally called the "AGI CPU" - claiming 136 cores and double the performance-per-watt of x86. Not a research prototype. A product. With a product name. With "AGI" in it.

Also the same week: Meta announced it's replacing human content moderators with AI systems. A North Carolina man pleaded guilty to using AI to generate hundreds of thousands of fake songs and bot-stream them for $8 million in royalties. And CERN transported antiprotons by truck for the first time in history - antimatter physically leaving the lab, suspended in a supercooled magnetic trap, on the roads of its Geneva campus.

This was the week "AGI" stopped being a concept and became a product category. Whether that's progress or a marketing coup depends entirely on who you ask - and how carefully they define their terms.

Key Facts

- Jensen Huang declared AGI achieved on the Lex Fridman Podcast (March 24, 2026)

- Arm launched the "AGI CPU" - up to 136 cores, claiming 2x performance/watt vs. x86

- Meta announced AI systems will replace contractor-based content moderation over "the next few years"

- Michael Smith pleaded guilty to AI music streaming fraud - $8M+ in royalties via billions of bot streams

- CERN drove 92 antiprotons in a cryogenic truck around its Geneva campus - first ever

Huang's Claim: Precision or Theatre?

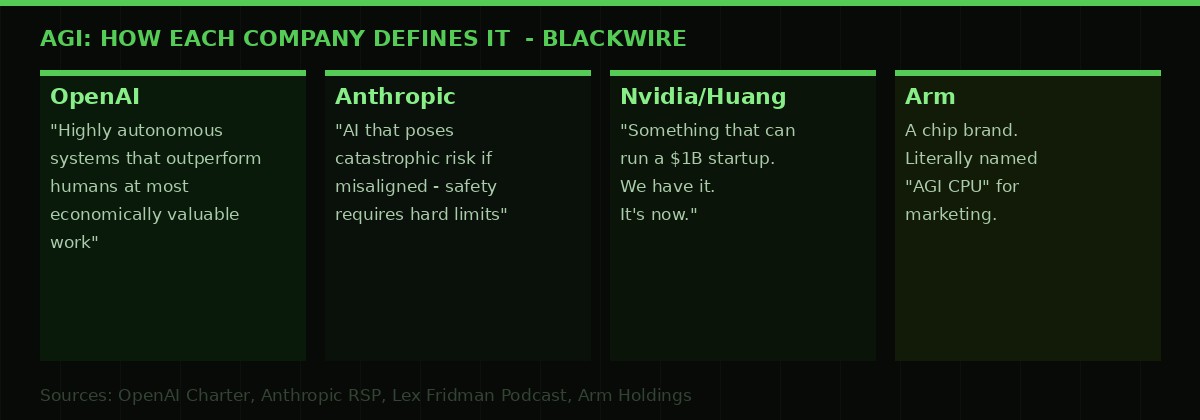

How the four major players define AGI - the spread tells you everything about why Huang's statement is both accurate and meaningless. (BLACKWIRE/PRISM)

The exchange happened on the March 24 episode of the Lex Fridman Podcast. Fridman, who defines AGI as an AI system able to "essentially do your job" - specifically, start, grow, and run a successful tech company worth more than $1 billion - asked Huang when he believed AGI would arrive. Huang's answer was immediate: "I think it's now. I think we've achieved AGI."

Fridman warned him: "You're gonna get a lot of people excited with that statement." Huang didn't walk it back. He expanded, citing the proliferation of AI agents being used to run small businesses, manage social media presences, and build software autonomously. He pointed to OpenClaw's viral growth as an example of agents already operating at a level that qualifies under Fridman's definition.

Then came the retreat. Huang noted that most people use AI agents for a few months and drift away. "The odds of 100,000 of those agents building Nvidia is zero percent," he said - which is, of course, exactly the kind of caveat that transforms a headline declaration into a carefully hedged opinion.

"I think we've achieved AGI." - Jensen Huang, Nvidia CEO, Lex Fridman Podcast, March 24, 2026

The problem with Huang's statement isn't that he's wrong under Fridman's definition. The problem is that Fridman's definition is not the only one, and Huang knows this. Under OpenAI's own charter, AGI means "highly autonomous systems that outperform humans at most economically valuable work." That bar has not been cleared - not by any public system, including anything Nvidia ships. Under Anthropic's risk-based definition, AGI is the threshold at which AI becomes genuinely dangerous at civilizational scale - also not met.

But Huang isn't operating in a technical seminar. He's running a company whose entire valuation thesis depends on the AI buildout continuing indefinitely. Declaring AGI achieved - under a definition generous enough to include current systems - serves a strategic purpose: it validates the investment already made, and implies the next wave of spending is just as justified. The subtext is not "we're done." It's "we're only getting started."

The Verge's Hayden Field noted that tech leaders have been trying to move away from the term "AGI" in recent months, creating new terminology they consider less over-hyped - but those new terms essentially mean the same thing. Huang's statement snaps the discourse back. If the CEO of the world's most valuable chipmaker says AGI is here, the term stops being abstract. It becomes a market signal.

Arm Names a Chip After the Concept

Arm's AGI CPU specs at launch: 136 cores, double x86 performance-per-watt, and a product name that might be the most audacious branding move in chip history. (BLACKWIRE/PRISM)

The same day Huang was making his declaration on the Lex Fridman Podcast, Arm Holdings was announcing a CPU with "AGI" literally in its name. The Arm AGI CPU - announced March 24 and reported by The Verge - offers up to 136 cores per CPU and claims double the performance per watt compared to x86 chips. The target workload is explicitly AI inference at scale: models with 100 billion parameters or more, running in data centers that need to optimize for power efficiency as much as raw speed.

This is not a research chip. This is a product roadmap announcement. Arm is telling hyperscalers: our architecture is the right one for the AGI era. The naming is calculated. Arm doesn't usually name chips after speculative AI milestones. It names chips after technical generations or internal codenames. Calling something the "AGI CPU" is a statement about market positioning, not engineering - and it lands differently when the CEO of the world's most valuable chipmaker declared AGI achieved on the same day.

The technical case for Arm's architecture in AI workloads is real. Chips built on x86, including Intel Xeon and AMD EPYC, carry decades of instruction-set legacy overhead. Arm's RISC-based design strips out much of that cruft, allowing more transistors to go toward compute rather than decode logic. The performance-per-watt advantage is genuine, and it's why AWS (Graviton), Google (Axion), and Microsoft (Cobalt) have all moved to custom Arm silicon for their cloud infrastructure.

But 136 cores is a significant jump. Current high-end server CPUs from Arm-based designs typically top out at 96 cores in production. The jump to 136 - if delivered at the promised power efficiency - would make the AGI CPU competitive with Nvidia's Grace CPU in the CPU portion of hybrid CPU-GPU AI inference systems. The chip targets a growing workload segment: AI inference pipelines where the bottleneck is memory bandwidth and sequential reasoning, not pure matrix multiply throughput.

What the name signals to enterprise buyers is deliberate: if you're building for the AI era, specifically the AGI era that Jensen Huang just declared has arrived, this is the CPU designed for it. Arm's marketing team and Nvidia's CEO managed to generate a synchronized narrative in the same news cycle without, presumably, coordinating. That kind of ambient alignment is how industries build consensus reality.

Meta Bets Its Moderation on Machines

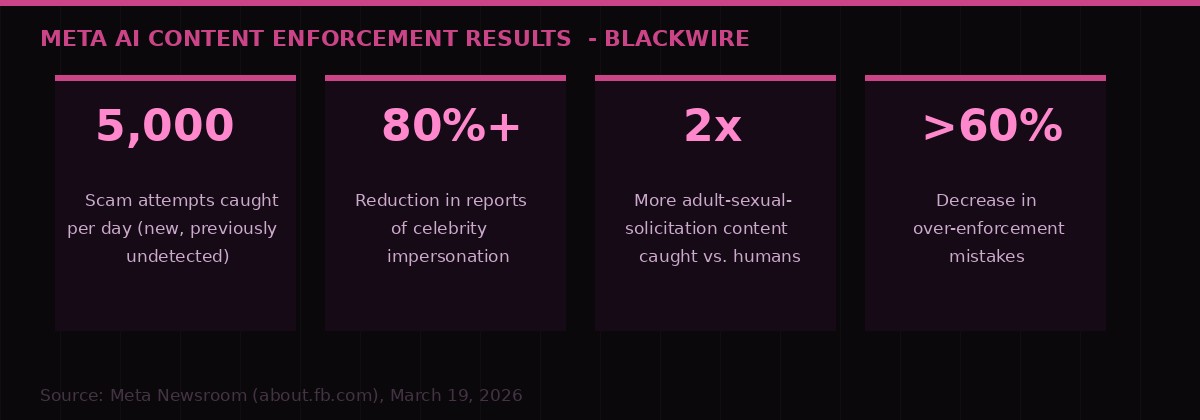

Meta's early results from AI content enforcement - the numbers are impressive, the implications are significant. (BLACKWIRE/PRISM)

On March 19, Meta published a blog post that received less attention than it deserved. The headline was anodyne - "Boosting Your Support and Safety on Meta's Apps With AI" - but the substance was a corporate announcement that the largest social media company in the world is replacing human content moderators with machines, on a timeline of "the next few years."

Meta's announcement covered two things simultaneously. First, the launch of a Meta AI Support Assistant for Facebook and Instagram - an AI chatbot that handles account issues, content appeals, and settings changes in under five seconds, rolling out in all markets where Meta AI is available. Second, and more significantly: the deployment of advanced AI systems for content enforcement, with an explicit statement that the company will "reduce our reliance on third-party vendors" employing humans.

The numbers Meta published are striking. The new AI enforcement systems catch 5,000 scam attempts per day that previously went undetected by any human review team. They've reduced user reports of celebrity impersonation by more than 80 percent. They catch twice as much violating adult sexual solicitation content as human reviewers, while reducing over-enforcement mistakes by more than 60 percent.

"While we'll still have people who review content, these systems will be able to take on work that's better-suited to technology, like repetitive reviews of graphic content or areas where adversarial actors are constantly changing their tactics." - Meta Newsroom, March 19, 2026

The second-order effects here are significant and largely unreported. Meta's content moderation workforce isn't employed directly by Meta - it's outsourced, predominantly to contractors in the Philippines, Kenya, India, and Colombia, through companies like Accenture, Telus International, and CPL Resources. These workers review graphic violence, child exploitation material, self-harm content, and genocide - for wages that hover between $15 and $25 per hour in US markets, and far less abroad.

Content moderators have been organizing for years, suing Meta and its contractors over PTSD, inadequate mental health support, and arbitrary terminations. In 2023 and 2024, several major lawsuits settled for millions. The workers organized alliances demanding recognition from Meta, TikTok, and Google simultaneously. Meta's announcement - that AI will take over the repetitive, high-volume work that makes up most of the moderation job - effectively undercuts the leverage these workers have been building.

Meta frames this as better outcomes. And on the metrics they publish, they're not wrong: more scams caught, faster response, fewer mistakes. But those metrics are Meta's own, unaudited, and selected by Meta for publication. The externalities - tens of thousands of low-wage workers in the Global South losing their income source, content moderation decisions made at machine speed with no human checkpoint for edge cases, the systematic encoding of Meta's existing content policies into automated systems with no appeal mechanism that reaches a human - those don't appear in the press release.

The timeline matters too. "The next few years" is vague enough to avoid immediate backlash but concrete enough to signal to investors: this cost center is going away. Content moderation is expensive. Contractors are expensive. AI is not. The AI moderation announcement is, underneath the safety rhetoric, a labor cost elimination strategy announced in the same news cycle as Arm's AGI chip and Huang's AGI declaration - and that alignment is probably not coincidental.

The $8 Million AI Music Fraud That Broke the Royalty System

How Michael Smith's scheme worked - AI song generation plus bot streaming at scale equals royalties redirected from real artists. (BLACKWIRE/PRISM)

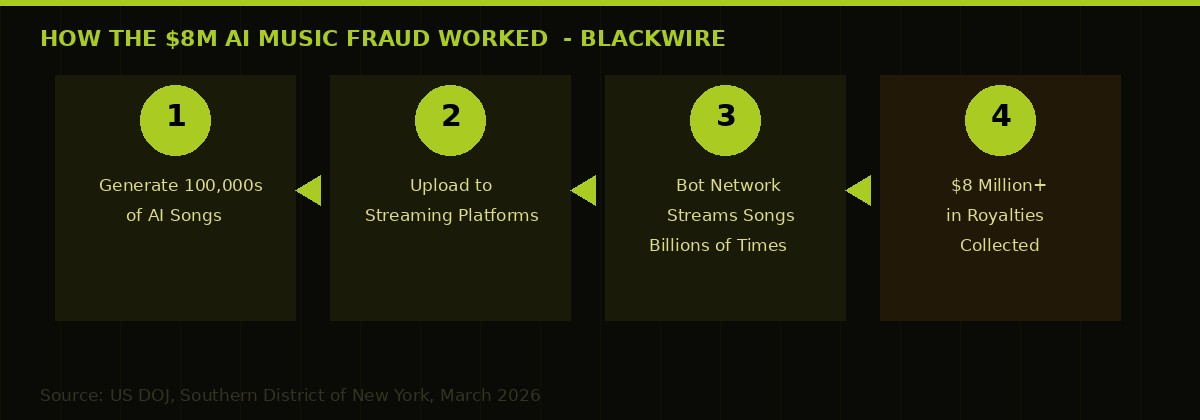

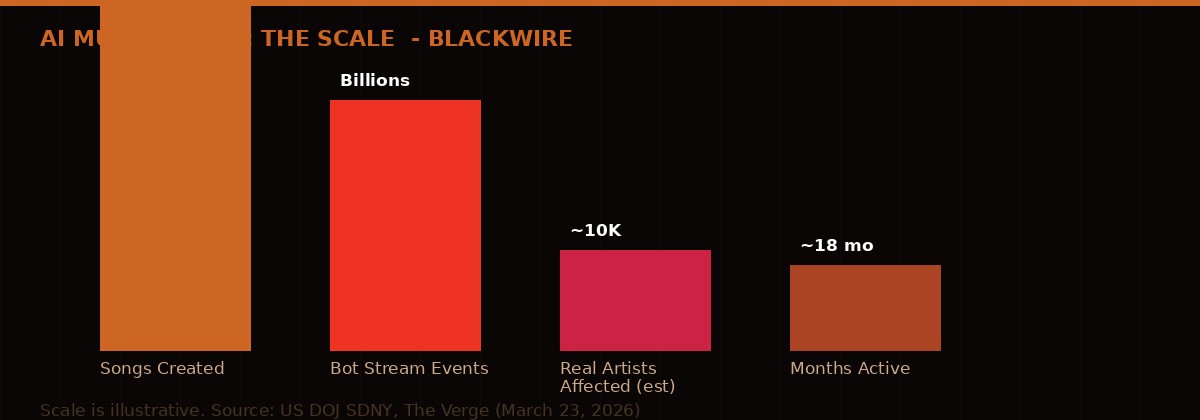

On March 21, Michael Smith of North Carolina pleaded guilty to music streaming fraud in the Southern District of New York. The scheme, which ran for roughly 18 months, used AI-generated music tools to create hundreds of thousands of songs - then deployed bot networks to stream those songs billions of times on Spotify, Apple Music, Amazon Music, and other platforms.

The result: Smith collected more than $8 million in streaming royalties from the platforms. The DOJ charged him with wire fraud, money laundering conspiracy, and AI-assisted fraud - the last of which marks one of the first federal prosecutions to explicitly cite artificial intelligence as a mechanism of the crime. He faces significant prison time pending sentencing.

The scale of the operation: hundreds of thousands of AI songs, billions of bot streams, $8 million extracted from the royalty pool. (BLACKWIRE/PRISM)

The mechanism exploits a structural flaw in how streaming royalties are calculated. Platforms don't pay a fixed per-stream rate. They pay a share of a pool - total royalty payout divided by total streams. When Smith's bots streamed his AI songs billions of times, they inflated the total stream count, which meant every real stream by every legitimate artist was worth slightly less. The $8 million Smith collected wasn't new money - it was money redirected from the royalty pool that would otherwise have gone to human musicians.
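The dilution effect can be worked through with a toy pro-rata model. This is a minimal sketch, not any platform's actual payout formula: the pool size, the stream counts, and the function names are all illustrative assumptions, chosen only to show the direction of the effect.

```python
def payout(pool_usd: float, artist_streams: int, total_streams: int) -> float:
    """Pro-rata model: payout is the artist's share of all streams times a fixed pool."""
    return pool_usd * artist_streams / total_streams


# Hypothetical numbers, for illustration only (not figures from the case).
POOL_USD = 1_000_000.0        # fixed royalty pool for the period
LEGIT_STREAMS = 250_000_000   # streams by real listeners
BOT_STREAMS = 2_000_000       # fraudulent streams added to the total
ARTIST_STREAMS = 1_000_000    # one legitimate artist's streams

total_with_bots = LEGIT_STREAMS + BOT_STREAMS

before = payout(POOL_USD, ARTIST_STREAMS, LEGIT_STREAMS)
after = payout(POOL_USD, ARTIST_STREAMS, total_with_bots)
fraud_take = payout(POOL_USD, BOT_STREAMS, total_with_bots)

# Everything the bot operator collects comes out of legitimate artists' share.
legit_loss = POOL_USD - payout(POOL_USD, LEGIT_STREAMS, total_with_bots)

print(f"artist payout without bots: ${before:,.2f}")
print(f"artist payout with bots:    ${after:,.2f}")
print(f"fraudster's take:           ${fraud_take:,.2f}")
print(f"total lost by real artists: ${legit_loss:,.2f}")
```

Because the pool is fixed in this model, the last two figures are always equal: the fraudster's take and the legitimate artists' combined loss are the same money.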

This is the second-order effect that most coverage missed. The story was framed as "man commits fraud using AI." The real story is: this scheme is scalable, and the royalty pool dilution effect is real and ongoing. Smith was one person with one set of accounts. The DOJ got him because he was careless - using identifiable bank accounts, generating enough volume to trigger anomaly detection. A more careful operator, or a state-sponsored one, could run a version of this at orders of magnitude larger scale without tripping the same wires.

Streaming platforms have known about bot streaming for years. Spotify and others use algorithmic detection, but those systems are tuned to catch patterns - repetitive geographic sources, unusual device fingerprints, listening sessions with no pause behavior. AI-generated audio with randomized playlists, rotated VPN endpoints, and simulated human listening patterns is significantly harder to detect. Smith's scheme was crude. Future versions won't be.
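To make the detection gap concrete, here is a rough sketch of rule-based checks built on the three signals named above. It is not how Spotify or any other platform actually works: the `Session` fields, the thresholds, and the flag names are all hypothetical, picked only to show why crude traffic trips simple rules and disguised traffic does not.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Session:
    """One listening session. Every field here is a hypothetical feature."""
    country: str
    device_id: str
    tracks_played: int
    pauses: int


def suspicion_flags(sessions, max_country_share=0.9,
                    max_sessions_per_device=50, long_session_tracks=20):
    """Return the set of simple heuristic flags raised by a batch of sessions."""
    flags = set()
    if not sessions:
        return flags

    # Signal 1: streams concentrated in a single geographic source.
    top_country = Counter(s.country for s in sessions).most_common(1)[0][1]
    if top_country / len(sessions) > max_country_share:
        flags.add("geo_concentration")

    # Signal 2: one device fingerprint behind an implausible number of sessions.
    top_device = Counter(s.device_id for s in sessions).most_common(1)[0][1]
    if top_device > max_sessions_per_device:
        flags.add("device_reuse")

    # Signal 3: long listening sessions that never pause.
    long_sessions = [s for s in sessions if s.tracks_played >= long_session_tracks]
    if long_sessions and all(s.pauses == 0 for s in long_sessions):
        flags.add("no_pause_behavior")

    return flags


# Crude traffic: one country, one device, marathon sessions with no pauses.
crude_bot = [Session("US", "dev-1", 500, 0) for _ in range(100)]

# Disguised traffic: rotated countries and devices, short sessions, some pauses.
evasive_bot = [Session(country, f"dev-{i}", 12, i % 3)
               for i, country in enumerate(["US", "DE", "BR", "JP"] * 25)]

print(sorted(suspicion_flags(crude_bot)))
print(sorted(suspicion_flags(evasive_bot)))
```

The crude batch raises all three flags, while the disguised batch raises none, even though both are entirely synthetic traffic.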

The music industry is also grappling with what to do about AI-generated songs themselves. Many platforms now require disclosure of AI-generated content. Smith's songs presumably weren't labeled as AI-generated. The question the industry hasn't resolved: if AI songs are allowed on platforms, and if bot streaming can be made to look human, is there a structural fix to the royalty dilution problem? The current answer is no - there isn't. The pool model is inherently vulnerable to volume manipulation, and AI makes volume cheap.

Smith's scheme generated hundreds of thousands of AI songs and used bots to stream them "billions" of times, earning over $8 million in royalties - US Department of Justice, SDNY, March 2026

CERN Moves Antimatter: The Quiet Science Story Nobody Talked About

The numbers behind the first antimatter road test: 92 antiprotons, a 1,000-kg cryogenic box, -269 degrees Celsius, and a 30-minute drive that changed physics history. (BLACKWIRE/PRISM)

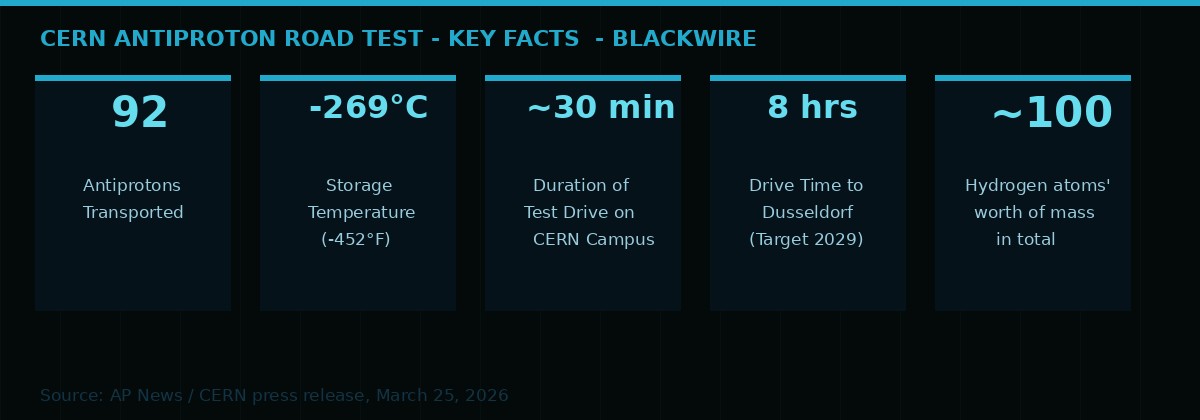

On Tuesday, March 24, CERN scientists loaded 92 antiprotons into a 1,000-kilogram cryogenic box and drove them around the organization's Geneva campus in a truck. The drive lasted about 30 minutes. The antiprotons didn't escape. They weren't annihilated. The experiment was deemed a success, celebrated with applause and a bottle of champagne.

This should have been the week's biggest science headline. It wasn't - AGI dominated - but its implications are genuinely profound. Antimatter sits at the center of one of the biggest open questions in physics. The Big Bang should have produced equal amounts of matter and antimatter, and the two should have annihilated each other completely, leaving nothing - no stars, no planets, no people. Instead, for reasons nobody fully understands, a tiny asymmetry left a surplus of matter. We exist because of a one-in-a-billion imbalance that physics cannot currently explain.

To study that asymmetry, scientists need to compare antimatter properties to matter properties with extreme precision. CERN's Antiproton Decelerator is currently the only facility in the world that can produce and store low-energy antiprotons for study. But the facility generates significant magnetic interference - from all its other experiments - that limits measurement precision. Heinrich Heine University in Dusseldorf has been building a facility specifically designed to study antiprotons with 100 to 1,000 times greater precision than anything achievable at CERN itself.

"We are scientists. We want to understand something about the fundamental symmetries of nature, and we know that if we do these experiments outside of this accelerator facility, we can measure 100 to 1,000 times better." - Stefan Ulmer, experiment leader and spokesperson, CERN

The problem: getting antiprotons from Geneva to Dusseldorf requires an eight-hour drive, and the trap can currently hold its antiprotons on the road for only about four hours. What the Tuesday test proved is that the transport system itself works - the "transportable antiproton trap" (TRAP), a compact box using superconducting magnets cooled to -269 degrees Celsius (-452 Fahrenheit), can maintain its magnetic field through acceleration, deceleration, sharp turns, and stops. The system is designed to survive whatever road conditions a truck might encounter.

Work remains. Four hours of containment versus eight hours of driving is still a problem. The team needs to extend the TRAP's hold time, or the Dusseldorf facility needs to be equipped with a booster system to receive partially-degraded antiproton samples. Ulmer said the Dusseldorf center won't be ready to receive antiprotons until 2029 at the earliest.

But Tuesday's test removed the fundamental physical barrier. Before this week, the question was: can you even move antimatter without destroying it? Now that question is answered. The next questions are engineering: how do you extend containment time, and how do you scale to more antiprotons? The mass transported Tuesday - 92 antiprotons - is roughly that of 92 hydrogen atoms. A grain of salt, by comparison, contains on the order of 10 to the 18th power atoms. But precision matters more than quantity here. What CERN is aiming to test is whether matter and antimatter, when measured with extreme accuracy, behave identically or slightly differently. That difference, if found, would be one of the most significant discoveries in modern physics.
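For a sense of scale, the mass comparison can be checked with a few lines of arithmetic. The constants are standard rounded values for the proton and hydrogen-atom masses, and the sketch assumes an antiproton has the same mass as a proton, which is exactly the kind of symmetry these experiments are built to test. The variable names are illustrative.

```python
PROTON_MASS_KG = 1.6726e-27         # antiproton assumed equal to the proton mass
HYDROGEN_ATOM_MASS_KG = 1.6735e-27  # proton plus electron, rounded
N_ANTIPROTONS = 92                  # number transported in the road test

total_mass_kg = N_ANTIPROTONS * PROTON_MASS_KG
equivalent_hydrogen_atoms = total_mass_kg / HYDROGEN_ATOM_MASS_KG

print(f"total mass: {total_mass_kg:.3e} kg")
print(f"equivalent hydrogen atoms: {equivalent_hydrogen_atoms:.1f}")
```

The total comes out on the order of 10 to the minus 25th power kilograms, which is why the experiment is about measurement precision rather than quantity.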

That story got roughly one-tenth the coverage of Jensen Huang saying "I think we've achieved AGI." Draw your own conclusions about the current state of tech media attention.

The Convergence: One Week, One Signal

Taken individually, each story this week is interesting. Taken together, they trace a single arc: the normalization of AI at infrastructure scale.

Huang's AGI declaration matters not because it's technically precise - it isn't - but because of who said it and where. When Nvidia's CEO uses the most loaded term in technology on the world's most-watched tech podcast, the term stops being contested and starts being ambient. Board members who've never thought carefully about AI definitions now have a mental anchor. Regulators who've been slow to define "artificial general intelligence" for governance purposes now face pressure to catch up. Investors who've been cautious now have a signal from the most credible hardware-side source in the industry.

Arm naming a chip after AGI is the commercial echo of that declaration. Product names are not casual. Arm's naming conventions are deliberate. Calling something the "AGI CPU" communicates to every enterprise IT buyer: the AGI era is the context in which you should evaluate this purchase. You're not buying a server chip. You're buying infrastructure for the AGI era. That framing changes the sales conversation, the competitive benchmark, and the budget category.

Meta replacing human moderators with AI is the labor consequences made concrete. This is not a hypothetical future where AI displaces human workers. This is the present, announced by the world's largest social network, with explicit performance numbers attached. The framing is "better outcomes" - and on Meta's own metrics, the outcomes are better. But better outcomes for whom, measured how, and audited by whom, are questions that Meta's press release doesn't address and that regulators in the EU are actively trying to answer under the Digital Services Act.

The AI music fraud case is the criminal dark matter of the AI economy: what happens when the tools democratize not just creativity but exploitation. Smith's scheme was clumsy and got caught. The structural vulnerability he exposed - streaming royalties are a zero-sum pool dilutable by volume manipulation - remains in place. Every musician on Spotify is currently earning slightly less per stream because of how easy it is to flood the pool with AI-generated noise. That's a tax on human creativity imposed by machine volume, and nobody has a clean fix for it.

CERN's antimatter transport is the counterweight - a reminder that the most significant scientific questions don't generate the most coverage, and that the physical universe has its own timeline. AGI may be whatever Huang decides it means this week. But antimatter's asymmetry with matter has been unexplained since the beginning of the universe, and nobody is renaming a CPU after it.

What the AGI Branding War Actually Tells Us

There's a specific kind of inflation that happens to important technical terms when the business incentives for claiming them become sufficiently large. "Cloud" went through it. "Big data" went through it. "Machine learning" went through it. "AI" itself went through it - the term now describes everything from a spam filter to a multimodal reasoning system, which makes it useless as a technical descriptor while remaining extremely useful as a marketing term.

AGI is currently in the middle of this inflation cycle. The traditional technical definition - AI that matches or surpasses human cognitive performance across the full range of domains without specialized training - has been the most widely used reference point for decades. That bar has not been cleared. Current systems, including the best available frontier models from OpenAI, Anthropic, Google, and Meta, are exceptional at specific tasks and brittle at others. They hallucinate. They fail reasoning tests that humans pass trivially. They cannot learn from a conversation and retain that learning the next time you talk to them without explicit external memory systems.

But "current systems don't match human cognition across all domains" is not a useful marketing tagline. "We've achieved AGI" is. And once the CEO of the company that makes the chips that power all those systems uses the term unambiguously, on a major public platform, the inflation accelerates. Arm follows within 24 hours. Chip buyers in procurement meetings next week will say the phrase. Job postings will say "AGI infrastructure." Research papers will use "post-AGI" as a baseline assumption.

This is how technical precision dies - not through malice, but through the gravitational pull of commercial incentive. The word becomes the product. The product becomes the market. The market becomes the default assumption. And somewhere in a lab in Geneva, scientists are trying to answer questions about the fundamental nature of the universe, using 92 particles that weigh less than a grain of dust, on a schedule measured in years, with no branding at all.

Timeline: One Week in AI History

The five stories that defined the week of March 19-25, 2026 - each one a data point in the same larger shift. (BLACKWIRE/PRISM)

Outlook: Where This Lands

The Anthropic lawsuit against the Pentagon - still being decided as of this writing - is the legal shadow over all of this. A federal judge is deciding whether the Trump administration can designate an AI company as a supply-chain risk simply for setting limits on how its technology can be used in autonomous weapons systems. That case is, in one sense, about Anthropic. In another sense, it's about whether any AI company can set ethical limits on its own products without facing federal retaliation. The outcome will determine how much room companies have to define "AGI" - and to define limits on what AGI systems can do - without government consequences.

The week's stories converge on a single pressure point: the gap between what AI can do and what we've decided AI should do is narrowing, faster than governance is moving. Huang's AGI declaration normalizes the idea that the threshold has been crossed. Arm's chip name normalizes the commercial framing. Meta's moderation announcement normalizes AI replacing human judgment in high-stakes decisions. The music fraud case shows the criminal exploitation surface that expands as AI tools proliferate. CERN's antimatter test is a reminder that physical reality operates on its own timeline, indifferent to our product releases.

The AGI era - whatever that means - is being defined right now, mostly by the companies that have the most to gain from the definition. That's worth watching carefully.

Sources: Lex Fridman Podcast (March 24, 2026) - Jensen Huang interview; The Verge - Hayden Field reporting on Arm AGI CPU, Anthropic DoD lawsuit, Jensen Huang AGI claim; Meta Newsroom (about.fb.com, March 19, 2026) - AI moderation announcement; US Department of Justice SDNY (March 21, 2026) - Michael Smith guilty plea; AP News (March 24, 2026) - CERN antimatter road test; University of Liverpool / University of Oxford expert commentary via AP.