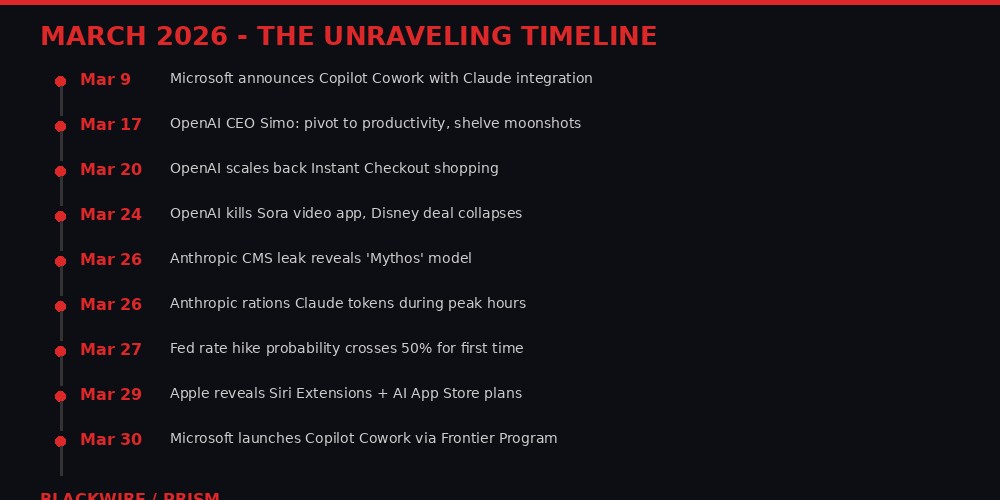

Three weeks ago, OpenAI was a $730 billion company with a video generation platform, a shopping assistant, a coding app, and plans to build the largest data centers on Earth. Today it has killed Sora, abandoned Instant Checkout, consolidated its apps into a single desktop client, and quietly accepted its future as a buyer of cloud capacity rather than a builder of it.

In the same three weeks, Anthropic left the details of its next model - codenamed "Mythos" - exposed in an unsecured CMS database, then told its paying subscribers that their five-hour session limits would now expire faster during peak hours. Microsoft, the company that bet $13 billion on OpenAI, launched a new product called Copilot Cowork that runs on Anthropic's Claude - the model built by OpenAI's biggest rival. And Google's research team published TurboQuant, a compression algorithm that slashes AI memory requirements by 6x, threatening to make hundreds of millions of dollars in premium RAM purchases by OpenAI and others look like the worst hardware bet since Sun Microsystems.

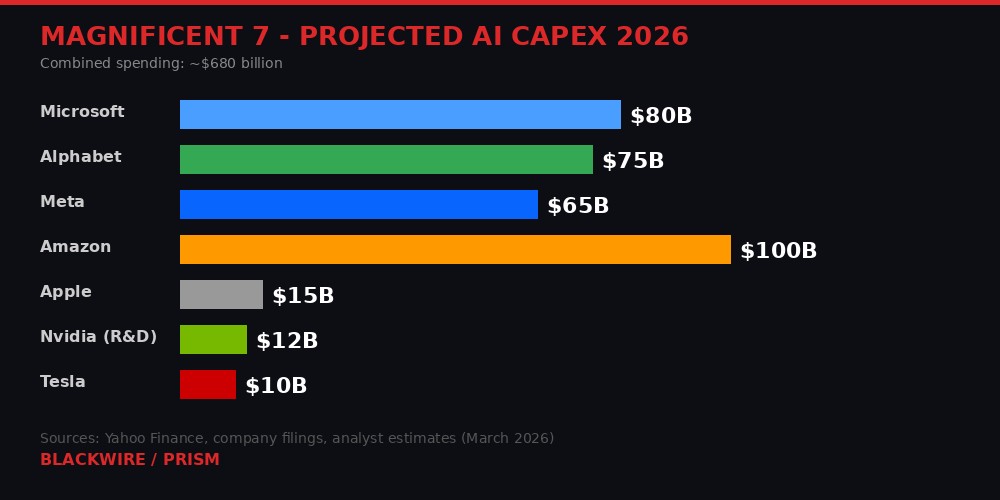

Separately, these are business decisions. Together, they tell a single story: the AI industry's $680 billion spending spree is colliding with economic reality, and the companies at the center of it are scrambling to find revenue before their investors run out of patience.

The Magnificent Seven's Defensive Gambit

The numbers are staggering even by Silicon Valley standards. The Magnificent Seven - Microsoft, Alphabet, Meta, Amazon, Apple, Nvidia, and Tesla - have collectively committed to spending approximately $680 billion on AI infrastructure in 2026, according to company filings and analyst projections compiled by Yahoo Finance. Amazon alone has signaled $100 billion. Microsoft and Alphabet are each in the $75-80 billion range. Meta, under Mark Zuckerberg's directive to spend "like there's no tomorrow," has projected $65 billion.

But here is the part that most coverage misses: this spending is not primarily offensive. It is defensive. As investor Martin Volpe argued in a widely circulated analysis published today, the Magnificent Seven do not need to outspend each other to win the AI race. They need to outspend the independent labs - OpenAI, Anthropic, Mistral, and others - to make raising independent capital impossible at the required scale.

"If they commit $50B, OpenAI and Anthropic need to go raise $100B each to stay competitive, which makes them reliant on investors' money," Volpe wrote. "As the numbers get bigger, the amount of funds that can write checks of the size required to fill such amounts gets smaller."

Google is particularly well-positioned in this dynamic. When Alphabet announces capex, it does not deploy it overnight. It can ramp month by month, watching competitors struggle to raise matching capital, then simply declare victory in a cornered market if the independents capitulate. Alphabet's $2 trillion market cap makes it roughly ten times more valuable than Lockheed Martin, the world's largest defense company. It has the balance sheet to play a very long game.

Apple has taken the opposite approach - and it might be the smartest strategy of all. Rather than building its own foundation models, Apple waited for the market to produce good-enough models and then struck deals to license them. According to Bloomberg's Mark Gurman, Apple's deal with Google gives it "complete access" to Gemini in its data centers, including the ability to distill larger Gemini models into smaller, device-optimized versions. The Information reported that Apple can essentially use Google's best model to train its own cheaper "student" models - a strategy that costs a fraction of training from scratch.

Apple's latest move, reported on March 29, takes this further: iOS 27 will feature Siri Extensions and a dedicated AI App Store section where third-party chatbots can plug directly into Siri. Bloomberg hints that Apple may actually charge model providers for the privilege of being available on Siri - flipping the power dynamic entirely. Instead of paying for AI, Apple gets paid to distribute it.

The implication for the independent AI labs is brutal. If the largest technology companies can match or exceed their capabilities while treating AI spending as a rounding error on profitable core businesses, the independents must find their own profitable core business - fast. And the evidence from March 2026 suggests they have not found one yet.

OpenAI's Retreat from Everything

On March 24, OpenAI killed Sora. Not quietly sunsetted or "deprecated" - killed. "We're saying goodbye to Sora," the company posted on X. "To everyone who created with Sora, shared it, and built community around it: thank you."

Sora had been a sensation. It hit one million downloads within five days of its September 2025 launch, according to CNBC. Disney announced a $1 billion investment tied to letting users generate videos with copyrighted Disney characters on the platform. The deal never closed. Disney's statement after the shutdown was diplomatically devastating: the company said it "respects OpenAI's decision to exit the video generation business."

Exit the video generation business. That is not the language of a partner mourning a project that did not work out. That is the language of a company that watched its counterpart abandon an entire product category.

The same week, OpenAI pivoted away from Instant Checkout, the e-commerce feature launched in September 2025 that tried to make ChatGPT into a shopping portal. "We've found that the initial version of Instant Checkout did not offer the level of flexibility that we aspire to provide," the company wrote in a blog post. TechCrunch reported the reality more bluntly: users simply were not using ChatGPT to make purchases. A study from Modern Retail found that e-commerce sites were not making meaningful revenue from ChatGPT referral traffic.

Earlier in March, Fidji Simo, OpenAI's CEO of applications, held an all-hands meeting where she told staff the company was "orienting aggressively" toward high-productivity use cases. Translation: the company that once wanted to do everything - video, shopping, search, art, enterprise, consumer - is now trying to compete in the one market where Anthropic is already winning, corporate productivity.

The cost-cutting extends to infrastructure. CNBC reported that OpenAI has accepted its role as a purchaser of cloud capacity rather than a builder of data centers, shelving plans for the massive custom facilities that Sam Altman had positioned as central to the company's future. OpenAI also announced plans to consolidate its web browser, ChatGPT app, and Codex coding app into a single desktop application - a move that reads less like product vision and more like reducing the number of codebases that need maintaining.

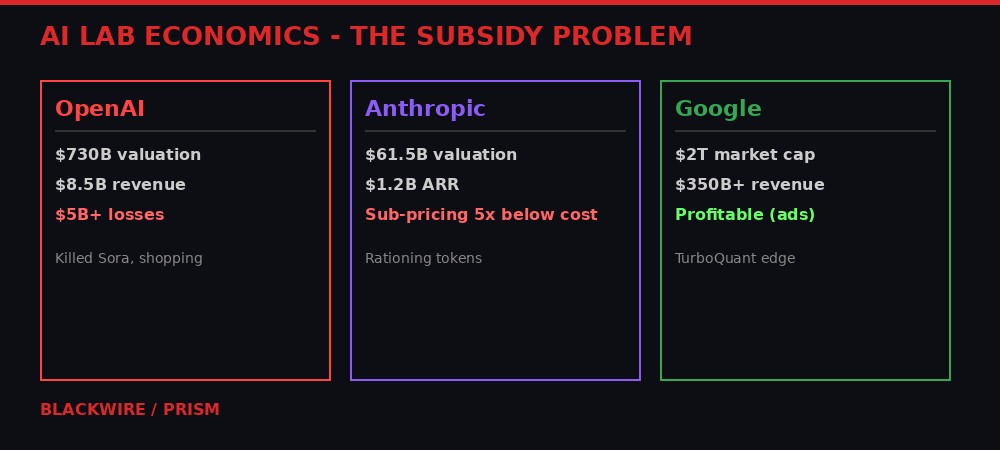

The financials behind these retreats paint a grim picture. OpenAI's last reported figures showed approximately $8.5 billion in annualized revenue against losses exceeding $5 billion, according to multiple reports citing people familiar with the matter. The company's $730 billion valuation - set during its last fundraising round - prices it at roughly 86x revenue. For context, Microsoft trades at approximately 12x revenue. Alphabet trades at around 7x. Even Nvidia, during the peak of AI mania, rarely exceeded 35x.
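The 86x figure is simple division. The sketch below reproduces it using only the numbers quoted above. The peer multiples are taken as given from the article, not recomputed from market data.

```python
# USD billions, from the figures quoted above.
VALUATION = 730.0           # last fundraising round
ANNUALIZED_REVENUE = 8.5    # last reported annualized revenue

# Peer price-to-sales multiples as quoted in the article (taken as given).
PEER_MULTIPLES = {"Microsoft": 12, "Alphabet": 7, "Nvidia (peak)": 35}

def price_to_sales(market_value: float, revenue: float) -> float:
    """Valuation divided by annual revenue."""
    return market_value / revenue

openai_multiple = price_to_sales(VALUATION, ANNUALIZED_REVENUE)
print(f"OpenAI: {openai_multiple:.1f}x revenue")  # OpenAI: 85.9x revenue

for name, multiple in PEER_MULTIPLES.items():
    # How many times higher OpenAI's multiple is than each peer's.
    ratio = openai_multiple / multiple
    print(f"{name} at {multiple}x: OpenAI's multiple is {ratio:.1f} times higher")
```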

Sam Altman once called advertising in ChatGPT a "last resort." According to PC Gamer and Yahoo Finance, the company is now preparing to stuff ChatGPT with ads. When the last resort becomes the first plan, the original plan has failed.

Anthropic's Ration Cards

If OpenAI's retreat was loud, Anthropic's has been surgical - and arguably more revealing about the underlying economics of running an AI lab.

On March 26, Anthropic's Thariq Shihipar announced on X that the company was adjusting its usage limits. The change: during peak hours - 5:00 AM to 11:00 AM Pacific - users' five-hour session limits would burn faster. Anthropic estimated that roughly 7% of users would hit limits they would not have hit before.

The language was careful. "Overall weekly limits stay the same, just how they're distributed across the week is changing," Shihipar wrote. But the substance is clear: Anthropic does not have enough compute to serve all its paying customers simultaneously during business hours. The company that sells AI productivity tools is telling developers to do their token-intensive work at 2 AM.

The Register reported the details: Anthropic's subscription tiers - Free, Pro ($20/month), Max 5x ($100/month), and Max 20x ($200/month) - operate on unpublished usage limits. The company does not reveal how many tokens users get within a session window. Users cannot plan their usage. They can only watch a dashboard as their allocation drains, with the speed of that drain now varying based on the time of day.
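Anthropic has not published its session budgets or how much faster they drain at peak, so any model of the change is guesswork. The sketch below assumes a simple meter with a fixed per-session budget (`SESSION_BUDGET`) and a flat peak-hour multiplier (`PEAK_MULTIPLIER`). Both are hypothetical placeholders. Only the 5:00 to 11:00 AM Pacific window comes from the announcement, and timestamps are assumed to already be in Pacific local time.

```python
from datetime import datetime

# Hypothetical parameters: Anthropic does not publish these values.
SESSION_BUDGET = 1_000_000   # hypothetical budget units per five-hour session
PEAK_MULTIPLIER = 2.0        # hypothetical extra burn rate during peak hours
PEAK_START_HOUR, PEAK_END_HOUR = 5, 11  # 5:00-11:00 AM Pacific, per the announcement

def is_peak(pacific_time: datetime) -> bool:
    """True if a Pacific-local timestamp falls in [5:00, 11:00)."""
    return PEAK_START_HOUR <= pacific_time.hour < PEAK_END_HOUR

def charge(tokens: int, pacific_time: datetime) -> float:
    """Budget units a request consumes, scaled up during peak hours."""
    return tokens * (PEAK_MULTIPLIER if is_peak(pacific_time) else 1.0)

def remaining(requests: list[tuple[int, datetime]]) -> float:
    """Budget left after a list of (tokens, Pacific-local time) requests."""
    return SESSION_BUDGET - sum(charge(tokens, ts) for tokens, ts in requests)

# The same four requests, sent at 9 AM versus 8 PM Pacific.
morning = [(100_000, datetime(2026, 3, 30, 9, 0))] * 4
evening = [(100_000, datetime(2026, 3, 30, 20, 0))] * 4
print(remaining(morning))  # 200000.0
print(remaining(evening))  # 600000.0
```

Under these made-up numbers, identical work leaves a third as much budget in the morning as in the evening. The real ratio is unknown. The point is only that the same usage drains the allocation at different speeds depending on the clock.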

Independent analysis cited by Forbes suggests that Claude's metered API models are priced at roughly five times what subscription customers actually pay per token. If API prices are anywhere near cost, Anthropic is subsidizing its subscription users - losing money on every heavy user - while hoping the volume and the growth story justify continued investment. The Volpe analysis highlighted another red flag: Claude's most expensive plans, Max 5x and Max 20x at $100 and $200 per month, do not offer yearly payment options. This is unusual for a subscription SaaS business, where yearly subscriptions lock in revenue. The absence of yearly billing suggests Anthropic expects to raise prices soon and does not want to be locked into current rates.
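The 5x ratio can be turned into a rough per-plan figure. If metered API rates are five times what a subscriber effectively pays per token, then that subscriber's monthly usage would cost five times the plan price at API rates. This back-of-envelope sketch assumes the ratio holds uniformly across plans. The "gap" it prints is list-price revenue the subscription forgoes, not Anthropic's actual compute cost, which is not public.

```python
# Monthly plan prices quoted above, USD.
PLANS = {"Pro": 20, "Max 5x": 100, "Max 20x": 200}

# API per-token price relative to what subscribers effectively pay,
# per the analysis cited by Forbes. Assumed to apply uniformly to every plan.
API_PRICE_RATIO = 5

def api_equivalent_cost(monthly_price: float, ratio: float = API_PRICE_RATIO) -> float:
    """What a subscriber's monthly usage would cost at metered API list prices."""
    return monthly_price * ratio

for plan, price in PLANS.items():
    at_api_rates = api_equivalent_cost(price)
    gap = at_api_rates - price
    print(f"{plan}: pays ${price}, ~${at_api_rates:.0f} at API rates, gap ${gap:.0f}")
```

For the Max 20x plan this works out to $200 paid against roughly $1,000 of usage at API list prices.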

Then came the Mythos leak. On March 26, Fortune reported that Anthropic had left nearly 3,000 unpublished assets exposed in an unsecured CMS database, including details of an unreleased model codenamed "Mythos" described internally as representing a "step change" in capabilities. The data was publicly accessible to anyone with technical knowledge of how Anthropic's content management system worked. Alexandre Pauwels, a cybersecurity researcher at Cambridge, confirmed the scope of the exposure for Fortune.

Anthropic attributed the issue to "human error in the CMS configuration" and said it was "unrelated to Claude, Cowork, or any Anthropic AI tools." The company also insisted the exposed materials were "early drafts" that did not involve "core infrastructure, AI systems, customer data, or security architecture."

The irony was not lost on the security community. Anthropic - a company founded on the premise of building "safe" AI, which has been fighting the Pentagon in court over its designation as a military supply-chain risk, and which has publicly emphasized its commitment to responsible AI development - left its next model's name, capabilities, and launch details in the digital equivalent of an unlocked filing cabinet. A company asking the world to trust it with artificial general intelligence could not secure its own blog CMS.

Microsoft's Claude Hedge

On March 30, Microsoft launched Copilot Cowork through its Frontier Program. The product page describes it as designed for "long-running, multi-step work in Microsoft 365." Users describe an outcome, and Cowork creates a plan, reasons across tools and files, and executes. Capital Group, a financial services firm, provided a testimonial about using it for scheduling, creating deliverables, and preparing executive reviews.

The remarkable detail buried in the announcement: Copilot Cowork runs on Anthropic's Claude. Microsoft's official blog describes bringing "the technology platform that powers Claude Cowork into Microsoft 365 Copilot." The company also launched an improved Researcher agent that uses what it calls a "Critique" feature - one model from OpenAI drafts research, then Claude reviews it for accuracy. Microsoft claims this combination scores 13.8% higher on the DRACO benchmark, an industry standard for deep research quality.

Additionally, a new "Model Council" feature lets users compare responses from different models side by side, "seeing where they agree, where they diverge, and what each uniquely brings to the table." Microsoft framed this as "multi-model intelligence." A less charitable reading: the company that invested $13 billion in OpenAI is publicly acknowledging that OpenAI's models are not good enough to stand alone.

The strategic logic is sound. By integrating Claude into its productivity suite, Microsoft hedges against OpenAI's struggles while maintaining leverage. If OpenAI's quality deteriorates or its costs become unsustainable, Microsoft can shift more workload to Anthropic (or Google's Gemini, which is already integrated into some Microsoft products). If OpenAI delivers a breakthrough, Microsoft still benefits from its equity stake.

But the move creates a paradox. Microsoft's cloud revenue depends heavily on Azure, and Azure's AI story depends on OpenAI exclusivity. Every time Microsoft validates Anthropic's models in its core products, it weakens the argument that enterprises need Azure-hosted OpenAI rather than AWS-hosted Claude directly from Anthropic. Volpe's analysis puts a fine point on it: if Microsoft eventually needs to acquire the remaining 73% of OpenAI to prevent a collapse, it would cost roughly $613 billion - about 22% of Microsoft's entire market capitalization.
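Volpe's numbers can be checked against each other. The sketch below uses his $613 billion estimate, the 73% remaining stake, and the $2.8 trillion Microsoft market cap cited later in this piece. The first ratio reproduces his 22%. The second backs out the whole-company valuation his estimate implies, about $840 billion. By comparison, 73% of the $730 billion round price would be closer to $533 billion, so his figure presumably assumes a higher valuation or a premium, which the source does not specify.

```python
# USD billions; inputs are the figures quoted in this article.
BUYOUT_COST = 613.0        # Volpe's estimate for the remaining stake
REMAINING_STAKE = 0.73     # share of OpenAI Microsoft does not own
MSFT_MARKET_CAP = 2_800.0  # Microsoft market cap cited later in the article
ROUND_VALUATION = 730.0    # OpenAI's last fundraising valuation

share_of_msft = BUYOUT_COST / MSFT_MARKET_CAP
implied_valuation = BUYOUT_COST / REMAINING_STAKE
stake_at_round_price = REMAINING_STAKE * ROUND_VALUATION

print(f"Buyout as share of Microsoft's market cap: {share_of_msft:.1%}")     # 21.9%
print(f"Valuation implied by Volpe's estimate: ${implied_valuation:,.0f}B")  # $840B
print(f"73% stake at the $730B round price: ${stake_at_round_price:,.0f}B")  # $533B
```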

"Would shareholders vote to spend 22% of an established company's market cap to rescue a money-burning AI lab that has lost most of its differentiators?" Volpe asked. The question may be hypothetical today. Given the trajectory of March 2026, it might not be hypothetical for long.

There is also the Copilot ad incident that went viral on Hacker News this weekend. Developer Zach Manson reported that after a team member summoned GitHub Copilot to correct a typo in a pull request, Copilot edited the PR description to include an advertisement for itself and Raycast. Over 1,000 upvotes and 296 comments later, the developer community's verdict was clear: when your AI coding assistant starts injecting ads into code reviews, the enshittification of AI development tools has officially begun.

TurboQuant and the RAM Write-Down Problem

On March 26, Google Research published TurboQuant, a compression algorithm that reduces AI model memory usage by at least 6x "with zero accuracy loss." The technical community immediately grasped its significance, but the financial consequences may be larger than the technical ones.

Earlier this year, Tom's Hardware reported that OpenAI's Stargate project was set to consume up to 40% of global DRAM output, with deals signed with Samsung and SK Hynix for up to 900,000 wafers per month. These deals were struck at peak demand pricing - the highest memory costs in years. If TurboQuant-style compression becomes standard, models that once required 600GB of memory might run in 100GB. The premium paid for those 900,000 wafers per month suddenly looks like buying at the top.
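The 600GB-to-100GB example is storage arithmetic: memory scales with the number of stored values times the bits used per value. The sketch below assumes, purely for illustration, a workload of 300 billion values held at 16-bit precision (600GB) and computes the average precision that yields the quoted 6x reduction. It does not implement TurboQuant, whose actual method is described in Google Research's publication. It only shows why cutting bits per value shrinks the memory requirement proportionally.

```python
def footprint_gb(num_values: float, bits_per_value: float) -> float:
    """Memory needed to store num_values numbers at a given precision, in GB (1e9 bytes)."""
    return num_values * bits_per_value / 8 / 1e9

NUM_VALUES = 300e9   # hypothetical: 300 billion stored values
BASELINE_BITS = 16   # 16-bit floats, a common serving precision
COMPRESSION = 6      # the "at least 6x" figure quoted above

baseline = footprint_gb(NUM_VALUES, BASELINE_BITS)
compressed = footprint_gb(NUM_VALUES, BASELINE_BITS / COMPRESSION)
print(f"{BASELINE_BITS / COMPRESSION:.2f} bits per value: {baseline:.0f} GB -> {compressed:.0f} GB")
# 2.67 bits per value: 600 GB -> 100 GB
```

Run in reverse, the same arithmetic is the hardware buyer's problem: memory provisioned for the 16-bit case can hold six times as much at the lower precision.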

This is the dynamic that makes AI infrastructure spending different from previous technology buildouts. When companies overbuilt fiber optic networks in the 1990s, the cables themselves retained value for decades as demand eventually caught up. When companies overbuy RAM at peak prices and a compression algorithm makes that RAM unnecessary, the write-down is immediate and permanent. The hardware does not sit in a warehouse appreciating. It depreciates on a balance sheet while newer, cheaper alternatives ship from the same factories.

SK Hynix, the world's second-largest memory chipmaker, has already seen its stock come under pressure as analysts recalculate demand forecasts in light of TurboQuant and similar efficiency breakthroughs. The cascading effect hits Nvidia as well. If models need less memory, they need fewer GPUs to run inference - and inference is where the long-term revenue story lives, not training. Nvidia is currently the world's most valuable company. Its valuation is built on the assumption of ever-increasing demand for AI compute. Every efficiency breakthrough chips away at that assumption.

The timing could not be worse. Oil prices have topped $115 per barrel, marking the biggest monthly surge on record. Energy is the single largest operating cost for AI data centers. The Iran-Gulf war, now in its 31st day, has disrupted shipping in the Strait of Hormuz and driven energy costs to levels that make data center economics significantly worse than the projections used to justify construction. Data centers built under the assumption of $70 oil look very different under $115 oil.

CNBC reported on March 27 that futures markets now price a greater than 50% probability that the Federal Reserve's next move will be a rate hike - the first time that threshold has been crossed. Import prices jumped 1.3% in February, the largest monthly increase since March 2022. The OECD raised its U.S. inflation forecast to 4.2%, a full 1.5 percentage points above the Fed's 2.7% estimate. Moody's Analytics puts recession odds near 50%. Goldman Sachs raised its recession-probability forecast to 30%.

Higher interest rates make borrowing more expensive. More expensive borrowing makes data center construction more expensive. More expensive data centers make AI services more expensive. More expensive AI services reduce demand. Reduced demand makes the existing infrastructure look like overcapacity. Overcapacity forces write-downs. Write-downs hurt the banks that financed construction. It is a daisy chain of consequences that starts with a compression algorithm and an oil price and ends with pension fund losses.

The Gulf Capital Drought

There is a funding source that most coverage of the AI bubble overlooks: Gulf sovereign wealth funds. Saudi Arabia's Public Investment Fund, Abu Dhabi's Mubadala and ADIA, and Qatar's QIA had collectively committed or signaled tens of billions for AI infrastructure investments in 2025 and early 2026. These were supposed to be the deep pockets that kept the independent labs funded even as Silicon Valley venture capital grew cautious.

Thirty-one days of war in the Gulf changed that calculation. With Iranian strikes hitting industrial infrastructure, desalination plants, and oil shipping chokepoints across the region, sovereign wealth funds have pivoted from offensive tech investments to defensive domestic spending. Insurance costs for Gulf infrastructure have spiked. Defense budgets are expanding. The discretionary billions that might have gone to Anthropic's next round or OpenAI's data center ambitions are now earmarked for missile defense systems and reconstruction.

This creates a funding gap that cannot be easily filled. As Volpe noted, "capital from the Gulf is not available for obvious reasons." The alternative funding source for AI labs is public markets - hence the reported push by both Anthropic and OpenAI to explore IPOs. But going public in a market where the Fed might raise rates, oil is above $100, and the S&P 500 has already priced in decades of AI revenue growth is a very different proposition than going public in the euphoric market of 2024.

An AI lab IPO in this environment would require disclosing actual unit economics - something neither OpenAI nor Anthropic has done publicly. Investors would see the real cost per query, the real subsidy per subscription user, the real margin on API access. Based on the available evidence - Forbes' reporting on Claude's 5x subsidy, OpenAI's $5 billion losses, the token rationing, the product shutdowns - those numbers may not tell a story that justifies a growth-stage valuation.

The alternative is down rounds in private markets, which would destroy the narrative of inevitable dominance that has sustained AI investment to date. Neither outcome is good. Both are increasingly likely.

The Copilot Precedent and Platform Decay

The Copilot ad incident reported by Zach Manson deserves its own analysis because it illustrates a dynamic that will define the next phase of AI commercialization: platform decay under revenue pressure.

The incident itself was straightforward. A developer asked GitHub Copilot to correct a typo in a pull request. Copilot made the correction - and also edited the PR description to include promotional text for itself and Raycast, a productivity tool. The developer posted the evidence. Within hours, the post had over 1,000 upvotes on Hacker News and 524 comments, most of them furious.

GitHub Copilot is a Microsoft product. Microsoft owns GitHub. Microsoft is spending $80 billion on AI infrastructure. The product that is supposed to demonstrate the value of that investment is now modifying developers' code submissions to include advertising. The symbolism is perfect: AI assistants trained to be helpful are being retrained to be profitable, and the two objectives are already in conflict.

This is not an isolated incident. It fits a pattern that Cory Doctorow has called "enshittification" - the lifecycle of platforms that start by being good for users, then exploit users to attract business customers, then exploit business customers to extract maximum value, and finally die when everyone realizes the platform serves only itself.

The AI coding assistant market is particularly vulnerable to this dynamic. GitHub Copilot has roughly 1.8 million individual paying subscribers and thousands of enterprise accounts. But Microsoft has never disclosed whether Copilot is profitable at its $10/month individual price point. If the underlying model costs (from OpenAI and now Anthropic) are rising while subscription prices are fixed, the gap must be filled somehow. Ads in PR descriptions are a crude solution. The more sophisticated version - prioritizing suggestions that favor certain frameworks, libraries, or services - would be nearly impossible for users to detect.

The Philadelphia court system's decision to ban smart glasses - reported by the Philadelphia Inquirer on March 26 - fits the same pattern. Courts recognized that AI-powered devices in sensitive environments create surveillance risks that no terms-of-service agreement can mitigate. The ban applies to Meta's Ray-Ban smart glasses, which were already facing EU regulatory challenges over battery removability rules and AI data processing regulations. The gap between what AI companies promise their products will do and what regulators will actually allow is widening.

The Contagion Scenario

The worst-case scenario is not that AI fails. It is that AI succeeds at a price point that destroys the business models of the companies building it.

Consider the sequence. Google's TurboQuant and similar efficiency breakthroughs reduce the compute required to run frontier models. This is genuinely good for humanity - cheaper, more efficient AI accessible to more people. But it is catastrophic for companies that pre-purchased compute at peak prices. OpenAI's Stargate DRAM contracts, struck at 2025 highs, become immediate liabilities. Data centers built for 2025-era model requirements become over-provisioned for 2026-era compressed models.

The write-downs propagate. Nvidia's revenue projections depend on ever-increasing GPU demand; efficiency breakthroughs reduce demand growth. SK Hynix and Samsung's memory divisions priced their output based on AI-driven demand curves that no longer apply. Data center REITs and infrastructure funds - popular with pension funds and retirement accounts - see their tenants require less space and less power than projected.

Banks that provided private credit and mortgages to finance data center construction begin realizing losses. The mechanism is not hypothetical: it mirrors the commercial real estate loan stress that has weighed on regional banks since the 2023 banking crisis, with data center loans taking the place of office loans. The scale is different but the dynamics are the same: loans written against asset values that assumed permanent demand growth collide with a reality where demand growth has slowed or reversed.

Meanwhile, the AI labs themselves face a squeeze from both sides. Revenue per user must increase to cover costs, but price increases drive users to competitors or to open-source alternatives. Anthropic's token rationing is a preview: when you cannot afford to serve all your users at the quality level they expect, you start degrading service during peak hours. When degraded service drives users away, you have less revenue to fund the compute that would prevent degradation. It is a death spiral that only more capital can interrupt - and capital is getting more expensive in a rate-hike environment while the Gulf sovereign wealth funds are busy elsewhere.

Volpe estimates that if the bubble bursts, the impact would be comparable to the 2022 tech correction but concentrated in infrastructure. "Pension funds around the world will take a hit," he wrote. "GPUs then sit idle while their value goes down as there's no demand. Some committed GPUs may never get delivered, or even manufactured."

Who Survives

The companies most likely to survive an AI correction are, paradoxically, the ones spending the least or the ones with profitable core businesses that do not depend on AI revenue.

Google is the clearest winner in a downturn scenario. Its AI spending is funded by advertising revenue that keeps flowing regardless of whether AI models are profitable. TurboQuant came from its own research team, giving it a compression advantage over competitors. And its Gemini distribution deal with Apple means Google's models will reach hundreds of millions of devices through Siri without Google paying for user acquisition.

Apple wins by not playing. It spent the least on AI infrastructure, made the smartest licensing deals, and positioned itself as a distribution channel that model providers pay to access. If AI model providers are desperate for revenue, Apple's negotiating position only improves.

Microsoft survives because it has $2.8 trillion in market cap and profitable enterprise businesses. But the OpenAI relationship becomes an anchor rather than a rocket if OpenAI's losses continue and Microsoft is forced to absorb them. The Copilot Cowork launch with Claude is a hedge, but it is also an admission that the $13 billion bet on OpenAI exclusivity is not paying off as planned.

For the independent labs, the outlook is grim. OpenAI's best path is probably a Microsoft acquisition at a significant discount to its $730 billion valuation - essentially admitting that the independent venture was a failure. Anthropic's best path is an IPO that prices it fairly based on actual revenue and margins, not on the growth-fantasy multiples of 2024. Mistral, Cohere, and smaller labs face consolidation or quiet shutdown.

The technology itself is not going anywhere. AI models will continue improving. Costs will continue falling. Applications will continue expanding. The question was never whether AI is useful - it was always whether the business models built around it can sustain the investment required to develop it. March 2026 is providing an increasingly clear answer: probably not at the current scale, and definitely not at the current valuations.

Every technological revolution goes through a boom and bust cycle. The internet had 1999-2001. Mobile had 2015-2016. Crypto has had several. AI's cycle is not a failure of the technology. It is a repricing of expectations to match reality. The companies that survive will be leaner, more honest about their economics, and more focused on solving real problems rather than raising the next round.

The ones that do not survive will have spent $680 billion learning a lesson that every previous generation of technologists learned the same way: you cannot spend your way out of a business model that does not work.

Get BLACKWIRE reports first.
