The $8 Million AI Music Heist: How One Man Built 700,000 Fake Songs and Robbed Spotify Blind

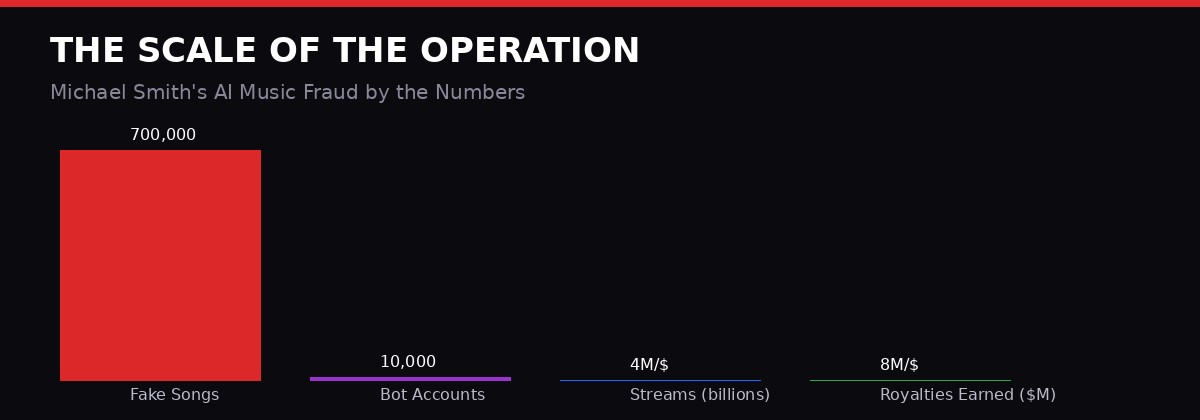

A North Carolina man just pleaded guilty to the largest streaming fraud scheme ever prosecuted - 700,000 AI-generated tracks, billions of fake plays, and $8 million in royalties stolen from the collective pot that every working musician depends on. It is not a story about one bad actor. It is a preview of what happens when AI meets an unguarded payment system.

The Guilty Plea That Exposed a Broken System

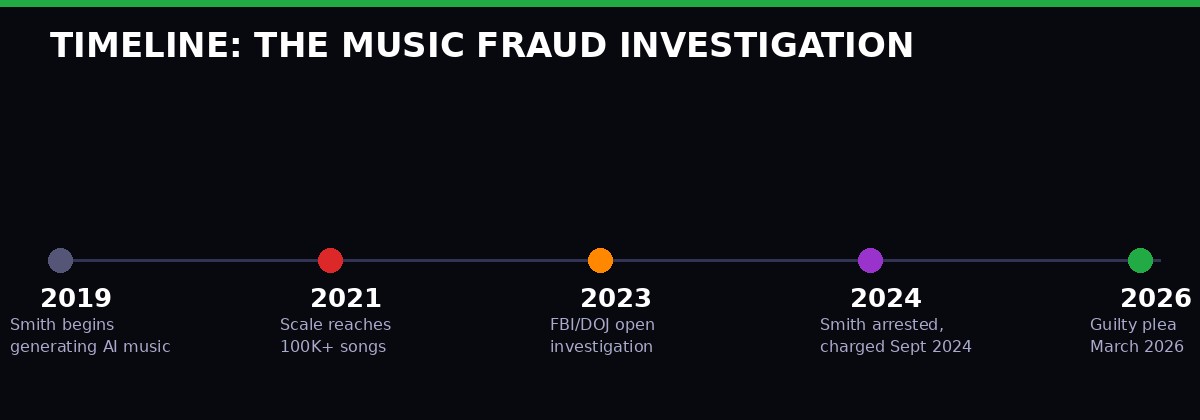

Michael Smith, 52, of Cornelius, North Carolina, pleaded guilty in federal court last week to wire fraud, money laundering, and computer fraud in connection with an AI-powered scheme that ran for at least four years. [The Verge, March 23, 2026; AP News, March 2026]

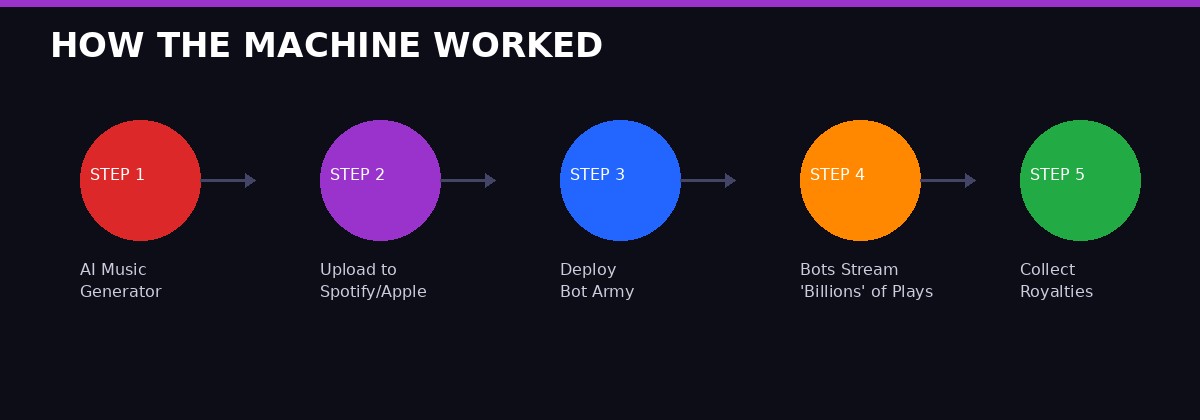

The operation was neither subtle nor complex. Smith used commercial AI music generation tools - the kind widely available online since at least 2019 - to produce hundreds of thousands of songs. Not albums. Not artists. Songs. Generic ambient tracks, low-effort electronic instrumentals, loops masquerading as compositions. The kind of content that populates the grey zones of streaming libraries where nobody is really listening - except bots.

And that was the point.

Smith uploaded these synthetic tracks to Spotify, Apple Music, and Amazon Music under a rotating cast of fake artist names. He then deployed thousands of automated bot accounts to stream those tracks. Continuously. Around the clock. Billions of plays.

The royalty payments arrived via legitimate distribution channels - DistroKid and similar services that aggregate payments from the major platforms. Over time, those payments totaled more than $8 million, according to the Department of Justice.

"Smith fraudulently obtained more than $8 million in royalty payments... by manipulating the number of streams a song received, artificially inflating streaming data with automated bots." - DOJ Southern District of New York, March 2026

The penalties are steep: wire fraud and money laundering each carry a maximum sentence of 20 years, and the plea also covers Computer Fraud and Abuse Act charges. Sentencing has not yet been scheduled.

How the Machine Worked - Step by Step

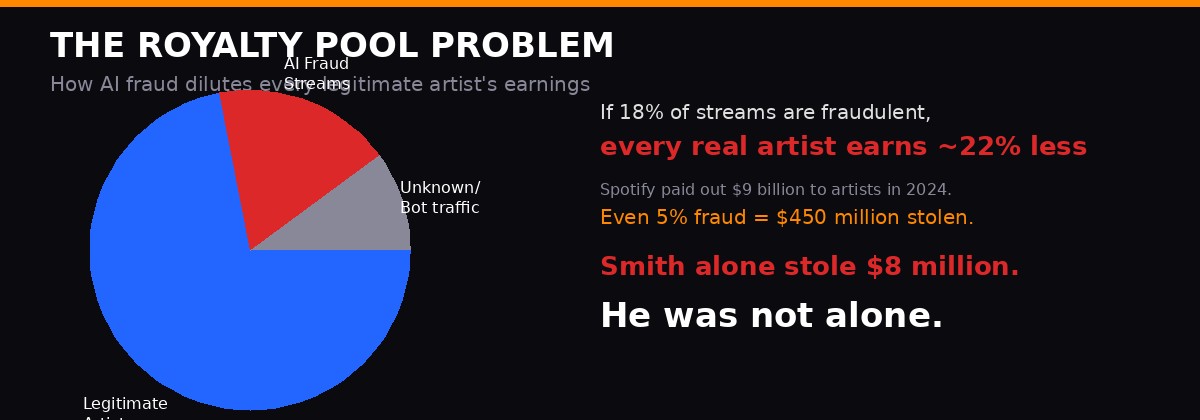

Understanding why this worked requires understanding how streaming royalties actually get paid. The model most platforms use is called a "pro rata" system: every month, a platform collects all subscription revenue and advertising revenue into a giant pool, calculates how many total streams happened that month, and divides the pool by total streams to get a per-stream rate. Individual artists then get paid based on their share of total streams. [Spotify Loud & Clear report, 2024]
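The pro rata arithmetic is simple enough to sketch in a few lines. The Python below is a minimal illustration of the model as described above, not any platform's actual payout code: the function name and the $1,000 pool are hypothetical, and it ignores the platform's own cut and the splits between labels, publishers, and distributors.

```python
def pro_rata_payouts(pool: float, streams_by_artist: dict[str, int]) -> dict[str, float]:
    """Split one month's revenue pool in proportion to each artist's stream count."""
    total_streams = sum(streams_by_artist.values())
    per_stream_rate = pool / total_streams  # the pool divided by every stream that month
    return {artist: n * per_stream_rate for artist, n in streams_by_artist.items()}


# Hypothetical month: a $1,000 pool and 1,000 total streams, so $1.00 per stream.
print(pro_rata_payouts(1_000.0, {"artist_a": 600, "artist_b": 300, "artist_c": 100}))
# {'artist_a': 600.0, 'artist_b': 300.0, 'artist_c': 100.0}
```

Because every payout depends on the shared denominator, any stream added to the pool changes what everyone else receives.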

The fraud was structural. Smith was not stealing money directly from Spotify's vault. His fake streams inflated both the denominator (total streams) and the numerator (the streams credited to his fake artists), claiming a slice of a pool that other artists had a legitimate right to. Every dollar that went to a fake AI-generated track was a dollar that did not go to a real musician.

The per-stream rates that platforms pay are remarkably low - typically between $0.003 and $0.005 per play on Spotify, and up to $0.01 on Apple Music. To accumulate $8 million at those rates requires an enormous number of streams. The DOJ says the bots generated "billions" of plays. At $0.004 average, $8 million requires roughly 2 billion streams - an output that would rank a real artist among the most-streamed in the world in any given year.
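The stream-count estimate is a single division. The sketch below reruns it in Python across the rate range quoted above. It assumes a flat per-stream rate, which real payouts are not, so treat the results as order-of-magnitude figures: roughly 1.6 to 2.7 billion streams.

```python
def streams_needed(target_payout: float, per_stream_rate: float) -> float:
    """Streams required to collect target_payout at a flat per-stream rate."""
    return target_payout / per_stream_rate


# The low, average, and high Spotify-style rates quoted in the text.
for rate in (0.003, 0.004, 0.005):
    print(f"${rate} per stream -> {streams_needed(8_000_000, rate):,.0f} streams")
```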

The technical execution required bot accounts that could evade platform detection. Streaming platforms have invested heavily in bot-detection infrastructure - machine learning models trained to identify inhuman playback patterns: accounts that never skip, that stream the same track repeatedly, that never change volume, that cluster in specific geographic locations. Smith reportedly used IP rotation, varied playback behavior, and spread the streaming across thousands of fake listener accounts to blend into legitimate traffic. [404 Media background reporting on streaming fraud mechanisms]
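To show why varied behavior defeats simple rules, here is a toy rule-based scorer built from the per-account signals listed above. It is a hypothetical sketch, not how any platform actually works: real systems use learned models on features that are not public. The `AccountStats` fields, the 500-play minimum, and the 1% repeat threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class AccountStats:
    # Hypothetical per-account aggregates; real platform features are not public.
    plays: int
    skips: int
    distinct_tracks: int
    volume_changes: int


def suspicion_score(s: AccountStats, min_plays: int = 500) -> int:
    """Count how many inhuman-playback signals an account shows (0 to 3)."""
    if s.plays < min_plays:
        return 0  # too little activity to judge
    score = 0
    if s.skips == 0:
        score += 1  # never skips
    if s.distinct_tracks / s.plays < 0.01:
        score += 1  # replays the same small set of tracks
    if s.volume_changes == 0:
        score += 1  # never touches the volume
    return score


naive_bot = AccountStats(plays=5_000, skips=0, distinct_tracks=10, volume_changes=0)
careful_bot = AccountStats(plays=5_000, skips=400, distinct_tracks=3_000, volume_changes=25)
print(suspicion_score(naive_bot), suspicion_score(careful_bot))  # 3 0
```

A bot that randomizes skips and volume and rotates through a large catalog scores zero on every rule, which is why per-account heuristics alone are easy to evade.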

The scale of the operation - 700,000 songs - also served a camouflage function. With that many tracks, each individual song only needed modest stream counts. Spreading roughly 2 billion streams across 700,000 tracks works out to fewer than 3,000 plays per track, each carrying about 0.00014% of the total bot traffic. Spread thin enough, the signals disappear into statistical noise.
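The per-track arithmetic can be checked directly. This minimal Python sketch assumes streams were spread evenly, which is a simplification, and takes the roughly 2 billion total from the estimate earlier in this section.

```python
def spread_per_track(total_streams: int, num_tracks: int) -> tuple[float, float]:
    """Average streams per track, and each track's share of total traffic in percent."""
    per_track = total_streams / num_tracks
    share_pct = per_track / total_streams * 100
    return per_track, share_pct


per_track, share = spread_per_track(2_000_000_000, 700_000)
print(f"{per_track:,.0f} streams per track, {share:.5f}% of traffic each")
# 2,857 streams per track, 0.00014% of traffic each
```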

A Timeline of Systematic Escalation

Smith's scheme did not begin at full scale. Court documents and investigative reporting suggest an escalation pattern that is common to fraud operations: start small, test what detection thresholds will tolerate, then scale into the gaps. [DOJ SDNY charging documents, 2024]

By 2019, AI music generation tools were becoming commercially accessible. Platforms like AIVA, Amper Music, and later Mubert and Suno had democratized the ability to produce functional, technically competent audio without musical training. The outputs were not Grammy material. But they did not need to be - they just needed to be long enough to register as a stream (typically 30 seconds on Spotify) and passable enough to survive a cursory content review.
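The 30-second rule is what makes short, cheap filler economically useful. The sketch below counts which plays would register as streams. It assumes a play qualifies at 30 seconds or more; the exact boundary handling, and the function and constant names, are illustrative assumptions.

```python
STREAM_THRESHOLD_S = 30.0  # assumed: a play counts once it reaches 30 seconds


def count_streams(play_durations_s: list[float], threshold_s: float = STREAM_THRESHOLD_S) -> int:
    """Count plays that last long enough to register as royalty-bearing streams."""
    return sum(1 for d in play_durations_s if d >= threshold_s)


print(count_streams([12.0, 29.9, 30.0, 95.5, 240.0]))  # 3
```

Nothing past the threshold earns more, so a track only has to survive 30 seconds of playback to be worth the same as a finished composition.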

By 2021, estimates suggest Smith's catalog had reached six figures. By the time investigators had assembled a case, the operation was generating AI tracks faster than many mid-size record labels produce real music.

The DOJ indictment was first filed in September 2024. It named Smith and described a co-conspirator who helped develop and operate the technical infrastructure. Smith's guilty plea in March 2026 resolved his portion of the case. Sentencing will determine his individual punishment, but no single conviction can address the systemic questions his case raises.

The Royalty Pool as a Crime Scene

The most uncomfortable implication of the Smith case is that the streaming royalty pool itself functioned as a commons that was structurally vulnerable to exploitation. In 2024, Spotify reported it paid out more than $9 billion to rights holders globally. Apple Music, Amazon Music, and Tidal collectively account for additional billions. These are not small stakes.

The pro rata model was not designed with adversarial actors in mind. It was designed to distribute money fairly across a competitive but basically honest marketplace. Music streaming fraud changes that assumption. Once even a small percentage of streams in the pool are fake, the effective per-stream rate for real artists drops - they are sharing the pool with ghosts.
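A small worked example makes the dilution concrete. The Python sketch below applies the simple pro rata model described above with a hypothetical 10% fake share. Every legitimate artist's payout is scaled by the same factor, the real share of total streams, so a 10% fake share is a 10% pay cut for everyone.

```python
def payout(pool: float, artist_streams: int, total_real_streams: int, fake_streams: int = 0) -> float:
    """Pro rata payout for one legitimate artist when fake streams share the pool."""
    return pool * artist_streams / (total_real_streams + fake_streams)


# Hypothetical month: $1M pool, 900,000 real streams, one artist with 9,000 of them.
clean = payout(1_000_000, 9_000, 900_000)
diluted = payout(1_000_000, 9_000, 900_000, fake_streams=100_000)  # fakes = 10% of all streams
print(clean, diluted, f"{1 - diluted / clean:.0%}")  # 10000.0 9000.0 10%
```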

Music industry analysts have been tracking bot fraud for years. A 2021 report by Beatdapp, a streaming analytics firm, estimated that up to 10% of streams across major platforms could be fraudulent. A 2023 MusicWatch survey found that fraud detection remained inconsistent across distributors and platforms. The tools to fight this problem exist - but they have not been deployed comprehensively, partly because platforms had commercial incentives to report high stream counts and partly because aggressive fraud detection risked catching legitimate listeners in the net. [Beatdapp Fraud Report 2021; MusicWatch 2023]

Smaller artists - those earning in the range of $10,000 to $50,000 annually from streaming - feel the damage most acutely. Under a pure pro rata model every legitimate artist loses the same percentage, but for an independent musician whose entire streaming income comes from a dedicated but modest fanbase, that loss is not an abstract problem. It is a direct pay cut they did not consent to and cannot see.

The Industry's Response - and Its Gaps

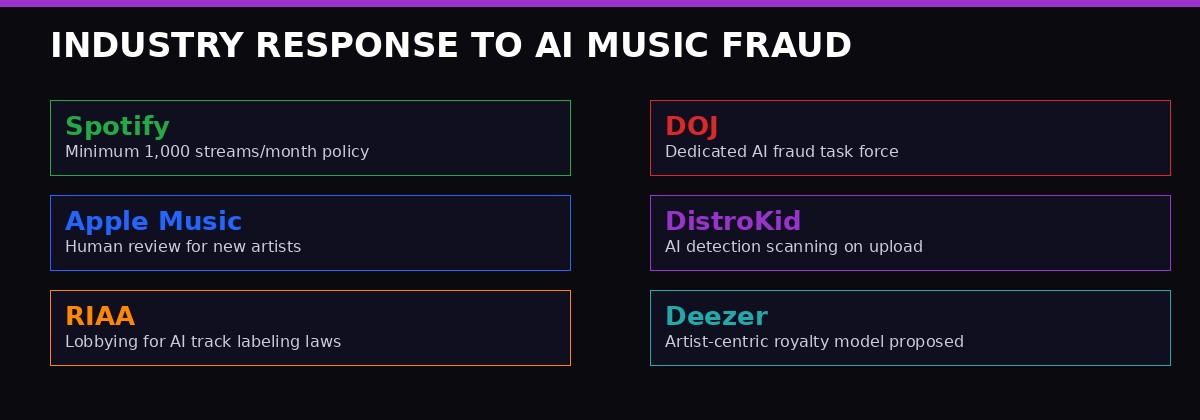

Spotify made news when it announced in November 2023, and implemented in early 2024, a minimum streams threshold for royalty payments - requiring at least 1,000 streams per year before a track becomes eligible for payouts. The policy was framed as clearing out "noise" from the catalog and streamlining payments to serious artists. Critics noted that it also redirected money away from the long tail of obscure tracks, many of which belong to real artists who simply have not built an audience yet. [Spotify policy announcement, November 2023]
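To see why the threshold is a "blunt instrument", here is a minimal sketch of an eligibility filter followed by a pro rata split among the eligible tracks. It is an illustration under simplifying assumptions, not Spotify's implementation. The function name and track names are hypothetical, and it uses the same annual counts for both eligibility and payout, while real payouts are calculated monthly.

```python
MIN_ANNUAL_STREAMS = 1_000


def eligible_payouts(pool: float, annual_streams: dict[str, int],
                     min_streams: int = MIN_ANNUAL_STREAMS) -> dict[str, float]:
    """Drop tracks below the threshold, then split the pool pro rata among the rest."""
    eligible = {t: n for t, n in annual_streams.items() if n >= min_streams}
    total = sum(eligible.values())
    if total == 0:
        return {}  # nothing qualifies this period
    return {t: pool * n / total for t, n in eligible.items()}


tracks = {"hit": 40_000, "midsize": 9_000, "indie_demo": 600, "bot_track": 400}
print(eligible_payouts(49_000.0, tracks))  # {'hit': 40000.0, 'midsize': 9000.0}
```

The filter cannot tell the real `indie_demo` from the `bot_track`. Both fall under the line, and their share of the pool is redistributed to the tracks above it.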

"The minimum streams threshold helps reduce fraud, but it also harms legitimate emerging artists who have small but real audiences. It is a blunt instrument for a surgical problem." - Music industry analyst commentary, 2024

Apple Music has moved toward more manual review for new artists appearing on its platform. Amazon Music has reportedly deployed additional behavioral analysis for streaming patterns. DistroKid, which was named in the Smith case as a conduit for the fraudulent payouts, has since implemented AI-powered scanning at the upload stage to flag suspicious catalog patterns - bulk uploads from single accounts, identical metadata structures, audio fingerprinting for AI-generated content. [DistroKid blog post, 2025]
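The upload-stage signals described here, bulk uploads from one account and repeated metadata structures, can be sketched as simple rules. The Python below is a hypothetical illustration, not DistroKid's system. The `Upload` record, the "template" key, and all thresholds are assumptions, and audio fingerprinting is omitted entirely.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Upload:
    # Hypothetical upload record; real distributor schemas are not public.
    account_id: str
    title: str
    genre: str
    duration_s: int


def metadata_template(u: Upload) -> tuple[str, int, int]:
    """Crude 'structure' key: genre, duration in 10-second buckets, title word count."""
    return (u.genre, u.duration_s // 10, len(u.title.split()))


def flag_accounts(day_uploads: list[Upload], max_uploads: int = 50,
                  min_batch: int = 10, max_template_share: float = 0.8) -> set[str]:
    """Flag accounts whose daily uploads look bulk-generated (all thresholds hypothetical)."""
    by_account: dict[str, list[Upload]] = defaultdict(list)
    for u in day_uploads:
        by_account[u.account_id].append(u)

    flagged: set[str] = set()
    for account, items in by_account.items():
        if len(items) > max_uploads:
            flagged.add(account)  # bulk upload from a single account
            continue
        if len(items) >= min_batch:
            _, top_count = Counter(metadata_template(u) for u in items).most_common(1)[0]
            if top_count / len(items) >= max_template_share:
                flagged.add(account)  # most uploads share one metadata structure
    return flagged


bulk = [Upload("acct_bulk", f"Ambient Drift {i}", "ambient", 125) for i in range(200)]
templated = [Upload("acct_tpl", f"Calm Loop {i}", "ambient", 95) for i in range(20)]
band = [
    Upload("acct_band", "Northern Lights", "indie", 214),
    Upload("acct_band", "Last Train Home", "indie", 187),
    Upload("acct_band", "Paper Walls", "rock", 243),
]
print(sorted(flag_accounts(bulk + templated + band)))  # ['acct_bulk', 'acct_tpl']
```

Rules like these are cheap to run at upload time, but a determined operator can defeat them by spreading catalogs across many accounts and randomizing metadata.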

The Recording Industry Association of America (RIAA) has been lobbying Congress for legislation requiring AI-generated content to be clearly labeled at the point of upload. The proposal - embedded in broader conversations about AI transparency law - would create a verifiable distinction between human-created and AI-generated music in platform databases. That distinction would allow royalty systems to apply different rules to each category, or to flag AI content for enhanced bot-detection scrutiny.

None of these responses are comprehensive. They are adaptations to a threat that the original system was never designed to face. The structural question - whether the pro rata model can survive in an era where AI can generate music faster than humans can consume it - has not been answered. It has been deferred.

The Charges Smith Faces - and What Conviction Signals

Smith pleaded guilty to wire fraud, which carries a maximum sentence of 20 years per count, as well as money laundering, which carries an equivalent maximum, and Computer Fraud and Abuse Act violations. The CFAA charges relate to the bot infrastructure, on the theory that using automated tools to manipulate platform systems in ways that violate their terms of service amounts to unauthorized computer access under federal law. [DOJ SDNY press release, March 2026]

Federal prosecutors in the Southern District of New York - the same office that handles major financial fraud and organized crime - made a deliberate choice to pursue this case aggressively. SDNY does not take cases it does not intend to win. The decision signals that federal law enforcement views AI-enabled streaming fraud as serious economic crime, not a niche intellectual property dispute.

The restitution component will be complex. Identifying which artists lost income to Smith's operation requires reconstructing what the royalty pool would have looked like without his fraudulent streams. That calculation is technically feasible but computationally intensive, and it is the kind of forensic accounting work that will likely produce a number far smaller than the actual harm inflicted - because the harm was diffused across millions of legitimate artists in amounts too small to individually quantify.
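The counterfactual calculation itself is straightforward for a single month under the simple pro rata model. The hard part is the data. This Python sketch uses hypothetical figures and compares each real artist's payout with and without the fake streams. The shortfalls sum exactly to what the fake streams collected, yet the smallest artist's share comes to under a dollar.

```python
def restitution(pool: float, real_streams: dict[str, int], fake_streams: int) -> dict[str, float]:
    """Per-artist shortfall: counterfactual payout (no fake streams) minus actual payout.

    pool/real_total - pool/(real_total + fake) simplifies to
    pool * fake / (real_total * (real_total + fake)), which avoids subtracting
    two nearly equal per-stream rates.
    """
    real_total = sum(real_streams.values())
    loss_per_stream = pool * fake_streams / (real_total * (real_total + fake_streams))
    return {artist: n * loss_per_stream for artist, n in real_streams.items()}


# Hypothetical month: $1M pool, 1M real streams, fake streams about 0.1% of the total.
losses = restitution(1_000_000.0, {"a": 600_000, "b": 300_000, "c": 99_000, "small": 1_000}, 1_000)
print({artist: round(v, 2) for artist, v in losses.items()})
print(round(sum(losses.values()), 2))  # equals what the fake streams collected
```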

Smith is not facing charges for the damage he did to the music ecosystem. He is facing charges for wire fraud and money laundering - for taking money he was not entitled to take, through deception, and then moving it to conceal its origins. The law has tools for that. It does not yet have tools for "you diluted the income of seven million working musicians by a fraction of a percent per year for four years." That harm is real. But no current federal statute treats it as a crime in its own right.

The Second-Order Threat: What Comes After Smith

Michael Smith was not the first person to run an AI music streaming fraud operation. He is the first to face federal prosecution at this scale. But every investigative account of his operation describes tools and techniques that were widely available, not proprietary to Smith. His competitive advantage was operational persistence and willingness to scale - not unique technical skill.

The likely implication is that dozens or hundreds of smaller versions of this scheme are running right now. They are too small to attract DOJ attention. They are too distributed to be easily detected by platform fraud systems. They are siphoning royalty revenue from the commons at rates that individually look like statistical noise but collectively constitute systematic theft.

The deeper problem is the convergence of two trends. AI music generation is getting better, not worse. The gap between AI-generated audio and human-created music is narrowing in every measurable dimension: tonal range, rhythmic complexity, dynamic variation, harmonic sophistication. The tools that existed when Smith started in 2019 were crude relative to what Suno and Udio produce today - which is already sophisticated enough to fool casual listeners. In three years, the tools will be better still. [Suno AI capability reports, 2025; Udio developer documentation]

Meanwhile, the royalty detection infrastructure at major platforms has not scaled commensurately. Spotify employs sophisticated machine learning for recommendation systems and playlist curation - complex models trained on billions of listening interactions. Its anti-fraud infrastructure is less glamorous, less publicly discussed, and less comprehensively resourced. The economic incentives are asymmetric: the fraud-detection system has to catch everything, every time. The fraudster only has to avoid detection often enough to collect payments.

The music industry has been here before. In the early days of digital distribution, piracy threatened to destroy the revenue model entirely. The industry's response - which took roughly fifteen years and required the cooperation of technology companies, governments, and consumers - did not eliminate piracy. It made piracy inconvenient enough that most people chose the legitimate alternative. AI streaming fraud requires a similar recalibration: not a perfect solution, but a comprehensive enough response that the economics of fraud become less favorable than the economics of legitimate participation.

That recalibration has not happened. The Smith prosecution is a prosecution, not a solution. The royalty pool remains a commons. The AI music generators remain accessible. The bot deployment tools remain available. The second-order effect of this case - beyond its immediate deterrent value - is that it forces a public conversation about whether the current streaming royalty architecture is compatible with a world where AI can produce infinite music at near-zero marginal cost.

That conversation is overdue. Smith's guilty plea just made it impossible to ignore.

The Legal Architecture - and Its Blind Spots

Federal prosecutors charged Smith under statutes designed for financial fraud, not music. Wire fraud (18 U.S.C. § 1343) requires proof of a scheme to defraud using electronic communications. Money laundering (18 U.S.C. § 1956) requires proof that proceeds of specified unlawful activity were processed through financial transactions to conceal their origin. The Computer Fraud and Abuse Act (18 U.S.C. § 1030) covers unauthorized access to computer systems - in this case, using bots to manipulate streaming platforms in ways their terms of service explicitly prohibit.

These are the right charges for what Smith did. But they address the fraudulent extraction of money, not the underlying mechanism that made the extraction possible. The music industry's civil remedies are limited as well. Platforms can sue for breach of contract when fraudsters violate terms of service. Distributors can terminate accounts and attempt to recover payments. But civil litigation is expensive, slow, and most practical only against defendants with recoverable assets.

There is currently no federal statute that specifically addresses AI-generated streaming fraud as a distinct category of crime. The FBI's Internet Crime Complaint Center (IC3) tracks streaming fraud under broader categories of wire fraud and intellectual property crime, but does not break it out as a separate reporting category. That means the scale of the problem nationally is genuinely unknown - we have individual case data, not systemic data. [FBI IC3 Annual Report 2025]

Some legal scholars have argued for a novel theory of liability: that large-scale streaming fraud constitutes a form of commodities manipulation. The royalty pool functions like a financial instrument - every stream is effectively a fractional claim on a shared pool of money. Artificially inflating stream counts manipulates the price of that instrument - the per-stream rate - in ways that disadvantage every legitimate rights holder. That theory has not been tested in court. But the financial forensics required to prove it are not unlike the analysis used in securities fraud cases.

The European Union's AI Act, which began phased enforcement in 2025, includes provisions for transparency and disclosure of AI-generated content. Those provisions do not specifically address streaming fraud, but they create a legal architecture that could support regulatory action against platforms that knowingly host undisclosed AI-generated content at scale. The EU's approach may offer a template for legislation in the United States, where the AI governance debate remains significantly less structured. [EU AI Act enforcement timeline, 2025]

What the Smith case clarifies for the legal community is that the tools to prosecute this crime exist, even if they are imperfect fits. SDNY prosecutors did not need new legislation to build a case. They needed evidence, time, and the willingness to treat a music streaming scheme with the same seriousness they would apply to bank fraud. That willingness is the important precedent here - not the specific charges, but the institutional decision to treat AI-enabled creative industry fraud as a serious federal crime rather than a civil matter between private parties.

Future cases will test how far that willingness extends. If smaller operators running versions of Smith's scheme at one-tenth the scale can be prosecuted - and if the deterrent effect of those prosecutions is real - the economics of streaming fraud begin to shift. The risk-adjusted return on running a bot farm drops. The rational calculation for someone with the technical skills to replicate Smith's operation changes. That is how systemic fraud gets suppressed: not by perfect detection, but by making the expected punishment severe enough relative to the expected return that only the most reckless operators continue.
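The deterrence argument can be written as a simple expected-value calculation. The sketch below is a deliberately crude model. The $800,000 gain (one-tenth of Smith's take), the $5 million penalty standing in for fines, restitution, and prison, and the detection probabilities are all hypothetical. Raising the probability of getting caught flips the expected return from positive to negative.

```python
def expected_return(gain: float, p_caught: float, penalty: float) -> float:
    """Expected value of a fraud: keep the gain if undetected, pay the penalty if caught.

    Simplifying assumption: getting caught means forfeiting the gain and paying
    `penalty`, a single dollar figure standing in for fines, restitution, and prison.
    """
    return (1 - p_caught) * gain - p_caught * penalty


def break_even_probability(gain: float, penalty: float) -> float:
    """Detection probability at which the expected return drops to zero."""
    return gain / (gain + penalty)


# Hypothetical operator at one-tenth of Smith's scale.
gain, penalty = 800_000.0, 5_000_000.0
for p in (0.02, 0.2):
    print(f"p_caught={p}: expected return ${expected_return(gain, p, penalty):,.0f}")
print(f"break-even detection probability: {break_even_probability(gain, penalty):.1%}")
```

In this model, detection does not need to be perfect. It only needs to exceed the break-even probability, which falls as penalties rise.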

Get BLACKWIRE reports first.
