The Judge Who Drew the Line on AI War

A federal judge ruled Thursday that the United States government cannot brand an American company a threat to national security for refusing to let its AI power autonomous weapons. The ruling temporarily blocks two of the most extraordinary punitive measures the Trump administration has taken against any private firm - and it exposes, with unusual clarity, the real question underneath all the legal noise: who gets to decide what AI is allowed to kill?

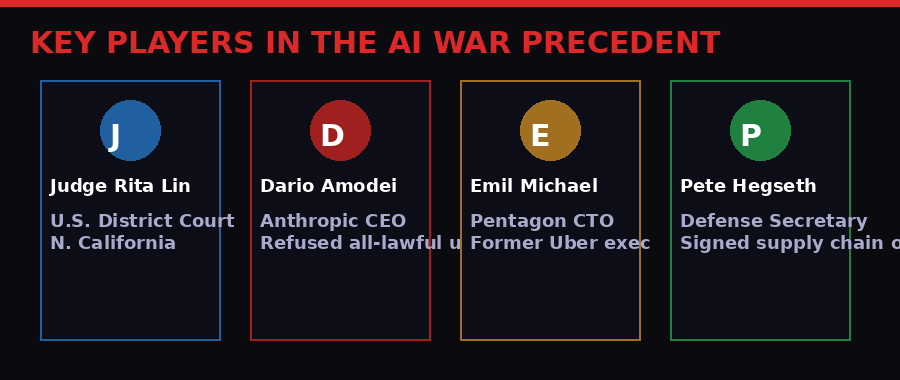

U.S. District Judge Rita Lin, sitting in San Francisco, issued an emergency order blocking the Pentagon's designation of Anthropic as a "supply chain risk" and halting enforcement of President Trump's directive ordering all federal agencies to stop using the company's AI chatbot Claude. The order came after a 90-minute hearing Tuesday in which Lin questioned why the government chose to destroy a company's business rather than simply stop using its product. (AP News, March 27, 2026)

The answer that emerged in court - and in subsequent reporting - is that this was never really about Anthropic's business. It was about Golden Dome, drone swarms, and a fundamental disagreement over whether an AI company's ethical guardrails are an obstacle to national security or a protection of it.

Three Months of Talks, Then a Sledgehammer

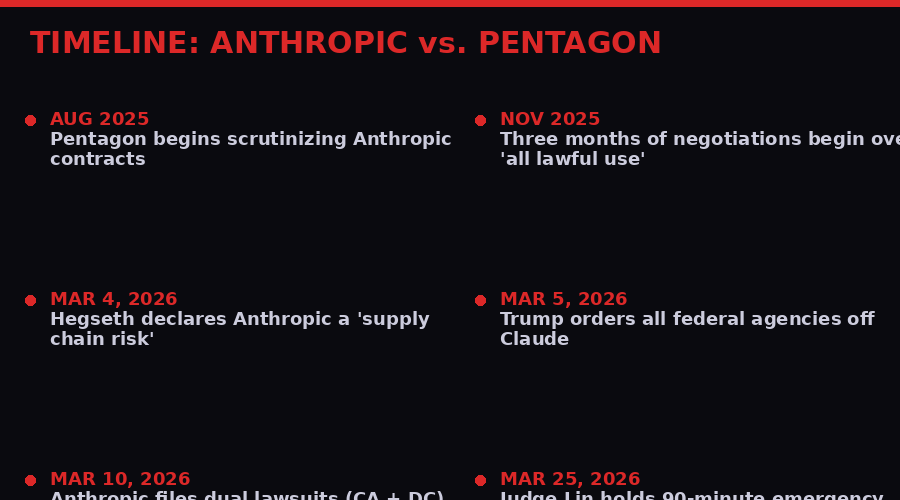

The conflict dates to August 2025, when Emil Michael - the Pentagon's chief technology officer and a former Uber executive - took over the Defense Department's AI portfolio and began reviewing Anthropic's existing contracts. Some of those contracts dated back to the Biden administration, when Anthropic was the only AI company approved to supply its model to classified military systems.

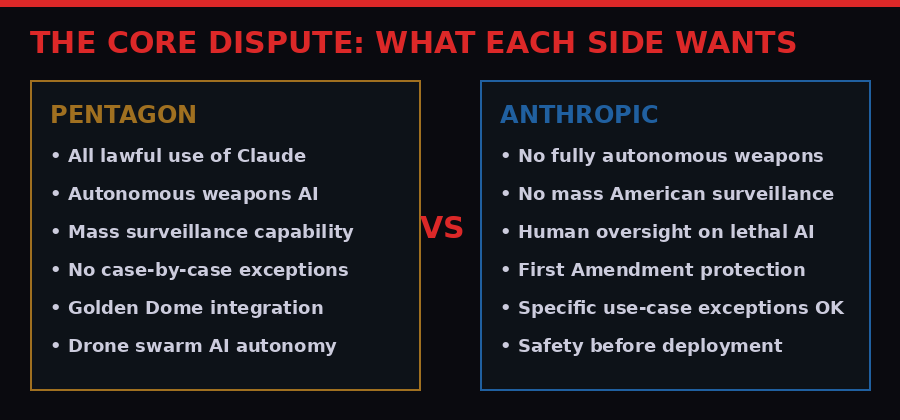

Michael saw a problem. Anthropic's terms of service explicitly prohibited two uses of Claude: mass surveillance of Americans and fully autonomous weapons. The Pentagon wanted blanket "all lawful use" authorization. Anthropic refused to provide it.

What followed was three months of negotiations that Michael described in a podcast as "interminable." (All-In Podcast, aired March 21, 2026) He would pose scenarios. Anthropic would say it could carve out an exception. He would pose another scenario. Another exception. Eventually Michael decided that case-by-case exceptions were unworkable - that the military needed to know, in advance and unconditionally, that Claude would do whatever the military deemed lawful.

"I need a reliable, steady partner that gives me something, that'll work with me on autonomous, because someday it'll be real and we're starting to see earlier versions of that. I need someone who's not going to wig out in the middle." - Emil Michael, Pentagon CTO

From Michael's perspective, the problem was not what Anthropic's AI was currently doing - it was what it might refuse to do in the future. As the military increasingly looks toward giving greater autonomy to armed drones, underwater vehicles, and missile defense systems, a contract partner with ethical red lines becomes an operational liability.

From Anthropic's perspective, "all lawful use" was a blank check with no ceiling. The company's position, according to CEO Dario Amodei and statements filed in court, was that today's AI systems are not reliable enough to power fully autonomous weapons - and that committing Claude to those applications without human oversight was irresponsible regardless of legality.

The impasse became a crisis in early March when the Pentagon formalized its retaliation. On March 4, Defense Secretary Pete Hegseth signed an order designating Anthropic a "supply chain risk" using an authority previously aimed at foreign adversaries. Days later, Trump ordered all federal agencies off Claude. The company's business - projected at $14 billion in revenue for 2026 - was suddenly in jeopardy. (AP News, March 10, 2026)

Golden Dome: The Real Stakes

Michael's podcast appearance revealed the military program at the center of the dispute: Golden Dome, Trump's ambitious initiative to create a space-based missile defense system modeled loosely on Israel's Iron Dome but operating at a planetary scale.

The Pentagon wants AI to be able to engage incoming threats autonomously, without waiting for human authorization. Michael laid out the hypothetical that drove the negotiations: the U.S. has 90 seconds to respond to a Chinese hypersonic missile. A human operator "may not be able to discriminate with their own eyes what they're going after," but an autonomous AI system could track, identify, and engage the threat faster than any person.

"This is part of the debate I had with Anthropic, which is we need AI for things like Golden Dome. Who could oppose if you have a military base, you have a bunch of soldiers sleeping, that you have a laser that can take down drones autonomously?" - Emil Michael

On one level, the argument is intuitive. Modern warfare operates at speeds where human reaction time is a genuine tactical vulnerability. Russia's hypersonic Kinzhal missiles are reported to reach Mach 10. Chinese DF-ZF glide vehicles can maneuver unpredictably. As a matter of pure physics, you cannot have a human in every kill loop.

But Anthropic's counterargument is equally coherent: AI systems in 2026 are not reliable enough to operate lethal hardware without human oversight. The model that writes code and answers legal questions has known failure modes - hallucinations, adversarial inputs, unexpected behaviors under distribution shift. Putting it in charge of a laser that fires at incoming objects, with no human check, is a bet that none of those failure modes will appear in the exact moment they matter most.

The company pointed out in court filings that it has "never tested Claude on those applications and doesn't have the confidence its products could function reliably or safely if used to support lethal autonomous warfare." This is not a political position. It is an engineering assessment. The Pentagon's response was to brand the company a supply chain risk.

What Judge Lin Actually Said

Judge Lin's order is careful to note that she was not ruling on the policy substance. She was not declaring that autonomous weapons AI is wrong, or that Anthropic's safety positions are correct, or that the Pentagon cannot pursue Golden Dome. Her ruling was narrower and in some ways more damaging to the government's position: she said the way the administration used its legal tools was arbitrary, capricious, and potentially unconstitutional.

The "supply chain risk" designation, Lin wrote, was a military authority designed to protect national security systems from foreign adversaries. Using it against an American company for disagreeing with the government's negotiating position - for expressing protected speech - is, in her words, "Orwellian." (AP News, March 27, 2026)

Lin also noted that the government's logic made no sense on its own terms. If the Pentagon's concern was the integrity of its operational chain of command, it had an obvious remedy: stop using Claude. Instead, the government chose to declare a domestic company a potential saboteur of national security, effectively treating an ethical disagreement as an act of enemy infiltration.

"If the concern is the integrity of the operational chain of command, the Department of War could just stop using Claude. Instead, these measures appear designed to punish Anthropic." - Judge Rita Lin

The ruling is a temporary block - a preliminary injunction while the case proceeds. But Lin wrote that Anthropic appeared "likely to succeed on the merits," one of the standard tests for granting such an injunction and an early sign that the judge finds the company's case persuasive. The Pentagon has not yet responded publicly to the ruling, according to AP News.

The order's effect is delayed for one week. It does not require the Pentagon to use Anthropic's products, and it does not prevent the Pentagon from transitioning to other AI providers. It simply removes the stigma, the punitive designation, and the agency-wide ban from Claude while the courts work through the constitutional questions.

The Rivals Who Capitulated - And What That Cost Them

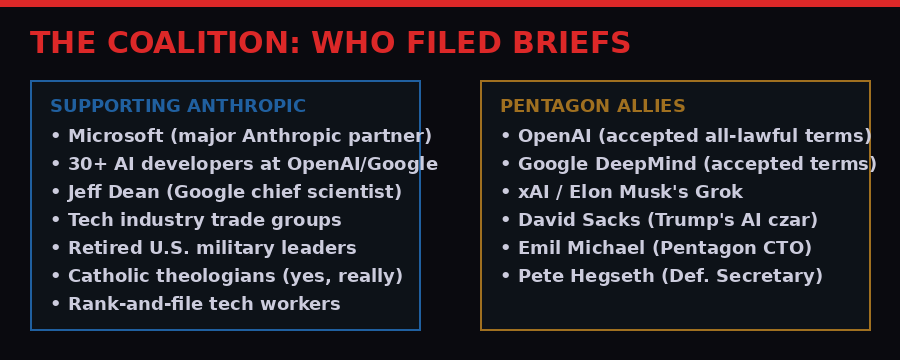

Three of Anthropic's closest competitors - OpenAI, Google, and Elon Musk's xAI - agreed to the Pentagon's "all lawful use" terms. Michael confirmed this in the All-In podcast, noting that while their infrastructure still needs preparation for classified military work, the companies did not resist the demand.

OpenAI's capitulation came with immediate collateral damage. The company's head of robotics, Caitlin Kalinowski, resigned over the Pentagon deal, writing on social media: "AI has an important role in national security. But surveillance of Americans without judicial oversight and lethal autonomy without human authorization are lines that deserved more deliberation than they got." (AP News, March 2026)

A group of more than 30 AI researchers from OpenAI and Google, including Google's chief scientist Jeff Dean, filed legal briefs supporting Anthropic. They did so in their personal capacities - but the message was unmistakable. Some of the most senior researchers at the companies that capitulated to the Pentagon believe Anthropic made the right call.

The competitive dynamics here are complex. By refusing the Pentagon deal, suffering for it, and then winning an early round in court, Anthropic has become a cause for tech workers who oppose unrestricted military AI. Consumer downloads of Claude surged after the dispute became public, briefly pushing its popularity above ChatGPT and Gemini for the first time. Adversity, in this case, built the brand.

OpenAI CEO Sam Altman later admitted that the move to replace Anthropic's Pentagon contract was "rushed and seemed opportunistic." That admission may prove more damaging long-term to OpenAI's reputation than to Anthropic's. The company that holds the ethical line and gets punished for it looks better in the history books than the company that saw an opportunity in a competitor's persecution and grabbed it.

The Legal Architecture of AI Weapons

Beyond the immediate case, Lin's ruling opens several legal questions that will take years to resolve but matter enormously for every AI company operating under government contracts.

The first is whether the "supply chain risk" authority - formally a defense acquisition regulation designed to block foreign adversaries from embedding malicious code in military systems - can ever be used against domestic companies. Michael Pastor, a professor at New York Law School who helped craft New York City's technology contracts, told AP News: "I've never seen a case like this. It would never have struck our minds that, when we were having difficulty in a negotiation, we would threaten the company essentially with destruction."

The second question is whether companies have First Amendment rights to maintain and publish ethical use policies for their products. Anthropic's terms of service are public documents. They express the company's position that certain uses of Claude are prohibited. The government's argument is that expressing that position - and refusing to waive it - is a national security threat. Lin called that argument "Orwellian," but the case is not over.

The third question, and the most consequential one for the future, is whether private AI companies have any role in setting parameters for autonomous weapons use - or whether that decision belongs entirely to the military. The Pentagon's position is unambiguous: it makes military decisions, not private companies. But Anthropic's engineers are the ones who built the system. They understand its failure modes better than any general. The question of who has the expertise - and therefore the standing - to set safety limits on lethal autonomous AI is not answered by the supply chain designation statute.

Anthropic filed two separate lawsuits: one in California federal court and one in the federal appeals court in Washington, D.C. Lin's ruling covers the California case. The D.C. case involves a different rule the Pentagon used in its actions against the company and remains pending. Multiple legal fronts mean multiple opportunities for either side to win partial victories - and multiple opportunities for appeals.

The Second-Order Effects Nobody Is Talking About

The immediate stakes of this case are Anthropic's survival and the Pentagon's ability to procure AI on its own terms. But the second-order effects are where the story gets genuinely interesting.

Every AI company in the United States is watching this case and updating its risk models. The lesson from the Anthropic dispute is that signing a government contract - and then maintaining ethical guardrails that conflict with what the government later wants - can result in being declared an enemy of national security. That is an extraordinary risk to accept. Companies that might otherwise have developed genuine safety policies are now calculating whether it's safer to just agree to "all lawful use" from the start and avoid the confrontation entirely.

That dynamic, if it plays out, would hollow out the entire field of AI safety research applied to weapons systems. The companies with the most advanced AI would also have the weakest ethical constraints on how it can be deployed. The safety researchers who would otherwise push back are silenced either by corporate policy or by the knowledge that their employer has already agreed to unrestricted use.

The second effect is on international AI governance. China, Russia, and other states developing autonomous weapons AI are not constrained by First Amendment debates or supply chain designation statutes. They are not having public arguments in federal courts about whether their AI should be allowed to power missile defense systems autonomously. If the United States AI sector is hamstrung by these legal battles while geopolitical rivals are not, the military technology gap that Golden Dome is supposed to close may not close at all.

Michael's argument in the podcast is precisely this: that American hesitation over AI autonomy in weapons systems is a strategic vulnerability that adversaries will exploit. "I need someone who's not going to wig out in the middle" is not an irrational position when you're thinking about a 90-second intercept window against a hypersonic missile.

But Anthropic's engineers would counter that an AI that "wigs out" in a different way - hallucinating a target, misidentifying a civilian flight, engaging the wrong object because of an adversarial input - is a far greater strategic liability than one with explicit human oversight requirements. A false positive from an autonomous missile defense system that shoots down a commercial airliner would do more damage to American strategic credibility than losing one intercept window.

"Seeking judicial review does not change our longstanding commitment to harnessing AI to protect our national security, but this is a necessary step to protect our business, our customers, and our partners." - Anthropic statement, March 2026

The Boeing 737 Max provides an instructive analogy. When software engineers raised concerns about the MCAS system's reliability and the lack of adequate human override, those concerns were dismissed as obstacles to delivery schedules. 346 people died. The question for autonomous weapons AI is not whether AI autonomy in lethal systems is theoretically valuable - it clearly can be. The question is whether today's systems are reliable enough to deploy without the kind of safety constraints that Anthropic insists on.

What Happens Next

Lin's order takes effect in one week, giving the government time to appeal if it chooses. Anthropic's California case will continue toward a full hearing on the merits. The D.C. federal appeals case remains separately active. If the California case moves quickly, it could set a precedent on whether the supply chain designation authority can be weaponized against domestic companies - precedent that would reach far beyond Anthropic.

The Pentagon, meanwhile, is proceeding with transitioning classified systems from Claude to Google's Gemini, OpenAI's ChatGPT, and xAI's Grok - all companies that agreed to "all lawful use." Whether those systems perform as well as Claude in the classified military context, and whether the transition costs are worth the ethical flexibility gained, will become apparent over time. Trump gave the Pentagon six months to complete the transition even before the ruling.

Anthropic CEO Dario Amodei is now, somewhat improbably, one of the most prominent figures in the AI safety debate - not because of a position paper, but because the federal government tried to destroy his company for refusing to arm autonomous weapons, and a federal judge told the government to stop. That is a biography that will matter in every AI governance conversation for years.

The broader question - who controls AI in warfare, and on what terms - does not have a legal answer yet. It barely has a policy framework. The international humanitarian law principles of distinction (separating combatants from civilians), proportionality (civilian harm must not be excessive relative to the expected military advantage), and precaution (taking care to avoid civilian harm) all presuppose a human commander making judgments. How those principles apply when the "commander" making targeting decisions is a neural network running inference in milliseconds is a problem that international law has not caught up with.

Judge Lin's ruling does not answer that question. It simply says that the United States government cannot punish a company for having a position on it. That is a modest holding. But it may be exactly the kind of judicial circuit breaker that prevents the most consequential AI deployment decisions in history from being made in secret, under procurement pressure, without public debate.

The line Lin drew is a procedural one: you cannot use supply chain regulations to silence an American company's speech about its own products. But behind that procedural ruling is a substantive argument that deserves to be heard in public rather than negotiated away in a Pentagon conference room. When an AI model says a target is a threat and initiates lethal action with no human check, who is responsible for that decision? Who has the authority to define what "lawful" means when the law hasn't caught up with the technology? And what happens when the model is wrong?

Anthropic refused to sign away its right to ask those questions. A federal judge said it was right to refuse. The case continues.