Anthropic Leaked Its Most Dangerous AI. Crypto Felt It First.

A human error at Anthropic exposed nearly 3,000 internal documents, including the announcement of "Claude Mythos" - a model the company itself describes as posing "unprecedented cybersecurity risks." Cybersecurity stocks fell 4-6% the next morning. Bitcoin dropped from $70,000 to $66,000. And the DeFi industry is staring at what comes next.

The accidental leak: roughly 3,000 unpublished Anthropic assets were left in a publicly searchable data cache - including a draft blog post describing a model with "unprecedented cybersecurity risks." [BLACKWIRE graphic]

The company that built the AI supposedly designed to be helpful and harmless just accidentally told the world it has something far more powerful - and warned that its own creation could be used to find and exploit software vulnerabilities at unprecedented speed.

Anthropic did not mean to publish this information. A human error in the company's content management system left approximately 3,000 unpublished assets in a publicly searchable data cache, according to a report by Fortune published Thursday. Among the exposed files: a draft blog post announcing a new AI model internally called "Claude Mythos," alongside descriptions of a new model tier called "Capybara" that would sit above Anthropic's existing Opus models - currently the company's most powerful public offering.

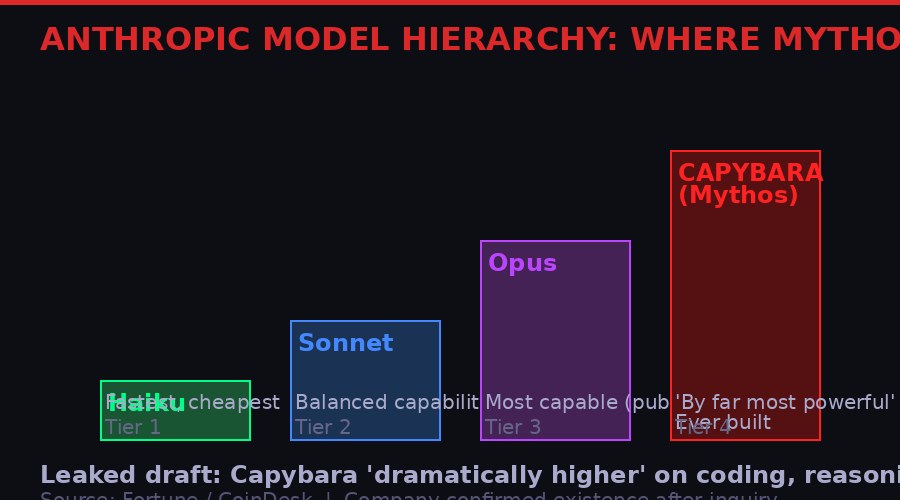

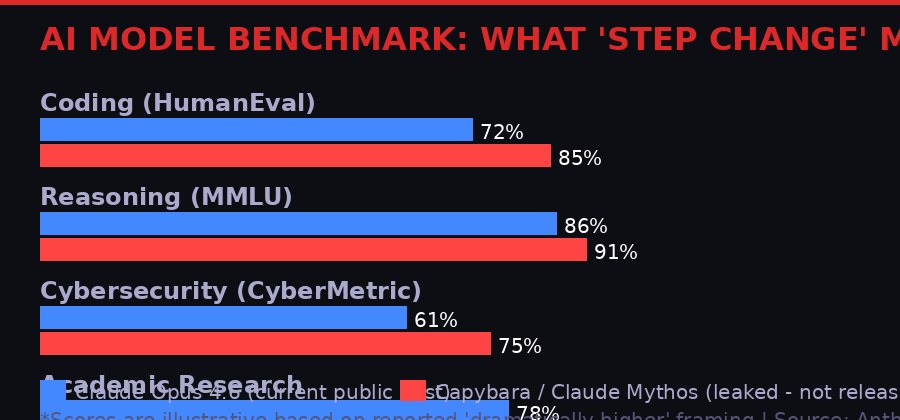

The draft said the model was "by far the most powerful AI we've ever developed." It described performance that is "dramatically higher" than Claude Opus 4.6 across software coding, academic reasoning, and cybersecurity benchmarks. It also flagged something that stopped the crypto security community cold: the model "poses unprecedented cybersecurity risks," specifically in its ability to identify and exploit software vulnerabilities.

Anthropic confirmed the model's existence after Fortune's inquiry. The company called it "a step change" in capability, acknowledged the data exposure was due to "human error," and removed public access to the cache. Early access customers are already testing it.

The market's reaction was immediate. Cybersecurity stocks fell sharply on Friday morning. Bitcoin, which had been flirting with $70,000 earlier in the session, dropped to $66,000 overnight - a $4,000 move that traders attributed in part to the leak triggering a broader tech selloff that pulled down risk assets across the board.

The Leak: What Was Actually Exposed

The Anthropic model stack: Haiku, Sonnet, and Opus are the public tiers. The leaked documents describe "Capybara" as a new tier above Opus - the model Anthropic calls Claude Mythos internally. [BLACKWIRE graphic]

Cybersecurity researchers who reviewed the exposed material found a comprehensive view of Anthropic's near-term roadmap that the company was clearly not ready to share publicly. The ~3,000 assets included draft blog posts, internal content previews, and announcement materials spanning topics beyond just the new model.

The centerpiece was a draft post describing "Capybara" - the new model tier sitting above Opus in Anthropic's internal classification. The draft described performance metrics: "Compared to our previous best model, Claude Opus 4.6, Capybara gets dramatically higher scores on tests of software coding, academic reasoning, and cybersecurity, among others."

To understand the significance: Claude Opus 4.6 is already among the most capable AI models available to the public. It performs at or above PhD level on a range of reasoning benchmarks. It can write functional, complex code. It understands security concepts well enough to audit codebases for vulnerabilities. If Capybara is "dramatically higher" on cybersecurity specifically, the capability gap over existing tools is substantial.

The word that matters in that leaked draft is "unprecedented." Anthropic's own team, writing an internal description of their product, chose that word for the cybersecurity risk dimension. Not "significant." Not "notable." Unprecedented. That framing carries weight coming from a company that is among the most safety-focused AI labs in the world - a company that has published extensive research on AI safety, constitutional AI, and model alignment.

"Compared to our previous best model, Claude Opus 4.6, Capybara gets dramatically higher scores on tests of software coding, academic reasoning, and cybersecurity, among others."

- Anthropic internal draft blog post, exposed in data cache breach (source: Fortune, March 26, 2026)

Anthropic's current public models already have access controls and safety features to prevent them from being used to directly write exploit code. The concern with Capybara/Mythos is not that it will write exploits on command - it presumably has similar guardrails. The concern is that its underlying capability for understanding complex codebases and identifying subtle logical flaws is so high that even with guardrails, its knowledge can be leveraged by sophisticated actors who know how to work around them. Or that less safety-focused competitors will train on the same capabilities without those guardrails.

The model is not publicly available. Anthropic says it is being tested with "early access customers" and is "expensive to run." The company indicated it is "being deliberate" about release timing given the capabilities involved. The data cache has been secured. The draft blog post is gone from public view.

But the information is already out. The capabilities are known. And the market has started pricing what they mean.

The Immediate Market Impact

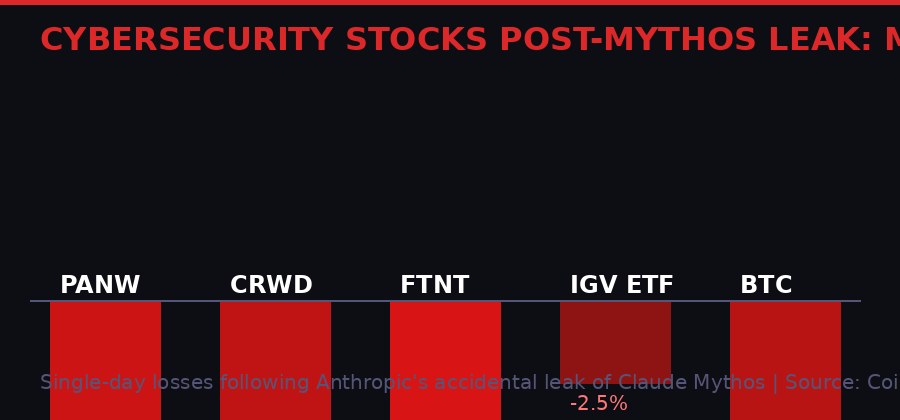

Market fallout from the Mythos leak: Palo Alto Networks, CrowdStrike, and Fortinet each fell 4-6% in a single session. The IGV tech-software ETF lost 2.5%. Bitcoin dropped 4.2%. [BLACKWIRE graphic / CoinDesk]

The market's first instinct when an AI model with "unprecedented cybersecurity risks" is accidentally revealed to the public: sell the companies that sell cybersecurity.

The logic, while counterintuitive at first glance, is consistent with how institutional money has historically processed AI disruption. Palo Alto Networks (PANW), CrowdStrike (CRWD), and Fortinet (FTNT) all dropped between 4% and 6% on Friday. The iShares Expanded Tech-Software Sector ETF (IGV) lost 2.5%. These moves happened on top of an already-weak market session driven by Iran war fears, oil above $100, and the broader $17 trillion equity rout that has been grinding equities lower for weeks.

The reasoning is this: if an AI can identify and exploit software vulnerabilities at a capability level that traditional security tools cannot match, the value proposition of signature-based and pattern-matching security products comes into question. The existing cybersecurity playbook was designed for human attackers and rule-based malware. An AI that can generate novel, zero-day-class attack vectors tailored to specific infrastructure configurations represents a category shift in the threat landscape.

At the same time, selling the defenders assumes the defenders will not also use the same AI. Both sides of the equation get better. The security firms that move fastest to integrate Capybara-class capabilities into their defensive platforms potentially strengthen their competitive position. But the market, in the immediate reaction, chose fear over that logic.

Bitcoin's slide from $70,000 to $66,000 happened as the tech-software sector sold off. The Anthropic leak was not bitcoin's primary driver on Friday - oil, Iran, rate cut fears, and $300 million in long liquidations carried more weight. But the leak contributed to the risk-off atmosphere that made every rally attempt fail. CoinDesk reporting explicitly tied the leak to bitcoin's failure to hold above $70,000 and its subsequent slide back toward $66,000.

The overnight move from $70K to $66K is approximately $4,000 - a 5.7% drop in a matter of hours. For context: bitcoin's 30-day implied volatility index continued to fall despite the price weakness, suggesting the options market does not see this as the beginning of a panic spiral. But the directional move was real, and the leak was part of what drove it.
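The arithmetic behind that figure is easy to check. The sketch below uses only the two round prices quoted in this article; the helper names are illustrative. It also shows what starting price a 4.2% decline to $66,000 would imply, since the graphic above cites that smaller number and percentage moves depend on which reference price is used.

```python
def percent_drop(start: float, end: float) -> float:
    """Decline from start to end, as a percentage of start."""
    if start <= 0:
        raise ValueError("start must be positive")
    return (start - end) / start * 100.0


def implied_start(end: float, drop_pct: float) -> float:
    """Starting price that would produce drop_pct when falling to end."""
    return end / (1.0 - drop_pct / 100.0)


# Round figures quoted in the article: $70,000 down to $66,000.
print(f"{percent_drop(70_000, 66_000):.1f}%")  # 5.7%

# A 4.2% fall to $66,000 implies a reference price just under $69,000.
print(f"${implied_start(66_000, 4.2):,.0f}")
```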

The DeFi Security Crisis It Landed Into

The security landscape the Mythos leak landed in: Resolv stablecoin exploit, Ripple's AI-assisted vulnerability audit, Ethereum's post-quantum hub launch, and now an AI model that may make all of these threats significantly worse. [BLACKWIRE graphic]

The timing of the Anthropic leak was particularly damaging for the crypto security conversation because it landed in the middle of a week that had already surfaced three significant security stories across the sector.

First: the Resolv stablecoin. Resolv lost its peg this week after an attacker exploited a minting contract that had no oracle checks and relied on single-key access control. Oracle checks are a basic security mechanism that verifies external price data before executing on-chain logic. The absence of them is a foundational vulnerability - the kind that a moderately skilled attacker can find with some reading of the contract code. Single-key access control means one private key had the ability to mint tokens; if that key is compromised or the holder is malicious, the entire stablecoin supply is at risk.
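To make the two missing safeguards concrete, here is a minimal Python sketch of a mint function that has both. It is a toy model, not Resolv's contract code: the class name, the 1% deviation tolerance, and the 2-of-3 signer setup are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class MintGuard:
    """Toy mint function with an oracle check and M-of-N approval (hypothetical)."""
    signers: frozenset
    threshold: int
    max_deviation: float = 0.01  # assumed tolerance between quoted and oracle price
    supply: float = 0.0

    def mint(self, collateral_usd: float, quoted_price: float,
             oracle_price: float, approvals: set) -> float:
        # Access control: several distinct authorised keys must approve, not one.
        if len(approvals & self.signers) < self.threshold:
            raise PermissionError("not enough authorised approvals")
        # Oracle check: verify the external price before executing the mint.
        if oracle_price <= 0:
            raise ValueError("oracle price unavailable")
        if abs(quoted_price - oracle_price) / oracle_price > self.max_deviation:
            raise ValueError("quoted price deviates from oracle")
        minted = collateral_usd / oracle_price
        self.supply += minted
        return minted


guard = MintGuard(signers=frozenset({"key_a", "key_b", "key_c"}), threshold=2)
print(guard.mint(1_000.0, 1.00, 1.00, {"key_a", "key_b"}))  # 1000.0

try:
    guard.mint(1_000_000.0, 1.00, 1.00, {"key_a"})  # a single key is not enough
except PermissionError as err:
    print("rejected:", err)
```

Without the first check, whoever holds the one key can mint at will. Without the second, a caller can mint against a price the contract never verified.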

The Resolv exploit did not require an AI. It required someone reading the contract and identifying two basic security failures. Capybara-class AI does not just read contracts - it analyzes entire dependency trees, simulates attack scenarios across all possible execution paths, and identifies non-obvious interaction effects between multiple contracts that appear secure in isolation but create exploitable conditions in combination. That capability does not just make existing attacks faster. It makes currently-undiscoverable attacks discoverable.

Second: Ripple's XRP Ledger security overhaul. Ripple announced this week that it deployed an AI-assisted red team to audit the XRP Ledger's codebase - a 13-year-old system that has run in production continuously since launch. The AI found more than 10 previously unknown vulnerabilities. Ripple framed this as a security improvement: identify and patch before an attacker finds them. But the subtext is stark. A codebase maintained by some of the most experienced teams in the industry carried more than 10 vulnerabilities that human auditors had missed for over a decade. AI found them in what appears to have been a relatively short audit engagement.

Third: Ethereum's post-quantum security hub. The Ethereum Foundation launched a dedicated post-quantum security research program this week, backed by eight years of research into quantum-resistant cryptography. The program targets Ethereum's long-term security against quantum computers that could break elliptic curve cryptography - the mathematical foundation of current wallet security. The launch came alongside broader crypto industry discussion of quantum timelines, with Google's research suggesting Q-Day (the point at which quantum computers can break current encryption) may arrive as early as 2029.

"The irony needs no elaboration. A company building what it describes as an AI model with unprecedented cybersecurity capabilities left the announcement of that model in an unsecured, publicly searchable data store due to human error."

- CoinDesk analysis, March 28, 2026

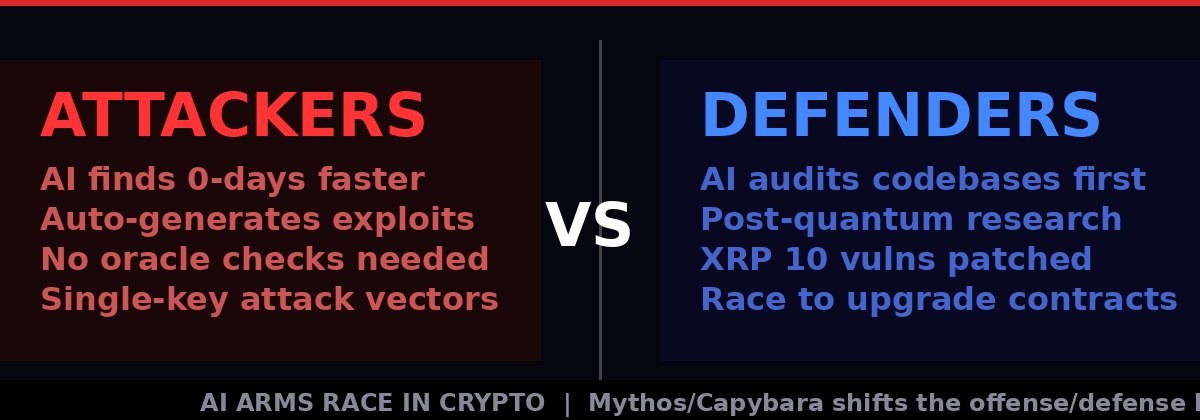

The Anthropic leak dropped into this specific context - a week where the crypto industry was already confronting AI-enabled vulnerability discovery, smart contract exploits, and quantum timeline pressure simultaneously. Mythos/Capybara does not create these problems, but it accelerates every single one of them. If the gap between AI-assisted attack capability and AI-assisted defense capability widens - which happens when one side has access to dramatically superior tools - the attack side wins by default until defenders catch up.

What Capybara Actually Changes for Smart Contract Security

The AI arms race in crypto security: both attackers and defenders gain capability as AI models improve, but an asymmetric leap in offensive capability - before defensive tools catch up - creates a critical exposure window. [BLACKWIRE graphic]

The smart contract security industry currently operates on a manual audit model supplemented by automated analysis tools. A typical audit cycle takes weeks. Auditing firms like Trail of Bits, CertiK, and OpenZeppelin deploy teams of experienced security engineers who read contract code, simulate interactions, fuzz test inputs, and attempt to construct attack scenarios. Automated tools run static analysis - looking for known vulnerability patterns and common coding mistakes.

This model works against human attackers because humans also take weeks to analyze complex systems. The speed parity between offense and defense means audits, while not perfect, catch most vulnerabilities before attackers can exploit them. The Bybit hack, which lost $1.4 billion in February 2025, was an exception driven by social engineering and interface manipulation rather than raw contract exploitation - and it dominated industry discussion for months precisely because it broke through defenses that should have held.

What Capybara-class AI changes is the time dimension on the attack side. If an AI can analyze a complex DeFi protocol's entire dependency tree - all its contracts, all their interactions, all the external oracle and bridge dependencies - in minutes rather than weeks, and flag the non-obvious multi-contract interaction effects that human auditors miss, it compresses the window between protocol deployment and potential exploit. An attacker using such a tool does not need to be smarter than the defenders. They just need to move faster.
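A rough sense of why the dependency tree matters: the sketch below walks a made-up protocol graph to collect everything a single contract transitively depends on, then counts how many contract pairs an auditor would have to reason about. The contract names and the graph are hypothetical, and real analysis involves far more than reachability.

```python
from collections import deque
from math import comb


def transitive_dependencies(graph: dict, root: str) -> set:
    """Breadth-first walk collecting every contract reachable from root."""
    seen = set()
    queue = deque([root])
    while queue:
        node = queue.popleft()
        for dep in graph.get(node, []):
            if dep != root and dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


# Hypothetical protocol: a vault that relies on an oracle and a bridge.
protocol = {
    "Vault": ["PriceOracle", "Bridge"],
    "PriceOracle": ["DexPool"],
    "Bridge": ["TokenA"],
    "DexPool": ["TokenA"],
}

deps = transitive_dependencies(protocol, "Vault")
print(sorted(deps))  # ['Bridge', 'DexPool', 'PriceOracle', 'TokenA']

# Pairwise interactions grow quadratically with the contracts in scope.
in_scope = len(deps) + 1
print(comb(in_scope, 2))  # 10
```

Five contracts already give ten pairs to check. The multi-contract interaction effects described above live in those pairs, and in larger combinations, which is where weeks of manual review go.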

This is not hypothetical. The XRP audit, which found 10+ vulnerabilities in a codebase that human teams had maintained for 13 years, already demonstrates the gap between AI-assisted analysis and traditional review. The Resolv exploit this week showed what happens when basic security checks are absent. The combination - protocols with subtle vulnerabilities, auditors missing them, and AI tools that can find them rapidly - is the attack scenario the industry is now actively managing.

The defensive response that Ripple, Ethereum, and others are pursuing - using the same AI tools offensively in red team exercises - is the correct strategy. The question is whether the defensive adoption of Capybara-class tools keeps pace with offensive adoption. Historically, in cybersecurity as in other domains, the offense benefits more from capability leaps in the short term because it only needs to find one path through the defense, while defense must cover all paths.
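The asymmetry can be put in numbers with a simple probability sketch. It assumes each potential attack path is independently left open with the same small probability, which is a simplification introduced here, not a claim from any of the cited reports.

```python
def breach_probability(paths: int, miss_rate: float) -> float:
    """Chance that at least one of `paths` attack paths is left open,
    assuming each is missed independently with probability `miss_rate`."""
    if paths < 0 or not 0.0 <= miss_rate <= 1.0:
        raise ValueError("paths must be >= 0 and miss_rate within [0, 1]")
    return 1.0 - (1.0 - miss_rate) ** paths


# Defenders who close 99% of paths still lose often once paths multiply.
for n in (1, 10, 100):
    print(n, round(breach_probability(n, 0.01), 3))
```

With a 1% miss rate, 100 candidate paths leave roughly a 63% chance that at least one is open. A tool that enumerates more paths raises that count for the attacker at no extra cost, while the defender has to drive the miss rate down on every one of them.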

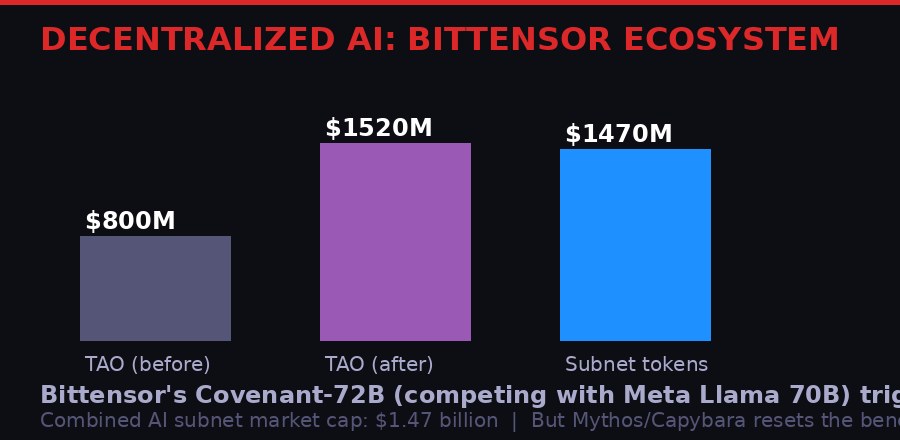

The Decentralized AI Token Market: Benchmark Reset

Bittensor's Covenant-72B release drove a 90% TAO rally and pushed subnet tokens to $1.47 billion combined market cap. Then Mythos dropped. The decentralized AI market now has to reckon with how far behind centralized labs it actually is. [BLACKWIRE graphic]

The Mythos leak lands particularly hard for the decentralized AI sector - a market segment built on the premise that permissionless, distributed AI development can compete with centralized corporate labs.

Bittensor's decentralized AI network released Covenant-72B this week, a model that competes with Meta's Llama 2 70B. The release drove an extraordinary response: TAO, Bittensor's native token, rallied approximately 90%. Combined subnet tokens across the Bittensor ecosystem reached a market cap of $1.47 billion. This was treated as evidence that the decentralized AI thesis was working - that distributed training networks could produce competitive frontier models without the $10 billion-plus capital expenditure that Anthropic, OpenAI, and Google pour into their infrastructure.

Covenant-72B competing with Meta's Llama 2 70B is genuinely impressive for a decentralized network. But "competing with Llama 2 70B" means competing with a model that Meta released in mid-2023. It means competing with technology that is nearly three years old. Anthropic's current public best, Opus 4.6, is already categorically more capable. Capybara/Mythos is described as "dramatically higher" than Opus 4.6. The actual frontier is not Llama 2 70B. It is Mythos, and whatever OpenAI and Google are building at equivalent capability levels that have not been accidentally leaked yet.

The benchmark gap between decentralized AI and the corporate frontier is not closing - it may be widening. The capital and infrastructure required to train a Capybara-class model are orders of magnitude beyond what a distributed network of consumer GPU operators can coordinate. Bittensor's economic model works well for running inference on existing models and fine-tuning within specific domains. It does not yet work well for training frontier models that require sustained coordination of tens of thousands of high-end accelerators with specialized interconnect hardware.

For TAO and similar AI tokens, the Mythos revelation is a double signal: it validates that AI capability development is accelerating rapidly (good for the thesis that AI infrastructure is valuable) while simultaneously demonstrating that the centralized labs are further ahead than the decentralized ecosystem's most optimistic estimates suggested (bad for claims of competitiveness). Markets will have to reconcile those two signals as trading resumes.

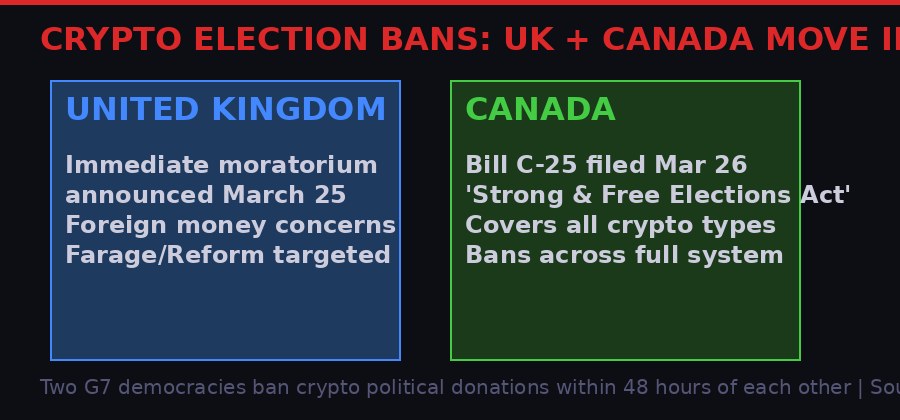

Canada and the UK Move Simultaneously: Crypto Gets Banned From Democracy

Two G7 democracies, one week: the UK and Canada both announced bans on cryptocurrency political donations within 48 hours of each other, citing foreign money concerns and traceability challenges. [BLACKWIRE graphic]

While the Mythos story dominated tech-crypto headlines, two G7 democracies made a separate kind of statement about crypto's role in their political systems - and the coordination appears non-coincidental.

The United Kingdom announced an immediate moratorium on cryptocurrency donations to political parties on March 25, citing concerns that digital assets could be used to obscure the origin of foreign money in British politics. The announcement came from Prime Minister Keir Starmer's government and specifically targeted Reform UK, the party led by Nigel Farage, which had expressed interest in accepting crypto donations as a way to bypass traditional financial controls on political funding. The ban applies immediately and covers all registered political entities.

Within 48 hours, Canada filed Bill C-25 - the Strong and Free Elections Act - on March 26. The bill would prohibit political contributions made in bitcoin and other cryptoassets, as well as money orders and prepaid payment products, grouping them all as forms of funding that are difficult to trace. The ban applies broadly: registered parties, riding associations, candidates, leadership and nomination contestants, and third parties engaged in election advertising.

The Canadian case is notable because it addresses a theoretical vulnerability rather than a documented one. Canada has permitted crypto donations since 2019 under an administrative framework that classified them as non-monetary contributions. But no major federal party has publicly accepted crypto, and no contributions have been disclosed in either the 2021 or 2025 elections. Canada's Chief Electoral Officer nonetheless grew increasingly uncomfortable with the arrangement, shifting from recommending tighter rules in 2022 to recommending an outright ban by November 2024.

"Contributor identification is fundamentally difficult" in cryptocurrency systems, according to the Chief Electoral Officer's 2024 recommendation to the government - a position that forms the core of Bill C-25.

- Elections Canada report, November 2024

The simultaneous UK-Canada moves reflect a coordinated Western democratic response to a specific threat model: that crypto's pseudonymity creates a vector for foreign actors to fund domestic political movements in ways that evade existing campaign finance disclosure requirements. Both countries have had recent high-profile concerns about foreign electoral interference - Canada from Chinese state actors according to intelligence reports, the UK from Russian and Gulf state actors.

For the crypto industry's political engagement narrative - the argument that crypto represents a pathway to more transparent, auditable campaign finance - these bans are a significant rebuttal. Two countries with functioning crypto regulations and disclosure frameworks looked at the technology and still concluded that the traceability problems outweigh the transparency benefits for political donations specifically.

What the Leak Tells You About the Next 12 Months in DeFi Security

The capability gap visualized: leaked draft describes Capybara as 'dramatically higher' than Opus 4.6 across cybersecurity, coding, and reasoning benchmarks. The gap between what attackers and defenders can access is about to narrow - or flip. [BLACKWIRE graphic / Anthropic leaked draft]

The way to read the Anthropic Mythos situation for the next 12 months in DeFi is through the lens of who gets access to the capability first, and in what context.

Anthropic is testing Mythos with "early access customers." It is expensive to run and not yet available generally. The company is being "deliberate" about release timing. In practice, this means the gap between Mythos being available to Anthropic's enterprise partners and comparable capability reaching the open source community and, by extension, criminal actors is likely measured in months, not years. The capability does not stay exclusive. The pattern since GPT-4 suggests that closed frontier capabilities become open-source capabilities within 12-18 months.

DeFi protocols deployed today will still be operational in 12 months. The ones that have not been audited using Mythos-class tools may be carrying vulnerabilities that current audit methods cannot reliably detect. Ripple's finding of 10+ vulnerabilities in a 13-year-old codebase that humans had audited for years suggests the scale of what AI-assisted analysis might find in the roughly $200 billion of value locked in DeFi smart contracts.

The industry's correct response is not panic but acceleration. The same tools that can find vulnerabilities before attackers can also be deployed defensively - which is exactly what Ripple did. The firms and protocols that move fastest to integrate Capybara-class analysis into their security pipeline before the capability becomes widely available to attackers will be in the strongest position. The ones that treat this as someone else's problem are the ones that will end up in a post-exploit post-mortem explaining why they did not see it coming.

There is also a second-order consideration for protocol insurance products like Nexus Mutual and UnoRe. If the actuarial models underpinning DeFi insurance pricing assume a certain baseline rate of exploits per year, and that rate is about to increase because AI is expanding the attack surface faster than defenses can adapt, those models are wrong. Insurance premiums for DeFi protocols may need significant repricing upward - which translates directly to higher costs for protocols and, ultimately, higher costs for users in the form of reduced yields or higher fees.
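The repricing logic can be sketched with a textbook expected-loss premium. This is not how Nexus Mutual or any other provider actually prices cover; the formula, the 2% and 4% exploit rates, the 50% loss severity, and the 25% loading are all assumptions chosen to show the direction of the effect.

```python
def annual_premium(exploit_rate: float, cover: float,
                   severity: float = 0.5, loading: float = 0.25) -> float:
    """Expected-loss premium: P(exploit) x share of cover lost x cover,
    marked up by a loading for costs and capital (all inputs hypothetical)."""
    if not 0.0 <= exploit_rate <= 1.0 or not 0.0 <= severity <= 1.0:
        raise ValueError("exploit_rate and severity must be within [0, 1]")
    return exploit_rate * severity * cover * (1.0 + loading)


cover = 1_000_000  # hypothetical $1M of cover on one protocol
before = annual_premium(0.02, cover)  # assumed pre-AI exploit rate
after = annual_premium(0.04, cover)   # assumed rate once attackers adopt AI tools

print(f"${before:,.0f} -> ${after:,.0f}")  # $12,500 -> $25,000
```

Under this simple model the premium scales linearly with the exploit rate, so a model calibrated on the old rate underprices cover by exactly the factor by which the rate has risen.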

Timeline: From Cache Error to Market Chaos

The sequence: accidental exposure Thursday, Anthropic confirmation, market selloff Friday, security analysis over the weekend. The leak was out for less than 24 hours before global markets responded. [BLACKWIRE graphic]

The irony embedded in the entire Mythos episode is one the industry should sit with. The company building an AI system it describes as posing unprecedented cybersecurity risks, because of its ability to find vulnerabilities, left the announcement of that system in an unsecured, publicly searchable data store because of human error in a content management system. The most capable AI they have ever built could have found that misconfiguration in seconds. Their human operations team missed it long enough for outside researchers to review nearly 3,000 files.

If Capybara/Mythos is as capable as the leaked draft suggests, the first thing it should audit is Anthropic's own infrastructure. The fact that the leak happened at all suggests the answer to that audit would be instructive.

For the crypto industry, the signal is simple: the AI tools coming in the next 12 months will find things in your contracts that no human auditor and no current automated tool will find. The protocols that get ahead of this - by deploying the new tools defensively before attackers can use them offensively - will survive. The ones that do not are writing post-mortems for someone else to read.

The clock on that window started the moment Fortune published its story. It ran for exactly as long as it took to go from "Anthropic internal draft" to "global headline." That was less than 24 hours.

Sources

- Fortune - Anthropic data cache breach, Claude Mythos discovery (March 26, 2026)

- CoinDesk - "Anthropic's massive 'Claude Mythos' leak sends software names and crypto sharply lower" (March 27, 2026)

- CoinDesk - "Here's what next as Anthropic's most powerful AI model leaked via unsecured data cache" (March 28, 2026)

- CoinDesk - "Here's how bitcoin, Ethereum and other networks are preparing for the looming quantum threat" (March 28, 2026)

- Anthropic - Official confirmation to Fortune: model is "a step change" and "the most capable we've built to date"

- Ripple - XRP Ledger AI security audit announcement, 10+ vulnerabilities discovered

- Ethereum Foundation - Post-quantum security hub launch, March 2026

- Resolv stablecoin - Peg loss incident, minting contract exploit, March 2026

- Bittensor - Covenant-72B release, TAO +90% rally, subnet market cap $1.47B

- Canadian Parliament - Bill C-25, Strong and Free Elections Act (filed March 26, 2026)

- CoinDesk - "Canada moves to ban crypto donations for election campaigns following UK" (March 28, 2026)

- Elections Canada - Chief Electoral Officer recommendation for crypto donation ban (November 2024)

- UK Government - Immediate moratorium on cryptocurrency political donations (March 25, 2026)

- Coinglass - Futures liquidation data, March 27, 2026

- iShares - IGV ETF performance data, March 27, 2026