Pentagon Fires Back: DOJ's 40-Page Brief Argues Anthropic Has No Case

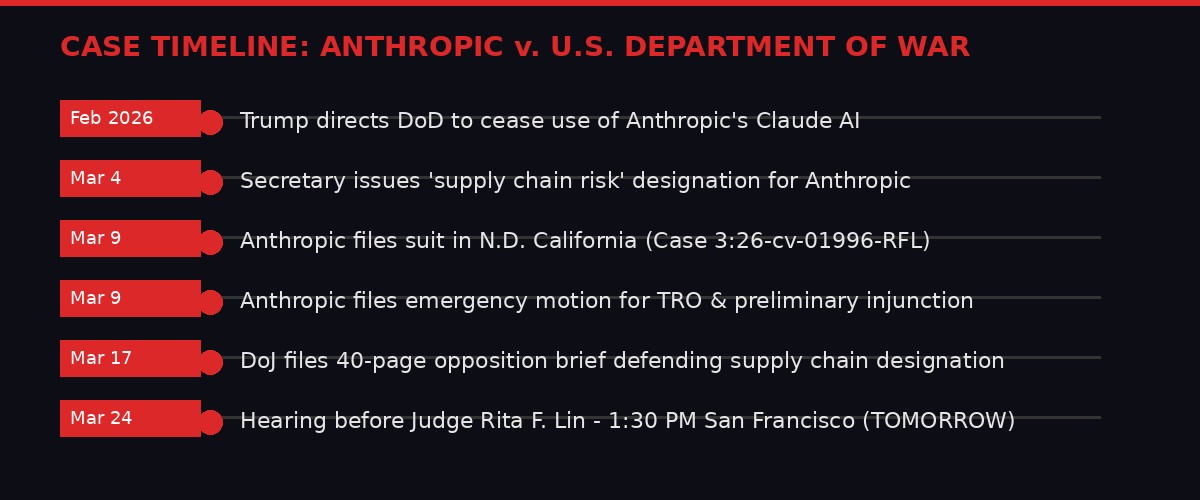

Hearing is scheduled for March 24, 2026 at 1:30 PM PT before Judge Rita F. Lin at the San Francisco federal courthouse. Case No. 3:26-cv-01996-RFL.

The hearing tomorrow could reshape how the U.S. government contracts with AI companies.

When the Trump administration banned Anthropic's Claude from federal agencies in early March, most people expected it to blow over quickly. A tech company fights a designation, cuts a deal, moves on. That's how these things usually go.

That's not what happened. Anthropic sued the Department of Defense. And on March 17th, the Department of Justice filed a 40-page brief that makes clear: the government has no intention of settling, folding, or backing down. The DOJ thinks Anthropic will lose on every single legal theory it raised.

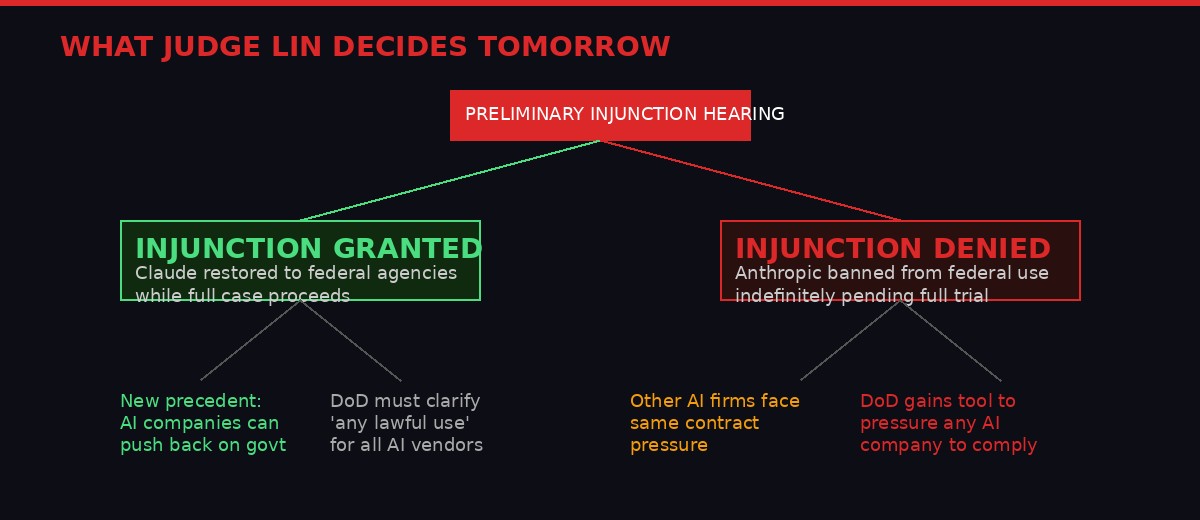

Tomorrow at 1:30 PM Pacific, Judge Rita F. Lin will hear arguments in the Northern District of California. The outcome - whether she grants or denies Anthropic's request for a preliminary injunction - could shape the AI industry's relationship with the U.S. government for years to come.

Here's what the government is actually arguing, what's really at stake, and why every AI company with federal ambitions should be watching this courtroom closely.

How the case developed from the initial ban to tomorrow's critical hearing. Source: CourtListener, Case 3:26-cv-01996-RFL.

The Contract Clause That Started Everything

To understand why this case exists, you need to understand one specific document: the Department of Defense's standard technology procurement contract. It contains a clause the government calls the "any lawful use" policy.

The clause is exactly what it sounds like. The government says: we're buying your technology, and we reserve the right to use it for any purpose permitted by law. That's it. No carve-outs. No restrictions. No Anthropic usage policy applying on top.

Anthropic refused to sign it. The company's Acceptable Use Policy (AUP) prohibits Claude from being used in ways that could cause mass casualties, enable certain types of weapons development, or support some surveillance applications. Anthropic told the DoD it couldn't contractually waive those restrictions - Claude would always operate under Anthropic's AUP.

The Pentagon's response was not a negotiation. It was a designation.

According to court filings (CourtListener, Case 3:26-cv-01996), the Secretary issued a formal determination that Anthropic's behavior posed a "supply chain risk" to the Department of War. Federal agencies were then directed to discontinue use of Claude. Every government contract Anthropic had - or was pursuing - effectively evaporated.

Anthropic's position is that the designation was retaliation for its safety policies, not a genuine national security concern. The DOJ's position is that the government has every right to decide what goes in its supply chain, full stop.

The "any lawful use" clause vs. Anthropic's usage policy - the gap that produced this lawsuit.

Inside the 40-Page Opposition: Four Arguments the Government Is Making

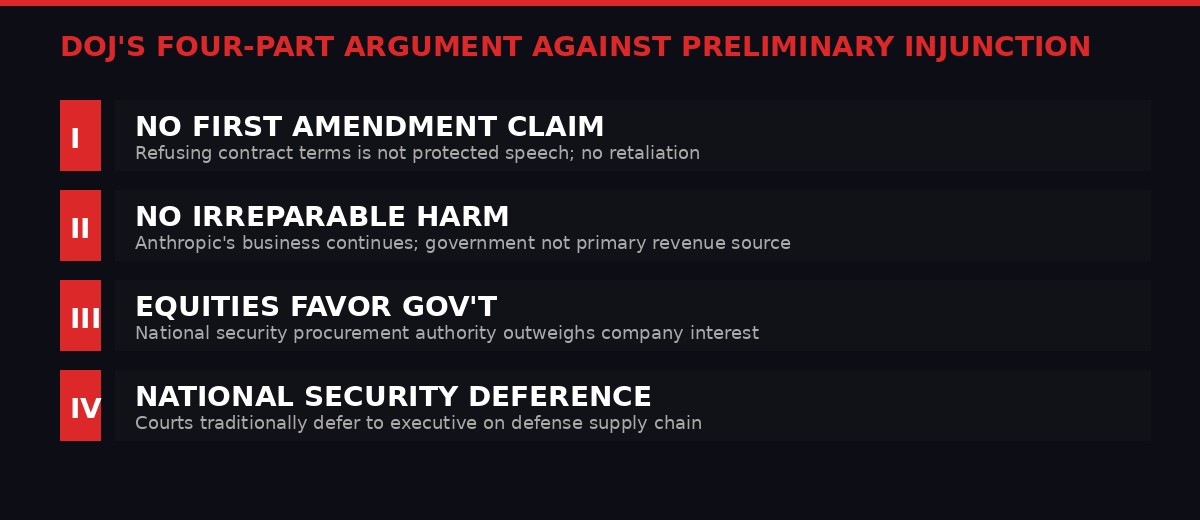

The DOJ's March 17th brief (Document 96, filed by Brett Shumate's Civil Division, argued by Senior Trial Counsel Kristina Wolfe and James Harlow) is a comprehensive takedown of every legal theory Anthropic raised. It doesn't hedge. It argues that Anthropic cannot win on anything.

The government's four-part structure for defeating Anthropic's preliminary injunction request. Source: Case 3:26-cv-01996-RFL, Document 96.

Argument One: Refusing a contract term is not protected speech. Anthropic's First Amendment claim rests on the theory that the government punished it for expressing safety-related views about AI. The DOJ calls this legally incoherent. Its brief argues that declining to accept a contractual provision is "not speech" - it is conduct. You can't transform a business negotiation failure into a constitutional violation by characterizing your refusal as expressing a viewpoint. The DOJ cites Giboney v. Empire Storage & Ice Co. (1949) and Holder v. Humanitarian Law Project (2010) for the proposition that conduct integrated with commercial activity doesn't magically become protected expression.

Argument Two: Even if speech was involved, Anthropic wasn't punished for it. The brief methodically constructs an alternative explanation for every action taken against Anthropic. The Trump directive to cease use of Claude? The government says that's executive authority over procurement decisions. The supply chain designation? A reasonable determination about vendor reliability when a company won't provide standard contractual assurances. The DOJ argues none of this traces back to Anthropic's public safety advocacy. The company is free to say whatever it wants about AI. It just can't demand a federal contract on terms of its choosing.

"Anthropic's speech was not the motivating factor for the challenged actions. Even assuming a retaliatory motive, the Government would have acted the same - it cannot procure technology from a vendor who refuses standard contracting terms."

DOJ Opposition Brief, Document 96, Case 3:26-cv-01996-RFL (March 17, 2026)

Argument Three: The Secretary's determination was lawful under the Administrative Procedure Act. Anthropic's APA claim argues the supply chain designation was arbitrary and capricious - legal shorthand for "they made it up without a rational basis." The DOJ responds that the Secretary has broad statutory authority over defense supply chains and that a vendor refusing standard contract terms is, objectively, a rational basis for a supply chain risk determination. The brief draws on China Unicom (Americas) Operations Ltd. v. FCC (9th Cir. 2024), in which national security exclusions were upheld with relatively minimal judicial scrutiny.

Argument Four: Due process doesn't apply to government procurement decisions. Anthropic argued it had a property interest in its existing contracts and should have received notice and an opportunity to respond before being designated. The DOJ treats this as a novel and unlikely theory. Government contractors have limited property interests in contracts, and the executive branch has historically broad authority to make security-related procurement decisions without the procedural requirements that attach to, say, licensing revocations. The brief cites Dep't of the Navy v. Egan (1988) extensively - the Supreme Court's most important statement that courts should defer substantially to the executive on national security decisions.

Taken together, the government's brief doesn't just argue Anthropic will lose. It argues that courts have no real authority to second-guess these decisions at all.

The National Security Deference Problem

That last point is where Anthropic faces its biggest obstacle - and where the stakes for the entire tech industry become visible.

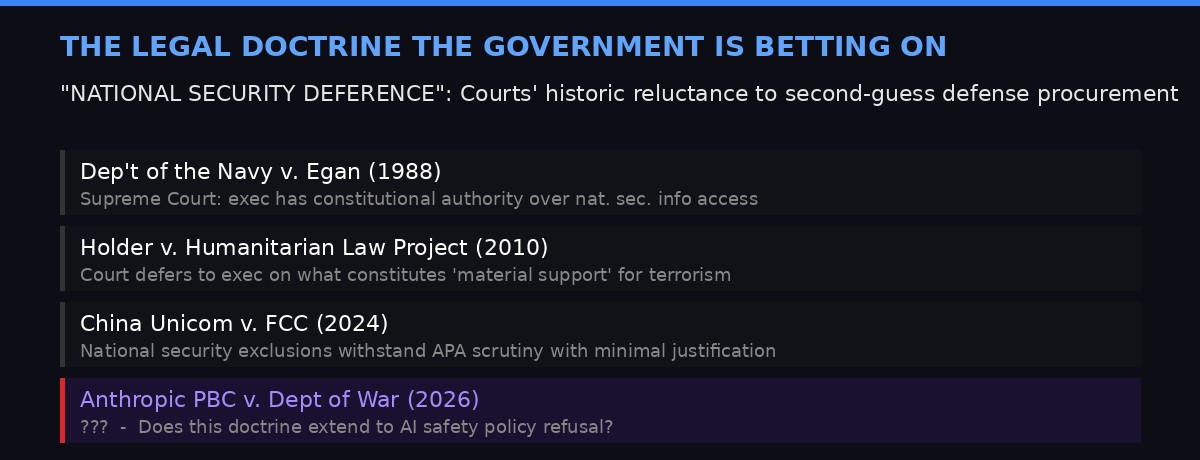

There is a long tradition in American constitutional law of courts treating national security claims from the executive branch with extreme deference. Dep't of the Navy v. Egan - cited four times in the DOJ brief - established that the President has "constitutional authority to protect classified information" and that "unless Congress specifically has provided otherwise, courts traditionally have been reluctant to intrude upon the authority of the Executive in military and national security affairs."

Key cases the DOJ is relying on - and the question of whether they extend to AI safety policy disputes.

The question in Anthropic's case is whether that deference applies when the "national security" label is being used not to protect classified programs or military secrets, but to enforce contractual compliance. The government is essentially arguing: we get to decide what's in our supply chain, we don't have to explain that decision in detail, and courts shouldn't interfere.

If Judge Lin accepts that framing, Anthropic loses on everything. The preliminary injunction is denied, Claude stays locked out of federal agencies while the full case proceeds, and every other AI company receives an unmistakable signal: the government's "any lawful use" clause is non-negotiable.

If she rejects that framing - if she decides courts can examine whether the "supply chain risk" designation was genuine national security analysis or regulatory retaliation - the case becomes genuinely competitive. Anthropic has declarations from its own executives (including VP of Research Jared Kaplan) explaining what the AUP restrictions are designed to prevent and why they're consistent with responsible government use.

The legal community is watching for signals about how broadly courts will apply deference doctrine in the AI era. Historically, these arguments were made about weapons systems, satellite technology, and cryptographic exports. This is the first major case where a company is arguing the government used national security framing to punish it for publishing an AI ethics policy.

What "No Irreparable Harm" Actually Means for Anthropic

Even if Judge Lin finds Anthropic is likely to succeed on its legal claims, she won't grant a preliminary injunction unless she also finds the company will suffer irreparable harm before the full case is decided. This is where the government's brief gets strategically interesting.

The DOJ devotes significant space to arguing that Anthropic's business is not being destroyed by the designation. The company still has private-sector contracts. It still has its primary revenue streams. Federal agencies were not Anthropic's dominant customer base. Therefore, whatever harm exists from the ban is monetary and recoverable - not the kind of irreversible injury that justifies emergency court intervention.

This is a legal argument, but it's also a statement about the government's read of Anthropic's negotiating position. If federal revenue isn't material to Anthropic's survival, then the designation doesn't threaten the company's existence - it just cuts off one revenue channel. Uncomfortable, but not catastrophic.

Anthropic's brief in support of its motion included 27 exhibits and declarations from multiple senior executives - including VP of Federal Partnerships Sarah Heck, who presumably laid out the commercial impact in detail. If the judge finds that loss substantial enough to constitute irreparable harm to Anthropic's mission or viability, she has more room to grant interim relief even if she views the merits as a close call.

The balance of equities also matters. The government argues that an injunction forcing it to reinstate Claude across federal agencies - while an appeal is potentially pending - creates military and operational risks if something goes wrong with an AI system operating under policy restrictions the government never agreed to. Anthropic argues the opposite: that the government's own agencies were already using Claude successfully before the ban, and that the designation was sudden, unexplained, and retaliatory.

The Wider AI Industry Is Paying Very Close Attention

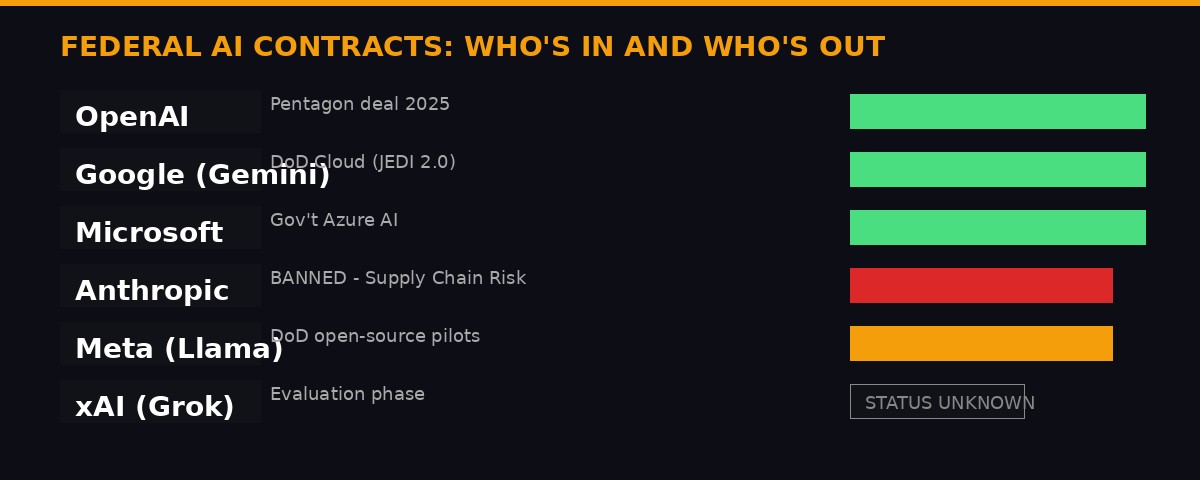

This case doesn't just affect Anthropic. The outcome will function as a roadmap for how every AI company navigates government contracts.

Anthropic is the only major AI lab to have refused the Pentagon's standard contracting clause - and the only one currently banned from federal use.

OpenAI signed a deal with the Pentagon in 2025. The terms aren't fully public, but the company reportedly accepted some version of "any lawful use" language. Google's Gemini models operate under similar federal cloud agreements through its DoD partnerships. Microsoft's Azure AI services are wired throughout the federal government. None of those companies attempted to impose their own usage policies as a contractual override on government use.

Anthropic was the outlier - and its bet was that the safety-conscious approach was good for long-term trust and adoption. The government's response was to treat that bet as a supply chain threat.

If the government wins tomorrow, the message to the industry is stark: either accept "any lawful use" terms, or forfeit federal revenue entirely. For most AI companies, which are burning capital at scale and need enterprise customers, that's not a real choice. The practical effect would be a race to strip usage restrictions from government-facing products - or, more likely, to maintain dual architectures where a "government version" of the model operates with looser constraints than the commercial version.

That dual-architecture future has its own problems. It creates different capability profiles for the same underlying model, complicates safety evaluation, and generates exactly the kind of institutional knowledge gaps that AI governance researchers worry about. A government version of Claude with no AUP restrictions is functionally a different product than the commercial Claude - and there's no current regulatory framework requiring disclosure of which version is being used where.

Conversely, if Anthropic wins - or even secures a partial victory that gets its designation reviewed - it establishes that AI companies can resist government contractual pressure using First Amendment and APA tools. That's a meaningful precedent for an industry that is increasingly entangled with government procurement, national security programs, and defense applications.

Tomorrow's Hearing: What to Watch For

The two paths from tomorrow's hearing and their second-order consequences for the AI industry.

Judge Rita F. Lin joined the Northern District of California in 2023. She has handled several high-profile technology cases, though nothing directly in the AI-and-national-security space. Tomorrow's hearing is set for 1:30 PM Pacific in Courtroom 15 of the San Francisco federal courthouse.

Several specific questions will define the argument. First: does Judge Lin treat this as a national security deference case, or an administrative law case? If she accepts the government's framing that courts are limited in reviewing supply chain decisions, she'll likely deny the injunction without getting into the merits of whether the designation was retaliatory. If she treats it as a straightforward APA review - was the agency action arbitrary and capricious - Anthropic has more room to work.

Second: how does she read the First Amendment claim? The DOJ's argument that "refusing a contract term is not speech" has some legal support, but it also sidesteps the underlying issue - which is whether the government can punish a company for publishing safety policies by labeling those policies a procurement risk. Courts have occasionally found indirect chilling effects on speech even when the mechanism wasn't a direct restriction.

Third: does the corporate disclosure statement matter? When Anthropic filed its complaint on March 9th, it disclosed that both Google and Amazon Web Services are "interested entities" in the case. Google and Amazon are two of Anthropic's largest investors. Their presence in the disclosure adds a layer of complexity - it signals that this case has implications well beyond one AI company's federal contracts. Those investors have direct financial exposure to the outcome.

"The President and the Secretary acted well within their authority. Anthropic is not likely to succeed on the merits of any of its claims."

DOJ Opposition Brief, Conclusion, Case 3:26-cv-01996-RFL (March 17, 2026)

The DOJ brief ends with the kind of confidence you write when you think you have the law on your side. The only question is whether Judge Lin reads the precedents the same way.

The Bigger Picture: When AI Ethics Policies Meet Government Power

Step back from the legal arguments and you see something unusual happening in American tech policy. For the past decade, the criticism of AI companies was that they had too little governance - no meaningful usage policies, no ethical guardrails, no accountability for downstream harms. Anthropic was frequently cited as a counter-example: a company that took safety seriously, published transparent policies, and structured its corporate governance around long-term responsible deployment.

Now those same safety policies are being used as justification to lock the company out of the federal government. The supply chain risk designation isn't based on Claude malfunctioning, causing harm, or violating any law. It's based on Anthropic refusing to agree that the government can use Claude for literally any legal purpose - including purposes the company's own research suggests are dangerous.

The irony is thick. But it also points to a fundamental tension that has been building since AI companies started chasing federal contracts: what happens when commercial AI safety commitments conflict with government procurement requirements?

The DoD's position is that it cannot have critical systems running on AI whose vendor retains policy override authority. In a wartime scenario, the last thing military planners want is for their AI tools to refuse certain queries because a private company in San Francisco decided those queries violated its acceptable use policy. That's a genuine operational concern, not invented bureaucratic obstruction.

Anthropic's position is that without usage policies, Claude could be deployed in ways that generate real-world harms - civilian targeting systems, autonomous weapons with insufficient human oversight, mass surveillance applications. The company's argument isn't that it wants to obstruct legitimate military use. It's that "any lawful use" is too broad a standard given what's actually legal in national security contexts.

Both positions are coherent. The legal system is now being asked to choose between them.

A preliminary injunction hearing isn't the end of that question. Even if Judge Lin rules for the government tomorrow, the full case continues. Even if she grants Anthropic relief, the underlying dispute over contract terms remains unresolved. This is round one of a much longer fight - and the first case in American legal history to directly test whether an AI company's safety policy can be treated as a national security threat.

Whatever Judge Lin decides at 1:30 PM tomorrow, the ruling lands in an industry that has been watching every development. The AI companies that didn't push back on "any lawful use" will be watching to see if Anthropic's approach pays off. The AI companies that haven't yet sought federal contracts will be recalibrating their terms. And the government will be reading the ruling as either a green light to use supply chain designations as procurement leverage - or a warning that courts will look harder at that tool than the DoD expected.

Source documents: CourtListener - Case 3:26-cv-01996-RFL. All legal arguments sourced from Document 96, filed March 17, 2026, by the DOJ Civil Division. Corporate disclosure at Document 3 (March 9, 2026).