Apple's AI App Store: Siri Extensions, Gemini Distillation, and the End of Human App Review

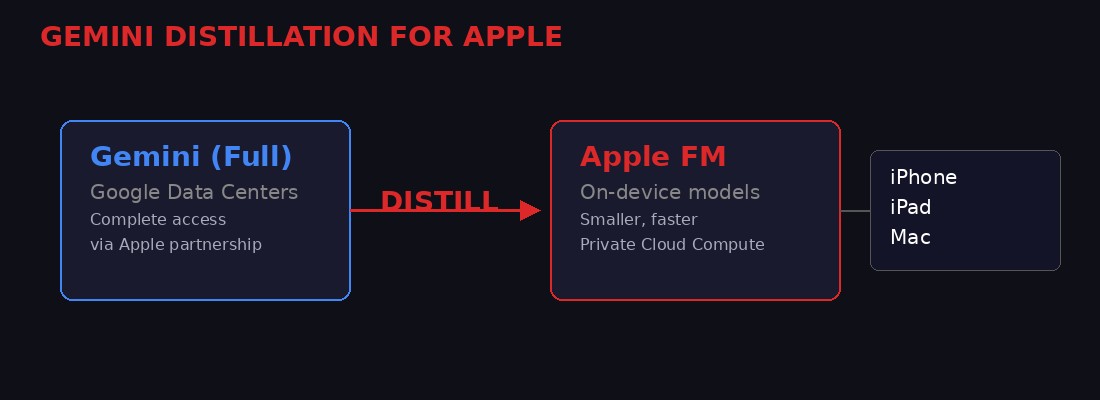

Apple is doing something it has never done before. After a decade of keeping Siri locked inside a walled garden so airtight it practically suffocated, the company is preparing to blow the doors open. iOS 27, set to be unveiled at WWDC on June 8, will introduce a system called "Extensions" that allows third-party AI chatbots - Google Gemini, Anthropic Claude, OpenAI ChatGPT, and potentially dozens more - to plug directly into Siri and operate as first-class citizens across the entire operating system.

This is not a minor feature update. This is the creation of an AI App Store.

The move comes alongside a parallel deal that gives Apple "complete access" to Google's Gemini model inside Apple's own data centers, including the right to distill Gemini into smaller, on-device models tuned specifically for Apple hardware. Meanwhile, Apple is testing a standalone Siri app that transforms the voice assistant into a full conversational agent with chat history, document analysis, and cross-app orchestration. And while all of this is happening, the original App Store - the one that launched a thousand Silicon Valley fortunes in 2008 - is buckling under the weight of AI-generated apps from vibe coders who have never written a line of Swift in their lives.

Three seismic shifts happening simultaneously. Each one would be a story on its own. Together, they represent the most significant restructuring of Apple's platform strategy since the iPhone itself.

The Extensions Play: How Siri Becomes a Platform

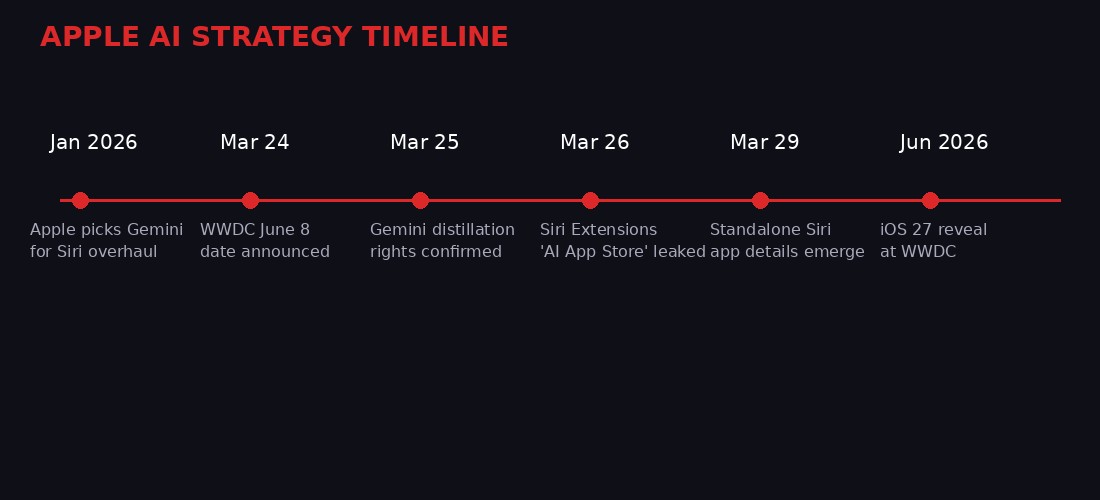

Bloomberg's Mark Gurman, whose Apple reporting track record is the gold standard for pre-announcement intelligence, dropped the Extensions bombshell on March 26. The system works like this: third-party AI chatbots available on the App Store can register as Siri Extensions. Users enable or disable them at will. When Siri receives a query that it can route to an Extension, the third-party model generates the response while Siri manages the interface layer.

Think of it as middleware. Siri becomes the skin. The brain can be swapped.
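
To make the middleware idea concrete, here is a minimal sketch of that routing pattern. It is purely illustrative: `Extension`, `AssistantRouter`, and every method name are hypothetical and do not reflect any Apple API, which has not been published. The only behavior taken from the reporting is that extensions register, users toggle them, and the assistant wraps whatever answer the selected model returns.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Optional


@dataclass
class Extension:
    """Hypothetical stand-in for a third-party chatbot registered with the assistant."""
    name: str
    handler: Callable[[str], str]  # the third-party "brain"
    enabled: bool = True           # user-controlled toggle


@dataclass
class AssistantRouter:
    """Hypothetical interface layer: owns presentation, delegates the answer."""
    extensions: Dict[str, Extension] = field(default_factory=dict)

    def register(self, ext: Extension) -> None:
        self.extensions[ext.name] = ext

    def set_enabled(self, name: str, enabled: bool) -> None:
        self.extensions[name].enabled = enabled

    def ask(self, query: str, preferred: Optional[str] = None) -> str:
        # Use the preferred extension if it exists and is enabled...
        ext = self.extensions.get(preferred) if preferred else None
        if ext is None or not ext.enabled:
            # ...otherwise fall back to the first enabled extension.
            ext = next((e for e in self.extensions.values() if e.enabled), None)
        if ext is None:
            return "[assistant] No extension enabled."
        # The assistant wraps the answer; the extension produces it.
        return f"[assistant via {ext.name}] {ext.handler(query)}"
```

In this sketch the handlers are interchangeable while the router's wrapping stays constant, which is the "skin versus brain" split in miniature.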

This represents an almost complete philosophical reversal for Apple. The company's AI strategy under former chief John Giannandrea was built around the idea that Apple would own the entire AI stack - from silicon to model to interface. Giannandrea stepped down in late 2025 after Apple Intelligence failed to match competitors on virtually every benchmark. His replacement, Vision Pro head Mike Rockwell, appears to be operating under a different doctrine: if you cannot beat them in model quality, become the platform where they all compete.

The Extensions feature will have its own dedicated section in the App Store, according to Gurman's reporting. This creates what is functionally an AI App Store embedded within the existing marketplace. Developers and AI companies can list their chatbot integrations, compete for users, and presumably operate under Apple's standard revenue-sharing model - though pricing details have not been disclosed.

The implications cascade quickly. If Claude can answer questions through Siri on an iPhone, Anthropic gains distribution to over 1.5 billion active Apple devices. If Google's Gemini runs natively through Extensions (on top of its existing partnership as the default backend), Google cements a presence Apple spent years trying to minimize. If a startup like Perplexity or Mistral can plug into Siri with a downloadable Extension, the barriers to reaching iPhone users collapse overnight.

There is a strategic logic here that goes beyond catching up to ChatGPT. Apple has always been a platform company first, a services company second, and a technology company third. The App Store generated an estimated $85 billion in revenue for Apple in 2025 through its commission structure. An AI App Store creates a new commission surface on a new category of software - one that is growing faster than any app category since social media.

But it also creates risk. If users start asking Siri questions and Gemini or Claude provide the answers, Siri becomes a dumb pipe. Apple controls the interface but not the intelligence. That is a fundamentally different power dynamic from the one Apple has maintained for sixteen years. The company has always owned the full experience. Extensions introduce a scenario where the best AI experience on an iPhone might come from a company Apple does not control.

Apple appears to be betting that controlling the distribution channel matters more than controlling the model. Given that the App Store made Apple one of the most valuable companies on earth by doing exactly that with third-party software, the bet has historical precedent. Whether it translates to AI is the trillion-dollar question.

The Gemini Marriage: Distillation Rights and What They Mean

On January 12, 2026, Apple and Google announced a multi-year partnership that would use Gemini as the foundation for Apple Foundation Models - the on-device AI that powers Apple Intelligence features including the rebuilt Siri. The joint statement was diplomatically bland: "After careful evaluation, Apple determined that Google's AI technology provides the most capable foundation for Apple Foundation Models and is excited about the innovative new experiences it will unlock for Apple users."

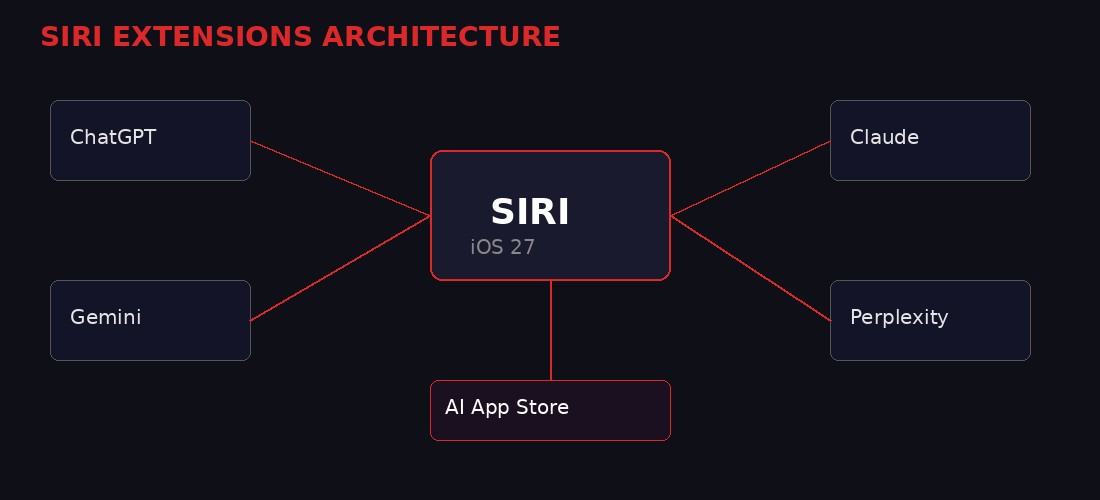

What the press release did not say, and what The Information reported on March 25, is that the deal gives Apple "complete access" to Gemini within Apple's data centers. Complete access means Apple can run the full Gemini model internally. More critically, it includes the right to use Gemini for knowledge distillation - the process of training a smaller, cheaper "student" model by having it learn from the outputs of a larger "teacher" model.
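
For readers who want to see what "learning from the outputs" looks like, the sketch below shows one common textbook formulation, soft-label distillation, in which the student is penalized for diverging from the teacher's temperature-softened output distribution. It is an assumption-laden illustration, not a description of how Apple or Google train anything: the function names, the temperature value, and the toy logits are all invented for the example.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Convert raw scores to probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(
    teacher_logits: List[float],
    student_logits: List[float],
    temperature: float = 2.0,  # illustrative value, not a recommendation
) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher "soft labels"
    q = softmax(student_logits, temperature)  # student predictions
    # Skip zero-probability teacher terms, which contribute nothing to the sum.
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return kl * temperature ** 2
```

Training a student amounts to adjusting its parameters so that this loss shrinks across many inputs; a student whose scores already track the teacher's yields a loss near zero.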

Distillation is one of the most important techniques in modern AI development. OpenAI allegedly used it to create smaller GPT models. Anthropic has used it to produce Claude variants optimized for speed. What makes Apple's distillation rights unusual is the scale and the specificity of the use case. Apple is not distilling Gemini to create a generic chatbot. Apple is distilling Gemini to create models tuned for specific Apple hardware - the A-series and M-series chips that power iPhones, iPads, and Macs.

This means Apple can take Google's best model, compress its knowledge into something that runs locally on a phone, and ship it to 1.5 billion devices without ever paying Google per-query API costs. The economics are staggering. Google effectively sold Apple the blueprint to build its own intelligence layer. In exchange, Google presumably secured a default position as the primary AI backend for Siri cloud queries, plus whatever financial terms were negotiated - likely billions annually, similar to the search deal that already pays Google an estimated $20 billion per year for default Safari placement.

The distillation angle also explains why Apple is comfortable opening Siri to third-party Extensions. If the on-device Apple Foundation Models (distilled from Gemini) handle most routine queries locally - with the speed and privacy advantages of on-device processing - then Extensions become the fallback for complex queries that require cloud-scale models. Apple gets the best of both worlds: fast, private, on-device AI for everyday use, and a marketplace of cloud AI options for heavy lifting.
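
The local-first arrangement described above can be sketched as a simple escalation rule. Everything here is hypothetical: the confidence score, the `min_confidence` threshold, and the toy models are assumptions made for illustration, since nothing has been reported about how such a handoff would actually be decided.

```python
from typing import Callable, Tuple

# Hypothetical signatures: a local model returns (answer, confidence in [0, 1]).
LocalModel = Callable[[str], Tuple[str, float]]
CloudModel = Callable[[str], str]


def answer(
    query: str,
    local: LocalModel,
    cloud: CloudModel,
    min_confidence: float = 0.7,  # assumed threshold, chosen arbitrarily
) -> Tuple[str, str]:
    """Try the on-device model first; escalate to the cloud only when unsure."""
    text, confidence = local(query)
    if confidence >= min_confidence:
        return ("on-device", text)  # query never leaves the device
    return ("cloud", cloud(query))  # fallback for harder queries


def toy_local(query: str) -> Tuple[str, float]:
    """Toy stand-in: pretends short queries are easy and long ones are hard."""
    confidence = 0.9 if len(query.split()) <= 5 else 0.3
    return (f"local answer to: {query}", confidence)


def toy_cloud(query: str) -> str:
    """Toy stand-in for a cloud-hosted extension."""
    return f"cloud answer to: {query}"
```

With these toy models, `answer("set a timer", toy_local, toy_cloud)` stays on-device, while a long multi-part request is escalated to the cloud handler.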

There is a tension here that deserves attention. Apple is simultaneously partnering deeply with Google while opening the door to Google's competitors through Extensions. This is classic Apple platform strategy - using a dominant partner for infrastructure while maintaining optionality through competition. Apple did the same thing with Samsung (chips) and Qualcomm (modems) for years, relying on partners while building in-house replacements. The distillation deal suggests Apple views Gemini as a transitional foundation, not a permanent dependency.

Google, for its part, may have calculated that embedding Gemini into Apple's foundation models creates a stickiness that Extensions cannot erode. If the base intelligence layer is Gemini-derived, every Extension is competing against a version of Google's own technology. Google gets paid whether users interact with Gemini directly or through a distilled Apple model built on Gemini's training. It is an unusually sophisticated arrangement that benefits both parties - for now.

The Siri Standalone App: From Voice Assistant to AI Agent

Apple is testing a dedicated Siri application for iPhone, iPad, and Mac, according to Gurman's March 24 report. The app gives Siri a chat-like interface similar to iMessage, with conversational threads, the ability to switch between voice and text input, document and photo analysis capabilities, and - perhaps most significantly - persistent chat history that users can search and revisit.

This is not just a UI change. It is a category redefinition. Siri has been a voice assistant since 2011. A standalone app transforms it into a conversational AI agent that competes directly with ChatGPT, Gemini, Claude, and Perplexity - not as a backend that powers one of those through Extensions, but as a primary interface in its own right.

The design details Gurman describes are telling. Apple is testing a Dynamic Island integration where Siri appears at the top of the screen with a "Search or Ask" prompt when activated. Results expand into a translucent panel. The interface can grow to support multi-turn conversations. Built-in apps will gain an "Ask Siri" toggle in menus, letting users highlight text or content and immediately query Siri about it.

The replacement of Spotlight search with Siri is particularly notable. Spotlight has been Apple's system-wide search interface since it debuted in Mac OS X Tiger in 2005. Replacing it with Siri means every search action on an Apple device - finding a file, looking up a contact, searching email - runs through an AI layer. The user might not even notice the transition. They will just find that search suddenly understands natural language, provides summaries, and connects information across apps in ways Spotlight never could.

Apple is also building Siri's ability to summarize daily news from Apple News and provide "more detailed responses sourced from the web, including summaries, bullet points and images," according to Gurman. This positions the Siri app as a direct competitor to Perplexity's AI search product and Google's own AI Overview feature. The difference is that Apple's version will be pre-installed on every iPhone on the planet.

The agentic capabilities - Siri taking actions across apps on behalf of the user - represent the most ambitious piece of the puzzle. Apple announced this work at WWDC 2024 and has delayed it repeatedly since. The March 2025 delay admission was unusually candid for a company that rarely acknowledges setbacks. If iOS 27 delivers working cross-app orchestration, it will be the first voice assistant to reliably perform multi-step tasks across different applications without requiring manual intervention at each step.

That is a big "if." Every major tech company has promised agentic AI capabilities. None have shipped them at scale in a way that works reliably enough for mainstream users. Apple's advantage is vertical integration - controlling the hardware, the operating system, and the app framework means Apple can build deeper hooks than any third-party assistant. Whether that advantage translates to actual reliability remains to be seen at WWDC.

Vibe Coding Broke the App Store

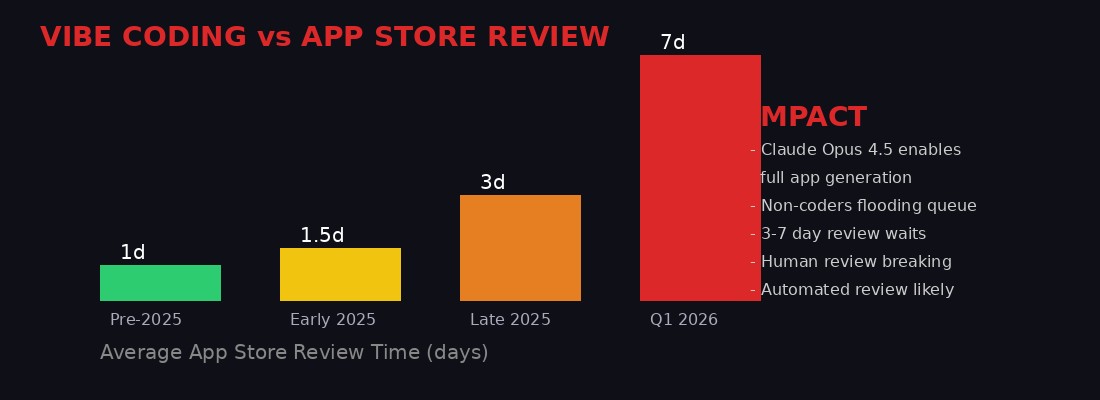

While Apple builds its AI future, its AI present is already causing problems in unexpected places. The App Store review process - the human-powered quality gate that Apple has maintained since 2008 as both a security measure and a competitive differentiator - is breaking under the pressure of vibe-coded applications.

Vibe coding, the practice of describing an app to an AI model and having it generate functional code with minimal human intervention, took off in late 2025 following the release of Anthropic's Claude Opus 4.5 and similar coding-capable models. The result has been an unprecedented flood of app submissions to the App Store from people who have never written a line of code manually.

Multiple developers, including indie developers and representatives from major companies like Twitter, have reported review times stretching from the traditional sub-24-hour turnaround to 3-7 days. Some developers have been stuck in review for over a week. For established apps that ship frequent updates - bug fixes, feature releases, security patches - this delay is not an inconvenience. It is a business-disrupting bottleneck.

The math is simple. Before vibe coding, the number of app submissions was naturally constrained by the number of humans who could write functional code. AI coding tools removed that constraint overnight. The same models that make it possible for a non-programmer to build and submit an app in an afternoon also make it possible for the same person to submit ten apps in a week. The review queue, sized for a pre-AI submission volume, cannot scale at the same rate.
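
A back-of-the-envelope model shows why a fixed-capacity queue falls behind so quickly. The numbers used with it below are made up for illustration and are not Apple's actual submission or review figures; the function names are likewise hypothetical.

```python
def backlog_after(
    days: int,
    arrivals_per_day: float,
    capacity_per_day: float,
    initial_backlog: float = 0.0,
) -> float:
    """Unreviewed submissions left after `days`, with fixed review capacity."""
    backlog = initial_backlog
    for _ in range(days):
        # Each day new submissions arrive and reviewers clear what they can;
        # the backlog can never drop below zero.
        backlog = max(0.0, backlog + arrivals_per_day - capacity_per_day)
    return backlog


def wait_days(backlog: float, capacity_per_day: float) -> float:
    """Rough wait for a new submission joining the back of the queue."""
    return backlog / capacity_per_day
```

With a hypothetical capacity of 1,000 reviews a day, 1,000 daily submissions leave no backlog. Raise arrivals to 1,500 and `backlog_after(10, 1500, 1000)` gives 5,000 unreviewed apps after ten days, or a five-day wait for the next submission, and the wait keeps growing for as long as arrivals exceed capacity.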

Former Apple executive Phil Schiller was a champion of human-led app review, pushing back against automation for years. His argument was that human reviewers catch nuances - deceptive monetization, copycat designs, policy violations dressed up in compliant packaging - that automated systems miss. That argument held when submission volumes were manageable. It collapses when the volume doubles, triples, or grows by an order of magnitude.

Two solutions are emerging in the conversation around this problem. The first is a tiered system: human review for new app submissions, automated review for updates to established apps with clean track records. This preserves the security gate for unknown developers while unblocking trusted ones. The second is a priority queue for established developers, ensuring that companies with proven histories do not get stuck behind a wall of first-time submissions from vibe coders experimenting with the App Store as a hobby.
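
Both proposals are easy to express in code, which also makes their trade-offs easier to see. The sketch below is hypothetical throughout: `Submission`, `review_track`, and `PriorityReviewQueue` are invented names, and reducing "clean track record" to a single boolean is a simplification for the example.

```python
import heapq
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class Submission:
    app: str
    is_update: bool           # update to an existing app vs. brand-new app
    clean_track_record: bool  # simplified stand-in for developer history


def review_track(sub: Submission) -> str:
    """Option 1, tiered review: automate only updates from trusted developers."""
    if sub.is_update and sub.clean_track_record:
        return "automated"
    return "human"


class PriorityReviewQueue:
    """Option 2: established developers are served first, FIFO within a tier."""

    def __init__(self) -> None:
        self._heap = []
        self._order = count()  # tie-breaker that preserves arrival order

    def push(self, sub: Submission) -> None:
        tier = 0 if sub.clean_track_record else 1  # lower tier pops first
        heapq.heappush(self._heap, (tier, next(self._order), sub))

    def pop(self) -> Submission:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)
```

Note that in the second option a newcomer only reaches the front once every established developer ahead of them has been cleared, which is the two-tier dynamic in concrete form.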

Neither solution is ideal. Automated review means some policy violations will slip through. Priority queues create a two-tier developer ecosystem where incumbents move fast and newcomers wait - which is exactly the kind of anti-competitive dynamic that regulators in the EU, South Korea, and Japan have already been pressuring Apple about.

The irony is thick. Apple is simultaneously building an AI App Store inside Siri while the original App Store struggles to handle the consequences of AI. The company that built the most successful software marketplace in history is being forced to reckon with the fact that AI-generated software may require AI-powered gatekeeping. Human review of human-written code made sense. Human review of machine-written code does not scale.

OpenAI's March of Retreat

Apple is not making these moves in a vacuum. The broader AI industry is undergoing its own reckoning, and nowhere is that more visible than at OpenAI, where March 2026 has been a month of aggressive product pruning.

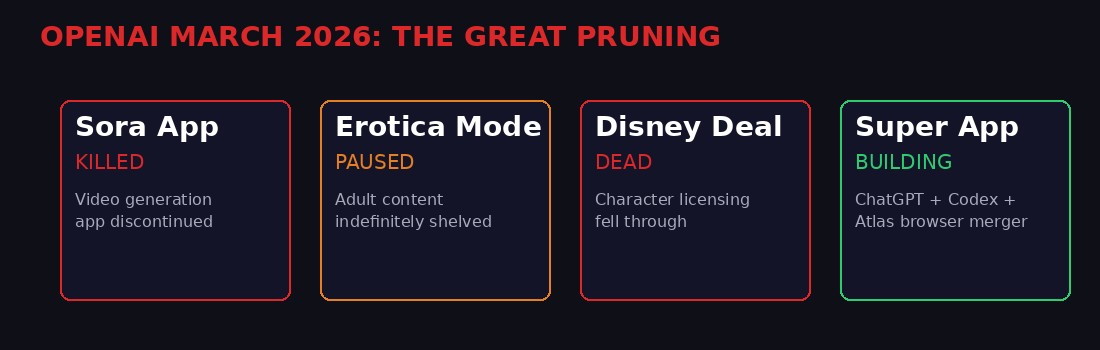

On March 24, OpenAI announced the shutdown of Sora, its AI video generation app and the company's second-ever iPhone application. Sora launched in September 2025 to considerable fanfare, offering text-to-video generation with few restrictions and a social media feed for sharing generated content. It lasted six months. The Wall Street Journal reported that OpenAI is discontinuing its video AI model efforts entirely - not just the app, but the underlying research direction.

The Sora shutdown was preceded by the indefinite pause of ChatGPT's "Erotica Mode," which CEO Sam Altman had announced in October 2025 as part of OpenAI's "treat adult users like adults" philosophy. The feature was supposed to launch in December 2025. By March, internal challenges with sexual datasets and content filtering had turned the delay into an indefinite pause, according to the Financial Times. OpenAI also saw its deal with Disney - which would have allowed users to generate video featuring licensed characters through Sora - collapse as a direct consequence of the app's discontinuation.

What OpenAI is building instead tells a clearer story about where the company sees its future. Reports from late March indicate OpenAI is developing a "super app" for macOS that would combine ChatGPT, its Codex developer tools, and the Atlas web browser into a single application. This is the opposite of the Sora strategy. Instead of expanding into adjacent categories (video, social media, adult content), OpenAI is contracting around its core competency: text-based AI intelligence deployed through a unified interface.

The relevance to Apple's strategy is direct. If OpenAI consolidates into a super app, and Apple opens Siri Extensions, OpenAI faces a choice: build a standalone experience that competes with the entire iPhone interface, or plug into Siri and accept the platform tax that comes with Extension distribution. The same choice confronts Anthropic, Perplexity, Mistral, and every other AI company that wants to reach iPhone users.

Apple is betting that most will choose distribution. And historically, Apple has been right. Developers complained about the App Store's 30% commission for years. They all stayed. The gravitational pull of 1.5 billion devices is difficult to resist, even when the terms are unfavorable. An AI App Store creates the same dynamic for AI companies: you can build your own app, or you can plug into Siri and reach every iPhone user on the planet. Most will choose the latter.

The Legal Pressure Cooker

Apple's AI App Store does not exist in a regulatory vacuum. The company faces active legal challenges to its platform practices across multiple jurisdictions, and the introduction of a new marketplace layer for AI services will almost certainly draw fresh scrutiny.

In the European Union, the Digital Markets Act (DMA) already requires Apple to allow sideloading and alternative app stores. If Apple applies its standard 30% commission to AI Extensions, EU regulators may argue this constitutes an extension of Apple's existing gatekeeper position into a new market. The European Commission has been explicit that it views AI platforms as a potential new frontier for competition enforcement.

In the United States, the Epic Games antitrust case established that Apple's App Store constitutes a market where Apple exercises significant control. An AI App Store that requires AI companies to distribute through Apple's framework, pay Apple's commission, and comply with Apple's content policies would raise the same questions in a new context. Can Apple use its device monopoly (or near-monopoly) in premium smartphones to tax AI companies for access to users?

Japan and South Korea have already passed legislation requiring Apple to allow third-party payment systems. If AI Extensions become a significant revenue category, similar legislation could emerge specifically targeting AI marketplace commissions. India, where Apple is aggressively expanding iPhone manufacturing and market share, has its own emerging tech regulation framework that could intersect with Apple's AI distribution strategy.

The Google partnership adds another layer of regulatory complexity. Google already faces antitrust scrutiny over its search deal with Apple, which the Department of Justice has argued constitutes an anti-competitive arrangement that maintains Google's search monopoly. A deepened AI partnership - where Google provides the foundational model for Apple's intelligence layer AND competes as an Extension in Apple's AI marketplace - could face similar challenges. Regulators may question whether Google's dual role as infrastructure provider and marketplace participant creates unfair advantages.

Apple's legal team is certainly aware of these risks. The Extensions system may have been designed partially as a regulatory hedge - by opening Siri to third-party AI companies, Apple can argue it is fostering competition rather than restricting it. "We are not locking users into one AI provider," Apple could tell regulators. "We are creating a marketplace where users choose." Whether regulators accept that framing depends on the commission structure, the technical requirements for Extension approval, and whether Apple's own AI (powered by distilled Gemini) receives preferential treatment in the user experience.

The music industry's quiet embrace of AI, reported by Rolling Stone this week, provides a parallel case study in how regulation lags technology. More than half of sample-based hip-hop is now created using AI-generated samples, according to producer Young Guru, but nobody wants to admit it publicly because the legal frameworks for AI-generated content remain unresolved. Apple's AI App Store will operate in a similarly ambiguous legal environment - the technology is moving faster than the rules.

The Cyber Shadow: AI as Weapon, Shield, and Spy

Apple's AI ambitions also intersect with a rapidly evolving cybersecurity landscape where AI is not just a product category but an active weapon. The Iran conflict, now in its fourth week, has become a live demonstration of how artificial intelligence reshapes both offensive and defensive cyber operations - and the implications touch every device that will run iOS 27.

Check Point Research documented a sophisticated operation where Iranian-linked hackers sent Android users fake bomb shelter apps timed to coincide with actual missile strikes. The coordination between physical attacks and digital exploitation represents what Check Point's Gil Messing called "a first" - the synchronization of kinetic and cyber warfare in real time. DigiCert has tracked nearly 5,800 cyberattacks from approximately 50 Iran-linked groups, targeting not just U.S. and Israeli companies but also networks in Bahrain, Kuwait, Qatar, and other regional states.

The AI dimension of these attacks is expanding rapidly. Pro-Iran groups are using AI to generate deepfake images of military victories that never happened, including a fabricated image of sunken U.S. warships that accumulated over 100 million views. Iranian state media has begun labeling actual war footage as fake while substituting AI-generated alternatives, according to NewsGuard. The information environment around the conflict has become so polluted with AI-generated content that distinguishing real from synthetic requires specialized tools that most users do not have.

On the defensive side, Director of National Intelligence Tulsi Gabbard told Congress that AI is "increasingly shaping cyber operations with both cyber operators and defenders using these tools to improve their speed and effectiveness." The State Department's new Bureau of Emerging Threats, opened specifically to address AI-powered threats, joins similar efforts at CISA and the NSA.

For Apple, this landscape creates both risk and opportunity. An iPhone running third-party AI Extensions through Siri introduces new attack surfaces. Each Extension represents a connection to an external AI service - a potential vector for data exfiltration, prompt injection, or the kind of coordinated phishing-plus-malware attacks that Iran demonstrated with the fake shelter app. Apple's Private Cloud Compute architecture, which processes AI queries on Apple-controlled servers rather than third-party infrastructure, is designed to mitigate this risk. But Extensions that route queries to external providers like Gemini or Claude necessarily leave Apple's security perimeter.

The security calculus adds urgency to Apple's distillation strategy. On-device models distilled from Gemini can process queries without ever leaving the phone. No network request means no interception point. No cloud server means no data center to hack. Apple's argument for why you should use its distilled models instead of a cloud-based Extension is not just speed or privacy - it is security in an era where AI-powered attacks are becoming indistinguishable from legitimate services.

The Kash Patel hack - where a pro-Iranian group breached the FBI Director's personal email and posted years of personal documents online - underscores how exposed even high-value targets remain. If the FBI Director's email is not safe, no one's is. Apple's pitch with iOS 27 is that the phone itself can be the secure AI layer - processing locally, storing locally, never exposing queries to networks where state-sponsored hackers are actively hunting.

What Comes After June 8

WWDC 2026 on June 8 will be the most consequential Apple developer conference since the iPhone SDK announcement in 2008. That event created the App Store economy. This one could create its successor.

The stakes are clear. If Extensions work as described - third-party AI seamlessly integrated into Siri across the operating system - Apple creates a distribution channel that every AI company on earth will want access to. If the distilled Gemini models deliver reliable on-device intelligence, Apple establishes a performance baseline that Extensions must exceed to justify their cloud latency. If the standalone Siri app provides a competitive conversational experience, Apple enters the consumer AI race with the biggest installed base in the industry.

The risks are equally clear. Extensions could fragment the Siri experience if different chatbots deliver inconsistent quality. Distillation could produce models that are fast but mediocre, making Apple's default AI feel like a budget option compared to cloud-scale alternatives. The standalone app could suffer from the same reliability problems that have plagued Siri for over a decade - hallucinations, misunderstood commands, actions taken incorrectly. Apple has one shot at a first impression with the rebuilt Siri. If it stumbles, the market will remember.

The App Store review crisis will not resolve itself by June. If anything, the announcement of AI-powered developer tools at WWDC will accelerate vibe coding further. Apple may need to announce changes to its review process alongside the AI features - perhaps an automated first-pass review system, a fast track for established developers, or AI-powered review tools that use the same technology Apple is deploying in Siri to evaluate the apps being submitted to the store.

The broader industry context matters too. Google has Gemini running natively on Android. OpenAI is building its super app. Anthropic is pushing Claude into enterprise workflows. Microsoft has Copilot embedded in Windows and Office. Samsung is integrating AI features at the hardware level. Apple is late to this race by any objective measure. But Apple has been late before - late to smartphones, late to tablets, late to streaming - and has a pattern of entering markets late with integrated experiences that eventually dominate.

The difference this time is that the competitors are not just other hardware companies. They are AI research labs with deep technical moats, massive compute budgets, and a head start measured in years. Apple's answer - become the platform where they all compete, while building your own intelligence layer underneath - is the most characteristically Apple response imaginable. It is also the most dangerous, because it depends on execution at a scale Apple has never attempted in AI.

June 8 is seventy days away. The leaks are accelerating. The partnerships are signed. The architecture is being built. Whether it works depends on whether Apple can deliver on the most ambitious product vision it has articulated since Steve Jobs held up the first iPhone and said, "Are you getting it?"

The answer, for once, is genuinely uncertain.
