Unbreached: Apple Lockdown Mode's Perfect Record While Government Spyware Spreads to Criminals

For nearly four years, Apple's Lockdown Mode has held an unprecedented record - zero known successful spyware attacks. Meanwhile, an exploit kit linked to the US government has leaked out of official hands and is now being wielded by Russian intelligence operatives and Chinese financial criminals. Two things can be true at once: the best consumer mobile hardening ever built is working, and the ecosystem around it is fracturing in dangerous ways.

The Record That Surprised Even Apple's Critics

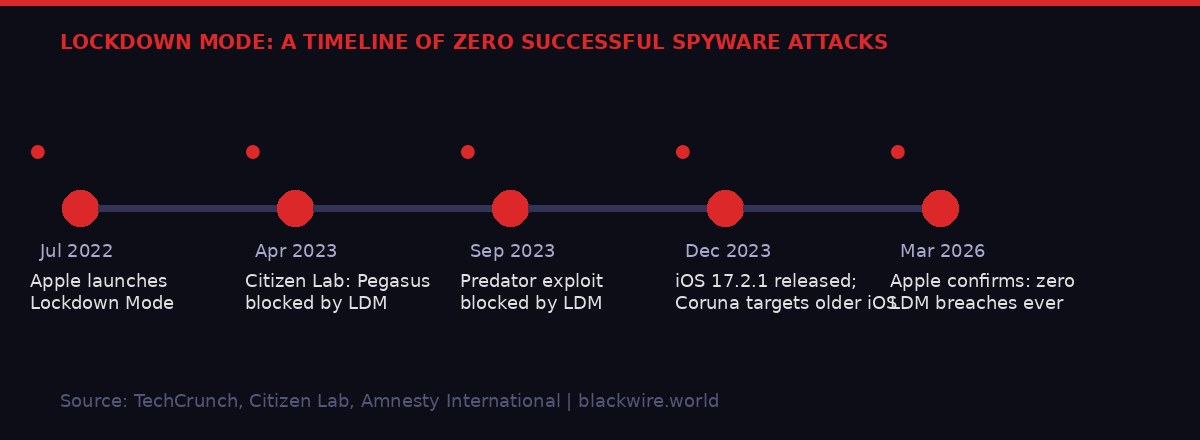

Apple launched Lockdown Mode in July 2022. The announcement was specific and unusual: this was not a general security update, not a new authentication layer, not a marketing rebrand. It was a hardening feature aimed explicitly at people who had reason to believe governments might want to break into their phones.

The target demographic was narrow. Journalists in authoritarian countries. Human rights lawyers. Opposition politicians. Intelligence officers operating in hostile environments. Executives carrying genuinely sensitive corporate secrets. For most iPhone users, Lockdown Mode would never matter. For a small slice, it could mean the difference between a compromised device and a secure one.

Nearly four years later, Apple spokesperson Sarah O'Rourke delivered a statement that amounted to a quiet milestone: "We are not aware of any successful mercenary spyware attacks against a Lockdown Mode-enabled Apple device," she told TechCrunch on Friday, March 27, 2026.

That single sentence carries significant weight. Apple is not an organization prone to bold product claims. It is legally cautious, communications-disciplined, and rarely comments on security specifics with anything approaching certainty. For it to state plainly that it knows of no successful Lockdown Mode bypass - wording that stops short of claiming none exists, since it can't prove a negative - represents a meaningful commitment.

"We have not seen any evidence of an iPhone being successfully compromised by mercenary spyware where Lockdown Mode was enabled at the time of the attack." - Donncha O Cearbhaill, Head of Security Lab, Amnesty International

Amnesty International's security lab, which has documented dozens of spyware attacks against journalists and activists over the past decade, independently backed Apple's position. Citizen Lab at the University of Toronto - the other major academic body tracking government spyware - has not published a single confirmed Lockdown Mode bypass in any of its research.

Two separate incidents made the case even cleaner. In April 2023, Citizen Lab researchers publicly confirmed that Lockdown Mode had actively blocked a Pegasus attack - the NSO Group's flagship spyware tool, generally considered among the most sophisticated iOS exploits ever deployed commercially. Then in September 2023, they confirmed a second block, this time against Predator spyware made by Intellexa. Not neutralized after infection. Blocked. The attack chain simply terminated when it detected the security posture.

How Lockdown Mode Actually Works - and Why It Holds

Most security features try to detect attacks after they begin. Lockdown Mode takes a different approach: it dramatically reduces the attack surface before anything happens. The logic is architectural. A spyware developer cannot exploit a feature that doesn't exist.

Patrick Wardle, an Apple cybersecurity expert who has also been publicly critical of Apple's security culture when warranted, called it "one of the most aggressive consumer-facing hardening features ever shipped." That characterization matters because Wardle is not an Apple apologist - he has spent years documenting macOS and iOS vulnerabilities for a living.

The specific mechanisms explain why the protection is so robust. Lockdown Mode blocks most message attachment types - one of the most common spyware delivery vectors. It restricts JavaScript features in WebKit, the browser engine that most zero-click exploit chains against iPhones target. It disables certain FaceTime features, wired connections to unknown devices, and configuration profiles that attackers might use to establish persistent access.

"It kills entire delivery mechanisms/exploit classes. It blocks most message attachment types, restricts WebKit features. This is really a huge reduction in remotely reachable attack surface, especially for zero-click exploit chains." - Patrick Wardle, Apple cybersecurity expert

The term "zero-click" is critical here. Traditional spyware required the target to click a malicious link or open a malicious file. By 2019, the most sophisticated operators had moved beyond that. Zero-click exploits can compromise a device via an incoming text message, an iMessage attachment, or even a phone call - without the target interacting at all. These attacks are devastating precisely because human vigilance cannot stop them.

Lockdown Mode attacks zero-click exploits at the structural level. By restricting the features those attack chains depend on, it forces spyware developers to find entirely new, more complex, and more expensive routes. And crucially, a recent Google finding shows that at least one exploit kit - the Coruna toolkit - would abort its infection attempt if it detected that Lockdown Mode was active. Not because it couldn't proceed. Because proceeding risked detection.

That behavioral detail reveals something important about the economics of spyware development. These tools are expensive. A working zero-click iOS exploit can cost millions of dollars to develop and maintain. Operators do not want to burn them on targets who might expose the technique. Lockdown Mode, even if theoretically bypassable, changes the risk calculus for attackers in ways that effectively protect users.

What Lockdown Mode restricts:

- Most message attachment types
- Certain WebKit JavaScript features
- Wired connections to unknown computers
- FaceTime calls from unknown contacts
- Mobile Device Management configuration profiles
- 2G network connections
- Link previews in Messages
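To make the surface-reduction idea concrete, here is a minimal toy model in Python. It is not Apple's implementation: the feature names, the `Device` class, and `chain_succeeds` are all hypothetical, chosen only to mirror the restrictions listed above. The point it illustrates is that a multi-stage exploit chain fails as soon as one of the features it depends on is no longer reachable.

```python
from dataclasses import dataclass, field

# Hypothetical feature names, loosely mirroring the restrictions listed above.
# This is a toy model of attack-surface reduction, not Apple's implementation.
ALL_FEATURES = frozenset({
    "message_attachments",
    "webkit_js_features",
    "wired_accessories",
    "facetime_unknown_callers",
    "config_profiles",
    "2g_network",
    "link_previews",
    "plain_text_messages",
})

# Features the toy "lockdown" profile switches off entirely.
LOCKDOWN_DISABLED = ALL_FEATURES - {"plain_text_messages"}


@dataclass
class Device:
    lockdown: bool = False
    handled: list = field(default_factory=list)

    def reachable_features(self):
        """Return the set of features an inbound payload can still reach."""
        if self.lockdown:
            return ALL_FEATURES - LOCKDOWN_DISABLED
        return ALL_FEATURES

    def receive(self, feature, payload):
        """Dispatch a payload only if its target feature is still enabled."""
        if feature not in self.reachable_features():
            return False  # dropped before any parsing code would run
        self.handled.append((feature, payload))
        return True


def chain_succeeds(device, chain):
    """A chain works only if every stage can reach the feature it needs."""
    return all(device.receive(feature, b"stage") for feature in chain)


# A hypothetical two-stage chain: attachment delivery, then a WebKit stage.
chain = ["message_attachments", "webkit_js_features"]
print(chain_succeeds(Device(lockdown=False), chain))  # True
print(chain_succeeds(Device(lockdown=True), chain))   # False
```

Nothing in the sketch tries to recognize a malicious payload. The hardened device simply has fewer entry points, which is the design choice the article describes.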

The Coruna Leak: When Government Weapons Go Criminal

The same week Apple confirmed its spyware-free record, a separate story surfaced that should have been bigger news: a powerful suite of iOS hacking tools, linked by security researchers to the US government, has proliferated beyond official use and is now being deployed by Russian intelligence and Chinese financial criminals.

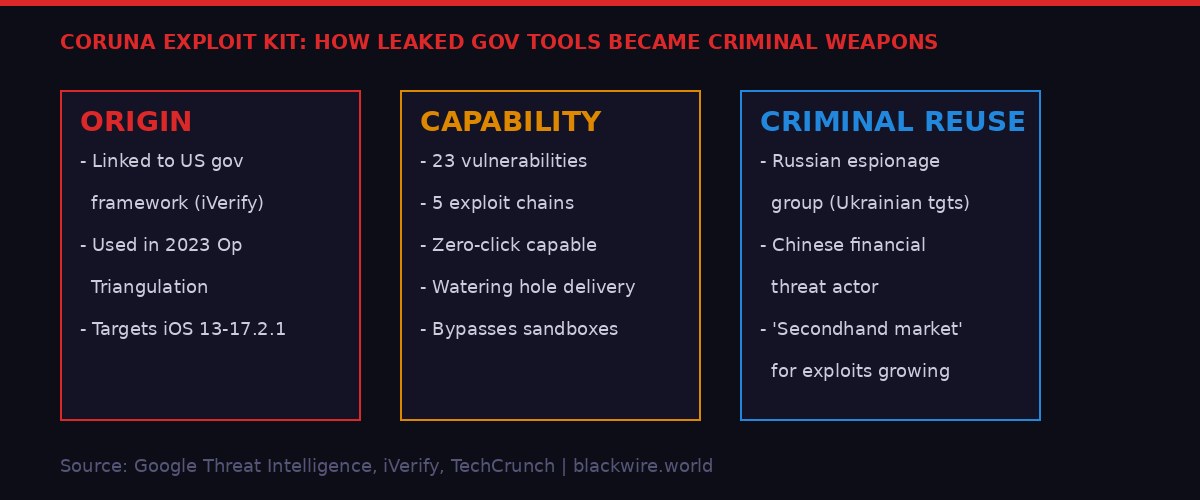

Google's Threat Intelligence team first identified the exploit kit, internally dubbed Coruna, in February 2025. At that point, it appeared in exactly the context you'd expect from a government surveillance tool: a surveillance vendor was using it to hack a target on behalf of a paying government customer. Standard operating procedure for the mercenary spyware industry.

Then it reappeared, in a different context entirely. Months later, Google researchers found the same Coruna exploit kit in use by a Russian espionage group, targeting Ukrainian users in a broad-scale campaign. Then it surfaced again, this time in the hands of a financially motivated threat actor based in China, pursuing criminal objectives rather than intelligence collection.

The exploit kit is technically formidable. It can compromise iPhones through a watering hole attack - simply visiting a malicious website is sufficient. It chains together 23 separate vulnerabilities across five separate exploit paths. It works against devices running iOS 13 through iOS 17.2.1, a version released in December 2023. Any unpatched iPhone from roughly 2019 to late 2023 is potentially vulnerable.
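For readers triaging a fleet of devices, the reported range can be checked mechanically. The sketch below assumes only what the article states (iOS 13 through iOS 17.2.1, inclusive); the function names are hypothetical, and falling inside the range does not by itself prove a device is exploitable.

```python
def parse_version(text):
    """Turn a dotted version such as '17.2.1' into a tuple of ints."""
    return tuple(int(part) for part in text.split("."))


def pad(version, length=3):
    """Pad with zeros so that (17, 2) and (17, 2, 0) compare as equal."""
    return version + (0,) * (length - len(version))


# Range reported in the article: iOS 13 through iOS 17.2.1, inclusive.
FIRST_AFFECTED = pad(parse_version("13"))
LAST_AFFECTED = pad(parse_version("17.2.1"))


def in_reported_range(ios_version):
    """True if the version falls inside the range the article reports."""
    version = pad(parse_version(ios_version))
    return FIRST_AFFECTED <= version <= LAST_AFFECTED


for candidate in ["12.5.7", "13", "16.7", "17.2.1", "17.3"]:
    print(candidate, in_reported_range(candidate))
# 12.5.7 False
# 13 True
# 16.7 True
# 17.2.1 True
# 17.3 False
```

Comparing integer tuples rather than strings avoids the classic mistake of treating "17.10" as older than "17.2".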

Mobile security company iVerify, which obtained and reverse-engineered the Coruna tools, linked components of the kit to a 2023 campaign called Operation Triangulation, in which a threat actor targeted iPhones belonging to employees of Russian cybersecurity firm Kaspersky. Russia's Federal Security Service (FSB) attributed those hacks to the US government.

iVerify's conclusion was direct: "While iVerify has some evidence that this tool is a leaked US government framework, that shouldn't overshadow the knowledge that these tools will find their way into the wild and will be used unscrupulously by bad actors. The more widespread the use, the more certain a leak will occur."

This is not a hypothetical risk. The precedent is well-established. In 2017, the NSA's Windows exploitation toolkit - including the EternalBlue exploit - was stolen and published. Within weeks, cybercriminals were using it. The resulting WannaCry ransomware attack, attributed to North Korea, devastated hospitals, telecoms, and logistics companies across more than 150 countries. A tool built for targeted intelligence collection became a global infrastructure weapon.

The question with Coruna is not whether the tools would escape. They have. The question is how the proliferation happened - whether through a compromised contractor, a disgruntled insider, an adversary's intelligence operation, or something more systemic - and whether the agencies involved are prepared to acknowledge it.

The Intellexa Conviction and What It Means for State-Sponsored Spyware

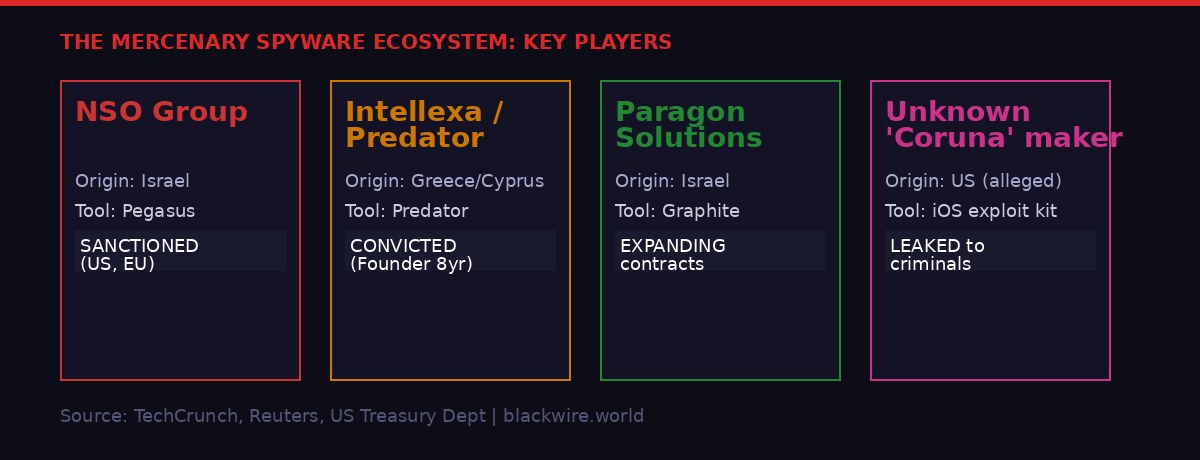

The same week brought another significant development in the mercenary spyware ecosystem: Tal Dilian, founder of Intellexa and the man behind Predator spyware, is planning to appeal his Greek conviction - and his public statements are pointing fingers directly at the Greek government.

Dilian was convicted in February 2026 on charges related to the mass wiretapping of Greek political figures, military officials, and journalists using Intellexa's own Predator tool. The scandal, sometimes called "Greek Watergate," resulted in the resignations of the head of Greece's national intelligence service and a senior aide to Prime Minister Kyriakos Mitsotakis. Dilian was sentenced to eight years in prison.

What makes his appeal announcement notable is not the legal move itself - convicted executives appeal convictions regularly - but the framing. "I believe a conviction without evidence is not justice, it could be part of a cover-up and even a crime," Dilian told Reuters. He said he was willing to share evidence with national and international regulators.

The implication is plain: that the Greek government was the actual customer directing the hacks, that Intellexa was the tool rather than the decision-maker, and that Dilian is being made the fall guy for a political scandal that should extend to elected officials. Whether that case holds in court is a separate question. But his willingness to frame it that way publicly represents the most direct challenge yet to the official Greek position that the surveillance was an Intellexa problem, not a government problem.

Surveillance industry dynamics are such that Dilian's claim is structurally plausible regardless of its factual accuracy. Companies like Intellexa, NSO Group, and Paragon Solutions do not operate autonomously. Their tools are sold to governments. Governments direct their use. The companies provide technical capability; the intelligence agencies provide targeting decisions. When something goes wrong - when a journalist is hacked, when a politician is surveilled, when a democratic country turns these tools on its own citizens - the companies take the legal exposure while the governments maintain institutional deniability.

That model is under increasing stress. The US imposed sanctions on Dilian in 2024, putting him off-limits for anyone doing business in jurisdictions that respect Treasury designations. NSO Group has been blacklisted and has fought cases in US courts for years. Greece's own courts convicted an Intellexa executive. The legal perimeter around the industry is tightening - but the tools themselves are not becoming less effective or less available.

The Coruna situation illustrates what happens when that tension reaches a breaking point. Government-developed or government-acquired tools do not stay contained. They proliferate through contractors, through adversarial intelligence operations, through the secondhand exploit market that Google researchers now explicitly identify as an emerging threat vector. The question is not whether leaked government cyberweapons will be used against civilians. They already are.

The EU Commission Breach: Cloud Infrastructure as a Target

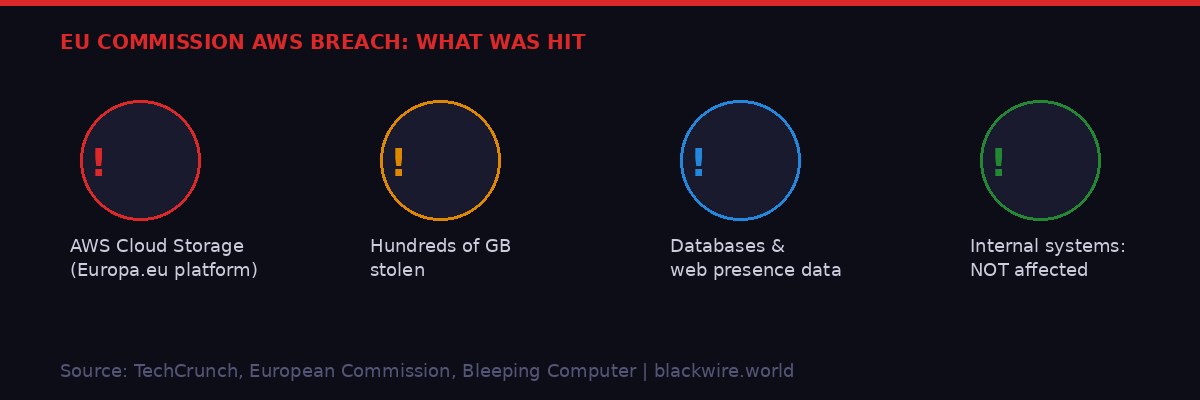

On the same day Apple confirmed Lockdown Mode's zero-breach record, the European Commission confirmed it had been the victim of a cyberattack. The target was not its internal network, its classified communications, or its diplomatic systems. It was the Commission's cloud storage: specifically, data hosted on Amazon Web Services that powers the Europa.eu public web platform.

European Commission spokesperson Nika Blazevic confirmed to TechCrunch that "the Commission discovered a cyber-attack, which affected part of our cloud infrastructure," adding that the breach "affected its cloud infrastructure hosting the Commission's web presence on the Europa.eu platform." The Commission said internal systems were not affected and that the attack had been contained.

Bleeping Computer, which broke the story, reported that hackers stole hundreds of gigabytes of data including multiple databases from the Commission's AWS account. The attackers provided screenshots as evidence of their access. The Commission has not confirmed what data was stolen or who was responsible.

The framing of "web presence only" should not be dismissed as reassuring. Public-facing infrastructure databases can contain registration data, contact forms, submitted documents, system credentials stored carelessly in configuration files, API keys linking to deeper systems, and metadata that helps attackers map the broader network. The EU Commission handles policy documents, regulatory filings, and correspondence that could be valuable for intelligence services or commercial espionage operations alike.
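As an illustration of why carelessly stored credentials matter, the following sketch scans configuration text for strings that look like secrets. It is a heuristic under stated assumptions, not a description of what was in the Commission's data: the rule names and sample file are hypothetical, the sample key is the placeholder value AWS uses in its own documentation, and real secret scanners rely on much larger rule sets.

```python
import re

# Heuristic patterns only; real secret scanners use far larger rule sets.
PATTERNS = {
    # AWS access key IDs commonly start with "AKIA" plus 16 more characters.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Assignments whose setting name suggests a secret value.
    "generic_secret": re.compile(
        r"(api[_-]?key|secret|password|token)\w*\s*[:=]\s*\S+",
        re.IGNORECASE,
    ),
}


def find_secrets(text):
    """Return (line_number, rule_name) pairs for lines that look risky."""
    hits = []
    for number, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                hits.append((number, name))
    return hits


# Hypothetical configuration file contents, for demonstration only.
sample = """\
site_name = europa-demo
aws_access_key_id = AKIAIOSFODNN7EXAMPLE
db_password = example-only
"""

print(find_secrets(sample))
# [(2, 'aws_access_key_id'), (3, 'generic_secret')]
```

An attacker holding a stolen database dump can run the same kind of search, which is why "web presence only" data can still open a path to deeper systems.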

This breach is also a case study in the risks of cloud monoculture. AWS is effectively the default backbone for a significant portion of European government and enterprise cloud infrastructure. That centralization creates operational efficiency but also systemic risk: a breach at the cloud layer is not a breach of one system but potentially of many institutions sharing the same provider, the same security assumptions, and in some cases the same misconfigurations.

The timing matters for another reason. The EU is currently finalizing its Cyber Resilience Act and NIS2 directive implementation - two pieces of legislation designed to impose minimum cybersecurity standards on critical infrastructure operators across member states. The Commission being breached during that policy process is, at minimum, embarrassing. It also raises the question of whether the Commission's own cloud security posture meets the standards it is legislating for others.

Physical Intelligence's $11 Billion Robot Brain Bet

Not all this week's big tech news was about security failures. Physical Intelligence, the San Francisco-based robotics AI startup, is reportedly in talks to raise approximately $1 billion in new funding at a valuation exceeding $11 billion - effectively doubling its $5.6 billion valuation from just four months ago.

The deal would be led by Founders Fund, with Lightspeed Venture Partners also in discussions alongside returning backers Thrive Capital and Lux Capital, per Bloomberg. That investor roster reads like a who's-who of high-risk, high-conviction Silicon Valley capital - the kind of investors who have the patience and portfolio construction to absorb a multi-year research bet.

Physical Intelligence's core ambition is to build what co-founder Sergey Levine described as "ChatGPT, but for robots" - a general-purpose AI model that can control a physical robot to perform a wide variety of tasks without being trained specifically for each one. The analogy is intentionally broad. GPT models can write poetry and code, analyze images, and summarize documents without being fine-tuned for each task. Physical Intelligence wants to build the equivalent for physical manipulation: fold laundry, peel vegetables, pack boxes, assemble components, all from a single foundational model.

The company employs roughly 80 people and has no timeline for commercialization. Another co-founder, Lachy Groom, was explicit with TechCrunch in January that there are no constraints on capital deployment: "There's no limit to how much money we can really put to work. There's always more compute you can throw at the problem."

That posture - research-first, commercialization-later, compute-unlimited - is only viable with investors who are betting on a decade-long payoff. The $11 billion valuation is not based on revenue. It is based on the possibility that the first company to crack general robotic manipulation will own a market that dwarfs anything currently visible.

SK Hynix, RAMmageddon, and the AI Memory Bottleneck Nobody Is Talking Enough About

Beneath all the model releases and AI product announcements, there is a hardware constraint that is quietly limiting the entire AI industry: memory. Specifically, high-bandwidth memory, or HBM - the specialized DRAM architecture that sits directly on or near AI accelerator chips and feeds them data fast enough to keep GPU compute utilized.

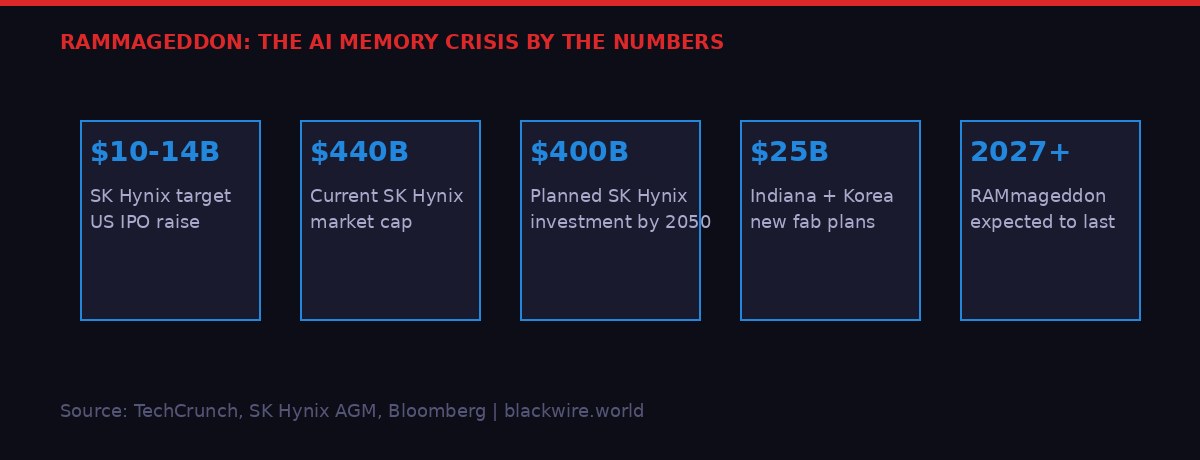

SK Hynix, the South Korean semiconductor giant that controls a dominant share of HBM production and supplies the memory stacks in Nvidia's H100 and H200 accelerators, is planning a US stock market listing that could raise $10 to $14 billion. The company filed a Form F-1 confidentially this week and is targeting the second half of 2026 for the actual IPO.

The valuation argument is geographic. SK Hynix has a market cap of roughly $440 billion on the Korean KOSPI exchange, but its valuation multiples trail comparable US-listed semiconductor firms by a measurable margin. The thesis is that a US listing - potentially structured as American Depositary Receipts to comply with Korean holding company rules requiring SK Square to maintain a 20% stake - would allow US institutional and retail investors to buy the stock more easily, increasing demand and reducing the valuation discount.

There is precedent. TSMC has historically traded at a premium in its US-listed shares versus its domestic Taiwan listing during periods of strong AI demand. If the same dynamic applies to SK Hynix, the IPO could raise substantially more capital than the headline estimates while simultaneously boosting the company's overall market valuation.

Why does any of this matter beyond semiconductor investors? Because SK Hynix's capital position directly determines how quickly HBM supply expands - and HBM supply directly determines how many AI accelerators can be built. The company has announced plans for $25 billion in new facilities in South Korea and Indiana, a $7.9 billion deal to acquire advanced EUV lithography scanners from ASML by 2027, and an extraordinarily ambitious $400 billion investment roadmap through 2050 centered on a semiconductor cluster in Yongin.

All of that requires capital. The US IPO is not a financial engineering exercise. It is the funding mechanism for the hardware layer that the entire AI industry depends on. Nature magazine projects the HBM shortage - dubbed "RAMmageddon" in hardware circles - will persist through at least 2027 without significant new supply. Google's announcement this week of TurboQuant, an AI memory compression algorithm, represents a software-side workaround, but not a permanent solution to the fundamental supply constraint.

The ripple effects from SK Hynix's filing are already visible. Artisan Partners, a major Samsung Electronics shareholder, publicly called for Samsung to consider a similar US ADR listing, citing valuation parity concerns. If both companies list in the US, it would represent a significant shift in where semiconductor investment capital concentrates - and potentially accelerate the timeline for resolving the memory bottleneck that is constraining AI development across the industry.

The Week's Larger Pattern: Tools Without Controls

Taken together, this week's technology news traces a single recurring theme: powerful tools - whether cryptographic, military, or financial - are escaping the control structures designed to contain them.

Apple's Lockdown Mode works because it imposes constraints on the phone's own capabilities. It doesn't try to detect malicious code; it removes the surface that malicious code depends on. That design philosophy - reduce the attack surface rather than trying to out-smart attackers - is proving uniquely durable against an adversary class with essentially unlimited development budgets and nation-state resources.

The Coruna leak illustrates the inverse problem. When you build powerful offensive tools and deploy them in the world, you lose control of them. The NSA's EternalBlue became WannaCry. Now US-linked iOS exploit kits are in the hands of Russian intelligence and Chinese criminals. The tools themselves don't care about their creator's intentions - they function for whoever holds them.

The Intellexa conviction, the EU Commission breach, and even the Physical Intelligence fundraise all fit the same frame. Spyware companies tell themselves they only sell to responsible governments, until a Greek court establishes otherwise. The EU Commission deploys cloud infrastructure with the expectation of security, until a hacker extracts hundreds of gigabytes and posts screenshots. A robotics company raises roughly $1 billion at an $11 billion valuation to build general-purpose physical AI, betting that the tool itself will find productive uses before problematic ones.

Apple Lockdown Mode's four-year perfect record is genuinely remarkable. But it is remarkable in part because it is the exception - a defensive technology that works as well as its designers intended, protecting the specific population it was designed for, without obvious failure modes. In a week filled with examples of tools escaping their design constraints, that's worth noting plainly.

For anyone in a high-risk category - journalists, activists, politicians, executives carrying genuinely sensitive information - enabling Lockdown Mode on Apple devices takes about thirty seconds and costs nothing. The security researchers are unanimous that the tradeoffs are worth it. The question is whether that message is reaching the people who need to hear it.

Sources

- TechCrunch - Apple Lockdown Mode statement (Lorenzo Franceschi-Bicchierai, March 27, 2026)
- TechCrunch - Coruna exploit kit report (Zack Whittaker, March 3, 2026)
- Google Cloud Security Blog - Coruna: A Powerful iOS Exploit Kit (March 2026)
- iVerify - First Known Mass iOS Attack (March 2026)
- TechCrunch - Intellexa conviction appeal (Zack Whittaker, March 25, 2026)
- Reuters - Tal Dilian appeal statement (March 24, 2026)
- TechCrunch - EU Commission cyberattack confirmation (Zack Whittaker, March 27, 2026)
- Bleeping Computer - EU Commission AWS breach (March 27, 2026)
- TechCrunch - Physical Intelligence fundraise (Connie Loizos, March 27, 2026)
- TechCrunch - SK Hynix US IPO analysis (Kate Park, March 27, 2026)
- Citizen Lab - Lockdown Mode blocking Pegasus (April 2023)
- Citizen Lab - Lockdown Mode blocking Predator (September 2023)
- Nature - RAMmageddon forecast (March 2026)