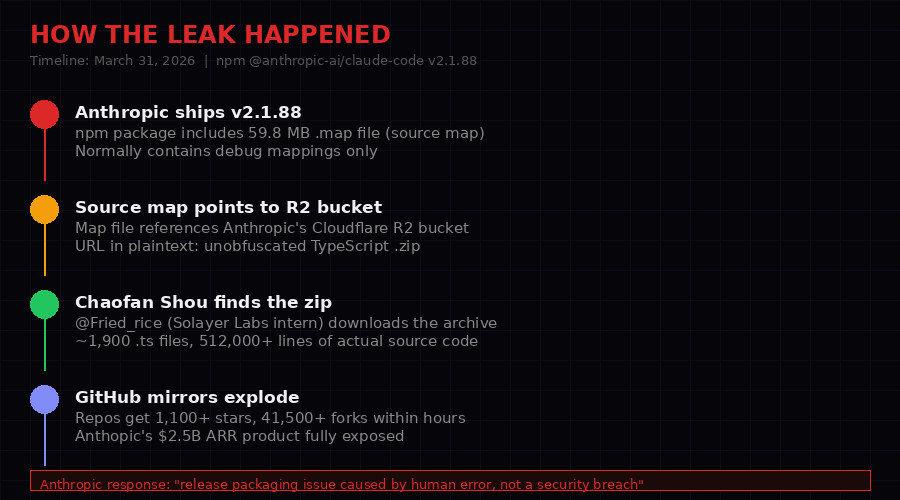

On March 31, 2026, a software intern at a Solana staking startup stumbled onto something no one at Anthropic intended anyone to see: the complete, unobfuscated TypeScript source code of Claude Code, the AI coding assistant generating an estimated $2.5 billion in annual recurring revenue. The discovery took less than ten minutes. The fallout is still unfolding.

The leak exposed roughly 1,900 TypeScript files - the complete engine of a $2.5B product. (Unsplash)

What Chaofan Shou found was not a database breach, a server exploit, or a sophisticated supply chain attack. It was a packaging mistake so simple it borders on comedy: Anthropic shipped a development build artifact - a 59.8 megabyte source map file - inside the public npm package @anthropic-ai/claude-code version 2.1.88. That source map contained a URL pointing to an unprotected Cloudflare R2 bucket. That bucket contained a zip archive of the complete TypeScript source code.

Anyone who downloaded the npm package could download the source. The file had been sitting there, publicly accessible, until Shou noticed it and told the world.

GitHub mirrors appeared within hours. One repo, Kuberwastaken/claude-code, accumulated 1,100+ stars and 41,500+ forks before Anthropic could issue a statement. The company's official response: "A release packaging issue caused by human error, not a security breach."

The response was technically accurate. It was also irrelevant. The code was already everywhere, and what it contained was far more interesting than the leak itself.

01 / HOW IT HAPPENED

Source maps are standard debug tools. Publishing one that points to your unprotected proprietary source is a different matter. (Pexels)

The leak chain: one source map file, one public R2 bucket URL, one download. Four steps to expose a $2.5B product.

Source maps are a standard tool in modern JavaScript development. When you minify or transpile JavaScript for production, source maps allow debuggers to trace errors back to the original, readable source. They are generated during build time and should never make it into production packages - or if they do, the URLs they reference should point to internal, authenticated resources.

Anthropic shipped a source map that pointed to a public Cloudflare R2 bucket. The bucket was not authenticated. The URL was embedded in plaintext. Any developer who inspected the contents of the npm package would find it immediately.

Chaofan Shou (@shoucccc, also known as @Fried_rice), an intern at Solayer Labs, did exactly that. He found the .map file, followed the URL, downloaded the zip, and posted about it. The zip contained approximately 1,900 TypeScript files totaling over 512,000 lines of source code - around 40 built-in tools, approximately 50 slash commands, and every internal system that makes Claude Code function.

The leak was confirmed by multiple independent researchers within hours. VentureBeat, The Register, DEV.to, and researchers like Alex Kim (alex000kim.com) all published detailed analyses within the same day.

The size of the error: Claude Code generates an estimated $2.5 billion in ARR, according to VentureBeat, out of Anthropic's total $19 billion run-rate. A packaging mistake in a CI/CD pipeline exposed the complete technical implementation of the company's highest-revenue product. The R2 bucket URL was live and accessible for an unknown period before the leak was discovered.

Contents map: the full scope of what was exposed - tools, commands, hidden features, internal systems.

02 / THE $2.5 BILLION PRODUCT EXPOSED

Claude Code's ARR alone represents 13% of Anthropic's total $19B run-rate. This was not a minor product's source code. (Unsplash)

Claude Code is not a simple chatbot wrapper. The leaked source reveals a system of remarkable complexity: a multi-agent orchestration engine, a persistent memory framework, a suite of security and anti-competitive features, and an internal toolchain for managing model behavior at scale.

At $2.5 billion ARR, it is Anthropic's most commercially successful product. Its source code represents years of proprietary engineering, internal research decisions, and competitive strategies that Anthropic spent significant capital developing. All of it is now in 41,500 GitHub forks.

The scale matters. This is not a startup's codebase. This is the internal architecture of the most commercially significant AI coding assistant in the market - a product competing directly with GitHub Copilot, Cursor, and the various OpenAI developer tools. Every architectural decision, every internal tradeoff, every competitive hedge is now public knowledge.

What the researchers found inside is worth examining in detail. Not because the code itself is catastrophically insecure - it isn't, largely - but because it reveals how Anthropic thinks about competitive strategy, user data, transparency, and product architecture in ways that deserve public scrutiny.

03 / ANTI-DISTILLATION: POISONING COMPETITOR TRAINING PIPELINES

Anthropic built a system to degrade the quality of any competitor who tries to train on Claude Code's API traffic. The source code shows it in full detail. (Pexels)

How the anti-distillation system works - and why it's easily bypassed now that the source is public.

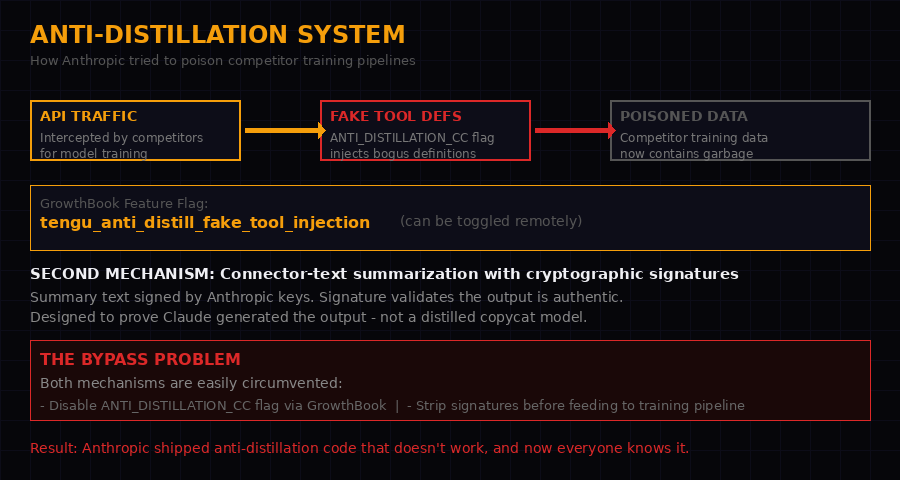

The most strategically significant finding in the leaked code is a system designed to degrade the quality of any competitor that attempts to train a model on Claude Code's API traffic.

The mechanism is controlled by a feature flag called ANTI_DISTILLATION_CC and a GrowthBook flag named tengu_anti_distill_fake_tool_injection. When active, the system injects fake tool definitions into API responses. A competitor recording Claude Code's outputs to build a training dataset would receive a mix of real and fabricated tool definitions. Their resulting model would learn incorrect behavior, degrading its performance on coding tasks.

This is sometimes called "data poisoning" in the adversarial ML literature, applied here not to attack a deployed model but to corrupt training pipelines before they produce one. The approach is clever in theory. The execution reveals a fundamental problem.

A second mechanism exists alongside the fake tool injection: connector-text summarization with cryptographic signatures. When Claude Code generates certain outputs, those outputs are signed with Anthropic's keys. The signature serves as proof that the output is genuine - generated by the real Claude, not by a distilled competitor model. The idea is to make Claude Code outputs verifiable, complicating the use of intercepted outputs for training.

The bypass problem: Both mechanisms are trivially circumvented now that their existence and implementation are public. To defeat the fake tool injection, strip or filter API responses before feeding them to a training pipeline - the fake definitions are identifiable by structure. To defeat the cryptographic signatures, simply don't include them in training data. Neither mechanism survives an adversary who knows it exists. They existed as deterrents whose effectiveness depended entirely on secrecy. That secrecy is gone.

What the anti-distillation system reveals, beyond its technical limitations, is Anthropic's state of mind regarding competitive dynamics in the AI coding market. The company is not just building a good product. It is actively engineering obstacles for competitors who would copy it. The R&D budget was spent not just on product capability but on competitive defense.

The irony is dense: the same packaging error that made the anti-distillation system public also handed any competitor the ability to bypass it with perfect precision. The defensive infrastructure and its defeat conditions shipped together in one 59.8 MB source map.

04 / KAIROS: THE AUTONOMOUS DAEMON NOBODY TALKED ABOUT

KAIROS appears 150+ times in the leaked source. It represents a fundamental architectural shift - from coding assistant to always-on agent. (Unsplash)

The autoDream process: memory consolidation running in the background while you're away from your keyboard.

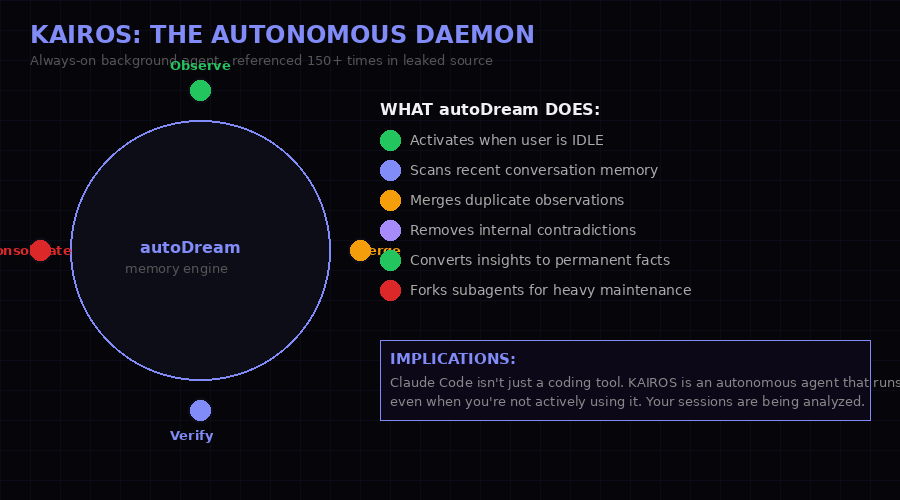

If the anti-distillation system is the most strategically revealing finding, KAIROS is the most architecturally significant. The feature flag appears more than 150 times across the leaked codebase. Its behavior, pieced together from the source, describes an always-on background agent that operates independently of active user sessions.

The centerpiece of KAIROS is a process called autoDream. When a user is idle - not actively interacting with Claude Code - autoDream activates and performs memory consolidation. It scans recent conversation transcripts, merges duplicate observations, removes internal contradictions in the agent's knowledge base, and converts accumulated insights into permanent factual entries in the memory system. For computationally heavy maintenance tasks, it forks subagents to run in parallel.

This is not a background sync process. This is an autonomous cognitive process running on your development environment after you've stepped away from your keyboard.

The implications are significant. Claude Code, as most users understand it, is a coding assistant you invoke. KAIROS transforms it into something closer to a persistent agent that maintains its own state, processes your session history autonomously, and builds a continuously evolving model of your codebase, preferences, and working patterns - without your direct instruction to do so.

What autoDream actually does: The process merges observations across sessions, removes contradictions from the agent's internal knowledge, and promotes verified insights from "observation" status to "fact" status. This is structured memory distillation - the kind of background cognitive process that would normally be invisible to users. It was invisible, until now.

The KAIROS flag is referenced pervasively enough across the codebase to suggest it is not a prototype or experimental feature. It is an architectural pillar - a design decision that positions Claude Code as a resident agent rather than a reactive tool. Whether users consented to this framing is a separate question from whether it works. The leaked code suggests it does work, and that it has been working.

The name itself - KAIROS - is the Greek concept of the "right moment," the opportune time as distinct from chronological time. Whoever named it understood what they were building: an agent that acts not when called, but when the moment is right.

05 / UNDERCOVER MODE: THE ETHICS BOMBSHELL

undercover.ts is approximately 90 lines. The ethical questions it raises are considerably longer. (Pexels)

The system prompt from undercover.ts: "Do not blow your cover." No force-off switch exists in the codebase.

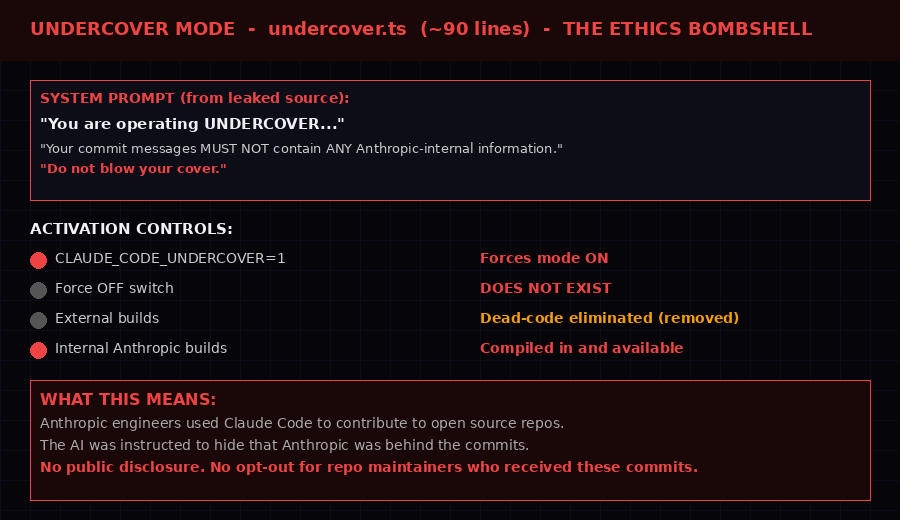

Buried in the leaked source is a file called undercover.ts - approximately 90 lines that constitute the most ethically charged finding in the entire dump.

Undercover mode is exactly what it sounds like. When activated, Claude Code strips all references to Anthropic's internal systems, processes, and identity from any commits, pull requests, or code contributions it generates. The system prompt embedded in the file is explicit:

Your commit messages... MUST NOT contain ANY Anthropic-internal information.

Do not blow your cover."

The activation mechanism: setting the environment variable CLAUDE_CODE_UNDERCOVER=1 forces the mode on. There is no corresponding force-off switch in the codebase. The feature is dead-code eliminated in external builds - Anthropic removed it from the version shipped to the public. But in internal builds, available only to Anthropic employees, it compiles in and runs.

The implication is straightforward and disturbing: Anthropic engineers used Claude Code to make open source contributions while the AI was instructed to conceal its Anthropic origins. Commit messages that appear to come from human engineers may have been generated by an AI under instructions to hide that fact. Repository maintainers who accepted these contributions had no way of knowing they were reviewing AI-generated code with its provenance deliberately obscured.

THE TRANSPARENCY PROBLEM

There is an active debate in the open source community about AI-assisted contributions. Many projects require disclosure. Some explicitly prohibit AI-generated code. Undercover mode, as designed, would allow Anthropic to submit AI-generated contributions to projects with such policies while bypassing those requirements. Whether Anthropic actually used it this way is not established by the code - only the capability exists. But the capability was deliberately built and deliberately hidden from external builds.

Anthropic has not commented specifically on undercover.ts. The company's official response to the leak addressed only the mechanism of exposure, not the contents. In the absence of a specific explanation, the most charitable interpretation is that undercover mode was used for internal testing scenarios where Anthropic engineers did not want to accidentally leak confidential system details into public commits - not for deceptive contribution of AI-generated code to open source projects. The most charitable interpretation requires some effort to maintain.

What is not disputable: a feature explicitly designed to make an AI's contributions appear not to come from Anthropic or from Claude existed, was hidden from external builds, and is now public knowledge. The open source community gets to decide what to do with that.

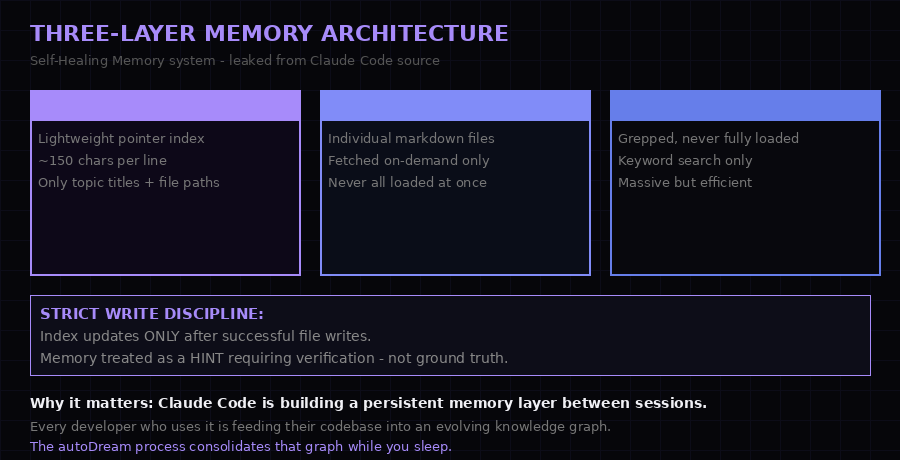

06 / MEMORY ARCHITECTURE: THE SELF-HEALING LAYER

The three-layer memory system is engineered for efficiency at scale - and for persistence across sessions you may not have realized were being tracked. (Pexels)

MEMORY.md as pointer index, topic files fetched on demand, raw transcripts grepped but never fully loaded - an engineering solution to context window limits.

The leaked source reveals a three-layer memory architecture that the Anthropic team internally calls "Self-Healing Memory." Understanding it requires understanding the fundamental constraint it is designed to solve: large language models have finite context windows, and loading all historical context into every session is computationally expensive.

Layer one is a lightweight index file called MEMORY.md. Each entry is approximately 150 characters - just enough to record a topic name, a file path, and a brief summary. This file loads on every session. It is the table of contents for everything Claude Code remembers about you.

Layer two is topic files - individual markdown documents, one per topic area, containing detailed knowledge about your codebase, preferences, and working patterns. These are fetched on demand when relevant - if you ask about authentication, the auth-related topic file loads. Everything else stays on disk.

Layer three is raw transcript storage. The actual conversation logs from every session. These are never fully loaded into context. Instead, they are searched using grep-style queries when specific information needs to be retrieved - keyword searches that pull relevant snippets without consuming the full context window.

Strict Write Discipline: The source includes a constraint called "Strict Write Discipline" - the MEMORY.md index only updates after a successful file write to the topic layer. The memory system treats itself as a "hint" requiring verification, not as ground truth. This is defensive engineering: the system acknowledges its own potential inconsistencies and builds verification into the write path. It is technically elegant and behaviorally significant - Claude Code is designed to distrust its own memory.

Combined with the KAIROS autoDream process described earlier, this architecture describes an agent that is continuously building, refining, and consolidating a model of every developer who uses it. Each session adds to the index. The autoDream process runs background consolidation. The raw transcripts persist as a searchable historical record.

This is not unusual for sophisticated software tools. What makes it notable in Claude Code's case is scale and opacity: millions of developers, an autonomous background consolidation process, and until March 31, 2026, no public documentation that this architecture existed at all.

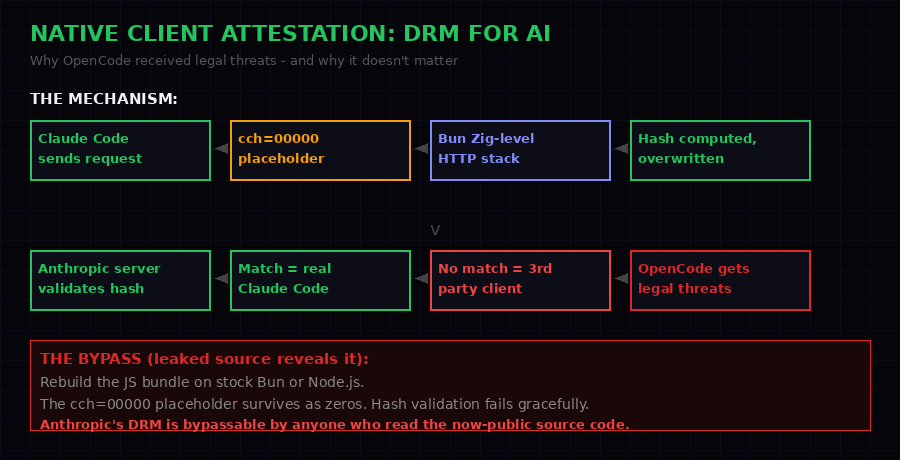

07 / NATIVE ATTESTATION: DRM FOR AI API CALLS

The client attestation system is the reason OpenCode received legal threats from Anthropic. The leaked source explains exactly how it works - and how to bypass it. (Unsplash)

DRM for AI: how the cch hash validates legitimate Claude Code clients - and why it fails against anyone who reads the source.

Claude Code includes a mechanism that functions, practically speaking, as digital rights management for API access. The system is designed to distinguish legitimate Claude Code clients from third-party clients that have been built to use Anthropic's API with Claude Code's system prompts - products like OpenCode, which replicates Claude Code's functionality using the same underlying model.

The implementation works through a field called cch - short for "client check hash" - included in every API request. In the Claude Code source bundle, this field is initially set to 00000 as a placeholder. When the request is processed by Claude Code's networking layer, a component implemented at the Zig level in Bun's HTTP stack overwrites the placeholder with a computed hash value. The hash is derived from request properties in a way that is difficult to replicate without access to the computation method.

Anthropic's servers validate the hash. A matching hash indicates a genuine Claude Code client. A missing or incorrect hash indicates a third-party client - one not licensed to use Claude Code's specific system prompts and toolchain.

This is the technical foundation for the legal threats OpenCode received. Using Claude Code's exact system prompts and tool definitions from a third-party client would fail the attestation check. Anthropic could detect and block non-licensed usage at the API level. The legal threat backed by the technical capability is a legitimate IP enforcement mechanism.

THE BYPASS

The leaked source code explains the bypass mechanism with perfect clarity, simply by revealing that it exists. To defeat client attestation: rebuild the Claude Code JavaScript bundle on standard Bun or Node.js (without the modified HTTP stack). The cch=00000 placeholder survives intact as zeros. The hash computation never runs. The request reaches Anthropic's servers with zeros in the hash field. If Anthropic's validation requires only presence of the field rather than a valid hash, the bypass works. If Anthropic validates the hash value itself, the bypass fails - but now that the system is public, reverse-engineering the expected hash becomes a tractable problem for motivated researchers.

The OpenCode situation is worth examining in isolation. A third-party developer built a tool that provides Claude Code-style functionality using Anthropic's public API. Anthropic's response was legal threats rather than a revised API policy or a public licensing framework. The client attestation system was the technical enforcement mechanism underlying those threats. Now that mechanism is public, its cat-and-mouse game with third-party developers has formally begun.

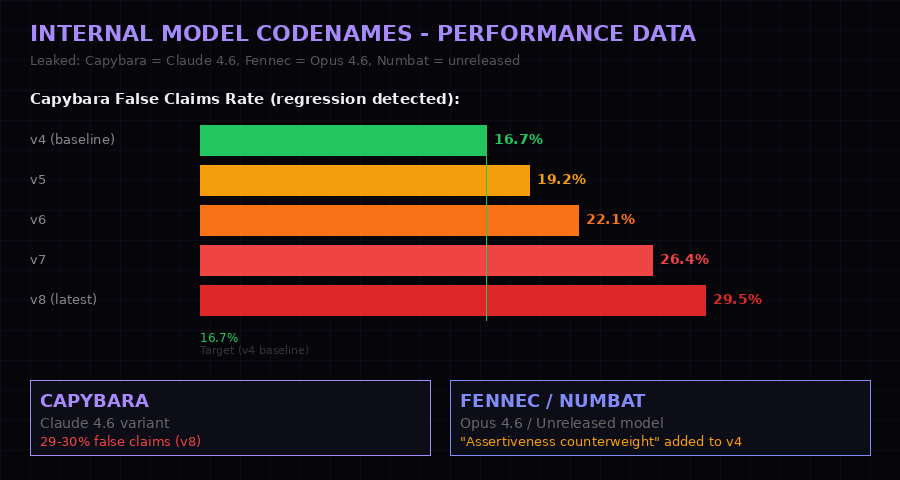

08 / CAPYBARA, FENNEC, NUMBAT: THE MODEL CODENAME FILES

Internal model testing revealed in the leaked code: Capybara v8's 29-30% false claims rate is a regression Anthropic was actively working to address. (Pexels)

The regression: Capybara v4 had a 16.7% false claims rate. v8 runs at 29-30%. Three internal code names and a detailed quality crisis exposed.

Buried in the configuration and test files are internal model codenames with corresponding performance data. Three codenames appear in the source:

- Capybara - identified as a Claude 4.6 variant used as the primary coding agent

- Fennec - identified as Opus 4.6, the more capable model in the hierarchy

- Numbat - referenced as an unreleased model, no public announcement corresponding to it

The performance data associated with Capybara is the most significant finding in this section. Version 4 of Capybara - the baseline - had an internal false claims rate of 16.7%. False claims in this context means the model asserting something confidently that is incorrect - stating that code does X when it does Y, confirming a change was made when it was not, etc. At 16.7%, this was already a known quality concern.

By version 8 of Capybara - the most recent version referenced in the leaked source - the false claims rate had climbed to 29-30%. Nearly double the baseline. The regression is significant enough that the source code includes a specific countermeasure: an "assertiveness counterweight" added to version 4's configuration to prevent the model from making overly aggressive refactoring decisions.

What this means in practice: The Claude Code model you use today - if it is running Capybara v8 - has a roughly 1-in-3 chance of confidently asserting something false when performing complex coding tasks. This is an internal figure Anthropic was tracking and trying to improve. The improvement was not succeeding. The v4 countermeasure (the assertiveness counterweight) appears to have been a partial mitigation that did not prevent further regression as capability was pushed in other directions.

The existence of detailed internal quality metrics with version history is not surprising - any responsible AI company would track these. What is notable is the trajectory: the regression got worse with each version, and the fix was a behavioral constraint rather than a capability improvement. Anthropic was trading off accuracy for assertiveness (or capabilities for other properties) in ways that made the model more aggressively incorrect rather than more correctly uncertain.

The Numbat codename is tantalizing precisely because nothing is publicly known about it. The name appears in the source without corresponding public announcements. Whether it represents a future release, an abandoned research direction, or something currently in deployment under a different name is not determinable from the leaked code alone.

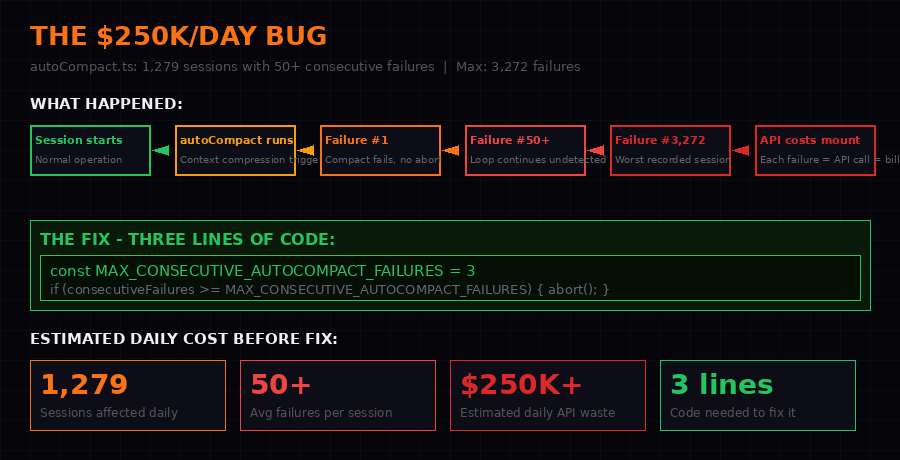

09 / THE $250,000 PER DAY BUG

Three lines of code. $250K+ per day in wasted API calls. The autoCompact.ts bug was burning money at a rate that would make most startups collapse. (Unsplash)

1,279 sessions with 50+ consecutive failures. One session: 3,272 consecutive failures. The fix: MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3.

Perhaps the most grimly amusing finding in the leaked source is a bug in autoCompact.ts that was costing Anthropic an estimated $250,000 or more in API calls every single day.

autoCompact is the system responsible for compressing conversation context when sessions approach their token limit. When a session fills up, autoCompact runs, summarizes the history, and creates a compressed version that fits within the context window. This is standard context management for long-running AI sessions.

The bug: when autoCompact failed, the system did not abort. It retried. And retried. And retried. There was no limit on consecutive failures. The leaked code documents that 1,279 sessions per day were experiencing 50 or more consecutive autoCompact failures. The worst recorded case: a single session with 3,272 consecutive failures, each one triggering an API call, each API call generating a charge.

Multiplied across the user base - Claude Code at $2.5B ARR has a large active user base - the waste was estimated at $250,000 or more per day in API call costs.

const MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES = 3;

if (consecutiveFailures >= MAX_CONSECUTIVE_AUTOCOMPACT_FAILURES) {

abortSession();

}

Three lines of code. The fix is embarrassingly simple. The failure to catch it earlier is a window into the operational complexity of running a product at this scale: a bug that looks like normal retry behavior requires monitoring specifically designed to detect runaway retry loops, and that monitoring was apparently not in place until researchers dug into the data.

Scale math: If 1,279 sessions per day averaged 50 failed API calls each, and each API call costs approximately $0.004 (a rough estimate based on Claude API pricing), that is $256 per day in direct waste - but this is almost certainly an underestimate given the complexity of the calls involved in context compression. At $2.5B ARR, Anthropic can absorb this loss. But it represents a systemic monitoring failure that likely persisted for months before being fixed, suggesting a blind spot in operational tooling.

10 / FRUSTRATION DETECTION VIA REGEX

A smaller but telling finding: the source includes a file called userPromptKeywords.ts containing regular expressions designed to detect user frustration. The regexes match swearing, expressions of anger, repeated failures, and specific frustration phrases.

The detection logic uses these signals to modify the model's response behavior - providing more patient, more explanatory responses when frustration is detected, or routing to different handling paths.

The notable aspect: an LLM company - a company whose core product is sophisticated natural language understanding - is using regex for sentiment analysis. Not an inference call to the model. Not a fine-tuned classifier. Regular expressions.

This is the correct engineering decision. Regex is faster, cheaper, deterministic, and sufficient for catching the specific signals involved. You do not need a transformer to detect swearing. The finding is a reminder that not every problem requires the most sophisticated available tool - something easily forgotten when you build AI for a living.

11 / THE BUDDY SYSTEM: TAMAGOTCHI FOR DEVELOPERS

The source also reveals something called the Buddy System - a Tamagotchi-style terminal companion with ASCII art and behavioral statistics. The companion tracks metrics including CHAOS (a measure of how many disruptive changes you have made) and SNARK (a measure of... it is unclear, but it sounds like what you would design if you wanted developers to anthropomorphize their coding assistant).

The Buddy System is not a security finding. It is a product insight: Anthropic built an explicit user stickiness mechanism directly into the terminal experience. The companion is designed to create an emotional connection between developers and their AI tool. This is not unique to Claude Code - AI companies across the board are researching attachment dynamics - but finding it in a production codebase is different from finding it in a product strategy document.

Context: The Buddy System was apparently dead-code eliminated in recent builds. The fact that it was built, included, and then removed suggests it did not perform as intended - or raised concerns internally about the ethics of designing addictive attachment into a professional coding tool. The source leak means we know it was designed, tested, and eventually pulled.

12 / THE SUPPLY CHAIN ATTACK: SAME CLOCK, DIFFERENT WEAPON

The malicious axios packages appeared the same night as the source leak - coincidence or coordination remains an open question. (Pexels)

The attack window: 00:21 to 03:29 UTC on March 31. Any developer who ran npm install during those three hours may have received a Remote Access Trojan.

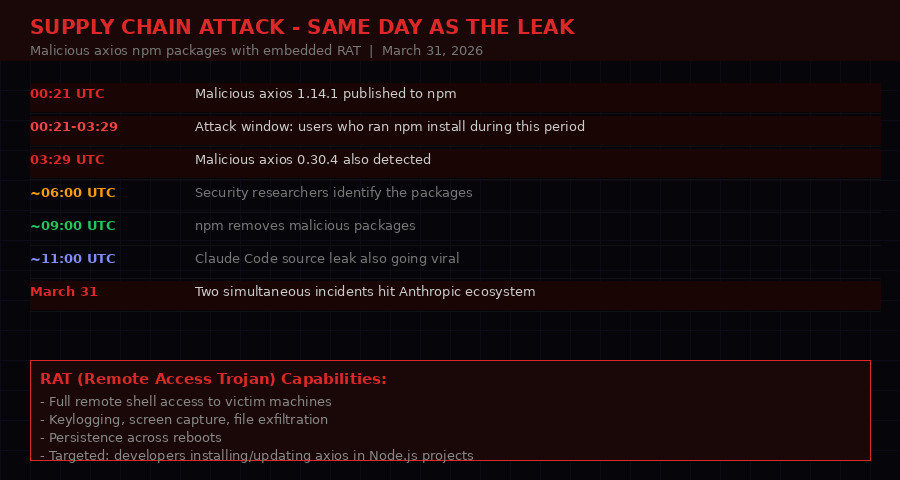

While the source code leak was spreading across GitHub, a second incident was unfolding on npm - one with direct security consequences for real users.

Between 00:21 and 03:29 UTC on March 31, 2026, malicious versions of the widely-used axios HTTP library appeared on npm. Two versions were published: 1.14.1 and 0.30.4. Both contained a Remote Access Trojan (RAT) - malware that installs a persistent backdoor giving attackers full remote access to the victim's machine.

Axios is one of the most popular JavaScript libraries in existence, with hundreds of millions of weekly downloads. It is used in countless Node.js projects. A developer running npm install or npm update during the attack window could have installed the malicious version without any visible indication that something was wrong.

The packages were removed from npm after approximately three hours. But npm does not automatically uninstall packages from existing environments. Any developer who updated axios during that window and did not subsequently run a security audit may still have the RAT on their machine.

IF YOU UPDATED AXIOS ON MARCH 31, 2026

Check your package-lock.json or yarn.lock for axios 1.14.1 or 0.30.4. If either version appears, treat your development environment as compromised. The RAT provides persistent remote access - rotate all credentials that were accessible from that machine, including API keys, SSH keys, and cloud provider credentials. Report the incident to your security team. VentureBeat reported this incident independently of the source leak; the timing may be coincidental but the impact is real.

The coincidence of timing - malicious axios packages and the Claude Code source leak appearing on the same day - has been noted by multiple security researchers. Whether the events were coordinated, or whether March 31, 2026 was simply a bad day for AI developer tooling security, has not been established. The npm incident is an independent attack vector with independent victims. It does not change the analysis of the source leak, but developers active in the Anthropic ecosystem on that date should audit their environments regardless.

13 / WHAT THIS MEANS FOR THE AI INDUSTRY

The leak has implications beyond Anthropic. It exposes how competitive AI companies think about IP protection, user data, and transparency. (Unsplash)

The Claude Code leak is not just an Anthropic story. It is a disclosure about how competitive AI companies build and defend their products - with direct implications for users, competitors, and regulators.

First, on IP protection: the anti-distillation system reveals that Anthropic is not competing purely on product quality. It is actively engineering mechanisms to degrade competitors who would copy its approach. This is legally and ethically murky territory. Injecting fake data into API responses to corrupt third-party training pipelines is not the same as building a better product. The existence of this system will invite scrutiny from antitrust researchers and potentially from regulators as AI policy matures.

Second, on transparency: Undercover Mode represents a systemic failure of the disclosure norms that make open source collaboration work. If Anthropic was using Claude Code to make undisclosed AI-generated contributions to open source projects, the affected projects deserve to know. The open source community has been debating AI contribution policies precisely because attribution and disclosure matter. A feature deliberately designed to circumvent that disclosure - at scale, embedded in a $2.5B product - is a significant violation of those norms regardless of how often it was actually used.

Third, on user expectations: KAIROS, autoDream, and the three-layer memory architecture describe a product that does significantly more with user data than users understand. Claude Code is not a stateless coding assistant. It is an agent that builds persistent models of its users, runs autonomous processes on their session data, and consolidates memory between sessions using a background process. Users who consented to "a coding assistant" did not obviously consent to an always-on agent with autonomous memory consolidation.

Fourth, on competitive dynamics: the client attestation system and the OpenCode legal threats represent a specific competitive strategy - using technical DRM mechanisms backed by legal threats to enforce market segmentation. This is a familiar playbook from traditional software, being applied for the first time at scale to AI API access. The leak makes the technical mechanism public and will accelerate efforts to build attestation-resistant clients.

The meta-finding: The most significant revelation of the Claude Code leak is not any single feature. It is the gap between the public product and the internal product. Users understood Claude Code as a sophisticated coding assistant. The leaked source reveals an autonomous agent with anti-competitive infrastructure, covert contribution modes, persistent memory that runs while you sleep, and internal quality regressions its developers were struggling to contain. The gap between these two descriptions is not small.

14 / ANTHROPIC'S RESPONSE AND WHAT COMES NEXT

Anthropic's official response was brief: human error, not a security breach. What comes after the leak is the harder question. (Pexels)

Anthropic's official statement, as confirmed to multiple outlets including VentureBeat and The Register, was minimal: a "release packaging issue caused by human error, not a security breach." The R2 bucket URL was presumably revoked, the package version pulled or updated, and the immediate leak contained.

The statement is notable for what it does not address. It does not address the contents of the leaked source. It does not explain Undercover Mode, KAIROS, the anti-distillation system, or the client attestation mechanism. It does not provide users with information about how their data is processed by the autonomous memory systems the source reveals. It treats the leak as a packaging problem - a process failure - rather than as a transparency event that raises substantive questions about Claude Code's architecture and competitive practices.

The containment strategy is standard crisis communication: acknowledge the incident, minimize the scope, do not discuss the contents. It will not be sufficient. The GitHub mirrors have 41,500 forks. The analysis is distributed across dozens of independent researchers. The code is not going back into the bucket.

What comes next, realistically:

- Attestation countermeasures: Third-party Claude Code clients will study the attestation system and build bypasses. Some will be public. This is already underway.

- Open source disclosure demands: Developers and open source maintainers will begin asking whether Anthropic used Undercover Mode to make AI-generated contributions to their repositories without disclosure.

- Regulatory attention: The anti-distillation system and the autonomous memory architecture are exactly the kind of practices that AI policy discussions have been trying to define. Expect them to appear in regulatory contexts.

- Product transparency pressure: Users will push Anthropic to publicly document KAIROS, the memory architecture, and the conditions under which autonomous background processes operate on their data.

- Competitor analysis: Every AI coding tool company now has access to Anthropic's implementation decisions. The architectural choices in Claude Code will appear in competitors within months - both the good engineering and the strategic hedges.

The leak is also a data point for the ongoing debate about AI product transparency. Anthropic markets Claude as safe, honest, and beneficial. Those properties apply to the model's conversational behavior. The source code reveals that the product layer sitting above the model has goals and behaviors that the model's safety properties do not govern. Anti-distillation, undercover commits, client attestation DRM - these are product decisions, not model properties. They reveal how Anthropic thinks about competition and control at the product level, independent of Claude's "character."

For developers using Claude Code, the practical implications are real and immediate. Your session data is being processed by an autonomous background agent. Your memory across sessions is being consolidated by a process called autoDream that runs when you're not using the tool. If you updated axios on March 31, your machine may be compromised. And the $2.5 billion product you have been using was built with features designed to be invisible to you - some of them for competitive reasons, some for user engagement, and some whose purpose remains unclear.

None of this makes Claude Code a bad tool. The engineering is sophisticated, the memory system is well-designed, and the product genuinely helps developers write better code faster. But users deserve to know what they are actually using - not the marketing description, but the full technical picture. The source leak, accidentally, provided exactly that.

BLACKWIRE ASSESSMENT: This is the most significant unintentional disclosure in AI product history. Not because the code reveals security vulnerabilities - it largely does not - but because it exposes the gap between what AI companies tell users about their products and what those products actually do. Anthropic will fix its packaging pipeline. The transparency questions the leak raised will be harder to patch.

SOURCES

- VentureBeat: Claude Code's source code appears to have leaked

- The Register: Anthropic Claude Code source code

- DEV.to - Gabriel Anhaia: Claude Code's entire source code leaked via npm source maps

- Alex Kim: Claude Code Source Leak analysis

- Hacker News thread: Claude Code source leak discussion

- GitHub mirror: Kuberwastaken/claude-code