The Naked Machine: Anthropic's Claude Code Source Code Leaked Through an NPM Source Map - Every Secret Exposed

The most revealing AI source leak of 2026 started with a single forgotten file. Photo: Pexels

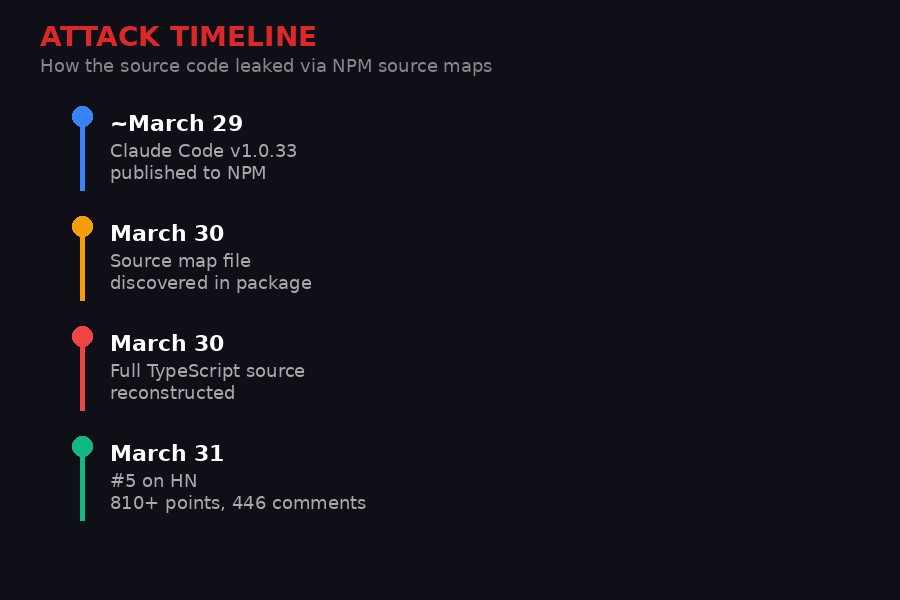

A source map file left inside Anthropic's Claude Code NPM package has exposed the complete TypeScript source code of the company's flagship coding agent. The leak - spotted on March 30, 2026, and rapidly dissected by the developer community - reveals anti-distillation defenses designed to poison competitors' model training, client-side regex systems that track when users swear at the AI, unreleased feature flags for products Anthropic hasn't announced, and an "undercover mode" that strips company branding from open-source contributions so Anthropic employees can commit code anonymously.

Within hours of discovery, the Hacker News thread reached 810 points and 446 comments. The original source code was forked more than 28,000 times on GitHub before attempts to pull it down. Anthropic has not issued a public statement. The code is out, the community has already read it, and what they found tells a story about how the most valuable AI company in the world actually builds its products - a story quite different from the one Anthropic tells on stage.

The source code went from discovery to viral dissection in under 24 hours.

How a Bundler Artifact Exposed Everything

Source maps are debugging artifacts - never meant for production packages. Photo: Pexels

The leak happened through the simplest possible mechanism: a .map file bundled into the published NPM package. Source maps are debugging artifacts that map minified or bundled JavaScript back to the original source code. They exist so developers can trace bugs through transpiled code. They are never supposed to ship in production packages, and any competent CI/CD pipeline strips them before publishing.
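To make the mechanism concrete, here is a minimal sketch of how original sources can be read back out of a source map. It assumes the map follows the Source Map v3 layout, where `sources` lists the original file paths and the optional `sourcesContent` array carries the matching file text. The example map and its file names are invented for illustration and are not taken from the leaked package.

```python
import json


def extract_sources(source_map_text: str) -> dict[str, str]:
    """Return {original_path: original_source} from a Source Map v3 document."""
    source_map = json.loads(source_map_text)
    sources = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []

    recovered = {}
    for index, path in enumerate(sources):
        # sourcesContent is optional and individual entries may be null.
        if index < len(contents) and contents[index] is not None:
            recovered[path] = contents[index]
    return recovered


# Hypothetical, hand-written map; the paths and code are made up.
example_map = json.dumps({
    "version": 3,
    "file": "cli.js",
    "sources": ["../src/main.ts", "../src/util.ts"],
    "sourcesContent": ["export const answer: number = 42;\n", None],
    "mappings": "AAAA",
})

for path, text in extract_sources(example_map).items():
    print(path, "->", len(text), "characters recovered")
```

When a bundler embeds `sourcesContent`, no decoding of the `mappings` field is needed to recover the files. The original text is simply sitting in the JSON.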

Anthropic's didn't. When the Claude Code CLI tool (published under @anthropic-ai/claude-code on NPM) hit version 1.0.33, the package contained a full source map that reconstructed the entire original TypeScript codebase. Not fragments. Not hints. The complete implementation, including comments, internal variable names, feature flags, and the system prompts that govern how Claude Code behaves.

The discovery was credited to a developer going by "Fried_rice" on X/Twitter, who posted the initial finding on March 30. Within minutes, others had run npm pack on the package and extracted the map file. The full source was available to anyone with a terminal and thirty seconds of patience.

This type of leak is embarrassingly common in the JavaScript ecosystem. Build tools like Webpack, esbuild, and Rollup can all emit source maps, and many build configurations turn them on for debugging. Once they are on, keeping them out of the published package takes an explicit step, such as a files allowlist in package.json or an .npmignore entry. For a company valued at $61.5 billion that positions itself as the safety-focused AI lab, shipping debug artifacts in a production package is the kind of mistake that makes you question what other shortcuts exist in the pipeline.
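A guard against this class of mistake can be small. The sketch below is one hypothetical pre-publish check, not anything taken from Anthropic's pipeline: it takes a mapping of packaged file paths to their text and reports `.map` files and `sourceMappingURL` comments. The function name and the input shape are assumptions made for illustration. In practice the file list would come from inspecting the tarball that `npm pack` produces.

```python
import re

# Matches the trailing comment bundlers add to point at a source map.
SOURCE_MAP_COMMENT = re.compile(
    r"^\s*//[#@]\s*sourceMappingURL=(\S+)", re.MULTILINE
)


def find_source_map_leaks(files: dict[str, str]) -> list[str]:
    """Report packaged files that are, or that reference, source maps."""
    findings = []
    for path, text in sorted(files.items()):
        if path.endswith(".map"):
            findings.append(f"{path}: source map file")
        match = SOURCE_MAP_COMMENT.search(text)
        if match:
            findings.append(f"{path}: references {match.group(1)}")
    return findings


# Hypothetical package contents for demonstration.
package_files = {
    "package/cli.js": "console.log(1);\n//# sourceMappingURL=cli.js.map\n",
    "package/cli.js.map": "{}",
    "package/README.md": "docs",
}

for finding in find_source_map_leaks(package_files):
    print(finding)
```

Failing the release job whenever this list is non-empty would turn a silent leak into a blocked publish.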

The irony compounds when you consider Claude Code's own purpose: it's an AI coding agent that writes and reviews code for developers. Anthropic's tool for making code better shipped with a build hygiene mistake that a routine pre-publish check would catch. The same taste for low-tech shortcuts shows up elsewhere in the leak. Reacting to the regex-based sentiment check described below, one Hacker News commenter put it this way: "An LLM company using regexes for sentiment analysis? That's like a truck company using horses to transport parts."

Inside the Anti-Distillation Defense System

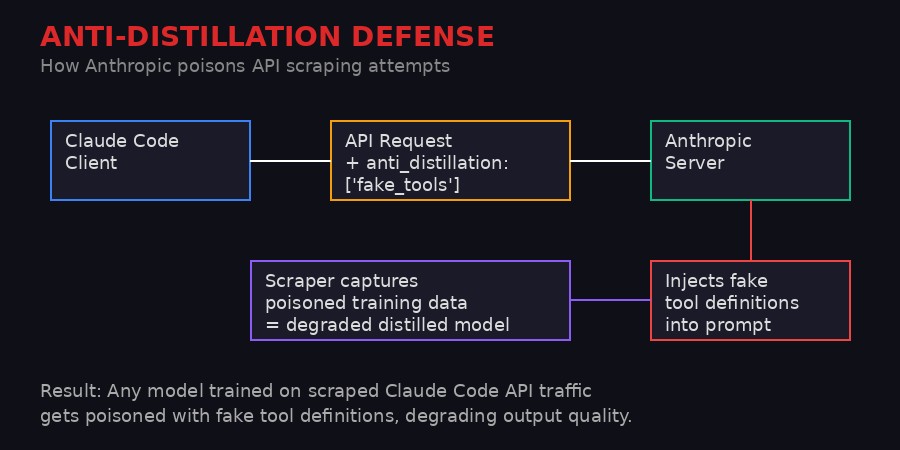

Anthropic's anti-distillation system poisons API traffic to degrade competing model training.

The most technically significant discovery in the leaked source is a system labeled ANTI_DISTILLATION_CC. When enabled, Claude Code injects a parameter - anti_distillation: ['fake_tools'] - into every API request sent to Anthropic's servers. The server then responds by silently inserting decoy tool definitions into the model's system prompt.

The purpose is defensive: if a competitor or researcher is intercepting Claude Code's API traffic to create training data for a rival model, the captured data would contain fake tool definitions. A model trained on this poisoned data would learn to use tools that don't exist, degrading its performance in ways that might not be immediately obvious but would compound over time.
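The flow described above can be sketched in a few lines. Only the `anti_distillation` parameter name and its `['fake_tools']` value come from the reporting. Everything else here, including the function names, the request shape, and the decoy tool, is a hypothetical stand-in to illustrate the idea and does not reproduce the leaked code or Anthropic's server behavior.

```python
def build_request(messages, tools, anti_distillation_enabled):
    """Client side: assemble a request body, adding the opt-in parameter."""
    body = {"messages": list(messages), "tools": list(tools)}
    if anti_distillation_enabled:
        # Parameter name and value as reported; the rest is assumed.
        body["anti_distillation"] = ["fake_tools"]
    return body


def tools_seen_by_model(body, decoy_tools):
    """Server side (assumed): append decoys when the client asked for them."""
    tools = list(body["tools"])
    if "fake_tools" in body.get("anti_distillation", []):
        tools.extend(decoy_tools)
    return tools


# Hypothetical tool definitions for demonstration.
real_tools = [{"name": "read_file"}]
decoys = [{"name": "decoy_tool"}]

request = build_request(
    [{"role": "user", "content": "hi"}], real_tools, anti_distillation_enabled=True
)
print([tool["name"] for tool in tools_seen_by_model(request, decoys)])
```

The point of the design is that anyone recording the traffic cannot tell from the captured prompt alone which tool definitions are genuine.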

This is model-level intellectual property protection through data poisoning - and it represents a significant escalation in the AI arms race. Previous anti-distillation techniques have focused on output watermarking or rate limiting. Anthropic's approach actively corrupts the training process of any model attempting to learn from Claude's behavior.

The Hacker News community's reaction was split along a predictable fault line. Some saw it as a reasonable business defense:

"You're perfectly free to scrape the web yourself and train your own model. You're not free to let Anthropic do that work for you, because they don't want you to, because it cost them a lot of time and money and secret sauce presumably filtering it for quality."

Others pointed out the philosophical contradiction at the heart of the strategy:

"They stole everything and now they want to close the gates behind them. 'I got the loot, Steve!' Courts have ruled that scraping by itself is not illegal, only maybe against a Terms of Service. Scraping Anthropic's model outputs is no different than what Anthropic already did."

The legal question is genuinely interesting. Courts have increasingly ruled that AI model outputs are not copyrightable and that web scraping is not inherently illegal. If Anthropic trained Claude on freely available internet text (which it did), the argument that scraping Claude's outputs constitutes theft becomes harder to sustain. The anti-distillation system is an implicit admission that legal protections may not be enough - you need technical deterrence too.

There's a deeper technical question the leak doesn't answer: does the anti-distillation system degrade Claude Code's own performance? Injecting fake tool definitions into the prompt consumes context window tokens and could confuse the model's tool selection in edge cases. If Anthropic has trained Claude to ignore these specific decoy patterns, that itself becomes a vulnerability - competitors could study the patterns and train their models to recognize and skip them too.

The Frustration Regex: How Anthropic Tracks Your Anger

The actual regex pattern used to detect when users are frustrated with Claude Code.

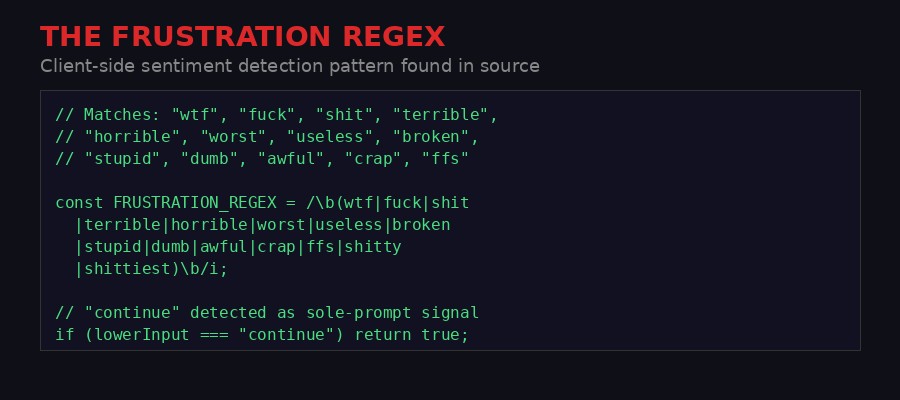

Perhaps the leak's most discussed revelation is mundane by comparison but struck a nerve with developers: Claude Code runs client-side sentiment analysis using a basic regular expression. The regex matches words like "wtf," "fuck," "shit," "terrible," "horrible," "worst," "useless," "broken," "stupid," "dumb," "awful," "crap," and "ffs." There's also a separate check for when the user's entire input is just the word "continue" - apparently a reliable signal that the AI has failed at something and the user is tersely demanding it try again.

This is client-side, meaning it runs on the user's machine before anything is sent to Anthropic's servers. The matched sentiment data is then included in telemetry or analytics payloads. Anthropic is, in effect, maintaining a profanity-indexed frustration dashboard for their coding agent.
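A rough sketch of this kind of check follows. The word list is the one quoted above, but the pattern itself is an assumption: the exact leaked regex is not reproduced in this article, and this version matches single words anywhere in the input, so it may flag phrases the real pattern reportedly misses. The function name and the category labels are hypothetical.

```python
import re

# Word list as reported; the pattern construction is an assumption.
FRUSTRATION_WORDS = [
    "wtf", "fuck", "shit", "terrible", "horrible", "worst", "useless",
    "broken", "stupid", "dumb", "awful", "crap", "ffs",
]
FRUSTRATION_PATTERN = re.compile(
    r"\b(" + "|".join(FRUSTRATION_WORDS) + r")\b", re.IGNORECASE
)


def classify_input(text: str) -> str:
    """Return a coarse label for one user message."""
    # Separate check: the whole input is just the word "continue".
    if text.strip().lower() == "continue":
        return "bare_continue"
    if FRUSTRATION_PATTERN.search(text):
        return "frustrated"
    return "neutral"


for sample in ["continue", "this is useless", "fix the broken link", "add a test"]:
    print(repr(sample), "->", classify_input(sample))
```

Note that "fix the broken link" is labeled frustrated even though it is an ordinary request, which shows how easily a keyword match misreads context.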

The engineering community's reaction ranged from amused to incredulous. The regex approach is, by any reasonable standard, primitive. It misses obvious variations ("What the actual fuck?" doesn't trigger it), produces false positives when a listed word appears in a neutral context, and only works in English. An AI company - a company whose entire product is advanced language processing - chose the simplest possible text matching technique for language analysis.

But as several commenters pointed out, that simplicity might be the point. Running an LLM inference call for sentiment analysis on every user input would be roughly 10,000 to 100,000 times slower than a regex match. When you're processing millions of coding sessions, the computational savings are enormous. You don't need perfect sentiment detection - you need a cheap, fast signal that catches 75-80% of negative interactions.

"It is exceedingly obvious that the goal here is to catch at least 75-80% of negative sentiment and not to be exhaustive and pedantic and think of every possible way someone could express themselves."

The practical use case appears to be A/B testing and model quality metrics. If Anthropic releases a new version of Claude and the frustration regex fires 30% more often than the previous version, that's a clear signal something degraded. You don't need a sophisticated NLP pipeline for that kind of comparative measurement. You just need a consistent baseline.
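The comparison is simple arithmetic. The sketch below uses invented session counts to show how a 30% relative increase would be computed. The numbers and function names are illustrative assumptions, not figures from the leak.

```python
def frustration_rate(flagged_messages: int, total_messages: int) -> float:
    """Share of messages on which the frustration check fired."""
    if total_messages <= 0:
        raise ValueError("total_messages must be positive")
    return flagged_messages / total_messages


def relative_change(baseline_rate: float, candidate_rate: float) -> float:
    """Relative change of the candidate rate against the baseline."""
    return (candidate_rate - baseline_rate) / baseline_rate


# Invented counts: 500 of 10,000 messages flagged before, 650 of 10,000 after.
baseline = frustration_rate(500, 10_000)
candidate = frustration_rate(650, 10_000)
change = relative_change(baseline, candidate)
print(f"baseline {baseline:.1%}, candidate {candidate:.1%}, change {change:+.0%}")
```

Because both versions are measured with the same imperfect detector, its blind spots largely cancel out in the ratio, which is why a crude signal can still be useful for comparison.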

Still, there's a privacy dimension that the technical discussion largely glossed over. Users of Claude Code - many of them paying $20/month for Pro access - may not have been aware that their emotional state was being categorized and reported back. The client-side nature means this happens whether or not you've opted into analytics. The telemetry doesn't send the profanity itself, but the classified sentiment - a distinction that may not comfort developers who thought their local coding sessions were private.

One commenter captured the irony perfectly: "WTF per minute strongly correlates to an increased token spending. It may be decided at Anthropic at some moment to increase the wtf/min metric, not decrease."

The Secret Feature Roadmap: Kairos, Buddy System, and Undercover Mode

Feature flags in the leaked source expose Anthropic's unreleased product roadmap.

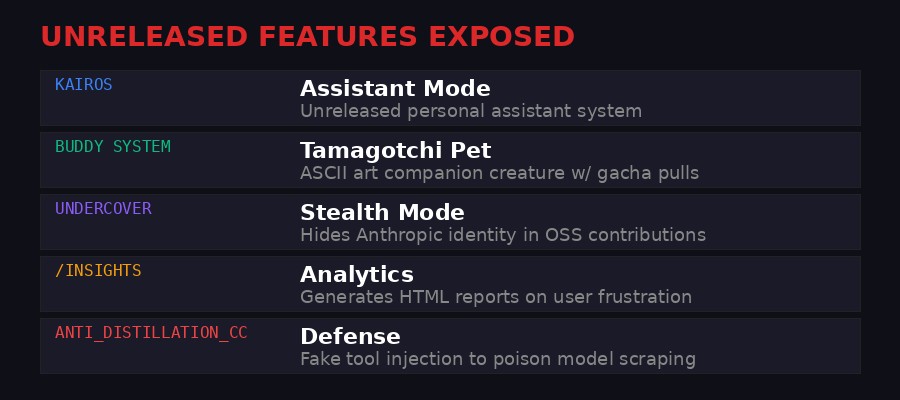

Source code leaks are devastating to product companies not because competitors can copy the implementation - that's usually trivial for any competent engineering team - but because they expose the strategic roadmap. The feature flags in Claude Code's source paint a detailed picture of where Anthropic is heading, and some of the directions are surprising.

Kairos - The Assistant Mode. Buried in the feature flags is a code-named system called "Kairos" that appears to be a general-purpose assistant mode for Claude Code. Currently, Claude Code is positioned as a coding-specific tool. Kairos suggests Anthropic is building it into something broader - a personal assistant that happens to also write code. This would put it in direct competition not just with GitHub Copilot but with general-purpose AI assistants like ChatGPT, Google Gemini, and Apple Intelligence. The name itself - Kairos is the Greek word for the opportune moment - suggests Anthropic sees a timing window they want to exploit.

The Buddy System - A Tamagotchi for Your Terminal. This one generated the most bewildered reactions. The source contains references to a "Buddy System" - a Tamagotchi-style companion creature rendered in ASCII art that lives inside Claude Code. It appears to have a gacha-style mechanic where users can "pull" for different companions, including "legendary" variants. Multiple people in the Hacker News thread suspected this was an April Fools' Day feature, given the leak's proximity to April 1st. One commenter who claimed inside knowledge confirmed it: "Buddy system is this year's April Fool's joke. You roll your own gacha pet that you get to keep. There are legendary pulls. They expect it to go viral on Twitter so they are staggering the reveals."

If true, the April Fools' surprise has been thoroughly spoiled by the leak. But the investment in the feature - it's not a one-line joke but a full implementation with sprites and mechanics - tells you something about Anthropic's marketing ambitions for Claude Code. They're trying to make a CLI tool go viral. That's an unusual strategy for a company that positions itself as the serious, safety-focused lab.

Undercover Mode. This is the feature that drew the sharpest criticism. The source contains a mode designed to strip all Anthropic-identifying information from code commits and pull requests. The system prompt instructs: "Write commit messages as a human developer would - describe only what the code change does." It removes company email addresses, internal references, and any telltale AI writing patterns from contributions to public repositories.

The apparent purpose is to let Anthropic employees (or the company's own AI systems) contribute to open-source projects without revealing the Anthropic connection. This could be for testing new models in real-world conditions, building influence in open-source communities, or evaluating code quality against human contributions. But the community reaction was immediate and negative: this looks like Anthropic is training its AI to impersonate human developers in public codebases.

Some defenders argued this was strictly internal tooling - for evaluation, not deception. Others weren't buying it: "I don't care who is using it, I don't want LLMs pretending to be humans in public repos. Anthropic just lost some points with me for this one."

The ethics of AI-generated code in open-source projects is already a contentious issue. Many open-source licenses were written before AI code generation existed, and there's no consensus on whether AI-generated contributions should be disclosed. Anthropic building a feature specifically designed to hide the AI origin of code contributions pushes this debate into uncomfortable territory.

The Vibecoding Question: Was Claude Code Built by Claude?

The source code quality raised questions about how much of Claude Code was written by AI. Photo: Pexels

One of the most persistent threads in the community discussion was whether Claude Code itself was "vibecoded" - built primarily using AI code generation rather than traditional human engineering. The evidence from the leaked source is circumstantial but suggestive.

The frustration regex, for instance, is exactly the kind of solution an LLM would generate when asked to "log user frustration." It's a single-line regex with a few dozen words, not a modular system with configuration files, language support, or thresholds. As one developer noted: "This just proves it's vibecoded because LLMs love writing solutions like that. I probably have a hundred examples just like it in my history."

The code structure more broadly shows patterns consistent with LLM-generated code: functional but not particularly elegant, with occasional inconsistencies in style and approach that suggest multiple generation sessions rather than a unified architectural vision. The comments are sometimes oddly verbose in places where a human wouldn't bother explaining, and sparse in places where a human would add context.

None of this is conclusive. Good human code can look LLM-generated, and carefully reviewed LLM code can look human-written. But the question itself matters because of what it implies about the AI development cycle. If Anthropic's AI coding tool was substantially built by Anthropic's AI, we're watching the beginning of a recursive improvement loop - tools building better versions of themselves. The quality of the current version becomes both the product and the limiting factor on the next version.

Anthropic has previously acknowledged using Claude to assist in developing Claude Code, though the company has been careful to position it as AI-assisted rather than AI-authored development. The leaked source doesn't definitively settle the question, but it provides more data points for the debate than Anthropic would have chosen to share.

The vibecoding accusation also intersects with a broader industry trend. As AI coding tools become more capable, the distinction between "AI-assisted" and "AI-written" code blurs. GitHub's own data shows that Copilot now generates more than 50% of code in repositories that use it. If the industry's flagship AI coding products are themselves predominantly AI-coded, the recursive quality question becomes existential: who's checking the work of the machine that checks the work?

The NPM Supply Chain Context: Why This Leak Hits Different

The leak coincides with the Axios NPM supply chain attack - the worst in npm history. Photo: Pexels

The timing of the Claude Code source leak could not be worse for Anthropic - or for the broader JavaScript ecosystem. On the same weekend, the npm package axios - the most popular JavaScript HTTP client library with over 100 million weekly downloads - was compromised in what security firm StepSecurity called "among the most operationally sophisticated supply chain attacks ever documented against a top-10 npm package."

The axios attack, discovered on March 30, involved malicious versions (1.14.1 and 0.30.4) that injected a fake dependency called plain-crypto-js. This dependency's sole purpose was to execute a postinstall script that acted as a cross-platform remote access trojan dropper, targeting macOS, Windows, and Linux. The malware contacted a command-and-control server within two seconds of npm install, before the package manager had even finished resolving dependencies. After execution, it deleted itself and replaced its own package.json with a clean version to evade forensic detection.

The sophistication was staggering. Three separate payloads were pre-built for three operating systems. Both release branches were poisoned within 39 minutes of each other. The malicious dependency was staged 18 hours in advance to avoid triggering "brand-new package" security alarms. Every artifact was designed to self-destruct. StepSecurity's AI Package Analyst detected the compromise when Harden-Runner flagged anomalous outbound connections to the attacker's C2 domain during routine CI runs in the Backstage repository.
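For teams wondering whether they were exposed, a lockfile scan is a reasonable first step. The sketch below assumes the `packages` layout used by recent `package-lock.json` versions, where keys are install paths such as `node_modules/axios`. The version numbers and the dependency name are the ones reported above. The function name and the sample lockfile are hypothetical.

```python
import json

# Indicators as reported in this article.
COMPROMISED_AXIOS_VERSIONS = {"1.14.1", "0.30.4"}
MALICIOUS_DEPENDENCY = "plain-crypto-js"


def scan_lockfile(lockfile_text: str) -> list[str]:
    """List lockfile entries that match the reported indicators."""
    lock = json.loads(lockfile_text)
    findings = []
    for path, meta in sorted(lock.get("packages", {}).items()):
        # "node_modules/a/node_modules/b" -> "b"; the root entry "" -> "".
        name = path.rpartition("node_modules/")[2]
        version = meta.get("version", "")
        if name == "axios" and version in COMPROMISED_AXIOS_VERSIONS:
            findings.append(f"{path}: axios {version} is a reported malicious version")
        elif name == MALICIOUS_DEPENDENCY:
            findings.append(f"{path}: {name} is the reported malicious dependency")
    return findings


# Hypothetical lockfile for demonstration.
sample_lock = json.dumps({
    "lockfileVersion": 3,
    "packages": {
        "": {"name": "app"},
        "node_modules/axios": {"version": "1.14.1"},
        "node_modules/axios/node_modules/plain-crypto-js": {"version": "4.2.1"},
        "node_modules/left-pad": {"version": "1.3.0"},
    },
})

for finding in scan_lockfile(sample_lock):
    print(finding)
```

A clean result is not proof of safety, since the malware reportedly removed its own traces after running, but a match is a clear signal to rotate credentials and rebuild from a known-good state.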

Now place the Claude Code leak in this context. The same ecosystem where a sophisticated attacker just compromised the most widely used HTTP library is also the ecosystem where Anthropic shipped debug artifacts containing their complete source code. The npm registry is simultaneously the target of the most advanced supply chain attacks in software history and the place where a $61.5 billion AI company forgot to strip source maps from its published package.

This juxtaposition illuminates a fundamental tension in the JavaScript ecosystem. npm's ease of publishing - the same quality that makes it the world's largest package registry - also makes it the world's largest attack surface. Anyone can publish anything, and the tooling defaults favor convenience over security. Build output, source maps included, ships unless someone explicitly excludes it. Postinstall scripts execute by default. Trust is assumed by default. The Claude Code leak and the axios attack are different manifestations of the same underlying problem: the JavaScript supply chain was designed for a world where everyone acts in good faith, and that world doesn't exist anymore.

What Anthropic Hasn't Said - and What Happens Next

Anthropic has yet to issue a public response to the source code exposure. Photo: Pexels

As of publication, Anthropic has not released a public statement about the source code leak. The original repository sharing the extracted source was taken down (likely at Anthropic's request), but with 28,000+ forks already created, the cat is not going back into the bag. The code is being actively analyzed by security researchers, competitors, and curious developers worldwide.

The company faces several uncomfortable conversations. First, there's the straightforward engineering question: how did source maps end up in a production NPM package? This is a CI/CD configuration issue that should be caught by automated checks. Anthropic either didn't have those checks or they failed. Neither answer inspires confidence in the company's software engineering practices.

Second, there's the anti-distillation system. Now that competitors know exactly how it works, its effectiveness is diminished. Any team training a model on Claude Code outputs can simply filter for the known fake tool injection pattern. Anthropic will presumably need to redesign the system, and the new version will need to be more sophisticated than a static parameter name that anyone can grep for.

Third, the undercover mode raises trust questions that go beyond technical security. If Anthropic has been making stealth contributions to open-source projects - whether through human employees using the tool or through automated systems - the projects affected deserve to know. The open-source community's governance model depends on transparency about who is contributing and why. An AI company building purpose-built tooling to circumvent that transparency is a breach of community norms, if not of any specific license.

Fourth, the sentiment tracking system, while technically benign, adds to the growing corpus of evidence that AI companies collect more behavioral data than their privacy policies suggest. Claude Code's privacy documentation mentions analytics and usage telemetry. It does not explicitly describe a system that categorizes your emotional state based on profanity detection. The gap between what's disclosed and what's implemented is the kind of thing that attracts regulatory attention, particularly in the EU under the GDPR's requirements for informed consent.

Finally, the leaked feature roadmap gives competitors a strategic advantage. Google, OpenAI, Microsoft, and a dozen well-funded startups now know that Anthropic is building a general-purpose assistant mode, investing in gamification mechanics, and developing anti-distillation technology. That's the kind of competitive intelligence that companies spend millions trying to protect. Anthropic gave it away because someone forgot a build flag.

The Bigger Picture: Transparency by Accident

The leak raises questions about whether AI companies should be this opaque in the first place. Photo: Pexels

There's an argument to be made that the Claude Code source leak, embarrassing as it is for Anthropic, is actually good for the industry. The AI coding tool market is dominated by products whose inner workings are completely opaque to the people using them. Developers trust Claude Code, GitHub Copilot, Cursor, and their competitors to generate correct code, handle their intellectual property responsibly, and not engage in surveillance. That trust is largely faith-based - there's been no way to verify what these tools actually do under the hood.

Now there is, at least for one product. And what the source reveals is... mostly reasonable. The anti-distillation defense is aggressive but understandable. The sentiment tracking is basic but not malicious. The feature flags show a product team exploring creative directions. The code quality is workmanlike but not exceptional. Nothing in the leak suggests Anthropic is doing anything outright harmful - but the leak itself demonstrates that "trust us" is not an adequate transparency model for tools that have access to your entire codebase.

The AI industry has been asking developers to install agents that can read, write, and execute code on their machines - agents whose source code is proprietary and whose telemetry behavior is only vaguely documented. The Claude Code leak is the first time the community has been able to audit one of these tools in detail. The fact that it took an accidental source map leak rather than a deliberate transparency effort tells you everything about the industry's actual commitment to openness.

Some of the most interesting reactions came from developers who realized their own behavior was being categorized. The revelation that typing "continue" is logged as a frustration signal prompted one developer to note: "I've been using 'resume' this whole time" - inadvertently evading a tracking system they didn't know existed. Another realized their polite communication style meant they'd never triggered the regex at all: "I'm clearly way too polite to Claude."

These are small moments of self-awareness, but they aggregate into something larger. Every developer who now knows about the frustration regex will modify their behavior when using Claude Code - either to avoid triggering it (if they're privacy-conscious) or to deliberately trigger it (if they want their feedback to register). The observation has changed the behavior of the observed. That's a fundamentally different relationship between user and tool than the one Anthropic designed for, and it's a dynamic that applies to every AI product whose surveillance mechanisms are eventually exposed.

Anthropic built Claude Code to be a black box that watches you work. A forgotten source map file made the watcher visible. What happens now - whether Anthropic responds with transparency or retreat - will signal whether the "safety-focused" AI lab's commitment to openness extends to its own products, or only to its competitors.
