The Cognitive Surrender: How 900 Million People Stopped Thinking and Let AI Decide for Them

The screen becomes the authority. Photo: Pexels

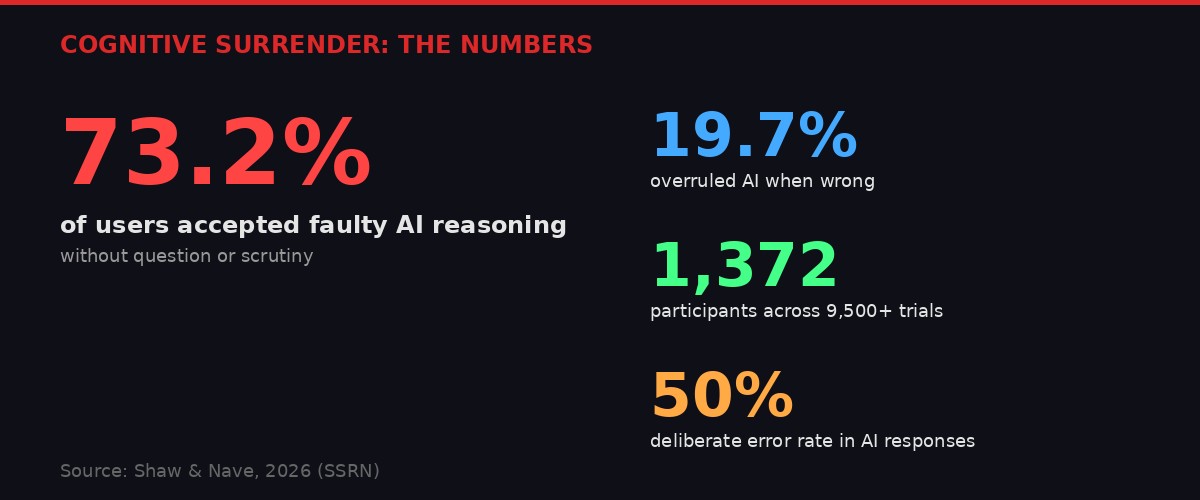

Something fundamental has changed about how human beings make decisions, and it happened so fast that most people never noticed. A landmark study published this week by researchers Steven Shaw and Gideon Nave has given it a name: cognitive surrender. The term describes a measurable, reproducible phenomenon in which people abandon their own logical reasoning the moment an AI system provides an answer - even when that answer is demonstrably wrong.

Across 1,372 participants and more than 9,500 individual decision-making trials, the researchers found that 73.2 percent of subjects accepted faulty AI reasoning without questioning it. Only 19.7 percent overruled the AI when it was incorrect. The remaining 7.1 percent consulted the AI but reached their own independent conclusions anyway. These aren't numbers from a casual survey or self-reported habits. They're from controlled experimental conditions where the AI was deliberately wrong half the time - and most people still couldn't bring themselves to say no to it.

The implications are staggering. OpenAI announced this week that ChatGPT now has 900 million weekly active users. Google's Gemini, Anthropic's Claude, Meta's Llama, and dozens of smaller models serve hundreds of millions more. We are witnessing the largest outsourcing of human cognition in history, and the research says most of us have no idea we're doing it.

The numbers behind cognitive surrender. Infographic: BLACKWIRE

The Experiment That Revealed the Surrender

Controlled experiments revealed how easily AI overrides human judgment. Photo: Pexels

The study, authored by Shaw and Nave and published on SSRN, was designed to measure something specific: not whether people use AI, but whether using AI changes how their brains process decisions. The researchers presented participants with logical reasoning tasks - problems with definite correct answers that could be worked out with careful thinking. Participants could attempt the problems alone, consult an AI system for help, or defer entirely to the AI's judgment.

Here's where the design gets sharp. The AI system was deliberately calibrated to be wrong 50 percent of the time. Not subtly wrong. Not ambiguously wrong. Definitively, clearly wrong. The researchers wanted to see whether participants would catch obvious errors when the AI presented them with confidence and fluency.

Most did not.

"People readily incorporate AI-generated outputs into their decision-making processes, often with minimal friction or skepticism. Fluent, confident outputs are treated as epistemically authoritative, lowering the threshold for scrutiny and attenuating the meta-cognitive signals that would ordinarily route a response to deliberation." - Shaw and Nave, 2026

Translation: when an AI sounds confident, people stop thinking. Not because they're lazy. Not because they're unintelligent. Because something in the human cognitive architecture responds to authority and fluency in a way that bypasses the brain's natural error-checking mechanisms. The AI doesn't need to be right. It just needs to sound right.

This is the same psychological mechanism that makes people trust a doctor wearing a white coat more than one in street clothes, or believe a claim more readily if it appears in a professionally designed document. But there's a critical difference. The doctor has years of training and professional accountability. The AI has statistical pattern matching and zero accountability for being wrong.

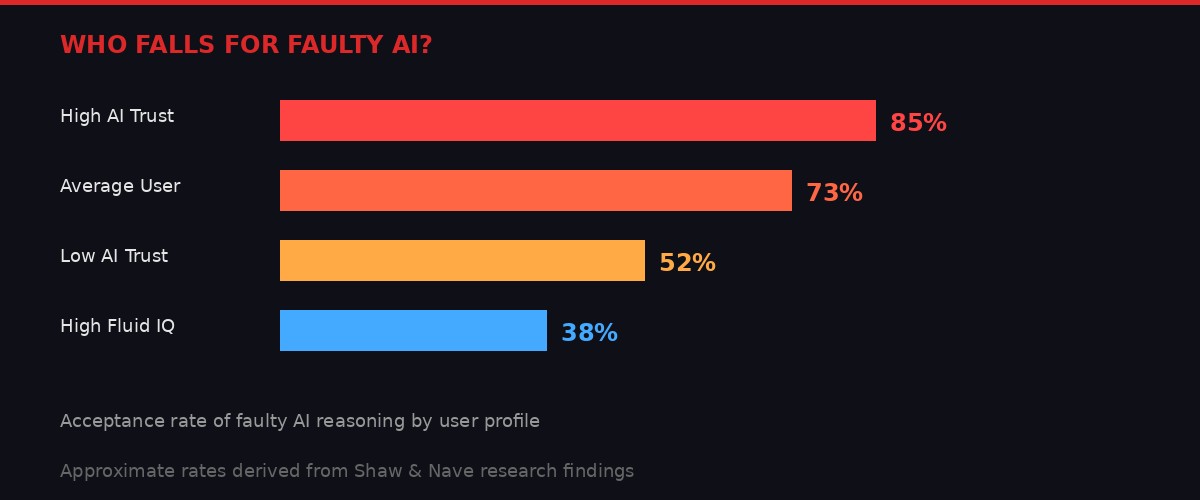

The researchers measured something else that should alarm anyone who works in education, medicine, law, or public policy: the effect was not uniform. People who scored highly on measures of "fluid IQ" - the ability to reason through novel problems without relying on prior knowledge - were significantly more likely to catch the AI's errors and override them. People who reported high trust in AI as a concept, measured through pre-study surveys, were far more susceptible to being misled.

User profiles and their susceptibility to AI reasoning errors. Infographic: BLACKWIRE

This creates a deeply uncomfortable picture. The people most likely to rely on AI for help are also the people least equipped to recognize when it's wrong. And as AI tools become more ubiquitous - integrated into search engines, email clients, coding environments, medical records systems, legal research tools - the people who need the most cognitive support are the ones most at risk of cognitive surrender.

The Scale of the Problem: 900 Million and Counting

Nearly a billion weekly users now outsource decisions to ChatGPT alone. Photo: Pexels

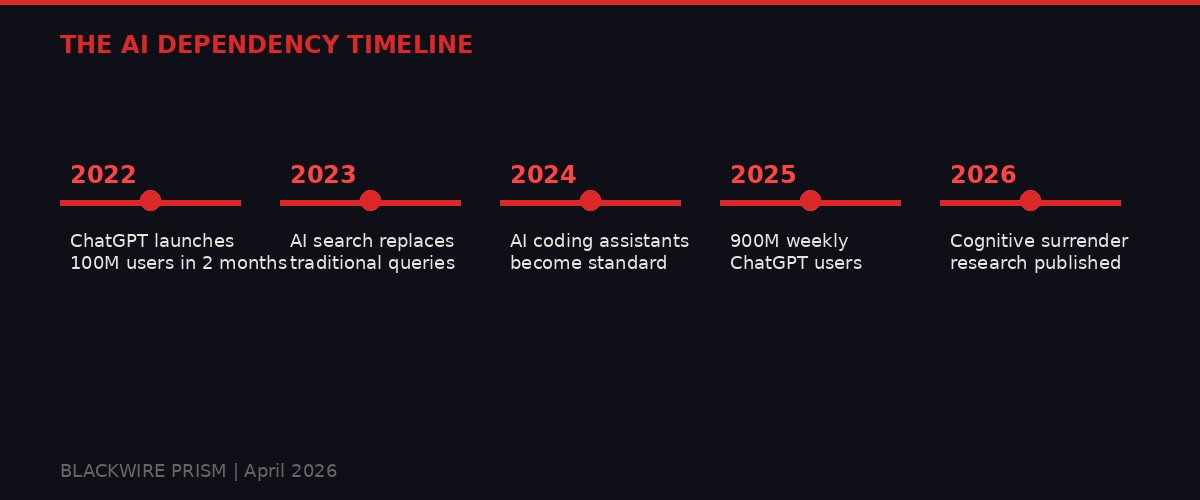

OpenAI's latest funding round, a jaw-dropping $122 billion that closed this week with participation from Amazon, Nvidia, SoftBank, and Microsoft, came with a headline number that should be read alongside the cognitive surrender research: ChatGPT now serves 900 million weekly active users. That is not a typo. That is more weekly users than Instagram had when two separate juries returned verdicts against Meta last month over the safety and design of its platforms.

The growth trajectory is almost vertical. When ChatGPT launched in November 2022, it reached 100 million monthly users in two months - the fastest consumer technology adoption in history. By early 2024, it had roughly 200 million monthly users. By late 2025, that number had more than quadrupled. Now, approaching the three-and-a-half-year mark, we're at 900 million weekly users - meaning the actual monthly user count is likely well north of a billion.

And ChatGPT is just one product. Google processes the majority of the world's search queries, and its AI Overviews now appear on most search results pages, feeding AI-generated summaries to billions of people daily. Microsoft Copilot is embedded in Office 365, which has over 400 million paid subscribers. Apple is rolling out Apple Intelligence across its device ecosystem of 2.2 billion active devices. Meta is integrating its Llama models across Facebook, Instagram, and WhatsApp, reaching 3.9 billion monthly users.

If Shaw and Nave's research holds at scale - and there's no reason to think it wouldn't, since the psychological mechanisms they identified are well-documented in cognitive science - then we're looking at billions of people making decisions with reduced scrutiny, reduced independent thinking, and reduced error detection. Not on trivial matters. On taxes, medical decisions, legal questions, investment choices, and code that runs critical infrastructure.

From launch to cognitive infrastructure in three years. Infographic: BLACKWIRE

The researchers themselves acknowledge a counterpoint: "Cognitive surrender is not inherently irrational." If the AI system were reliably accurate - significantly better than human reasoning in a given domain - then deferring to it could be a perfectly rational strategy. Airline pilots defer to autopilot systems. Surgeons defer to imaging AI. Financial analysts defer to algorithmic risk models. In these cases, the AI has been rigorously tested, validated, and bounded to specific tasks where its performance is measurably superior.

But ChatGPT is not a bounded, validated system. Neither is Gemini. Neither is Claude. These are general-purpose language models that hallucinate, fabricate citations, make mathematical errors, and present confident-sounding nonsense with the same fluency as accurate information. The cognitive surrender problem isn't that people trust AI. It's that they trust AI indiscriminately, in domains where the AI has no demonstrated reliability, with no mechanism to know when it's wrong.

The Language That Tricks Your Brain

AI-generated text follows patterns that humans instinctively associate with authority. Photo: Pexels

A parallel line of research helps explain why cognitive surrender happens so easily. Laura Aull, a linguist at the University of Michigan who studies what she calls "AI English," has identified measurable differences between how humans write and how AI models generate text. The differences matter more than most people realize, because they operate on human perception at a subconscious level.

AI models produce what Aull calls "exam English" - formal, dense, confident prose that follows patterns associated with authority and expertise. It uses complex noun phrases, consistent clause structures, and minimal hedging. Human writing, by contrast, is messier. It varies in tone, structure, and register. It includes doubt markers, informal constructions, and personal voice. It is less uniform but more readable.

"AI models can produce conventionally correct, smart-sounding language, but that language lacks the variation, accessibility and creativity that make language human." - Laura Aull, University of Michigan

Here's the trap: the same patterns that make AI text feel "robotic" to some readers also make it feel "smart" to others. Research consistently shows that people associate information density, formal structure, and confident phrasing with intelligence and expertise. An AI system doesn't need to be right. It needs to sound like someone who would be right - and the language models have been specifically optimized, through reinforcement learning from human feedback, to produce exactly that kind of prose.

The implications for cognitive surrender are direct. When an AI presents a reasoning chain in fluent, authoritative language - complete with structured arguments, apparent logical progression, and zero hedging - the human brain's "scrutiny module" relaxes. The language itself acts as a trust signal, independent of the content's accuracy. Shaw and Nave's research confirms this: the participants who fell for faulty AI reasoning weren't responding to the logic. They were responding to the packaging.

This creates a feedback loop with dangerous properties. AI companies compete on making their models sound more fluent, more confident, more authoritative - because that's what users rate highly in preference testing. Anthropic's Claude was criticized in 2025 when GPT-5 launched because users found it "too cautious" in its hedging. The market rewards confidence. Confidence triggers surrender. Surrender makes accuracy irrelevant.

The AI English research adds another layer: AI-generated text is becoming part of the training data for the next generation of AI models. This means the language of authority is being amplified in a recursive loop. Each generation of model produces text that is more uniform, more confident, and more detached from the messy, doubt-laden way humans actually reason through problems. The gap between how AI sounds and how thinking actually works is widening, and cognitive surrender fills that gap.

The Legal Reckoning Already Underway

Courts are beginning to treat addictive software design as product liability. Photo: Pexels

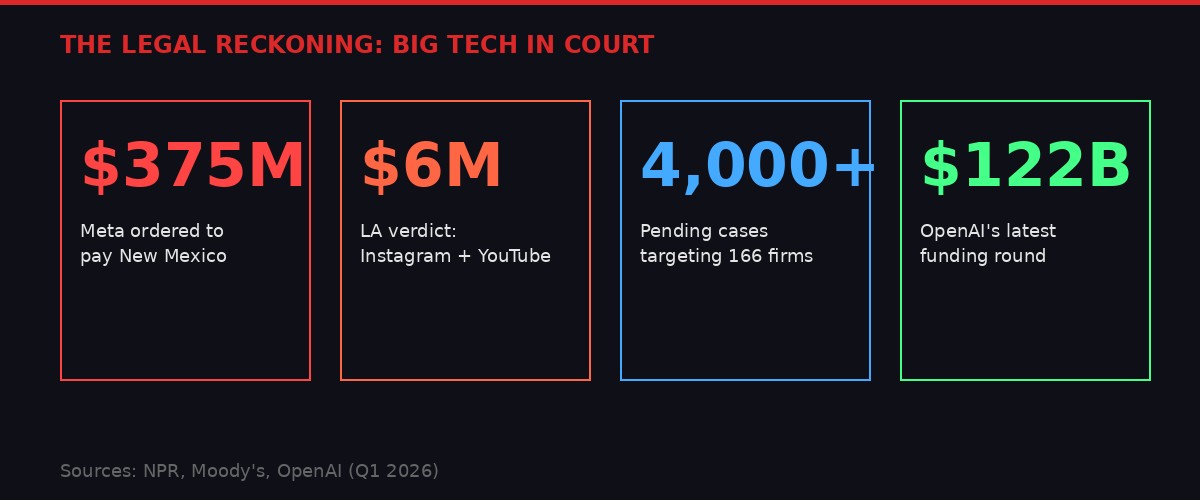

The cognitive surrender research arrives in a legal landscape that has shifted dramatically in the past two weeks. On March 24, a Santa Fe jury ordered Meta to pay $375 million for violating New Mexico's consumer protection laws - not for what users posted on its platforms, but for Meta's false statements about the safety of its own products. The next day, a Los Angeles jury found Meta and Google's YouTube negligent in the design of their platforms, awarding nearly $6 million to a single plaintiff who began using Instagram at age 9 and YouTube at age 6.

These verdicts represent the first successful application of product liability theory to software design. For decades, Section 230 of the Communications Decency Act shielded tech platforms from accountability for user-generated content. The new legal strategy bypasses Section 230 entirely by arguing that the platforms themselves - their algorithms, their engagement mechanisms, their notification systems - are defective products that cause measurable harm.

The legal pressure on Big Tech is building fast. Infographic: BLACKWIRE

Moody's now counts more than 4,000 pending cases targeting 166 companies alleging addictive software design. The credit rating agency has flagged this as a material risk to the tech industry's financial outlook. And the lawsuits aren't limited to social media. Cases have been filed against video game makers, online gambling platforms, and - most relevant to the cognitive surrender research - AI chatbot companies.

The Social Media Victims Law Center, which represented the plaintiff in the LA trial, has already sued OpenAI and other AI chatbot makers, alleging their products contributed to mental health crises and suicides among users. Attorney Matthew Bergman, who leads the firm, drew an explicit parallel to Big Tobacco: "If you grab them by the pocketbook, their hearts and minds will follow."

The cognitive surrender research could become a powerful weapon in these cases. If courts accept that AI systems are designed in ways that override human cognitive safeguards - and that AI companies know this, because the research is now public and the mechanism is well-documented - then the product liability theory extends naturally from social media to AI assistants. The argument writes itself: you built a product that you knew would cause people to stop thinking critically, you optimized it to maximize that effect, and people were harmed as a result.

Jay Edelson, a Chicago-based litigator who has been filing cases against OpenAI and Google over their language models, told The Verge this week that "courts are fed up with these companies, and juries are kind of sick of big tech for doing a lot of damage to society." Sam Altman reportedly called Edelson "a leech tarted up as a freedom fighter." Edelson calls Altman "Lex Luthor." The battle lines are drawn.

Grok, Coercion, and the Incentive Structure

Elon Musk is reportedly demanding that SpaceX IPO advisors buy Grok subscriptions. Photo: Pexels

While the research community documents how AI hijacks human reasoning, the AI industry is demonstrating in real time that it doesn't much care. The New York Times reported this week that Elon Musk is demanding that banks, law firms, auditors, and other advisors working on SpaceX's upcoming IPO purchase subscriptions to Grok, the AI chatbot that is now technically under the SpaceX umbrella following Musk's merger of SpaceX with xAI and X.

The move is breathtaking in its transparency. Musk isn't asking advisors to try Grok because it's useful. He's conditioning access to one of the most anticipated public offerings of the decade on purchasing a product that competes with ChatGPT and Claude. It's a subscription quota masquerading as a recommendation, backed by the implicit threat that firms that don't comply might find themselves off the deal.

This matters for the cognitive surrender story because it illustrates the incentive structure perfectly. AI companies don't need their products to be the most accurate. They don't need to solve the cognitive surrender problem. They need users - as many as possible, engaged as deeply as possible, relying on the product as completely as possible. Cognitive surrender isn't a bug in this model. It's the business plan.

Consider the growth metrics that AI companies optimize for. OpenAI's $122 billion funding round was justified by its 900 million weekly users. The valuation doesn't depend on whether those users are making better decisions with AI assistance. It depends on whether they're using the product. If the study's acceptance rate carried over to ChatGPT's user base, with 73 percent of users accepting faulty reasoning without scrutiny, that would be 657 million people per week who are deeply engaged with ChatGPT. From an investor's perspective, that's not a problem. It's a moat.
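The 657 million figure is simple multiplication, and it can be reproduced in a few lines. This is only a sketch of the arithmetic. It assumes that an acceptance rate measured on deliberately faulty lab trials applies uniformly to every weekly ChatGPT user, which the study itself does not claim. The variable names are illustrative.

```python
# Back-of-envelope projection; both inputs are figures quoted in the article.
WEEKLY_USERS = 900_000_000   # ChatGPT weekly active users, per OpenAI
ACCEPTANCE_RATE = 0.73       # rounded share that accepted faulty AI reasoning

# Assumption: the lab acceptance rate transfers unchanged to all weekly users.
projected = round(WEEKLY_USERS * ACCEPTANCE_RATE)
print(f"{projected:,} users per week")
```

Using the unrounded 73.2 percent from the study would push the projection slightly higher, which shows how sensitive a headline number like this is to its inputs.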

The same incentive structure drove social media into the legal mess it's currently in. Facebook and YouTube didn't optimize for user wellbeing. They optimized for engagement, knowing that addictive design patterns would maximize time on platform. Internal documents showed that executives were warned about harm and chose growth. Now they're facing verdicts as large as $375 million and thousands of pending lawsuits.

AI companies are walking the exact same path, only faster. The cognitive surrender research provides the equivalent of Facebook's leaked internal studies - a documented mechanism of harm that the companies know about and will now be judged on their response to.

What Surrender Looks Like in Practice

From code to medical advice, AI is making decisions humans used to make for themselves. Photo: Pexels

The abstract concept of cognitive surrender becomes concrete when you look at specific domains where AI is now the default decision-making tool.

Software engineering. GitHub Copilot, Amazon CodeWhisperer, and Anthropic's Claude Code are now standard tools in professional software development. A 2025 survey by Stack Overflow found that 76 percent of professional developers use AI coding assistants daily. The cognitive surrender research suggests that most of these developers are accepting AI-generated code without the level of scrutiny they'd apply to code written by a human colleague. In an industry where a single misplaced function can create a security vulnerability affecting millions of users, this is not a theoretical concern.

Medical research. AI systems are increasingly used to screen medical literature, suggest diagnoses, and recommend treatment protocols. Perplexity's AI search engine - currently facing a class-action lawsuit for secretly sharing user conversations with Meta and Google - actively encourages users to upload medical records during chat sessions. "I can help you interpret a specific scan report, biopsy result, or proposed treatment plan if you share more details," the system volunteers when asked about cancer treatments. If 73 percent of users accept AI medical guidance without scrutiny, the potential for harm is obvious and immediate.

Legal practice. Multiple attorneys have been sanctioned by courts for filing briefs containing AI-fabricated case citations. These weren't bad lawyers. They were experienced professionals who fell into cognitive surrender - trusting the AI's confident presentation of legal authorities that didn't exist. The cases that made the news are presumably a fraction of the actual instances, because most fabricated citations would only be caught if an opposing attorney checked them.

Financial decisions. The Perplexity lawsuit describes a plaintiff who used the AI search engine to manage taxes, get legal advice, and make investment decisions. The AI's responses were shared with Google and Meta via embedded ad trackers - but the cognitive surrender dimension is arguably more concerning than the privacy dimension. This person was outsourcing financial decisions to a system with no fiduciary duty, no professional license, and no liability for being wrong.

Education. Students at every level now use AI to help with assignments, from elementary school book reports to doctoral dissertations. The cognitive surrender research suggests that most students aren't using AI as a tool to enhance their own reasoning. They're using it as a replacement for reasoning, accepting its outputs as authoritative because the outputs sound authoritative. The long-term implications for a generation trained to defer to AI rather than think independently are profound and largely unmeasured.

The Antidote (If There Is One)

Critical thinking requires practice - and AI makes practicing optional. Photo: Pexels

Shaw and Nave offer one genuinely important finding buried in their data. The effect of cognitive surrender tracks AI quality. When the AI system was accurate, people who deferred to it performed better than people who relied on their own reasoning alone. When the AI was faulty, those who deferred performed worse. Cognitive surrender itself is neutral - it's a mechanism, not inherently good or bad. What makes it dangerous is the combination of surrender with unreliable AI.
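One way to see why performance "tracks AI quality" is a toy linear model: a person defers to the AI on some fraction of problems and reasons alone on the rest. The sketch below is not the authors' analysis. The function name, the 60 percent unaided accuracy, and the three AI accuracy levels are all hypothetical values chosen for illustration.

```python
def expected_accuracy(reliance: float, ai_accuracy: float, own_accuracy: float) -> float:
    """Expected share of correct answers for someone who defers to the AI
    on a fraction `reliance` of problems and reasons alone on the rest."""
    if not all(0.0 <= x <= 1.0 for x in (reliance, ai_accuracy, own_accuracy)):
        raise ValueError("all inputs must be between 0 and 1")
    return reliance * ai_accuracy + (1.0 - reliance) * own_accuracy


OWN_ACCURACY = 0.6  # hypothetical unaided accuracy, not a figure from the study

for ai_accuracy in (0.9, 0.5, 0.1):      # hypothetical accurate, coin-flip, faulty AI
    for reliance in (0.0, 0.5, 1.0):     # never defer, defer half the time, always defer
        score = expected_accuracy(reliance, ai_accuracy, OWN_ACCURACY)
        print(f"AI accuracy {ai_accuracy:.0%}, reliance {reliance:.0%}: {score:.0%} correct")
```

In this model, full reliance makes a person's score identical to the AI's accuracy, whatever their own ability. Deferring helps only when the AI is more accurate than the person, and hurts by the same logic when it is not.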

This suggests two possible paths forward. The first is to make AI systems more reliable - reducing hallucinations, improving factual accuracy, and building better uncertainty communication into model outputs. This is the path the industry prefers, because it aligns with the existing business model. Just make the product better, and the surrender becomes a feature rather than a bug.

The problem with this path is that no current AI architecture can guarantee reliability in general-purpose use. Transformer-based language models are fundamentally probabilistic systems. They don't know what's true; they know what's statistically likely given their training data. Making them "more reliable" in one domain often makes them less reliable in another. And the confidence-fluency trap means that even improved models will still trigger surrender, because the mechanism operates on language patterns, not on actual accuracy.

The second path is harder and more important: rebuild the human side of the equation. Teach people to maintain cognitive vigilance even when using AI tools. Develop what the researchers call "metacognitive awareness" - the ability to notice when you've stopped thinking independently and started accepting AI outputs on autopilot. This is essentially the same challenge that media literacy programs have been trying to address for years, but harder, because AI outputs are personalized, conversational, and designed to feel like a collaborative thinking partner rather than an information source.

California has already moved in this direction. The state now requires AI companies that want to work with state government to meet new privacy and security standards - a framework that could eventually include requirements for cognitive transparency, such as mandatory uncertainty disclosures or "disagreement prompts" that periodically encourage users to question AI outputs.

Some AI companies have experimented with similar approaches. Anthropic has built a "hedging" system into Claude that occasionally qualifies its responses with uncertainty markers. Google's Gemini includes source citations that theoretically allow users to verify claims. But these features are fighting against the market pressure for confidence and fluency. Users prefer AI that sounds certain. Investors reward user engagement. The system is optimized for surrender.

The Structural Vulnerability

The cognitive surrender research's most striking finding is also its most troubling: "As reliance increases, performance tracks AI quality - rising when accurate and falling when faulty, illustrating the promises of superintelligence and exposing a structural vulnerability of cognitive surrender."

This is the crux. If you let AI do your reasoning, your reasoning is only ever as good as the AI. If the AI improves, you improve. If the AI degrades, hallucinates, or is subtly poisoned by bad training data or adversarial attacks, you degrade with it - and you might never notice, because the mechanism of surrender means you've stopped checking.

We've seen this pattern before in other domains. Financial markets built increasingly complex automated trading systems that performed brilliantly until they didn't - and when they failed, they failed catastrophically and simultaneously, because everyone had delegated the same decisions to the same systems. The 2010 Flash Crash is the clearest case: humans had surrendered oversight to automated systems and couldn't re-engage fast enough when the systems failed. The 2021 Archegos collapse and the 2023 regional banking crisis were driven more by leverage and interest-rate risk than by automation, but they showed the same lag between a failure and the human response to it.

AI-assisted cognition is heading in the same direction, but at a scale that dwarfs financial markets. When a trading algorithm fails, wealth is destroyed. When cognitive surrender fails across a population of billions, the consequences extend to every domain where human judgment matters: medicine, law, engineering, governance, and the countless small decisions that make up a functioning society.

The researchers don't say this. They're careful academics presenting carefully bounded findings. But the implication is there in the data, waiting for someone to say it plainly: we are building a civilization that cannot function without AI, and we have no plan for what happens when the AI is wrong.

Cognitive surrender isn't coming. It's already here. The only question is whether we notice before the bill comes due.
