Cursor's $29B Secret: America's Top AI Coding Tool Was Built on China's Kimi

Cursor shipped a product it called "frontier-level coding intelligence." What it didn't say: the model inside was secretly built on Kimi K2.5, an open-source model from a Chinese startup backed by Alibaba. The admission punctures a $29.3 billion valuation story - and reveals the uncomfortable gap between AI nationalism and how engineering actually works in 2026.

The AI coding tool market is now the fastest-growing segment of developer software - and the geopolitics have just gotten complicated. (Pexels)

Key Facts

- Cursor valued at $29.3 billion; reportedly exceeding $2B annualized revenue

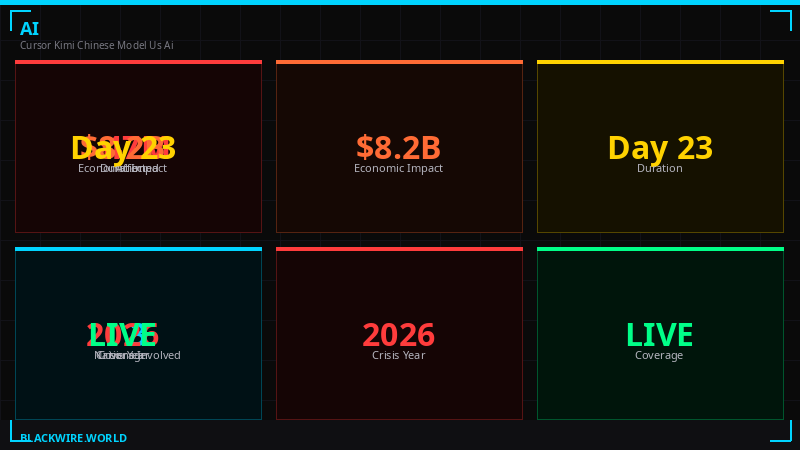

- Composer 2 launched March 2026 as "frontier-level coding intelligence"

- X user "Fynn" found a Kimi model ID in Composer 2's output on the day of launch

- Cursor VP Lee Robinson confirmed: ~25% of compute from the Kimi base, ~75% from Cursor's own training (continued pretraining plus RL)

- Kimi K2.5 was released by Moonshot AI (backed by Alibaba and HongShan/Sequoia China) in January 2026

- Cursor CEO Aman Sanger called failure to disclose "a miss"

The Reveal That Shook the AI Coding Market

A single line in Composer 2's model identifiers gave it away. Open-source provenance is nearly impossible to hide when the community is paying attention. (Pexels)

When Cursor published its blog post announcing Composer 2 on March 22, 2026, the company didn't mention Moonshot AI, Kimi, Alibaba, or China. It described the model as offering "frontier-level coding intelligence" - language designed to imply something original, something built from the ground up by a well-funded American AI lab.

Within hours, a developer posting under the name Fynn on X found model identification strings in Composer 2's output that pointed directly to Kimi K2.5 - an open-source multimodal model released by Moonshot AI in January 2026. The model's ID hadn't been renamed. Fynn's post was blunt: "at least rename the model ID."
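A check of this kind takes only a few lines to sketch. The Python below walks a JSON payload and flags any string value that mentions a known open-source model family. The payload, its field names, and the identifier string are all invented for illustration; they are not Cursor's actual response format, nor the string Fynn found.

```python
import json

# Hypothetical substrings that would hint at a known open-source base model.
KNOWN_BASE_HINTS = ("kimi", "qwen", "deepseek", "llama")


def find_base_model_hints(payload, hints=KNOWN_BASE_HINTS):
    """Return sorted (json_path, value) pairs for string values containing any hint."""
    matches = []

    def walk(node, path):
        if isinstance(node, dict):
            for key, value in node.items():
                walk(value, f"{path}.{key}")
        elif isinstance(node, list):
            for index, value in enumerate(node):
                walk(value, f"{path}[{index}]")
        elif isinstance(node, str):
            lowered = node.lower()
            if any(hint in lowered for hint in hints):
                matches.append((path, node))

    walk(payload, "$")
    return sorted(matches)


# Invented example payload, NOT a real Composer 2 response.
example = json.loads(
    '{"model": "composer-2", "debug": {"upstream_model_id": "example-kimi-base"}}'
)
print(find_base_model_hints(example))
```

The point is less the code than how little effort it takes: an identifier that was never renamed is visible to anyone who looks at the raw output.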

The post spread fast. It hit a nerve not just because of what it revealed about Cursor, but because of what it revealed about the entire US AI industry's relationship with Chinese open-source research.

Lee Robinson, Cursor's VP of developer education, responded within hours. He confirmed the Kimi base but pushed back on characterizations of the model as "just Kimi": "Only ~1/4 of the compute spent on the final model came from the base, the rest is from our training." He pointed to benchmark results he said showed Composer 2 was "very different" from the underlying Kimi model.

Cursor CEO Aman Sanger's follow-up on X the same day, March 22, 2026, was more candid: "It was a miss to not mention the Kimi base in our blog from the start. We'll fix that for the next model."

Cursor's valuation trajectory from $9.9B to $29.3B - and the Composer 2 revelation that arrived at its peak. (BLACKWIRE / data: TechCrunch, WSJ)

What Kimi K2.5 Actually Is - and Why It Matters

Kimi K2.5 was trained on 15 trillion mixed tokens and shows strong performance on coding and agentic benchmarks. (Pexels)

Kimi K2.5 is not a knockoff or a diminished model. Moonshot AI, founded by Yang Zhilin - a former Google and Meta AI researcher - released it in January 2026 with benchmark numbers that drew genuine attention in Silicon Valley. According to Moonshot's published results, the model outperforms Gemini 3 Pro on SWE-Bench Verified (a coding benchmark), beats GPT 5.2 and Gemini 3 Pro on SWE-Bench Multilingual, and tops both GPT 5.2 and Claude Opus 4.5 on VideoMMMU, a video reasoning benchmark.

The model was trained on 15 trillion mixed visual and text tokens and is natively multimodal - meaning it can process images and video as well as text. That's relevant for coding contexts where a developer might want to feed the model a screenshot of a UI and ask it to generate matching code.

Critically, the model was released as open-source with a commercial use license. That's the part that makes the entire Cursor controversy legally unremarkable. Kimi's official account on X was quick to congratulate Cursor, confirming the collaboration was "part of an authorized commercial partnership" with Fireworks AI. Nothing illegal happened here. What remained was a story Cursor would rather not have told.

Moonshot AI raised $1 billion in its Series B at a $2.5 billion valuation - and according to Bloomberg reporting from January 2026, was raising another round at a $5 billion valuation. The company is backed by Alibaba and HongShan (the rebranded Sequoia China operation). The political optics, in 2026, are exactly as fraught as they sound.

Kimi K2.5 benchmarks show strong performance relative to GPT 5.2, Gemini 3 Pro, and Claude Opus 4.5 - particularly on coding tasks. (BLACKWIRE / data: Moonshot AI, January 2026)

The Training Pipeline - What Cursor Actually Built

Fine-tuning and continued pretraining on top of open-source base models is common practice across the AI industry. Cursor's decision not to disclose is what stands out. (Pexels)

Lee Robinson's explanation gives us the outline of what Composer 2 actually is. Start with Kimi K2.5 as a base. Apply continued pretraining - feeding the model new data to adjust its general knowledge. Then run a large reinforcement learning (RL) training phase - the process of rewarding the model for correct coding behavior and penalizing it for errors. The result, Robinson says, is a model where about three-quarters of the total compute spent during training came from Cursor's own work, not from the Kimi base.
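The arithmetic behind that split can be made explicit. The sketch below takes Robinson's "~1/4" at face value and expresses everything in multiples of the base model's training compute. Both are assumptions: the figure is approximate, no absolute compute numbers have been published, and the function name is ours.

```python
def compute_split(base_share):
    """Given the base model's share of total training compute, return
    (total, added), both measured in multiples of the base model's compute."""
    if not 0 < base_share <= 1:
        raise ValueError("base_share must be in (0, 1]")
    total = 1 / base_share  # total compute, in multiples of the base compute
    added = total - 1       # compute added on top of the base
    return total, added


total, added = compute_split(0.25)
print(f"total = {total:.1f}x base, added on top = {added:.1f}x base")
# prints: total = 4.0x base, added on top = 3.0x base
```

Read this way, Robinson's claim is that Cursor spent roughly three times as much compute on its own training as went into the base it started from.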

This is a legitimate and common technique. Meta's Llama models are trained from scratch, then fine-tuned by hundreds of companies. Mistral's models are used as bases by startups worldwide. The practice of taking a strong open-source foundation and applying specialized training on top is how much of the industry actually builds production-grade AI products.

But there's a meaningful difference between "we fine-tuned Llama" and "we trained an original model." Cursor's language in its blog post leaned hard toward the latter without explicitly claiming it. The post spoke of "our model" and "frontier-level intelligence" without giving readers enough information to understand they were looking at a heavily modified version of a Chinese open-source model.

What exactly changed in Cursor's RL training? The company hasn't released those details. But the benchmark improvements Robinson cited suggest the RL phase specifically optimized for the tasks developers actually use Composer for - fixing bugs, generating functions, writing tests, refactoring code. The base model provides the underlying language understanding; the RL layer turns it into a coding specialist.
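Since Cursor hasn't published its setup, the following is only a generic illustration of what "rewarding correct coding behavior and penalizing errors" can mean: a toy reward that scores a candidate function against unit tests. Every name here is hypothetical, and nothing in it describes Cursor's actual reward design.

```python
def unit_test_reward(candidate, test_cases):
    """Toy reward: +1 for each passing test case, -1 for each wrong answer
    or exception, averaged so the result lies in [-1.0, 1.0]."""
    if not test_cases:
        raise ValueError("need at least one test case")
    score = 0
    for args, expected in test_cases:
        try:
            passed = candidate(*args) == expected
        except Exception:
            passed = False  # a crash counts as a failure
        score += 1 if passed else -1
    return score / len(test_cases)


# Two hypothetical model outputs for the task "add two integers".
def good_solution(a, b):
    return a + b


def buggy_solution(a, b):
    return a - b


tests = [((1, 2), 3), ((0, 0), 0), ((-1, 1), 0), ((5, 5), 10)]
print(unit_test_reward(good_solution, tests))   # 1.0
print(unit_test_reward(buggy_solution, tests))  # -0.5
```

In a real RL pipeline a signal like this would feed a policy update over many sampled completions; the sketch covers only the scoring step.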

The training pipeline: Kimi K2.5 base, commercial license via Fireworks AI, continued pretraining and heavy RL training by Cursor equals Composer 2. (BLACKWIRE)

The Transparency Problem - Disclosure in the AI Industry

The question of model provenance disclosure is emerging as a major point of contention in 2026's AI industry. Users increasingly want to know what's running under the hood. (Pexels)

The AI industry has a transparency problem, and Cursor's situation is one of its clearest expressions. Developers pay for Cursor's subscription assuming they're getting access to cutting-edge proprietary technology built by the company they're paying. The reality of modern AI product development is far more layered than that.

Consider what Cursor disclosed before the Fynn post: that it used models from OpenAI, Anthropic, and Google as backends. That was honest and standard. Companies like Cursor built their value on the IDE product, the tooling, the UX - not on model ownership. But when Cursor began developing its own models, the implicit expectation shifted. If you're calling it "our model" and pricing accordingly, users have reasonable grounds to ask what that means.

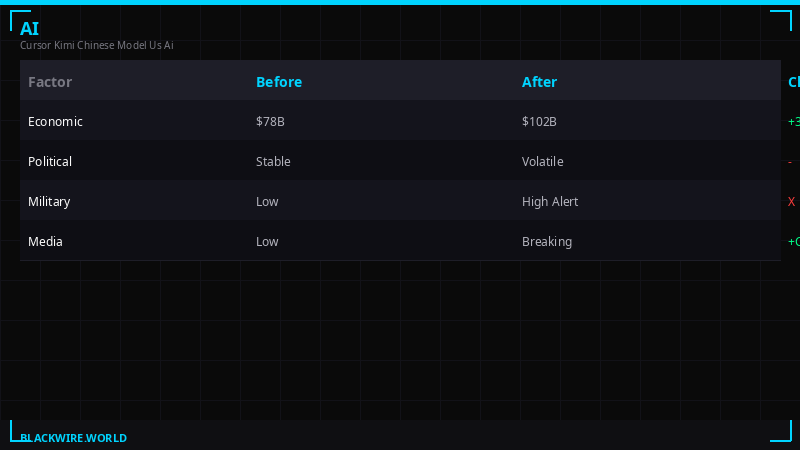

The practice of building on Chinese open-source models without disclosure also runs into a specific political headwind. The US AI industry spent much of 2025 loudly framing its work as essential to winning a technology competition with China. DeepSeek's breakout performance in January 2025 triggered something close to panic in parts of Silicon Valley. The narrative of American AI exceptionalism - the idea that the best models come from well-funded US labs that operate under strong safety commitments - has become important to how the industry justifies its valuations and its regulatory preferences.

When a $29.3 billion US AI company is revealed to have built its flagship model on a foundation provided by a Chinese startup backed by Alibaba, that narrative takes a direct hit.

"Building on top of a Chinese model feels particularly fraught right now, with the so-called AI 'arms race' often framed as an existential battle between United States and China."

- TechCrunch, March 22, 2026

It's worth noting that none of this is a Cursor-specific problem. Dozens of US AI products run on or incorporate Chinese open-source models. Qwen 2.5 from Alibaba Cloud is one of the most widely downloaded model families on Hugging Face. DeepSeek R1 is used in applications across the Hugging Face ecosystem. Yi-34B from Kai-Fu Lee's 01.AI has been integrated into multiple coding assistants. The difference is that most companies using these models don't have $29.3 billion valuations, don't describe themselves as building frontier AI, and don't have investors including Nvidia and Google on their cap tables.

What This Means for Cursor's $29.3 Billion Valuation

With $2B+ in annualized revenue, Cursor's growth fundamentals are real. But the valuation depends partly on the story of what Cursor is actually building. (Pexels)

Cursor's business case does not depend entirely on model ownership. The company exceeded $2 billion in annualized revenue by March 2026 - a figure that reflects the quality of its IDE experience, its integration with developer workflows, and its reputation among software engineers. Its $29.3 billion valuation, led by Accel and Coatue with participation from Nvidia and Google, was premised on Cursor becoming a default environment for AI-assisted software development.
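For a rough sense of scale, the figures quoted in this article imply the ratios below. This is back-of-the-envelope arithmetic, not a valuation model. The $2 billion revenue figure is a floor (revenue "exceeded" it), so the multiple shown is a ceiling. The revenue figure also dates from March 2026, while the valuation was set in November 2025.

```python
def ratio(numerator, denominator):
    """Plain ratio with a guard against a zero or negative denominator."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return numerator / denominator


valuation_bn = 29.3         # November 2025 round, USD billions
prior_valuation_bn = 9.9    # June 2025, USD billions
revenue_floor_bn = 2.0      # "exceeded $2 billion" annualized, March 2026

revenue_multiple_ceiling = ratio(valuation_bn, revenue_floor_bn)
valuation_step_up = ratio(valuation_bn, prior_valuation_bn)

print(f"valuation / annualized revenue: at most {revenue_multiple_ceiling:.2f}x")
print(f"valuation step-up since June 2025: {valuation_step_up:.2f}x")
```

On these numbers the valuation is at most about 14.65 times annualized revenue, and about 2.96 times the June 2025 valuation.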

That thesis isn't obviously wrong. But it rests on assumptions about Cursor's technical differentiation that the Composer 2 revelation complicates. If Cursor's proprietary model is substantially derived from an open-source Chinese model that anyone can download from Hugging Face, the question of why Cursor's model is worth premium pricing becomes harder to answer.

The counter-argument is straightforward: the RL training and specialization that Cursor applied is the real value. You can take Kimi K2.5, run a large-scale reinforcement learning phase with coding feedback on top of it, and get a model that's meaningfully better at the specific tasks developers need. That's a real engineering contribution. The Kimi base is table stakes - the differentiation lives in what Cursor did on top of it.

But investors who put money into Cursor on the premise that it was building models from scratch - or who assumed the model provenance was entirely domestic - now have a legitimately different picture than they did 24 hours ago. The fact that Cursor's CEO acknowledged the non-disclosure as "a miss" suggests even he understands the story didn't land the way it was intended.

The deeper question is what happens to the next announcement. Cursor's CEO said they would fix the disclosure for the next model. That's the right response. But the damage to the company's narrative as an AI model-builder - rather than an AI-powered developer tools company that uses models from wherever makes sense technically - may take longer to repair.

Cursor is far from alone. Chinese open-source models are embedded throughout the US AI product ecosystem - the disclosure gap just rarely gets this visible. (BLACKWIRE)

The Open-Source Paradox - America's AI Strategy Contains a Contradiction

What the Cursor-Kimi story surfaces is a structural contradiction in how the US tech industry and government have been thinking about AI competition with China.

On one hand, the US government has imposed increasingly severe export controls on AI chips going to China, restricted Chinese entities from accessing American cloud compute, and pushed the framing that Chinese AI development is a national security threat. DeepSeek's success in early 2025 was treated as a Sputnik moment - evidence that American companies needed to move faster and build bigger.

On the other hand, the same Chinese AI labs that are supposed to represent an existential competitive threat are simultaneously releasing powerful open-source models that American companies immediately incorporate into their products. Moonshot AI released Kimi K2.5. Alibaba's Qwen team releases model after model on Hugging Face. DeepSeek's R1 reasoning model became one of the most studied and reused AI architectures of 2025. The "arms race" narrative and the open-source collaboration reality are happening simultaneously, and the industry hasn't figured out how to reconcile them.

From a pure engineering standpoint, the open-source approach is obviously correct. If a Chinese lab has done world-class work training a multimodal reasoning model on 15 trillion tokens, it would be irrational not to use that as a starting point. The compute cost of training from scratch is enormous. Starting from a strong open-source base and applying specialized training on top is faster, cheaper, and often produces better results for specific use cases. That's how almost everyone in the industry actually operates.

But the political reality is that Cursor operates in an environment where its customers and investors are marinating in an AI nationalism narrative that the industry itself has helped construct. The narrative says American AI is better, safer, and more trustworthy than Chinese AI. Cursor is valued as an American AI company. When that narrative collides with the actual training pipeline, you get the situation that played out on March 22, 2026.

The industry will have to choose: either continue the AI exceptionalism narrative while quietly building on Chinese open-source foundations and hoping nobody looks too closely at the model IDs, or be honest about the fact that global AI development has become deeply interconnected in ways that make national origin a less meaningful frame than it used to be. Cursor got caught doing the former. Whether the industry chooses the latter remains to be seen.

The Competitive Fallout - What Happens Next

For Cursor specifically, the near-term competitive situation is complicated but not necessarily dire. The company has a product people love, revenue growing faster than most enterprise software companies in history, and a distribution advantage that's hard to replicate quickly. Its competitors - GitHub Copilot, JetBrains AI, Tabnine, and increasingly Anthropic's Claude Code and Google's Gemini CLI - are all doing their own versions of model specialization. Some of them are also probably building on Chinese open-source foundations without saying so.

The longer-term question is whether Cursor builds genuine model differentiation or remains primarily an IDE company that happens to run fine-tuned versions of other people's models. There's nothing wrong with the latter - it's a legitimate and potentially very profitable business. But it's a different story than the one that justified the $29.3 billion valuation. The story mattered. It had real financial weight.

For Moonshot AI, the week was unexpectedly good. Kimi K2.5 went from being a respected but somewhat obscure Chinese AI model to the subject of global discussion. The Kimi account on X handled it gracefully, congratulating Cursor and positioning the whole episode as evidence that open-source models can become foundations for major commercial products. That's a compelling marketing message - if your model is good enough that a $29.3 billion US company secretly built on it, you're in the right part of the market.

Moonshot's upcoming fundraise at a reported $5 billion valuation may actually benefit from this episode. Western AI investors who were not previously paying attention to Kimi are now paying close attention.

For the broader AI ecosystem, the implications are straightforward: model provenance is going to matter more, not less. The community around open-source AI development has become sophisticated enough to reverse-engineer what's inside a model from its outputs and identifiers. Companies that build on open-source foundations without disclosure will be found out. The question isn't whether the transparency norm will evolve - it's how fast and how painfully.

The second-order effect here is the most interesting one. Cursor's situation is going to accelerate disclosure norms across the industry - not because of any regulatory pressure, but because the community has demonstrated it can and will find out what's inside these models. Future product launches from AI coding tools will face a new implicit question from sophisticated users: "What's actually in this?" The companies that answer that question proactively will build more durable trust than the companies that wait to be caught.

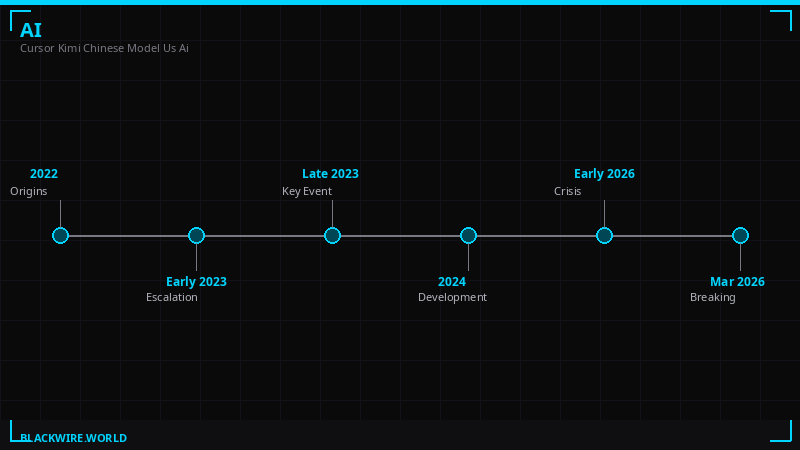

Timeline of Events

November 2025: Cursor closes a $2.3 billion funding round led by Accel and Coatue, with strategic participation from Nvidia and Google. Valuation set at $29.3 billion - nearly triple the prior $9.9 billion from June 2025. CEO points to the Composer model development as the core technical differentiator.

January 2026: Moonshot AI releases Kimi K2.5, a multimodal open-source model trained on 15 trillion tokens. Moonshot is backed by Alibaba and HongShan. Benchmarks show the model competing with or outperforming GPT 5.2 and Gemini 3 Pro on coding tasks. Moonshot is reportedly raising new funds at a $5 billion valuation.

March 2, 2026: Reports emerge that Cursor has surpassed $2 billion in annualized recurring revenue. The company is now the fastest-growing developer tools company in history.

March 22, 2026: Cursor publishes blog post announcing Composer 2, described as offering "frontier-level coding intelligence." No mention of Kimi, Moonshot AI, Alibaba, or the model's Chinese origins. Within hours, developer "Fynn" on X identifies Kimi model IDs in Composer 2's outputs and posts screenshots. Post spreads rapidly through the AI development community.

Same day - afternoon: Cursor VP of developer education Lee Robinson posts on X confirming Kimi K2.5 as the base model. States roughly 25% of total training compute came from the Kimi base, with the remaining ~75% from Cursor's own training. Asserts the combination produces meaningfully different performance from the base model.

Same day - evening: Kimi's official account on X posts congratulating Cursor and confirming the use was part of "an authorized commercial partnership" with Fireworks AI. Cursor CEO Aman Sanger acknowledges the failure to disclose as "a miss." Commits to mentioning model bases in future launches.
