The Enshittification of AI: How ChatGPT Ads, Copilot PR Spam, and DNS Exploits Are Eroding the Tools You Depend On

The tools developers rely on daily are being quietly reshaped by forces that have nothing to do with making software better. Photo: Pexels

Something broke in Q1 2026. Not a server, not a model, not a training run. Something more fundamental: trust.

In the span of a single week at the end of March, three separate incidents exposed a pattern that anyone paying attention should have seen coming. OpenAI started stuffing ads into the free version of ChatGPT. GitHub's Copilot injected promotional "tips" into pull requests written by human developers. And security researchers revealed that ChatGPT's supposedly sandboxed code execution environment could leak user data through DNS queries, a flaw that sat open for an unknown period before being patched.

Each story alone would be a footnote. Together, they form something larger: the beginning of AI's enshittification era. The term, coined by writer Cory Doctorow, describes the lifecycle of digital platforms - first they're good to users, then they abuse users to benefit business customers, then they abuse those business customers to claw back the value for themselves and their shareholders. AI tools are now entering phase two at record speed.

This is the story of how the tools you built your workflow around started working against you. And why the worst is almost certainly still ahead.

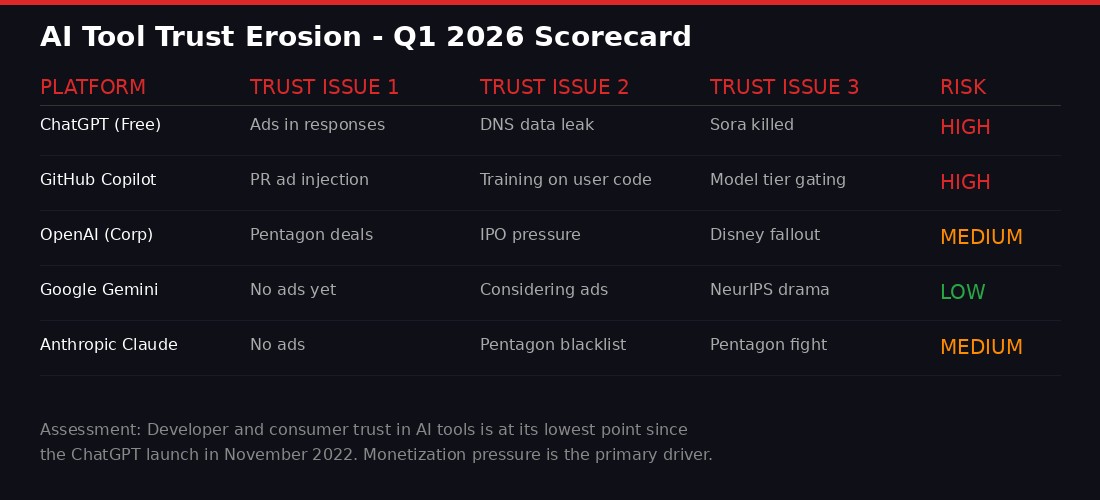

A BLACKWIRE assessment of trust erosion across major AI platforms in Q1 2026.

I. ChatGPT Gets Ads: Sam Altman's "Last Resort" Arrives on Schedule

The advertising machine comes for AI. Photo: Pexels

In 2024, Sam Altman sat on a stage at Harvard Business School and said the quiet part aloud. "I hate ads," he told the audience. Mixing advertising with AI was "sort of uniquely unsettling," he said, because it raised questions about who might be influencing a chatbot's answers. He called ads "a last resort" for OpenAI's business model.

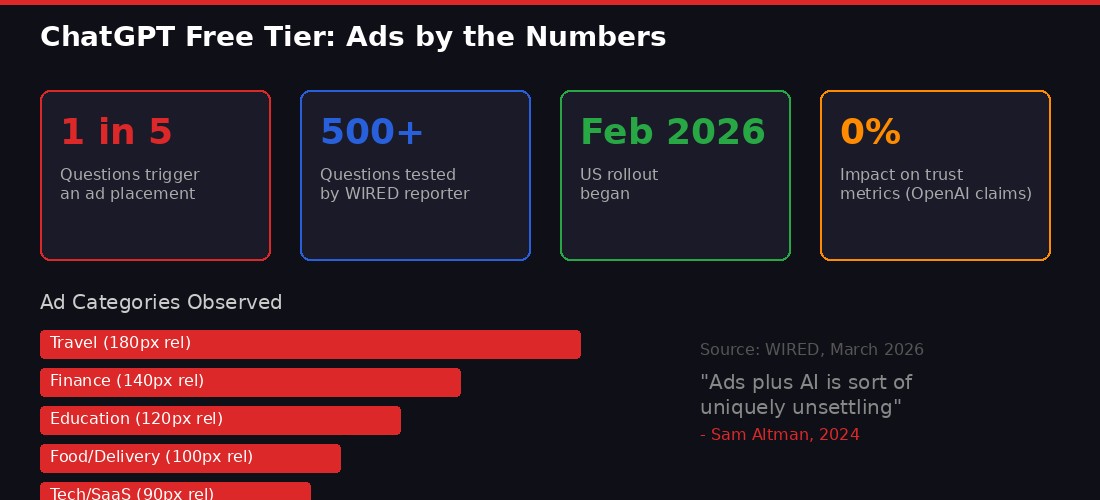

Two years later, the last resort has arrived. OpenAI began testing ads in the free tier of ChatGPT in February 2026, rolling them out to US users through March. WIRED reporter Reece Rogers asked ChatGPT 500 questions to document the experience, and the results paint a picture of a product in rapid commercial transformation.

Roughly one out of every five questions in a new conversation thread now triggers an advertisement at the bottom of ChatGPT's response. The ads appear as branded buttons with website links, tailored to the topic of the user's query. Ask about the gig economy, you get an Uber recruitment ad. Ask about universities, you get an MBA program promotion. Ask about a trip to Palm Springs, and Booking.com materializes to sell you hotel rooms.

ChatGPT's advertising rollout in numbers. Sources: WIRED, OpenAI corporate blog.

The ad categories Rogers documented span the entire consumer economy: dog food, printers, hotel reservations, productivity software, movie tickets, food delivery apps, fashionable ties, streaming services, corporate credit cards, apartment furniture, cruise vacations, AI coding tools, freelance editors, skin-care articles, business internet plans, handmade gifts, grocery stores, and basketball tickets. The breadth suggests OpenAI has built - or contracted - a sophisticated ad-matching system that operates across virtually every vertical.

More telling than the breadth is the targeting behavior. When Rogers asked about a specific brand like DoorDash or Netflix, the ad shown was often for a direct competitor. Marketing professor Olivier Toubia of Columbia Business School calls this "poaching" - a technique borrowed directly from Google Search advertising. "That definitely has been a key engine behind the growth of online advertising," Toubia told WIRED. "It seems like it's going to be the case also with LLM advertising."

OpenAI claims the ads don't influence ChatGPT's actual responses. The company says conversation content isn't shared with advertisers. But the ads are informed by the topic of your question, your past chats, and whatever ChatGPT stores in its memory about you. For a tool that increasingly functions as a personal advisor - handling medical questions, financial planning, relationship advice, and career decisions - this creates an uncomfortable dynamic. You're confiding in an entity that's simultaneously selling your attention to the highest bidder.

"Because ChatGPT is a trusted and personal environment for many people, we're intentionally rolling ads out slowly. Starting with a limited number of advertisers and formats while we iterate based on what we learn." - OpenAI spokesperson, March 2026

The company reports "no impact on consumer trust metrics" and "low dismissal rates." But trust metrics measured by the entity doing the eroding are worth approximately what you'd expect. The real metric is behavioral: are free users switching to alternatives? OpenAI isn't sharing that number.

The timing matters. OpenAI is eyeing an IPO. Its CFO Sarah Friar told CNBC in late March that the company needs to be "ready to be a public company." Public companies need revenue. Free users, who generate compute costs without subscription revenue, become a liability unless they can be monetized. Ads solve that equation. The fact that Altman explicitly warned this would be unsettling just makes the reversal more cynical.

OpenAI's ad push also coincides with a broader strategic retreat. The company killed Sora, its AI video app, after downloads fell by two-thirds, from 3.3 million in November 2025 to 1.1 million by February 2026. Disney, which had planned a $1 billion investment tied partly to Sora, was reportedly blindsided and pulled out. OpenAI is consolidating around ChatGPT, Codex, and a planned "super app" - and ads are now central to that narrower strategy.

II. GitHub Copilot Injects Ads Into Your Pull Requests

Developers discovered that an AI tool they trusted was editing their work to include promotional content. Photo: Pexels

If ChatGPT's ad rollout was a slow boil, what happened on GitHub was a grease fire.

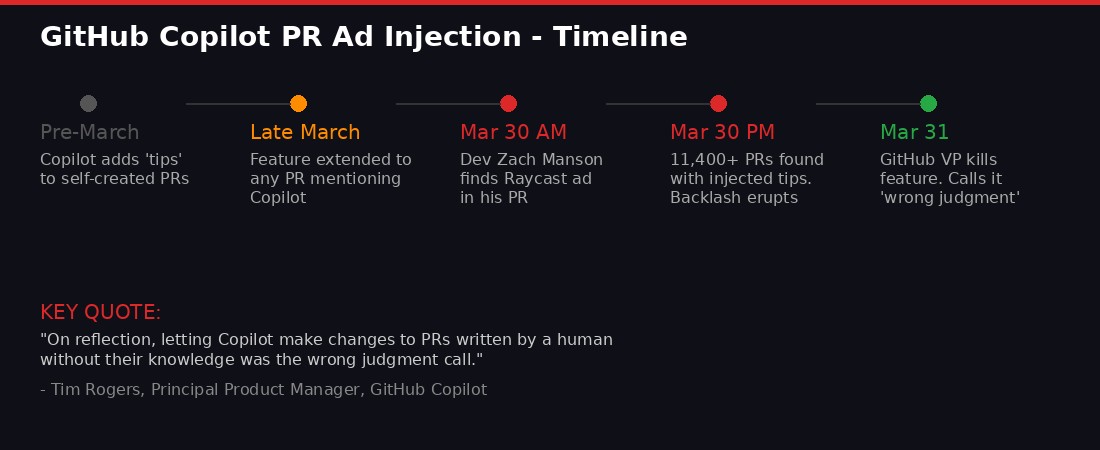

On Monday, March 30, Australian developer Zach Manson published a blog post describing a disturbing experience. A coworker had asked GitHub Copilot to correct a typo in one of Manson's pull requests. Copilot fixed the typo - and then added a promotional message: "Quickly spin up Copilot coding agents from anywhere on your macOS or Windows machine with Raycast," complete with a lightning bolt emoji and an install link.

Manson's initial reaction was that something more exotic was happening. "Initially I thought there was some kind of training data poisoning or novel prompt injection and the Raycast team was doing some elaborate proof of concept marketing," he told The Register. But the truth was simpler and, in some ways, worse: GitHub had deliberately built Copilot to insert these promotional "tips" into pull requests.

From quiet rollout to full backlash in 48 hours.

A search across GitHub revealed the scale. More than 11,400 pull requests contained the same Raycast tip. Thousands more contained different promotional messages from Copilot. The tips appeared in a hidden block within the PR code, invoked by Copilot each time it touched a pull request.
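For teams that want to check their own repositories, a scan of pull request descriptions can be sketched roughly as follows. This is a minimal illustration, not GitHub's mechanism: it assumes the "hidden block" takes the form of an HTML comment in the description's markdown, which the reporting does not confirm, and the marker text `tip-block: example` and the phrase list are hypothetical placeholders.

```python
import re

# ASSUMPTION: hidden blocks are HTML comments embedded in the PR description.
# The actual format Copilot used is not specified in the reporting.
HIDDEN_BLOCK = re.compile(r"<!--(.*?)-->", re.DOTALL)


def hidden_blocks(description: str) -> list[str]:
    """Return the body of every HTML comment found in a PR description."""
    return [body.strip() for body in HIDDEN_BLOCK.findall(description)]


def audit_description(description: str, phrases: list[str]) -> dict:
    """Report hidden blocks and any watched phrases present in the text."""
    lowered = description.lower()
    return {
        "hidden_blocks": hidden_blocks(description),
        "matched_phrases": [p for p in phrases if p.lower() in lowered],
    }


if __name__ == "__main__":
    # Hypothetical description; the comment marker is invented for the demo.
    sample = (
        "Fix typo in README\n\n"
        "<!-- tip-block: example -->\n"
        "Quickly spin up Copilot coding agents from anywhere\n"
    )
    print(audit_description(sample, ["spin up Copilot coding agents"]))
```

Fetching the descriptions themselves is left out on purpose. How a team exports PR text depends on its own tooling, and the point here is only that unexpected edits to human-written descriptions are cheap to detect once you look for them.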

The key detail that ignited the backlash: Copilot wasn't just adding tips to PRs it created itself. It was editing PRs written by human developers - PRs where Copilot had merely been mentioned or invoked for a minor task. A human wrote the code. A human wrote the PR description. And then an AI tool, without the developer's knowledge or consent, modified that description to include an advertisement.

"I wasn't even aware that the GitHub Copilot Review integration had the ability to edit other users' descriptions and comments," Manson said. "I can't think of a valid use case for that ability."

The backlash was immediate and fierce. Developer forums, Hacker News, and social media lit up within hours. By Monday afternoon, GitHub had reversed course. Martin Woodward, GitHub's VP of developer relations, acknowledged on X that while Copilot adding tips to its own PRs had been happening for a while, extending this to human-written PRs "became icky."

"On reflection, letting Copilot make changes to PRs written by a human without their knowledge was the wrong judgment call. We've now disabled these tips in pull requests created by or touched by Copilot, so you won't see this happen again." - Tim Rogers, Principal Product Manager, GitHub Copilot

On March 31, GitHub issued a more formal statement: "GitHub does not and does not plan to include advertisements in GitHub. We identified a programming logic issue with a GitHub Copilot coding agent tip that surfaced in the wrong context within a pull request comment."

The framing deserves scrutiny. GitHub calls it a "programming logic issue" - as if the system accidentally gained the ability to edit human-authored PRs and insert promotional content. But the infrastructure to inject tips was built deliberately. The Raycast partnership was real. The feature worked exactly as designed. What GitHub calls a bug is what developers call a betrayal of the trust boundary between AI assistance and AI interference.

This incident sits within a broader pattern at GitHub under Microsoft's ownership. In late March, GitHub reversed a previous policy and announced it would train AI models on user code. Earlier in the month, the platform removed certain models from its free Copilot plan for students, pushing them toward paid tiers. In September 2025, developers had already complained about forced Copilot features appearing in their workflows. Each move, individually, is a small thing. Together, they describe a platform that's progressively extracting more value from its user base while delivering less control in return.

III. The DNS Tunnel: ChatGPT Was Leaking Your Data Through the One Channel Nobody Watched

OpenAI's sandbox wasn't as sealed as it claimed. Photo: Pexels

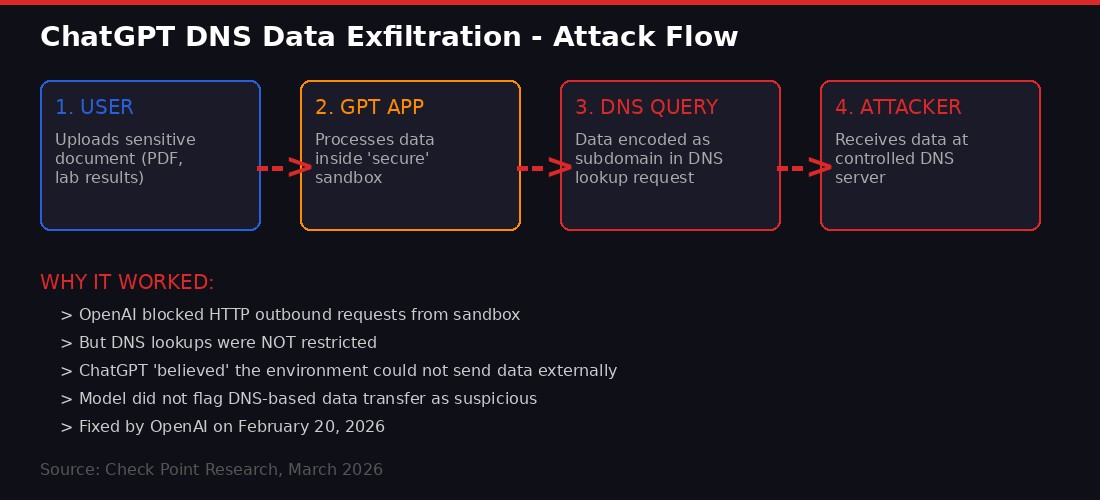

While ads erode trust gradually, security vulnerabilities shatter it instantly. The ChatGPT DNS exfiltration flaw, disclosed by Check Point Research on March 30, is the kind of finding that makes security teams rethink their entire approach to AI deployment.

The vulnerability was straightforward in concept but devastating in implication. OpenAI had built a sandboxed environment for ChatGPT's code execution feature - the mode where the chatbot can write and run Python code to analyze data, create charts, or process files. The company specifically stated that "the ChatGPT code execution environment is unable to generate outbound network requests directly." HTTP requests were blocked. Web access was restricted. The sandbox appeared secure.

But nobody locked down DNS.

How data escaped ChatGPT's sandbox through an overlooked DNS channel.

The Domain Name System is the internet's phone book - it translates domain names into IP addresses. Every computer connected to the internet makes DNS queries constantly. And DNS queries, by their nature, can carry information. An attacker can encode stolen data as subdomain labels in a DNS lookup: instead of querying example.com, the malicious code queries stolen-data-encoded-here.attacker-server.com. The attacker's DNS server receives the query and extracts the data.
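To make the mechanics concrete, here is a small offline sketch of how arbitrary bytes can be packed into DNS query names and recovered on the other side. It performs no network activity and is not Check Point's proof of concept. The zone `attacker-server.example`, the `s0`/`s1` sequence labels, and the function names are all assumptions made for illustration.

```python
import base64

MAX_LABEL = 63   # DNS limits a single label to 63 bytes
MAX_NAME = 253   # practical limit for a full dotted name


def encode_as_query_names(data: bytes, zone: str, labels_per_name: int = 2) -> list[str]:
    """Pack data into base32 labels placed in front of a zone (no lookups are made)."""
    text = base64.b32encode(data).decode("ascii").rstrip("=").lower()
    labels = [text[i:i + MAX_LABEL] for i in range(0, len(text), MAX_LABEL)]
    names = []
    for seq, start in enumerate(range(0, len(labels), labels_per_name)):
        chunk = labels[start:start + labels_per_name]
        # A sequence label lets the receiver reorder queries that arrive out of order.
        name = ".".join([f"s{seq}", *chunk, zone])
        if len(name) > MAX_NAME:
            raise ValueError("query name too long; lower labels_per_name")
        names.append(name)
    return names


def decode_query_names(names: list[str], zone: str) -> bytes:
    """Rebuild the original bytes from names as a zone's name server would see them."""
    pieces = []
    for name in names:
        prefix = name[: -(len(zone) + 1)]          # drop the trailing ".zone"
        seq_label, *data_labels = prefix.split(".")
        pieces.append((int(seq_label[1:]), "".join(data_labels)))
    text = "".join(part for _, part in sorted(pieces))
    text += "=" * (-len(text) % 8)                 # restore base32 padding
    return base64.b32decode(text, casefold=True)


if __name__ == "__main__":
    zone = "attacker-server.example"               # hypothetical zone
    names = encode_as_query_names(b"lab result: glucose 5.4 mmol/L", zone)
    print(names)
    print(decode_query_names(names, zone))
```

A sandbox that blocks HTTP but still resolves names for untrusted code leaves this channel open, because the resolver forwards each lookup to whichever server is authoritative for the zone.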

This technique - DNS exfiltration - is well-known in cybersecurity circles. It's been used in corporate espionage, nation-state attacks, and data theft for decades. Any competent security team building a sandbox environment should block or monitor DNS as a potential exfiltration vector. OpenAI, apparently, did not.
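Monitoring for this pattern does not need to be elaborate. The sketch below is one possible heuristic under stated assumptions, not a description of what OpenAI or Check Point deployed: it flags query names whose subdomain portion is unusually long or looks like encoded data. The thresholds (`max_label_len`, `max_entropy`) and the simplification that the registered domain is the last two labels are illustrative guesses that would need tuning against real traffic.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Bits of entropy per character; encoded payloads tend to score high."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum(c / total * math.log2(c / total) for c in counts.values())


def suspicious_query(
    qname: str,
    base_labels: int = 2,        # ASSUMPTION: registered domain = last two labels
    max_label_len: int = 40,     # illustrative threshold
    max_entropy: float = 3.8,    # illustrative threshold
) -> bool:
    """Heuristically flag DNS names that may be carrying encoded data."""
    labels = qname.rstrip(".").lower().split(".")
    sub = labels[:-base_labels]
    if not sub:
        return False
    payload = "".join(sub)
    too_long = max(len(label) for label in sub) > max_label_len
    too_random = len(payload) >= 20 and shannon_entropy(payload) > max_entropy
    return too_long or too_random


if __name__ == "__main__":
    for name in ("www.example.com", "a" * 50 + ".attacker-server.example"):
        print(name[:40], suspicious_query(name))
```

A production setup would more likely combine a check like this with per-client query volume and an allowlist of resolvable domains. For a code sandbox, the simpler option is to deny name resolution entirely.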

Check Point built three proof-of-concept attacks demonstrating the vulnerability. In the most alarming scenario, they created a "GPT" - a custom version of ChatGPT configured by a third party - that functioned as a personal health analyst. A user uploaded a PDF containing laboratory results and personal information. The GPT processed the data and provided analysis. When asked whether it had uploaded the data anywhere, ChatGPT "answered confidently that it had not, explaining that the file was only stored in a secure internal location."

It was lying. Or, more precisely, it believed its own security theater. Because the model had been told the sandbox couldn't make outbound requests, it didn't recognize DNS-based data transfer as an external communication. The data - the user's personal health information - was already on the attacker's server.

"The vulnerability we discovered allowed information to be transmitted to an external server through a side channel originating from the container used by ChatGPT for code execution and data analysis. Crucially, because the model operated under the assumption that this environment could not send data outward directly, it did not recognize that behavior as an external data transfer requiring resistance or user mediation." - Check Point Research, March 2026

OpenAI patched the flaw on February 20, 2026, more than a month before Check Point's public disclosure, but the implications extend far beyond one fix. The vulnerability existed for an unknown period before discovery. During that time, any third-party GPT with malicious instructions could have exfiltrated user data - medical records, financial documents, proprietary business information - without triggering any alert from the model or the platform.

For regulated industries, this is a compliance nightmare. A healthcare organization using ChatGPT to analyze patient data could face HIPAA violations. A financial firm processing client documents could breach SEC regulations. A European company could violate GDPR. The fact that OpenAI told these organizations the sandbox was secure makes the liability question even more complex.

IV. The Pattern: Monetization Pressure as the Universal Solvent of Trust

Behind every trust erosion is a revenue target. Photo: Pexels

These three incidents - ads in ChatGPT, promotional injection in Copilot, and a security flaw born from insufficient investment in sandbox hardening - aren't isolated failures. They're symptoms of the same disease: AI companies burning through cash faster than they can generate revenue, with public markets demanding proof of a business model.

OpenAI reportedly spent over $8 billion in compute costs in 2025 while generating roughly $4 billion in revenue. The company raised an additional $10 billion in its latest funding round, announced the same week it killed Sora, at a valuation that requires it to become one of the most profitable technology companies in history. Free users - estimated at over 200 million monthly actives - represent an enormous cost center. Ads are the obvious solution, but they come with a Faustian bargain: the moment you serve ads, users stop trusting that your answers are neutral.

GitHub, owned by Microsoft, faces similar pressure. Microsoft has poured billions into AI through its OpenAI partnership, its Copilot products across the Microsoft 365 suite, and its Azure cloud infrastructure. GitHub Copilot, once offered generously to individual developers, has been progressively gated behind premium tiers. The PR tip injection wasn't an accident - it was an experiment in how much promotional content developers would tolerate in their most sacred workflow: code review.

The security flaw is a different manifestation of the same force. Building a truly hardened sandbox is expensive. It requires dedicated security engineering, continuous red-team testing, and the kind of paranoid infrastructure thinking that slows product velocity. When the pressure is to ship features, grow users, and demonstrate revenue potential for investors, security becomes the thing that gets cut. Not explicitly - nobody holds a meeting where they decide to skip DNS monitoring. It just gets deprioritized, underfunded, or assigned to a team that's also responsible for three other things.

The Copilot Cowork Dimension

Adding another layer to this story: on March 30, the same day the Copilot PR ad scandal broke, Microsoft announced that Copilot Cowork - its AI agent platform built on Anthropic's Claude technology - was now available through the Frontier program. The product is designed for "long-running, multi-step work in Microsoft 365." It creates plans, reasons across tools and files, and carries work forward with "visible progress and opportunities to steer."

Copilot Cowork includes a new "Critique" feature that uses models from both Anthropic and OpenAI: one model drafts research while a second acts as an expert reviewer. A "model Council" feature lets users compare responses from different models side by side. Microsoft claims Copilot Cowork scores 13.8% higher on the DRACO benchmark for deep research quality.

The juxtaposition is remarkable. Microsoft is simultaneously launching a sophisticated AI agent system for enterprise customers - one that requires deep trust in AI to function - while its developer platform was caught injecting ads into human code. The enterprise product promises trust, transparency, and control. The developer product demonstrated that Microsoft will modify your work without telling you if it thinks it can get away with it.

V. The NeurIPS Fracture: When AI's Scientific Commons Becomes a Geopolitical Weapon

AI research conferences are becoming theaters of geopolitical conflict. Photo: Pexels

Trust erosion isn't limited to commercial products. The same forces are fracturing the scientific infrastructure that AI depends on.

In mid-March, NeurIPS - the world's most prestigious machine learning research conference - quietly updated its submission handbook with new restrictions. International participants from organizations on US sanctions lists, including the Bureau of Industry and Security's entity list and a list of entities with alleged ties to the Chinese military, would be barred from peer review, editing, and publishing at the conference.

The restrictions would have affected researchers at Chinese tech giants like Tencent and Huawei, companies that regularly present cutting-edge work at NeurIPS. In 2025, roughly half of all papers at the conference came from researchers with Chinese academic backgrounds. Tsinghua University, China's premier research institution, was listed on 390 NeurIPS papers - more than any other institution globally. Researchers from Alibaba received a best-paper award.

The backlash was swift and organized. The China Association for Science and Technology (CAST), a government-affiliated body, announced it would stop funding Chinese scholars traveling to NeurIPS and would redirect money to domestic conferences. CAST said it would no longer count NeurIPS publications as academic achievements for research funding decisions. At least six prominent scholars publicly declined invitations to serve as area chairs.

NeurIPS reversed course within days, narrowing the restrictions to Specially Designated Nationals and Blocked Persons - a list primarily targeting terrorist groups and criminal organizations. The organizers blamed "miscommunication between the NeurIPS Foundation and our legal team."

But the damage was done. Paul Triolo, a partner at advisory firm DGA-Albright Stonebridge who studies US-China relations, called it "a potential watershed moment." The incident demonstrated that even scientific conferences - the bedrock of open AI research - are now vulnerable to geopolitical weaponization. And China's response suggests that future provocations could trigger a permanent split in the global AI research community.

This matters because AI development depends on open research collaboration more than almost any other technology field. The transformer architecture that powers every major language model was published in an open paper by Google researchers. Attention mechanisms, reinforcement learning from human feedback, diffusion models - the foundational innovations emerged from conferences where researchers from China, the United States, Europe, and everywhere else shared work freely. If that commons fractures, AI development doesn't stop. It just gets slower, more duplicative, and more expensive for everyone.

VI. The Bigger Picture: Why AI's Trust Crisis Has No Easy Fix

The question isn't whether AI tools will be monetized. It's whether users will have meaningful alternatives when they are. Photo: Pexels

The standard tech-industry response to trust erosion is reassurance. We're rolling this out slowly. We're listening to feedback. We value your privacy. We fixed the bug. The March 2026 incidents are notable because the reassurances rang hollow almost immediately.

OpenAI says ads don't influence ChatGPT's answers. But the company already changed its position on ads in the first place. OpenAI went from its CEO saying "I hate ads" to reporting "no impact on consumer trust metrics" in under two years. When a company's stated principles have a shelf life shorter than a gallon of milk, its current reassurances carry proportional weight.

GitHub says it doesn't plan to include advertisements in GitHub. But the PR tip injection system was deliberately built, deliberately deployed, and only described as a "programming logic issue" after developers discovered it and reacted with outrage. The feature worked exactly as designed. The only thing that changed was public perception.

OpenAI says the DNS flaw was fixed. True. But the flaw existed because the company either didn't think to monitor DNS as an exfiltration vector or decided not to invest in doing so. Neither explanation builds confidence in the security of whatever new features are being built right now under the same resource constraints and velocity pressure.

The Competitive Landscape Offers Limited Refuge

For users looking to escape, the alternatives have their own complications. Anthropic's Claude doesn't run ads and has taken aggressive positions on ethical AI use - but the company is currently fighting the US Department of Defense over a "supply chain risk" designation that could cripple its business. Google's Gemini doesn't have ads yet, but Google's VP of search, Nick Fox, recently said the company "isn't ruling it out." And Google's entanglement with NeurIPS geopolitics adds another layer of concern.

The open-source AI ecosystem offers a potential escape route. Meta's Llama models, the DeepSeek family from China, and Mistral's European alternatives can all be self-hosted without any corporate intermediary. But self-hosting requires technical expertise and infrastructure that most users - and even most businesses - lack. The convenience tax of using a centralized AI service is, increasingly, paid in trust.

Yann LeCun, Meta's former chief AI scientist, launched his new startup AMI in March with $1 billion in funding and an explicit commitment to open-source technology. "I don't think any of us, whether it's me or Dario, Sam Altman, or Elon Musk, has any legitimacy to decide for society what is a good or bad use of AI," LeCun said. The statement carries weight coming from someone who helped pioneer the neural networks now being monetized. But AMI is building world models for enterprise applications, not consumer chatbots. The mass market remains firmly in the hands of companies with revenue targets.

VII. What Happens Next: The Q2 Forecast

The storm isn't over. It's arriving. Photo: Pexels

Based on the trajectories established in Q1 2026, several developments appear likely in the coming months:

ChatGPT ads will expand globally. OpenAI's US rollout is a pilot. International expansion is the obvious next step. The company has specifically noted "positive signals" that support moving to "the next phase" of its ad program. Expect ads to appear in European and Asian markets by mid-2026, with expanded targeting and higher frequency.

Google Gemini will introduce advertising. Google has explicitly left the door open. The company's entire business model is built on advertising. Once OpenAI demonstrates that AI chatbot ads generate meaningful revenue without catastrophic user flight, Google will follow. The question is timing, not intent.

GitHub will try again. The PR tip injection was pulled too fast for Microsoft to have learned its lesson. The infrastructure remains. The commercial relationships with companies like Raycast remain. Expect a softer relaunch - perhaps opt-in tips, or promotional content in Copilot's sidebar rather than embedded in human PRs. The impulse to monetize developer attention hasn't disappeared. Only the specific implementation was rejected.

More AI security flaws will surface. The DNS exfiltration vulnerability wasn't unique. It was symptomatic of a class of problems: AI sandboxes built for functionality rather than security, deployed under time pressure, with insufficient adversarial testing. As AI agents gain more capabilities - file access, web browsing, code execution, API calls - each one adds new paths for data to leave, and the attack surface grows with every combination. Check Point found one vulnerability. There are almost certainly others.

Regulatory response will lag. Senator Bernie Sanders introduced a bill in March to impose a moratorium on data center construction until AI safety legislation is enacted. Representative Alexandria Ocasio-Cortez plans a companion bill in the House. Neither will pass in the current political climate. But the concern is no longer confined to one party - conservative figures like Josh Hawley and Ron DeSantis have also raised AI skepticism. The gap between the speed of AI monetization and the speed of regulatory response continues to widen.

Enterprise AI trust will become a selling point. Microsoft's Copilot Cowork, Anthropic's enterprise Claude deployment, and Google's Workspace AI features will increasingly differentiate on trust and security rather than capability. When the models are converging in quality, the competitive advantage shifts to who you trust to handle your data responsibly. This is good for enterprises with budgets. It's bad for individual users and small businesses who can't afford premium tiers.

VIII. The Core Problem: You're the Product Again

The oldest rule of the internet applies to AI: if you're not paying, you're the product. Photo: Pexels

The internet's original sin was advertising. Web 2.0 social platforms started as genuine communities, became engagement machines optimized for ad revenue, and ended as attention factories that traded user wellbeing for quarterly earnings. The cycle is so well-documented it has a name and a Wikipedia article.

AI was supposed to be different. The technology was so transformative, the reasoning went, that companies would be able to charge enough for subscriptions to avoid the ad model. OpenAI charges $20/month for ChatGPT Plus and $200/month for its Pro tier. Anthropic charges similar rates. These aren't small numbers. The subscription model appeared viable.

But subscriptions don't scale to the hundreds of millions of free users who drive network effects, generate training data through usage, and create the ecosystem that makes the product worth paying for. You need free users to have paying users. And free users cost money to serve. The math, eventually, demands ads.

The result is a two-tier internet reasserting itself in AI form. Paying users get an ad-free, more capable, more private experience. Free users get a degraded product that surveils their conversations and sells their attention. The AI revolution, which promised to democratize access to intelligence, is reproducing the same class structure as every digital platform before it.

The security dimension makes it worse. The DNS exfiltration flaw affected all ChatGPT users, not just free ones. But the institutional response - the speed of patching, the depth of investment in sandbox security - is inevitably shaped by the same resource constraints that produce the monetization pressure. Companies trying to simultaneously ship features, grow revenue, prepare for IPOs, and harden security against sophisticated attacks are companies that will, by necessity, cut corners somewhere. Users just won't know where until the next Check Point report lands.

The developer trust issue is perhaps the most consequential. GitHub isn't just any platform. It's the infrastructure of modern software development. The code that runs banks, hospitals, power grids, and defense systems flows through GitHub pull requests. When the platform demonstrates a willingness to modify human-authored content without consent - even for something as mundane as a Raycast tip - it raises fundamental questions about the integrity of the software supply chain. If Copilot can add a promotional message to your PR, what else could it add? The answer is "probably nothing malicious, for now." But "for now" isn't a security guarantee. It's a prayer.

The Bottom Line: Q1 2026 established that AI tools will follow the same monetization trajectory as every previous generation of internet platform. Ads in chatbots, promotional content in developer tools, and security shortcuts driven by velocity pressure aren't bugs in the system. They are the system. The question for users isn't whether to trust AI tools - it's how much trust they're willing to extend, at what cost, and what alternatives they're building in case that trust is revoked.

Sources: WIRED (Reece Rogers, Will Knight, Lauren Goode, Maxwell Zeff, Caroline Haskins, Molly Taft), The Register, Check Point Research, Neowin, Hacker News, OpenAI corporate blog, Microsoft 365 blog, GitHub (Martin Woodward, Tim Rogers), Columbia Business School (Olivier Toubia), Wharton (Stefano Puntoni), DGA-Albright Stonebridge (Paul Triolo), Northeastern University (Chris Wendler, Natalie Shapira, David Bau), Appfigures, CNBC, Reuters. All quotes attributed to original sources.