GDC 2026: Game Developers Reject AI - What the Industry's Most Important Conference Reveals About Tech's Biggest Bet

At the Game Developers Conference in San Francisco, reporters found something remarkable: nearly every developer they spoke to disavowed using AI in their projects. The backlash is real, principled, and it has implications far beyond gaming.

Game developers at GDC 2026 delivered a clear message to the AI industry: not here, not in our craft. Photo: Pexels

The Game Developers Conference is not a political event. It is the world's largest professional gathering for the people who actually build video games - the artists, programmers, designers, and producers who ship the products that generate more revenue annually than film and music combined. When the industry's gatekeepers speak at GDC, companies listen.

This year, the message was unambiguous. According to reporting from The Verge, "of the many developers I spoke to at GDC, nearly every one disavowed using AI in their projects." Not a few skeptics. Not a vocal minority. Nearly everyone.

Silicon Valley bet enormously on gaming being an early adopter of AI tools. The reasoning was logical: games need enormous amounts of art assets, dialogue, procedural content, and quality-assurance testing - all tasks where generative AI seemed purpose-built to help. Studios could theoretically reduce headcount, accelerate production timelines, and iterate faster on creative decisions. Nvidia, Microsoft, Unity, Epic Games, and a constellation of AI startups all pitched the industry heavily.

GDC 2026 suggests that pitch largely failed. What happened between the 2023 gold rush and this year's near-universal rejection is a story about creative labor, institutional trust, legal liability, and what happens when workers with actual leverage push back against transformative technology.

GDC 2026 drew thousands of game industry professionals to San Francisco - and their verdict on AI was nearly unanimous. Photo: Pexels

How We Got Here: The 2023 AI Mania That Swept Gaming

Cast your mind back to March 2023. ChatGPT had just crossed 100 million users. Stable Diffusion was generating photorealistic images in seconds. Midjourney was printing concept art that looked indistinguishable from senior artists' work. The prevailing narrative in gaming circles was not whether AI would transform the industry, but how fast.

Publishers began quietly requiring AI disclosure in employee handbooks. Studios experimented with using generative tools to create NPC dialogue, environmental textures, and prototype animations. A handful of indie developers published entire games that they said were made with AI-generated art - some of them good enough to attract real reviews and paying customers.

Nvidia's CEO Jensen Huang spoke about AI-generated game worlds as an inevitability. Epic Games integrated AI tools into Unreal Engine. Unity began pitching AI-powered asset generation as a core feature of its platform. The GDC 2023 sessions on AI were standing-room only, with presentations from Google, Microsoft, and a parade of startups claiming to automate everything from voice acting to level design.

The enthusiasm was real. But so was the anxiety underneath it.

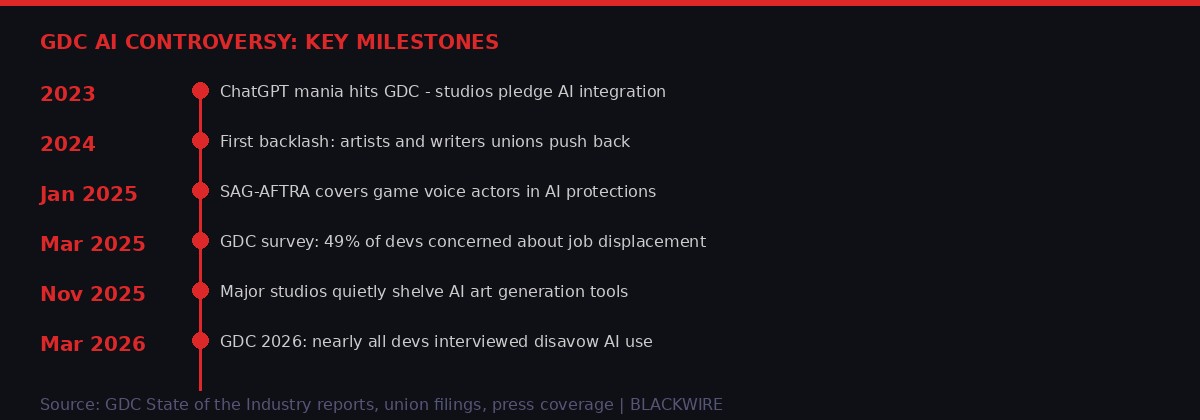

Gaming AI Timeline: From Mania to Rejection

The arc from AI enthusiasm to rejection played out over three years, driven by labor organizing, legal uncertainty, and creative pushback. Source: BLACKWIRE analysis.

The Labor Movement That Rewrote the Rules

Understanding the GDC backlash requires understanding what happened in Hollywood first - because the entertainment industry's labor struggles directly shaped how game developers approached the AI question.

The 2023 Hollywood strikes by the Writers Guild of America and SAG-AFTRA together spanned roughly six months, from May to November. One of the central negotiating issues was AI: specifically, whether studios could use AI to rewrite scripts, generate scripts based on human work, or create digital replicas of actors without consent or compensation. Both guilds won significant protections.

Those protections mattered to game developers for two reasons. First, they established legal and industry norms around AI-generated content from human likenesses - norms that directly applied to game voice actors, mo-cap performers, and character designers. Second, they showed that organized creative labor could successfully limit AI deployment even against well-funded corporate opponents.

The International Game Developers Association had already been tracking member sentiment on AI. Multiple surveys through 2024 showed consistent patterns: senior developers with the most technical knowledge of how AI tools actually worked were among the most skeptical. They understood that "AI-generated art" meant training on other artists' work without compensation. They understood the legal exposure. They also understood that the tools, while impressive in demos, frequently required enormous human intervention to be usable in production pipelines.

"Every company is saying they're using AI to do everything faster. What they're not saying is that they hired three people to fix the AI output for every person they didn't hire to create the original asset." - Anonymous game developer, speaking to industry press at GDC 2026

The SAG-AFTRA deal covering game voice actors, finalized in January 2025, was particularly significant. Under the agreement, studios must obtain individual consent before using AI to generate voice performances based on real actors' voices, and must pay residuals when they do. This created immediate legal risk for any studio that had already built AI voice pipelines using recordings of real performers as training data.

Voice actor protections negotiated by SAG-AFTRA created immediate legal risk for studios that had built AI voice pipelines. Photo: Pexels

What Developers Actually Said - and What They Were Afraid to Say

The Verge's reporter framed their GDC finding carefully: "nearly every one disavowed using AI in their projects." The word "disavowed" is doing important work in that sentence. It doesn't mean developers weren't using AI tools privately. It doesn't mean their companies weren't mandating or encouraging AI adoption. It means that when asked directly and on the record, developers refused to associate their own creative work with AI output.

This is a meaningful distinction. There is a gap between what companies announce at earnings calls and what developers will cop to in conversations with reporters. That gap reveals something about how the technology is actually being experienced on the ground.

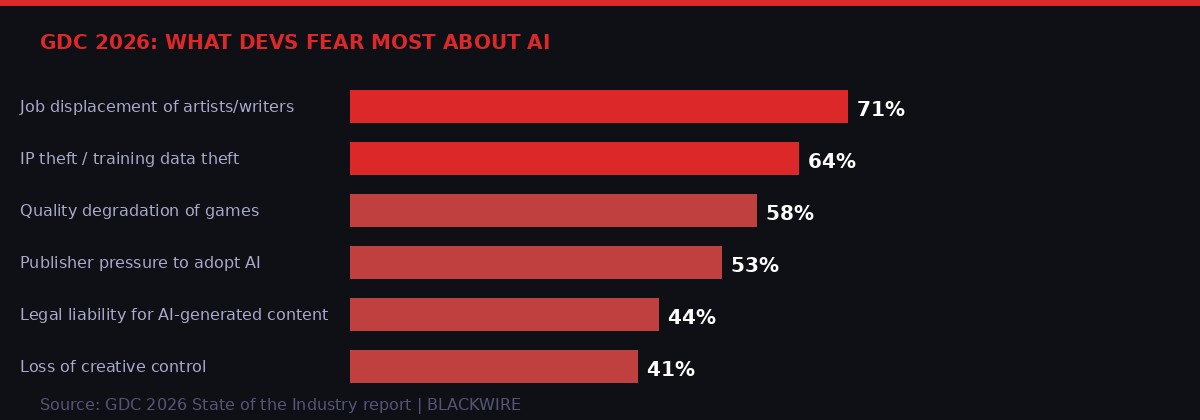

The GDC State of the Industry report has tracked developer AI sentiment for several years. The data paints a consistent picture of anxiety rather than enthusiasm. Concerns about job displacement rank highest among developers, followed by worries about IP theft through training data, and fears that quality will decline if AI-generated content floods release pipelines. These aren't abstract concerns - developers have watched colleagues lose jobs as studios cited AI tools as reasons not to rehire after layoffs.

The gaming industry laid off more than 10,000 workers in 2023 and more than 10,500 in 2024. Many of those layoffs were in exactly the roles - art, QA, writing - where AI tools were being piloted. The people at GDC who still had jobs drew direct lines between those numbers and the tools.

GDC survey data shows developers' top fears about AI - with job displacement and IP theft leading the list. Source: GDC State of the Industry / BLACKWIRE analysis.

The legal liability concern is not hypothetical. Getty Images sued Stability AI in both US and UK courts over training data. The New York Times won significant procedural rulings against OpenAI in its copyright suit. Several stock illustration houses have pursued similar claims. For game studios, which license enormous libraries of reference art for their development processes, the question of whether AI tools trained on that art create downstream legal exposure is genuinely unresolved.

Some studios have tried to thread the needle by building internal AI tools trained only on their own proprietary art. This theoretically avoids the copyright issues. But it also requires either enormous internal datasets - which only the largest studios possess - or training on a limited corpus that produces stylistically narrow output. The competitive advantage over traditional art pipelines shrinks considerably under those constraints.

"We used it for maybe eight months. The tools were useful for blocking out environments quickly, but the amount of cleanup required meant our artists were spending more time total than before. We quietly stopped using them. Nobody made a big announcement." - Art director at a mid-sized European game studio, speaking anonymously to industry press

The Publisher Pressure Problem - When Bosses Push AI and Developers Resist

One dynamic that emerged clearly from GDC floor conversations is the tension between developer sentiment and publisher mandates. Large publishers such as EA, Activision Blizzard, and Take-Two Interactive have shareholders to answer to. When those shareholders see competitors claiming productivity gains from AI adoption, they ask hard questions about why their portfolio studios aren't doing the same.

This creates pressure that trickles down through studio hierarchies in uncomfortable ways. Developers report being told in team meetings that AI adoption is a priority. They receive demos of tools that leadership wants them to evaluate. In some cases, bonuses have reportedly been tied to AI tool adoption metrics. And yet, when those same developers speak candidly to journalists at an industry conference, they disavow the technology entirely.

This gap between corporate mandate and developer reality is the real story of GDC 2026. It suggests that AI adoption in gaming is being driven from boardrooms rather than from creative teams - and that the developers actually doing the work have concluded the tools don't fit their needs, create legal risk, and damage their professional identity in ways that make resistance worth the friction.

The gap between publisher mandates and developer resistance is playing out in studio meetings around the world. Photo: Pexels

The professional identity dimension is underappreciated in most coverage of this issue. Game development is a craft. The developers who build careers in this industry often chose it specifically because they wanted to make art. Being told to use AI tools that generate art from other people's work without their consent cuts against core values that drove many of them into the field in the first place.

This is not a niche concern. Game jam culture - where small teams build games from scratch in 48 to 72 hours - has explicitly banned AI-generated art in major competitions. The Ludum Dare community, one of the largest game jams in the world, updated its rules to require disclosure of AI tool usage and created separate categories for AI-assisted projects. The implicit message: games made with AI are not quite the same thing as games made by humans, and the community wants to track the difference.

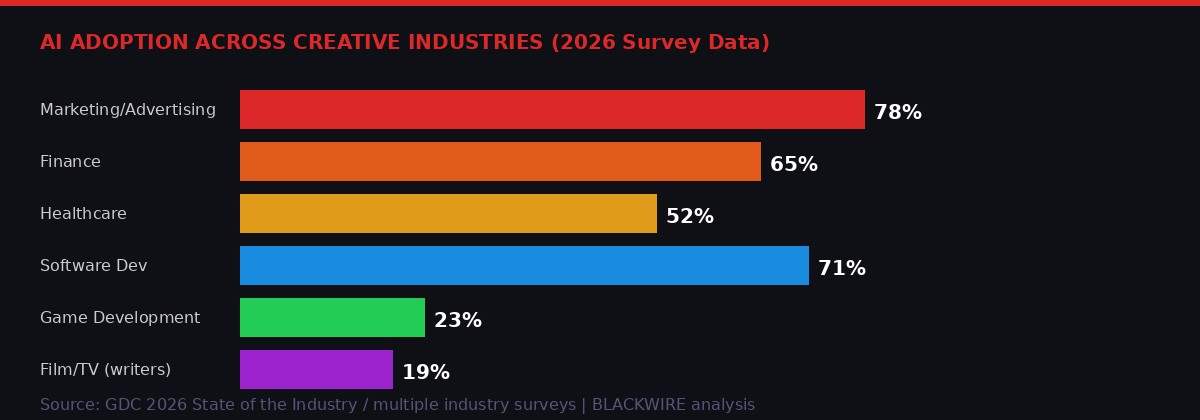

Gaming's AI adoption rate of roughly 23% is among the lowest of any major tech-adjacent industry - a stark contrast to marketing and software development. Source: BLACKWIRE analysis.

Second-Order Effects: What Gaming's Rejection Means for the Wider AI Industry

Here is where the GDC story stops being a gaming story and becomes a technology story with much broader implications.

The AI industry's market valuations depend on a narrative of horizontal adoption across all creative industries. The pitch to investors is not "AI will help some companies do some tasks better." The pitch is "AI will transform every creative workflow across every industry, creating unlimited market opportunity." Gaming was supposed to be an early proof point for that narrative.

If game developers - who are technically sophisticated, work in a digitally native industry, and have strong incentives to adopt tools that speed production - are actively resisting AI adoption three years into the generative AI era, what does that suggest about adoption curves in other creative fields?

Film and television, still recovering from the 2023 strikes, have implemented their own AI guardrails. The architecture and design professions have shown limited enthusiasm despite aggressive pitching from AI rendering tool companies. Music production has seen some AI adoption for specific tasks - stem separation, mastering assistance - but wholesale AI composition has found a much smaller market than projected.

The pattern suggests that creative industries are not actually the easy wins for AI adoption that Silicon Valley assumed. They have organized labor with leverage. They have professional identity structures that actively resist automation of core creative acts. They have legal exposure from copyright that makes risk-averse organizations hesitant to move fast. And they have experienced practitioners who are sophisticated enough to evaluate whether a tool actually helps or merely appears to help in a demo context.

"The AI industry told a very simple story: the tools are impressive, the productivity gains are real, adoption is inevitable. What GDC reveals is that 'inevitable' was doing a lot of work in that sentence. Creative workers have agency, and they're using it." - Technology policy researcher speaking at an adjacent conference during GDC week

The Legal Battlefield: Copyright, Training Data, and the Unresolved Questions

No analysis of developer AI skepticism is complete without mapping the legal landscape they're navigating. The courts have not finished deciding what AI companies did when they trained on copyrighted data without permission - and until they do, every organization that deploys those tools carries some residual risk.

The major cases are instructive. Getty Images v. Stability AI, filed in both US and UK courts, alleged that Stability AI trained its image generation model on 12 million Getty images without license or compensation. The US case has proceeded to discovery. In 2024, UK courts ruled in Getty's favor on the question of whether the case could proceed.

The New York Times v. OpenAI, filed in late 2023, resulted in significant procedural wins for the Times in 2025. Evidence in that case revealed that OpenAI training data included large amounts of copyrighted material, that OpenAI was aware of this, and that the company's internal communications showed executives discussing copyright exposure directly.

For game studios, the question is not abstract. Game art - the concept art, texture maps, character designs, and environmental assets that make up a modern game's visual language - represents enormous creative investment. If an AI company trained on those assets to build tools now being sold back to studios, those studios are participating in the downstream commercialization of potentially infringing training data.

Unresolved copyright cases over AI training data have created legal liability concerns that make cautious studios hesitant to adopt AI art tools. Photo: Pexels

Studios' legal teams have reached divergent conclusions about this risk. Some have decided the exposure is manageable given current law. Others have issued categorical prohibitions on using commercially available AI art tools, particularly those trained on broad web scrapes. The latter group includes several large Japanese publishers, whose conservative corporate cultures and tight legal teams responded to early copyright signals with particular caution.

The unresolved nature of the legal landscape is itself a headwind for AI adoption. Executives who might otherwise champion AI integration find it difficult to get sign-off when legal counsel cannot confidently explain the company's exposure. This dynamic is replaying across every creative industry, not just gaming.

The Tools That Actually Worked - and the Gap Between Demo and Production

Not all AI integration in gaming has been rejected. Specific, narrow applications have found acceptance even among developers who otherwise disavow the technology. Understanding what works and what doesn't reveals something important about the actual capabilities of current AI tools.

Quality assurance testing has seen genuine AI adoption with relatively low controversy. AI systems can run automated test suites, identify edge cases in game logic, and flag visual anomalies in rendered frames faster and more consistently than human testers. This application doesn't threaten creative workers, doesn't involve copyright-adjacent training data, and produces measurable productivity gains that survive outside the demo context.

Procedural level generation has a long history in gaming predating the generative AI era - Minecraft, Spelunky, and Bloodborne's Chalice Dungeons all use algorithmic generation for certain content. Modern AI tools that enhance this capability have been more warmly received because they fit an existing mental model developers already accept: algorithms that produce content according to rules humans define, not models that imitate human creative work.

AI-assisted bug detection in code has also found acceptance. Large language models can review code for common errors and suggest fixes, functioning more like an advanced linter than a replacement for programmers. Developers who would not let AI touch their art assets sometimes use Cursor or similar tools for coding tasks - they've mentally categorized these as different enough from creative work to accept.

What AI Applications Have Found Acceptance in Gaming

- QA automation: AI-driven test suites, visual regression testing, crash detection

- Procedural generation assistance: Expanding existing algorithmic level-gen pipelines

- Code review and linting: LLMs flagging bugs, suggesting refactors in dev tools

- Localization assistance: Machine translation drafts for human editor review (non-proprietary models)

- Audio compression and cleanup: AI processing of recorded audio for optimization

What has NOT found acceptance: AI-generated art assets, AI voice acting from human recordings, AI-written narrative content.

The pattern is consistent: AI tools that assist human specialists in technical, rule-bound tasks have been adopted. AI tools that claim to replace human creative acts have been rejected. This is not the story the AI industry wanted to tell. The high-margin, high-growth applications - the ones that justify astronomical valuations - were supposed to be exactly the generative creative tools that developers have now largely repudiated.

Technical AI applications like code review and QA automation have found acceptance - but creative AI tools face near-universal resistance. Photo: Pexels

What Happens Next: Pressure Points and Possible Outcomes

The GDC 2026 signal is meaningful but not determinative. Industries don't always develop in the direction practitioners want. The music industry's initial rejection of digital distribution eventually gave way to streaming, not because musicians changed their minds about the economics, but because the technology and market moved anyway. What pressure points exist that could shift the gaming AI dynamic?

Publisher financial pressure is the most immediate force. If a studio that uses AI art tools can ship a game at 70% of the cost of one that doesn't, the economics will eventually overcome developer resistance - particularly if studios can externalize the legal risk to tool providers. Some publishers are already attempting this by requiring AI tool vendors to provide copyright indemnification. If those indemnification agreements hold up in court, the legal risk calculus shifts.

However, indemnification creates its own problems. Tool providers who assume copyright liability for outputs become conservative about what their models will generate. They avoid outputs that closely resemble any identifiable human artist's style, which is precisely the kind of output that was most useful to studios. The most legally safe AI art tools are also the most creatively constrained.

A resolution to the major copyright cases could clarify things in either direction. If courts rule that training on web-scraped data constitutes fair use, AI tool adoption will likely accelerate. If they rule it constitutes infringement, the existing commercial AI art tools will require fundamental changes to their training data and cost structure. Either way, clarity is better than the current limbo.

Labor organizing among game developers is accelerating. The Communications Workers of America has organized QA workers at several major studios. Developers at Activision, ZeniMax, and Sega have formed bargaining units. Unionized workers have more formal mechanisms to negotiate AI policies than individual employees, and unions have shown willingness to fight hard on AI questions following the Hollywood precedents.

"We're watching the Hollywood situation very carefully. They won real protections. The question for game developers is whether we have the organizational structure to get to the same place. Every day there's more of that structure being built." - CWA organizer working with game studio employees, speaking to industry press

The most likely near-term outcome is continued bifurcation: technical AI applications continuing to spread while creative AI applications remain contentious, with adoption rates varying dramatically by studio size, union status, country of origin, and publisher. Japan's major publishers will remain conservative. Western indie developers will remain hostile. Large Western publishers will keep pushing, but face internal resistance that limits how far AI actually penetrates their production pipelines.

The AI industry needs to tell a story about this that doesn't undermine its own valuations. Expect more careful framing around "AI assistance" rather than "AI replacement" - language designed to reduce developer resistance by repositioning the tools as augmentation rather than automation. Whether developers buy that framing after three years of watching colleagues lose jobs will determine how effective the rebranding effort is.

Game developers' collective resistance to AI tools shows what happens when technically sophisticated workers with professional identity stakes push back against top-down technology mandates. Photo: Pexels

The Broader Signal: What This Tells Us About AI's Adoption Curve

Step back from gaming specifically and GDC 2026 delivers a broader message about where we are in the AI adoption cycle.

The generative AI wave has now been building for three years. We have had time to see which adoption stories held up and which were overclaimed. In productivity software - document creation, email drafting, coding assistance - the tools have found genuine traction. In scientific research, AI tools for protein structure prediction, materials discovery, and drug development have shown real results against real problems.

But in creative industries, the picture is fundamentally more complicated. The tools that were supposed to commoditize creative work have instead triggered a coherent response from creative workers who understand what's at stake and have mechanisms to resist. Those mechanisms - union contracts, professional codes, community norms, legal exposure - are not going away.

The AI industry's venture-capital-scale ambitions require that creative automation be a large addressable market. The GDC signal suggests that market is smaller, slower, and more contested than the pitch decks assumed. That doesn't mean AI isn't transformative in other domains. But investors evaluating AI companies at software-company multiples based on creative automation narratives should factor in what happened at the world's largest game developer conference in March 2026.

Nearly everyone said no. Loudly. On the record. To journalists. That matters.

Key Sources

- The Verge: GDC 2026 floor reporting, AI developer sentiment (March 2026)

- GDC State of the Industry report, multiple years (gdconf.com)

- SAG-AFTRA Interactive Media Agreement AI provisions (January 2025)

- Getty Images v. Stability AI - US and UK court filings (2023-2026)

- New York Times v. OpenAI - court documents (2023-2025)

- CWA game industry organizing updates (cwa-union.org)

- Ludum Dare AI policy updates (ludumdare.com)