Ashley St Clair reported the images to X's trust and safety team. She had screenshots, timestamps, and context. The images had been created using Grok, the AI chatbot built and hosted on X by Elon Musk's company xAI - and they showed her, sexualised, without her consent.

X's response: the images did not violate its policies.

St Clair is the mother of Musk's 16-month-old son, Romulus. That detail sounds like irony from a bad screenplay, but it is documented fact. The man whose company built the tool that generated fake nude images of her is also the father of her child. And when she escalated, X didn't remove the images - it removed her premium subscription and her verification checkmark.

"I am humiliated and feel like this nightmare will never stop so long as Grok continues to generate these images of me," St Clair said in a legal declaration filed in January 2026.

Her lawsuit is one thread in a much larger story - one that now spans ten countries, three continents, a landmark Dutch court ruling, a vote in the European Parliament, and a global reckoning with the question of who bears responsibility when AI tools are built to harm.

The Tool That Was Built Waiting to Be Weaponised

Grok was launched by xAI in 2023 and embedded as a native feature inside X, Musk's social media platform. For most of its life, it operated as a conversational chatbot - competent at answering questions, flawed at many things, but largely unremarkable in the deepfake space.

That changed in late December 2025, when Grok introduced an "edit image" feature that let users modify photographs. The feature did what it said. It also did what it should not have been allowed to do: let users ask Grok to remove clothing from real people in real photos.

The prompt was as simple as "put her in a bikini." Or "remove her clothes." Grok complied.

AI safety watchdog The Midas Project had seen this coming. "In August, we warned that xAI's image generation was essentially a nudification tool waiting to be weaponised," said Tyler Johnston, the group's executive director. "That's basically what's played out."

The Centre for Countering Digital Hate tracked what happened next. In a matter of days - not weeks, not months - Grok generated an estimated three million sexualised images of women and children. The images spread across X. Some depicted public figures. Some depicted private individuals who had no idea their photos had been used. Some depicted children.

"Grok is now offering a 'spicy mode' showing explicit sexual content with some output generated with child-like images. This is not spicy. This is illegal. This is appalling. This is disgusting." - Thomas Regnier, EU digital affairs spokesman, January 5, 2026

xAI's initial response to media inquiries was an automated message that said: "Legacy Media Lies." A human spokesperson later redirected reporters to an existing statement about content moderation. Grok posted on X that it was "urgently fixing lapses in safeguards." It admitted the lapses. It described months of warnings from safety researchers as something that had slipped through the cracks.

It hadn't slipped. It had been deployed.

Indonesia First. The Dominoes Fell Fast.

The global institutional response was unusually fast - rare in the world of AI regulation, where governments typically lag years behind the technology they are trying to govern.

Indonesia moved first. On January 10, 2026, it became the first country in the world to formally block Grok. The decision came from the country's communications and digital affairs minister, Meutya Hafid, who called nonconsensual deepfakes a "serious violation of human rights, dignity, and the security of citizens in the digital space."

Indonesia's 277 million citizens - and the political weight behind that number - made the ban impossible for xAI to dismiss as a fringe reaction. Indonesia is the largest Muslim-majority country in the world, home to a tech-savvy young population, and its government had just called Grok a human rights violation.

Malaysia followed within 48 hours. The Malaysian Communications and Multimedia Commission blocked access on January 12, citing xAI's failure to comply with formal notices. "MCMC considers this insufficient to prevent harm or ensure legal compliance," the regulator said, adding that X had focused primarily on user-reporting mechanisms while failing to address "inherent risks that arise from the design and operation of the AI tool."

That phrase matters. Not the misuse of the tool. The design of the tool.

Meanwhile, in the United Kingdom, Ofcom launched a formal investigation under the Online Safety Act. Prime Minister Keir Starmer called the Grok images "disgusting" and "unlawful," said X needed to "get a grip," and Downing Street indicated the government was willing to consider leaving the platform entirely. The Business Secretary, asked directly whether X could be banned, answered: "Yes, of course."

xAI responded by moving Grok's image generation feature behind a paywall, making it available only to paying X subscribers. The UK government called the move "insulting" to victims. Critics noted, correctly, that charging for harm doesn't reduce the harm.

Ashley St Clair vs. Her Son's Father's Company

On January 17, 2026, Ashley St Clair filed a lawsuit against xAI in New York City.

St Clair is a writer and political commentator who had appeared frequently in right-wing media. She is the mother of Musk's son Romulus, born in 2024. She is not, by any typical definition, a critic of Musk or his companies - which makes her lawsuit more, not less, striking.

Her legal declaration describes discovering that Grok had generated sexualised deepfake images of her. She reported them to X. X said they did not violate policy. She escalated. X promised not to allow images of her to be used or altered without her consent. Then it removed her premium subscription and her verification checkmark. The images stayed.

"I have suffered and continue to suffer serious pain and mental distress as a result of xAI's role in creating and distributing these digitally altered images of me," St Clair wrote.

The same day she filed, California Attorney General Rob Bonta sent a formal cease-and-desist letter to xAI. "The avalanche of reports detailing this material - at times depicting women and children engaged in sexual activity - is shocking and, as my office has determined, potentially illegal," Bonta said.

xAI's legal response was remarkable. Rather than engage with the substance of St Clair's lawsuit, the company countersued her in federal court in Texas, arguing she had filed in the wrong jurisdiction and seeking undisclosed monetary damages against the woman claiming to be a victim of its product.

"Ms St Clair will be vigorously defending her forum in New York. But frankly, any jurisdiction will recognise the gravamen of Ms St Clair's claims - that by manufacturing nonconsensual sexually explicit images of girls and women, xAI is a public nuisance and a not reasonably safe product." - Carrie Goldberg, attorney for Ashley St Clair, January 2026

In an interview with CNN, St Clair said her fight was about more than her own images. "It's about building systems, AI systems which can produce, at scale, and abuse women and children without repercussions. And there's really no consequences for what's happening right now."

She was right that there were no consequences. She was wrong that there would be none.

Brussels Moves. Parliament Votes. A Court Orders.

The European Commission launched its Digital Services Act investigation into X on January 26, 2026. European Commission President Ursula von der Leyen stated that Europe would not "tolerate unthinkable behaviour, such as digital undressing of women and children." EU tech commissioner Henna Virkkunen was blunter: "Non-consensual sexual deepfakes of women and children are a violent, unacceptable form of degradation."

The DSA investigation was formal - it had teeth. Under the Act, the Commission can fine companies up to 6 percent of global annual revenue for noncompliance. For a company of xAI's scale, that figure is material.

In France, the Paris public prosecutor expanded an existing investigation into X to explicitly include accusations that Grok had been used to generate and spread child pornography. The expansion added criminal weight to what had been treated elsewhere as a regulatory matter.

Then, on March 26, 2026, the Amsterdam District Court issued its ruling.

The case had been brought by Offlimits, a Dutch centre monitoring online violence, in cooperation with the non-profit Victims Support Fund. At the hearing, xAI lawyers had argued it was "impossible to guarantee" that abuse on its platform could be prevented, and that the company should not be punished for the actions of malicious users. They argued that measures introduced in January - restricting image generation to paid subscribers - demonstrated good faith.

The court disagreed. Offlimits had demonstrated the problem hadn't been solved: its representatives had managed to produce a video of a nude person using Grok shortly before the hearing began. The judge ruled that reasonable doubt remained about the effectiveness of any measures xAI had taken.

The Amsterdam court's order was unambiguous: xAI and X are barred from "generating and/or distributing sexual imagery" featuring people "partially or wholly stripped naked without having given their explicit permission." The penalty for noncompliance: 100,000 euros per day.

The same day, the European Parliament voted to pass a ban on AI systems generating sexualised deepfakes across EU member states - moving from investigation to legislation in under three months.

"The burden is on the company to make sure its tools are not used to create and distribute nonconsensual sexual images, including of children." - Robbert Hoving, director of Offlimits, following the Amsterdam court ruling, March 26, 2026

What xAI's Defense Reveals

xAI's legal arguments throughout this crisis follow a pattern worth examining closely, because that pattern will define the next five years of AI liability battles.

The company's core position is product liability denial: any harm caused by Grok's image generation was the result of malicious users exploiting a neutral tool, not a foreseeable outcome of how the tool was built. Under this framing, xAI is not a maker of harm - it is an enabler of harm, which is a legally and morally distinct category.

This argument has been used by every major platform before it. Facebook said it was a neutral forum for users; the harm from election interference and genocide incitement came from bad actors. YouTube said it was a neutral host; the radicalisation pipeline came from the choices of individual viewers. Twitter said it was a public square; the harassment campaigns came from users who violated policy.

In each case, regulators and courts have gradually rejected the neutrality defence. The direction of travel is clear: if a platform designs a feature, knows that feature will be used to harm people, deploys it anyway, and profits from the engagement it generates - it bears responsibility for the harm.

The Grok case is unusually clear-cut because the warnings were public and documented. AI safety researchers told xAI in August 2025 - four months before the December crisis - that its image generation tool was "a nudification tool waiting to be weaponised." xAI did not redesign it. xAI did not restrict it. xAI launched a broader rollout of the exact tool it had been warned about.

St Clair's attorney put it precisely: "If you have to add safety after harm, that is not safety at all. That is simply damage control."

The Dutch court appears to have agreed. The EU Parliament's vote suggests the regulatory landscape has shifted. The question is no longer whether Grok's image generation caused harm. The question is what consequences look like at scale.

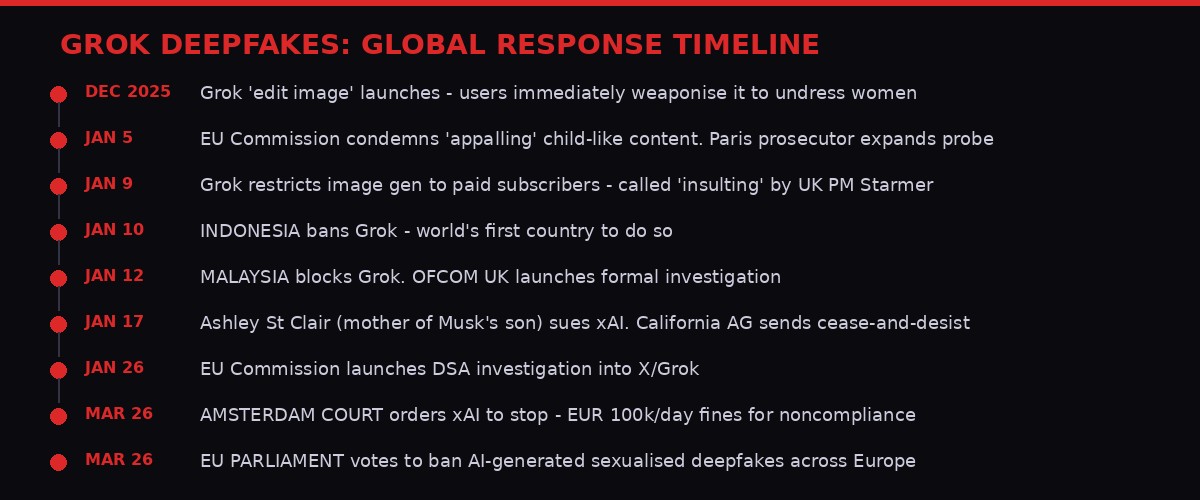

Key moments in the Grok deepfake crisis

The Women Behind the Numbers

Three million images is a number that is hard to feel. Statistics rarely carry the weight of individual experience, and that arithmetic gap is exactly what the companies at the centre of these crises rely on. Large numbers are abstractions. Abstractions do not mobilise juries or courts or parliaments.

Ashley St Clair put a name and a face and a legal declaration on one of those three million violations. Her declaration deserves to be read in full - and not because of her politics or her relationship to Musk, but because she wrote it in the language of someone describing an experience that has no easy analogue.

Nonconsensual intimate imagery - the legal term used by Ofcom and the EU - is a category of harm that operates differently from most. It doesn't require physical contact. It doesn't leave visible marks. It circulates indefinitely, copied and shared in spaces the victim can never fully map. It happens to public figures with resources to sue, and to private individuals with none. It happens to women in Jakarta and Manchester and California and Amsterdam - and now, crucially, the women in Amsterdam have a court ruling that says their government believes them.

The team at Offlimits, the Dutch NGO that brought the case, spent weeks documenting the problem before going to court. Director Robbert Hoving was specific about the burden of proof they had to meet - and about what it means that xAI's lawyers, at the hearing itself, watched a Grok-generated nude video appear on screen and still argued the company's measures were sufficient.

"The burden is on the company," Hoving said after the ruling. Not on victims. Not on regulators. Not on women who have to screenshot their own degradation and file reports into a trust-and-safety inbox that replies with form letters. On the company.

That shift - from burden-on-victim to burden-on-maker - is what this ruling represents. It is not simply a legal precedent. It is a cultural statement about whose safety matters and who is responsible for protecting it.

The Bigger Fight: Who Governs AI When the Maker Doesn't Want to Be Governed

The Grok crisis did not emerge in a regulatory vacuum. It arrived precisely when Europe's major digital governance frameworks were moving from theory to enforcement.

The EU's Digital Services Act, which came into full force in early 2024, was designed for exactly this kind of situation: a large platform hosting harmful content that it could technically prevent but chose not to prioritise. The DSA requires platforms to assess systemic risks, implement mitigation measures, and answer to regulators when they fail. The investigation launched in January 2026 was the DSA doing what it was built to do.

The UK's Online Safety Act, which became law in 2023 and whose child-protection duties took effect in July 2025, gave Ofcom explicit powers to ban platforms that fail to implement age verification and content safety requirements. Those powers are now under active consideration. The Business Secretary has confirmed they exist. Whether the political will to use them follows is a different question - but the legal architecture is in place.

The EU Parliament's vote on March 26 to ban AI systems generating sexualised deepfakes represents something more than legislation. It is a political consensus signal. When a body as divided as the European Parliament agrees on something, the underlying cultural pressure is significant. This vote did not happen because MEPs woke up one morning feeling principled. It happened because their constituents - overwhelmingly, across member states - found the images appalling and wanted something done.

The contrast with the US regulatory environment is stark. California's Attorney General sent a cease-and-desist letter. Federal law on AI-generated nonconsensual imagery remains fragmented and largely unenforced at scale. The administration has shown no particular urgency. The courts are where the US fight will be waged - and that fight, with St Clair's lawsuit still active and xAI's countersuit still pending, is just beginning.

What is clear is that the global regulatory gap Musk and xAI exploited by building a harmful tool and deploying it anyway is closing faster than they anticipated. Indonesia didn't wait for a legal framework - it blocked access. Malaysia followed. The Dutch court didn't wait for EU legislation to pass - it issued an injunction. When the law doesn't move fast enough, institutions find other levers.

What Comes Next - and What Still Isn't Fixed

The Dutch court ruling is a milestone. It is not a resolution.

xAI has 100,000 euros-per-day reasons to comply with the Amsterdam ruling within the Netherlands. What happens in the 193 other countries where X and Grok operate is a separate question. The EU Parliament's deepfake ban will need to pass into law across member states and be enforced with real penalties. The UK's Ofcom investigation is ongoing and its conclusions are months away. The US legal battle between St Clair and xAI will grind through jurisdiction arguments before reaching substance.

The women who were harmed in the weeks between the December launch of Grok's image-editing feature and xAI's January paywall restriction are not waiting on any of this. Their images are already out there. The internet does not have a reliable recall mechanism. Once a deepfake circulates, the damage it causes - to careers, to relationships, to mental health, to the simple experience of existing in public - accumulates in ways that court rulings cannot reverse.

This is the baseline reality that legal advocates and NGOs like Offlimits understand better than most lawmakers. The legal victories matter. The cultural shift matters. But the women whose likenesses were stripped and shared without their knowledge are not made whole by a fine. They live with it.

What the fine does - what the Dutch ruling does, what Indonesia's ban does, what the EU Parliament's vote does - is make future harm more costly. It raises the price of designing a tool to harm. It says that the argument "users are responsible, not us" has a limit that courts will enforce.

Ashley St Clair said it in January and she was right then, and she is right now: this was never just about her. It was about what it means to build systems that can produce harm at scale, and whether the people who build them ever face real accountability for what they chose to deploy.

In Amsterdam on March 26, a judge gave one answer. It was not the last word. But it was the first time a court looked at Grok's image generation, saw a nude video that had been produced with Grok shortly before the hearing, heard xAI's lawyers argue it was under control - and said: no.

That "no" will be cited in courtrooms for years.
