The Breach That Exposed How AI Really Gets Built: Inside the Mercor Hack That Shook OpenAI, Meta, and Anthropic

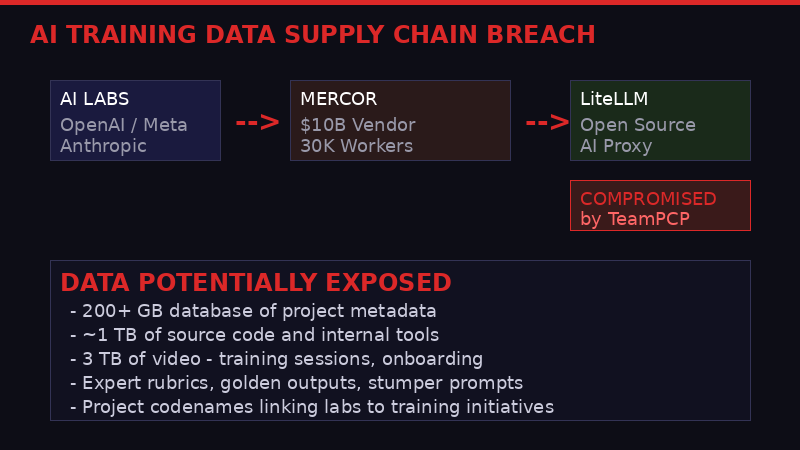

A supply chain attack on an obscure AI proxy tool cascaded into the most sensitive layer of the AI industry - the human data factories that teach frontier models to think. Meta has frozen all work with the breached firm. OpenAI is investigating. Thousands of contractors are out of work. And somewhere, a hacking crew is sitting on terabytes of what might be the most valuable trade secrets in technology.

The AI training data supply chain is one of the industry's best-kept secrets. A single breach just ripped it wide open.

On March 31, an email landed in the inboxes of Mercor employees with the kind of terse corporate language that signals genuine panic underneath. "There was a recent security incident that affected our systems along with thousands of other organizations worldwide," the company wrote. No specifics. No timeline for resolution. Just an acknowledgment that something had gone very, very wrong.

Within 72 hours, the full picture started to emerge - and it was worse than anyone outside the AI industry's innermost circle might have guessed. Mercor, a San Francisco startup valued at $10 billion and one of the primary suppliers of proprietary training data to Meta, OpenAI, and Anthropic, had been caught in the blast radius of a supply chain attack that compromised a widely used open-source AI proxy tool called LiteLLM. The attackers - a group calling itself TeamPCP - had poisoned two LiteLLM releases, and Mercor was among thousands of organizations that installed the tainted code.

But while most of those thousands of victims are dealing with ordinary cybersecurity headaches, Mercor's breach is categorically different. The data at stake isn't customer records or financial information. It's something far more valuable to the companies building the most powerful AI systems on Earth: the secret recipes that teach their models to think.

What Mercor Actually Does - The $10 Billion Data Factory Nobody Talks About

How human expertise flows through Mercor's data pipeline into frontier AI models. Source: WIRED, The Verge, company filings.

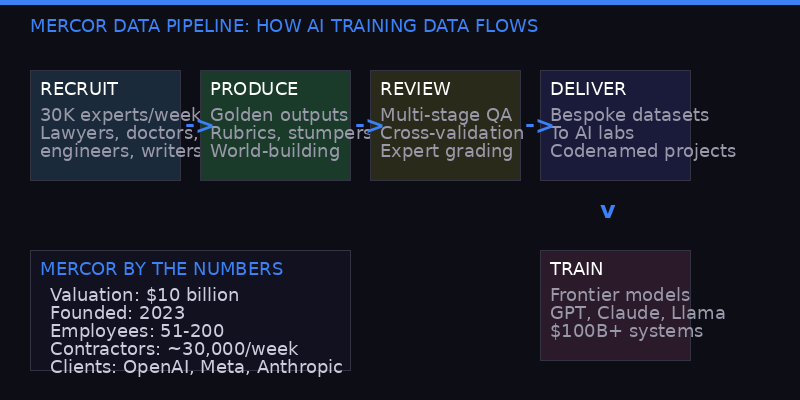

To understand why this breach matters, you need to understand what Mercor does - and why virtually nobody outside of Silicon Valley's AI inner circle has heard of it despite its staggering valuation.

Mercor was founded in 2023 by three 19-year-olds from the Bay Area: Brendan Foody, Adarsh Hiremath, and Surya Midha. It started as a jobs platform that used AI interviews to match overseas engineers with tech companies. But the founders quickly discovered that AI labs had an insatiable appetite for something specific: highly curated, expert-generated training data. They pivoted, and the money followed. By 2025, Mercor was valued at $10 billion, making its trio of founders the world's youngest self-made billionaires (The Verge).

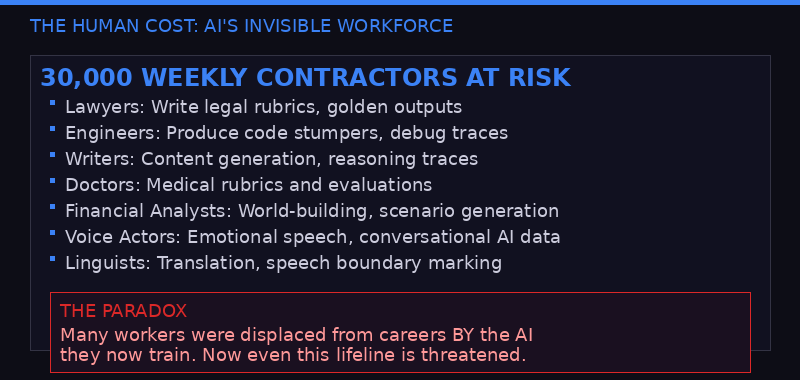

The business model is conceptually simple but operationally massive. Mercor employs approximately 30,000 professionals each week - lawyers, doctors, financial analysts, software engineers, linguists, even wildlife conservation scientists - to produce bespoke datasets that teach AI models how to perform specific tasks. These aren't mechanical data-labeling gigs. Workers engage in elaborate exercises: writing "golden outputs" (the ideal chatbot response), crafting detailed rubrics that define what "good" looks like in a given domain, producing "stumpers" (prompts designed to make models fail), and participating in what Mercor calls "world-building" - role-playing entire corporate environments to generate realistic business documents that models can learn from.

One type of project gathers groups of lawyers, HR managers, or bankers for simulated corporate scenarios. Teams receive dedicated email addresses, calendars, and chat applications, then spend days creating hundreds of documents - slide decks, meeting notes, financial forecasts - for fictional companies. Workers have reported seeing references to teams labeled "Management Consulting World No. 133," suggesting the operation involves hundreds, possibly thousands, of parallel workstreams (The Verge).

The secrecy around this work is extreme. Mercor and its competitors - Scale AI, Surge AI, Handshake, Turing, Labelbox - use codenames for client projects. Workers typically aren't told which AI lab they're training data for. Managers refer to clients simply as "the client." It's rare to see any CEO from these firms speaking publicly about specific services. The training data is considered the crown jewels of the AI industry - the proprietary ingredient that differentiates a $100 billion model from a $10 billion one.

That wall of secrecy just got a sledgehammer taken to it.

The Attack Chain: From LiteLLM to the Crown Jewels

The kill chain: how a poisoned open-source update cascaded into AI's most sensitive data layer.

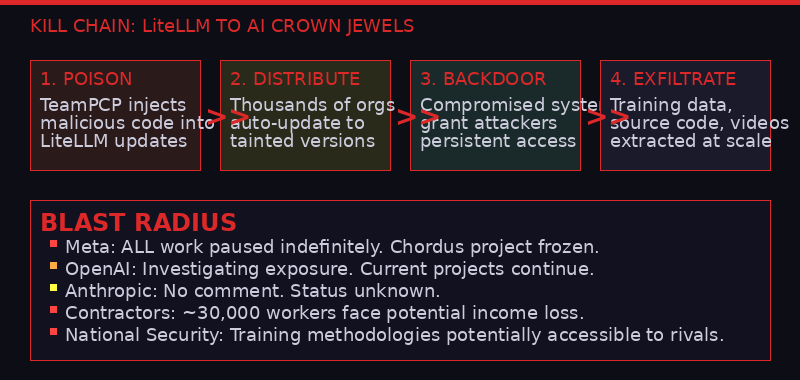

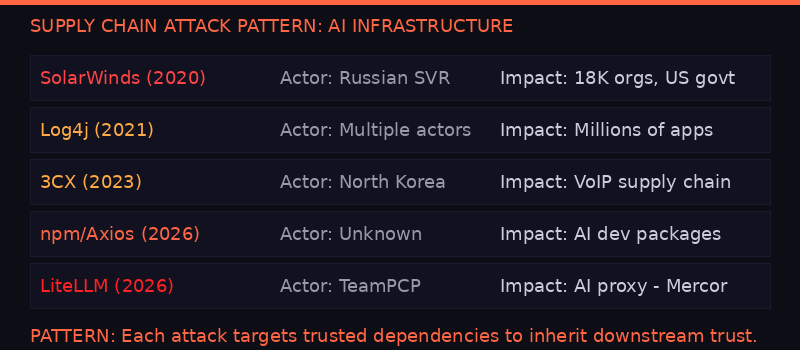

The breach traces back to TeamPCP, a financially motivated hacking group that has been on a supply chain rampage in recent months. The group compromised two versions of LiteLLM, an open-source tool that developers use to manage API calls across multiple large language model providers. It's the kind of unglamorous infrastructure software that sits deep in the plumbing of thousands of organizations - the sort of thing that gets auto-updated without anyone thinking twice about it.

TeamPCP injected malicious code into LiteLLM's updates, effectively backdooring every organization that installed them. The technique is a textbook supply chain attack - compromise a trusted dependency, and you inherit the trust relationships of everyone who uses it. It's the same class of attack that hit SolarWinds in 2020 and 3CX in 2023, and it exploits the same dependence on widely shared components that the Log4j vulnerability exposed in 2021. But this time, the blast radius included AI's most sensitive data layer.
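One common defense against this kind of poisoned update is to record a cryptographic digest of each release at review time and refuse anything that no longer matches. The sketch below is illustrative only: the function names are hypothetical, and nothing here describes how LiteLLM or Mercor actually handle updates. pip offers a comparable built-in check through its hash-checking mode (`--require-hashes`).

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def is_trusted(path: Path, pinned_sha256: str) -> bool:
    """True only if the file still matches the digest recorded at review time."""
    return hmac.compare_digest(sha256_of(path), pinned_sha256.lower())
```

A digest check like this only helps if the pinned value was recorded from a release someone actually reviewed. It catches a file that was swapped after review, but not a release that was already malicious when it was pinned.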

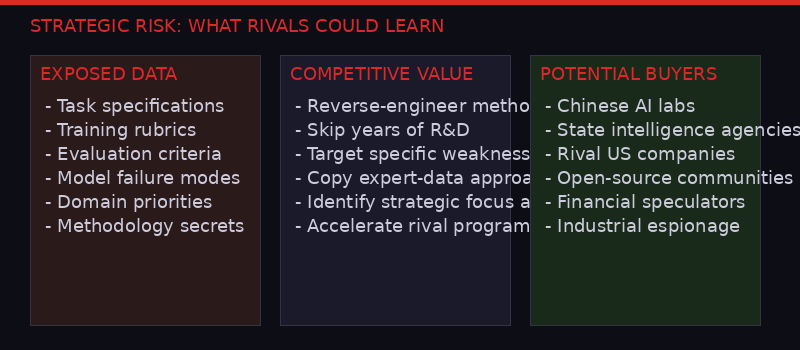

For most of the thousands of affected organizations, the breach is a routine incident - reset credentials, audit logs, remove the tainted versions, move on. For Mercor, it's existential. The data flowing through its systems isn't just sensitive in the privacy-violation sense. It's sensitive in the competitive-intelligence sense. If an attacker accessed Mercor's internal systems, they could potentially see exactly what kind of training data OpenAI, Meta, and Anthropic are producing - what tasks they're prioritizing, what capabilities they're trying to build, where their models are failing, and how they're trying to fix those failures.

"AI labs are sensitive about this data because it can reveal to competitors - including other AI labs in the US and China - key details about the ways they train AI models," WIRED reported (WIRED, April 3). The phrasing is diplomatic. What it means in practice is that if a Chinese AI lab got access to Mercor's project databases, they could potentially reverse-engineer the training methodologies of America's leading AI companies - not the weights or the architecture, but the human-expertise layer that increasingly differentiates frontier models from each other.

Mercor confirmed the attack in its internal email but offered no details about what was accessed. Its LinkedIn statement, posted shortly after the breach disclosure, said the company's "security team moved promptly to contain and remediate the incident" and that it had engaged "leading third-party forensics experts." Standard crisis communications. The kind of language that tells you nothing about the actual damage.

Meta's Freeze: What It Signals About the Severity

Meta's indefinite pause on Mercor work affects hundreds of contractors and at least one major project called Chordus.

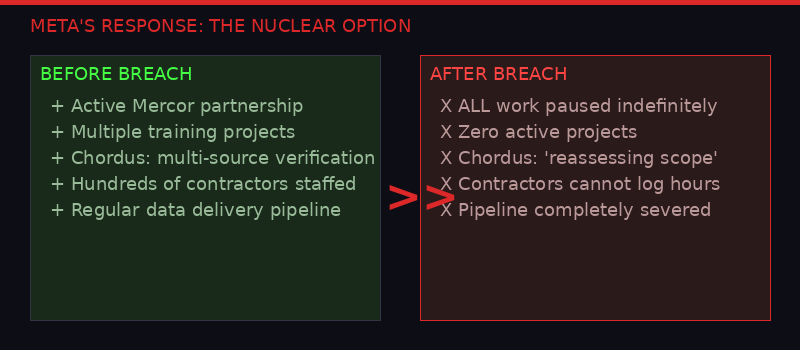

The most telling indicator of severity isn't Mercor's statement. It's Meta's response.

Meta has paused all work with Mercor indefinitely. Not paused one project. Not implemented additional security reviews. All work, indefinitely. Two sources confirmed the freeze to WIRED. That's the nuclear option in vendor management - the kind of decision that doesn't get made over a minor security scare.

The freeze has immediate human consequences. Contractors who were staffed on Meta-specific projects through Mercor cannot log hours until the projects resume - if they resume. They are, functionally, out of work with no warning and no timeline for resolution. Mercor is reportedly working to find alternative projects for those affected, but the company's internal communications, viewed by WIRED, suggest the situation is chaotic.

One of the affected projects carries the codename "Chordus" - a Meta initiative designed to teach AI models to use multiple internet sources to verify their responses to user queries. In a Slack channel dedicated to the project, a Mercor project lead told staff that the company was "currently reassessing the project scope." Contractors weren't told why. They just knew their income had disappeared.

The Chordus detail is revealing. Teaching AI models to cross-reference and verify information from multiple sources is one of the frontier challenges in the field. It's the difference between a chatbot that confidently hallucinates and one that can actually be trusted for research and analysis. If the data associated with this project was exposed, it would give a competitor significant insight into Meta's approach to solving one of AI's hardest problems.

OpenAI's response has been notably different. The company has not stopped its current projects with Mercor but is "investigating the startup's security incident to see how its proprietary training data may have been exposed," a spokesperson confirmed to WIRED. The spokesperson added that "the incident in no way affects OpenAI user data" - a clarification that suggests the company is already thinking about the legal and PR dimensions of the breach. Anthropic, the third major client, did not respond to requests for comment.

The divergence between Meta's freeze and OpenAI's continued operation is itself interesting. It could mean Meta had more sensitive data flowing through Mercor at the time of the breach. It could mean Meta's internal security assessment found evidence of deeper compromise. Or it could simply mean Meta's risk tolerance is lower. Without more transparency from any of the companies involved, the public is left guessing.

TeamPCP: The Hackers Behind the Curtain

TeamPCP's supply chain attacks have been gaining momentum for months. Mercor is their highest-profile victim yet.

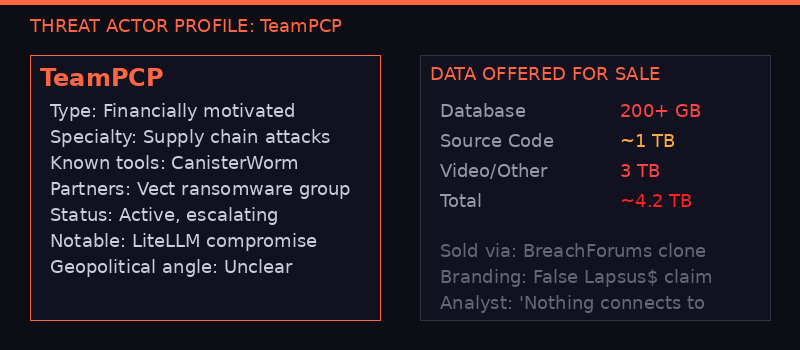

TeamPCP is a relatively new entrant to the cybercrime ecosystem, but they've been building momentum with alarming speed. Their specialty is supply chain attacks - compromising trusted software updates to backdoor downstream organizations at scale. The LiteLLM compromise is their most impactful operation to date, but it's part of a broader campaign that has been gaining sophistication.

The group operates with a dual motivation that makes them harder to categorize than typical cybercriminal outfits. They are primarily financially motivated - they extort victims, work with ransomware groups like Vect, and sell stolen data. But they've also shown a geopolitical dimension. TeamPCP has deployed a data-wiping worm called "CanisterWorm" through vulnerable cloud instances that have Farsi as their default language or clocks set to Iran's time zone. Whether this represents genuine political motivation or opportunistic targeting is unclear.

"TeamPCP is definitely financially motivated," Allan Liska, an analyst at the security firm Recorded Future who specializes in ransomware, told WIRED. "There might be some geopolitical stuff as well, but it's hard to determine what's real and what's bluster, especially with a group this new."

Adding confusion to the attribution picture, a group using the well-known name "Lapsus$" claimed this week to have breached Mercor separately. On a Telegram channel and a BreachForums clone, the actor offered to sell an array of alleged Mercor data: a 200-plus gigabyte database, nearly 1 terabyte of source code, and 3 terabytes of video and other information. But security researchers say the Lapsus$ branding is now used by multiple unrelated criminal groups, and Mercor's own confirmation of the LiteLLM connection points to TeamPCP as the actual attacker.

The data being offered for sale - if genuine - is extraordinary in its scope. Three terabytes of video likely includes recordings of AI training sessions, contractor onboarding calls, and possibly the elaborate world-building exercises that Mercor conducts. The source code could include Mercor's proprietary matching algorithms, quality-assessment systems, and project-management tools. The database could contain project metadata linking specific AI labs to specific training initiatives - the kind of competitive intelligence that would be worth millions to a state-sponsored operation or a rival AI lab.

Liska examined the dark-web posts and concluded: "There is absolutely nothing that connects this to the original Lapsus$." The real Lapsus$ group, which famously breached Nvidia, Samsung, and Microsoft in 2022, was largely dismantled after arrests in the UK. The name has since become a free-floating brand that any cybercriminal group can adopt for credibility.

The Human Toll: 30,000 Workers in Limbo

The breach's human cost: thousands of contractors face lost income and uncertain futures.

The breach narrative tends to focus on the corporate victims - Meta, OpenAI, Anthropic - but the most immediate human impact falls on Mercor's approximately 30,000 weekly contractors. These are the lawyers, engineers, writers, and specialists who produce the training data that powers the world's most advanced AI systems. Many of them were already in precarious employment situations before the breach.

The Verge's extensive reporting on Mercor's workforce paints a picture of an industry built on precarity. Contractors sign up for projects that can be canceled without warning. One worker described saving for first and last month's rent on a new apartment when her project was abruptly terminated with "no warning, no security, nothing." Another found himself recording fake phone conversations - pretending to ask a chatbot for fitness plans while pots and pans clanged in his kitchen - for what he assumed was voice-model training data. A former online tutor, replaced by a chatbot at his previous job, was hired to listen to dialogue clips slowed to 0.1x speed and mark speech boundaries to the millisecond.

These workers operate under strict NDAs and monitoring software. They typically don't know which AI lab they're working for or how their output will be used. The work is cognitively demanding - crafting "stumpers" that break frontier models requires deep domain expertise and creative thinking - but the employment relationship is as disposable as a gig-economy ride. Projects appear and vanish. Contracts offer no guarantees of continuity.

Now, with Meta's freeze affecting an unknown number of Mercor-staffed projects, contractors face the prospect of lost income through no fault of their own. The breach happened in Mercor's infrastructure, by way of a poisoned update to a third-party tool that contractors never touched. But they're the ones who stop getting paid.

This dynamic exposes a fundamental asymmetry in the AI industry's labor model. The companies at the top - Meta, OpenAI, Anthropic - can absorb a breach. Mercor, valued at $10 billion, will survive it. But the 30,000 workers who actually produce the data that makes these systems work have zero cushion. They're the most essential and most expendable link in the chain simultaneously.

The irony is sharp enough to cut: many of these workers were displaced from previous careers by the very AI systems they're now hired to train. Writers who lost content-marketing jobs to ChatGPT. Tutors replaced by chatbots. Engineers whose roles were automated. They found work teaching AI to do what they used to do - and now even that precarious lifeline is threatened by a security breach in the infrastructure that connects their labor to the companies that consume it.

The Competitive Intelligence Dimension: What China Could Learn

The breach's strategic dimension: training methodology insights could accelerate rival AI programs by months.

Strip away the cybersecurity jargon and the corporate crisis management, and the Mercor breach raises a question that should concern anyone paying attention to the AI race: what happens when the secret ingredient in America's most strategically important technology gets exposed?

The AI industry's competitive dynamics have shifted dramatically in the past 18 months. Model architectures are increasingly commoditized - transformer variants, mixture-of-experts, the basic building blocks are well understood. Compute is a differentiator, but one that money can buy. What increasingly separates a market-leading model from a fast follower is the quality and specificity of its training data - particularly the human-generated data that teaches models to reason, verify, and operate in specific professional domains.

This is exactly the data that Mercor handles. The company's LinkedIn page describes its mission as connecting "human expertise with leading AI labs and enterprises to train frontier models." Its recent posts reference an initiative called APEX-Agents, where Mercor-generated expert tasks were used to post-train a model that achieved state-of-the-art legal performance and, surprisingly, strong medical performance despite having zero medical tasks in its training set. The lesson: high-quality expert data in one domain can generalize to others. It's a finding with profound implications for anyone trying to build competitive AI systems.

If the Mercor breach exposed the specific types of tasks, rubrics, and evaluation criteria that OpenAI, Meta, or Anthropic use to train their models, that information could be extraordinarily valuable to Chinese AI labs like Alibaba's Qwen team, ByteDance, or DeepSeek. Not because it would give them the model weights - those were never at Mercor - but because it would give them the playbook. The methodology. The specific approaches to generating expert data that took American companies years and billions of dollars to develop.

There's no public evidence that any state-sponsored actor has accessed the breached data. TeamPCP appears to be financially motivated, not state-sponsored. But the data is now in the wild - or at least, portions of it are being offered for sale on dark-web forums. Once data leaves the vault, controlling who ultimately acquires it becomes far harder. A financially motivated hacker who sells to the highest bidder doesn't check the buyer's passport.

The U.S. government has spent enormous political capital trying to restrict China's access to advanced AI chips through export controls. It has sanctioned companies and blacklisted entities to prevent technology transfer. But the Mercor breach illustrates a vulnerability that no export control can address: the training methodology isn't hardware that can be interdicted at a port. It's information. And information, once breached, moves at the speed of a file transfer.

The Broader Pattern: Supply Chain Attacks on AI Infrastructure

The AI stack is built on layers of open-source dependencies. Each one is a potential attack vector.

The Mercor breach is not an isolated incident. It's the latest - and most consequential - in a pattern of supply chain attacks targeting AI infrastructure that has been accelerating throughout 2026.

TeamPCP's LiteLLM compromise is itself part of what security researchers describe as "a larger supply chain hacking spree in recent months that has been gaining momentum," according to WIRED's reporting. The group has been systematically targeting tools and dependencies that sit in the AI development pipeline - the connective tissue between AI labs and the services they depend on.

Earlier this year, a separate supply chain attack hit the npm ecosystem, targeting packages used in AI development workflows. Malicious code was injected into popular Node.js packages, compromising developers who installed them. The attack shared tactical similarities with TeamPCP's approach - poison a trusted dependency, inherit the trust of everyone downstream.

The pattern reveals a structural vulnerability in how the AI industry builds its infrastructure. Frontier AI labs invest billions in security for their model weights, training clusters, and inference infrastructure. But their operations depend on a vast ecosystem of open-source tools, third-party services, and vendor relationships that don't receive anywhere near the same level of security investment. LiteLLM is a prime example: it's an essential piece of plumbing that allows developers to manage API calls across multiple LLM providers, but it's an open-source project maintained by a small team. It's exactly the kind of dependency that sophisticated attackers target because the ratio of impact to effort is astronomical.

The SolarWinds parallel is instructive. In 2020, Russian intelligence compromised SolarWinds' software update mechanism and used it to push a tainted update to roughly 18,000 organizations, then infiltrated a smaller set of high-value targets among them, including the U.S. Treasury, the Department of Commerce, and the Cybersecurity and Infrastructure Security Agency (CISA) itself. The attack worked because SolarWinds sat at a chokepoint - its software was trusted by thousands of organizations, and that trust was inherited by the malicious update. LiteLLM occupies a similar position in the AI stack, and the Mercor breach demonstrates that the consequences of a supply chain attack at this layer can be just as severe.

What makes the AI supply chain particularly vulnerable is the speed at which it evolves. New tools, frameworks, and dependencies appear and gain adoption at a pace that outstrips security review processes. AI developers are under enormous competitive pressure to ship fast, and security often takes a back seat to velocity. The LiteLLM compromise exploited this dynamic - the tainted updates were installed by organizations that trusted the package and auto-updated without rigorous review.
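Auto-updating is usually a symptom of loose version constraints: a requirements file that names a package without an exact version or a hash will accept whatever is published next. As a rough illustration (a simplified parser, not a replacement for pip's own hash-checking mode), the hypothetical helper below flags requirement lines that lack an exact `==` pin or a `--hash=` entry.

```python
import re

# Matches "name==1.2.3", with optional extras such as "pkg[extra]==1.2.3".
_PINNED = re.compile(r"^[A-Za-z0-9._-]+(\[[^\]]+\])?\s*==\s*[^\s;]+")


def unpinned_requirements(requirements_text: str) -> list[str]:
    """Return requirement lines missing an exact '==' pin or a '--hash=' entry."""
    # Join backslash continuations so each requirement is one logical line.
    logical = requirements_text.replace("\\\n", " ")
    flagged = []
    for raw in logical.splitlines():
        line = raw.split(" #", 1)[0].strip()  # drop trailing comments
        if not line or line.startswith("#") or line.startswith("-"):
            continue  # skip blanks, comments, and pip options like "-r other.txt"
        if not _PINNED.match(line) or "--hash=" not in line:
            flagged.append(line)
    return flagged
```

A bare package name or a `>=` constraint would be flagged here, and so would an exact pin without a hash, since a version number alone does not prove the file is the one that was reviewed.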

The lesson is clear but uncomfortable: the AI industry has a supply chain security problem that no individual company can solve. It requires industry-wide coordination on dependency vetting, update verification, and incident response - the kind of collective action that competitive dynamics actively discourage.
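On the incident-response side, the first question after an advisory is simply whether a known-bad release is installed anywhere. A minimal sketch of that check follows, using Python's standard `importlib.metadata`. The advisory format and function names are assumptions for illustration, and the affected LiteLLM version numbers are not stated in the reporting, so none are filled in here.

```python
from importlib import metadata
from typing import Dict, List, Optional, Set


def installed_versions() -> Dict[str, str]:
    """Map each installed distribution name (lowercased) to its version."""
    versions = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:
            versions[name.lower()] = dist.version
    return versions


def find_compromised(
    known_bad: Dict[str, Set[str]],
    installed: Optional[Dict[str, str]] = None,
) -> List[str]:
    """Return 'name==version' for every installed package listed as known-bad.

    known_bad is a hypothetical advisory mapping: package name -> bad versions.
    """
    if installed is None:
        installed = installed_versions()
    hits = []
    for name, bad_versions in known_bad.items():
        version = installed.get(name.lower())
        if version in bad_versions:
            hits.append(f"{name}=={version}")
    return sorted(hits)
```

A check like this only covers the environment it runs in. Coordinated response would mean running it across every build image, container, and developer machine that might have pulled the update.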

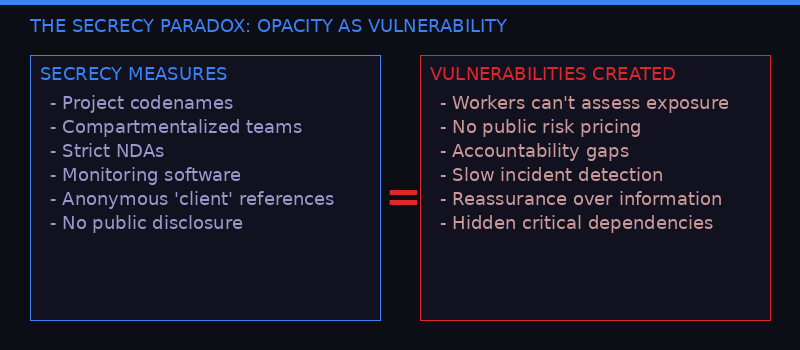

The Secrecy Problem: When Opacity Becomes Vulnerability

The AI industry's culture of secrecy around training data creates the very vulnerabilities it's designed to prevent.

There's a deep irony in the Mercor breach that goes beyond the usual cybersecurity narrative. The extreme secrecy that surrounds AI training data - the codenames, the NDAs, the compartmentalized project structures - is designed to protect competitive advantages. But that same secrecy creates the conditions that make breaches more damaging when they occur.

Consider the information asymmetry. Mercor's contractors don't know which AI lab they're working for. They don't know how their data is used. They don't know what other projects exist alongside theirs. This compartmentalization is intended as a security measure - if a contractor is compromised, they can only reveal information about their own project. But it also means that when a systemic breach occurs at the infrastructure level (as happened with LiteLLM), the contractors have no way to assess their own exposure. They can't take protective measures because they don't know what needs protecting.

The AI labs themselves maintain strict secrecy about their data-sourcing relationships. Until WIRED and The Verge began reporting on Mercor and its competitors in recent months, the general public had almost no visibility into what may be the largest harvesting of human expertise ever attempted. The companies producing the world's most powerful AI systems preferred to let people imagine that the magic happened entirely inside their own walls - that brilliant engineers and massive GPU clusters were the whole story.

The reality, as the Mercor breach exposes, is that these companies are deeply dependent on a fragile ecosystem of vendors, contractors, and open-source tools. Meta didn't just lose access to proprietary training data. It lost access to an entire workforce and project infrastructure that it had built its AI development roadmap around. That's not a minor supplier relationship. That's a critical dependency that was hidden behind layers of secrecy until a breach ripped the curtain away.

The secrecy also creates accountability gaps. When Mercor tells contractors that a project is being "reassessed" without explaining why, those contractors can't make informed decisions about their own employment. When Meta pauses all Mercor work without public explanation, the market can't price the risk. When OpenAI says the breach "in no way affects" user data without disclosing what data was affected, the public is left with reassurance instead of information.

Transparency wouldn't eliminate breaches. But it would create the conditions for faster detection, better risk assessment, and more accountable incident response. The current model - where everything is secret until it catastrophically isn't - serves nobody well.

What Happens Next: The Fallout That's Still Coming

The breach disclosure is barely a week old. The real consequences are months away.

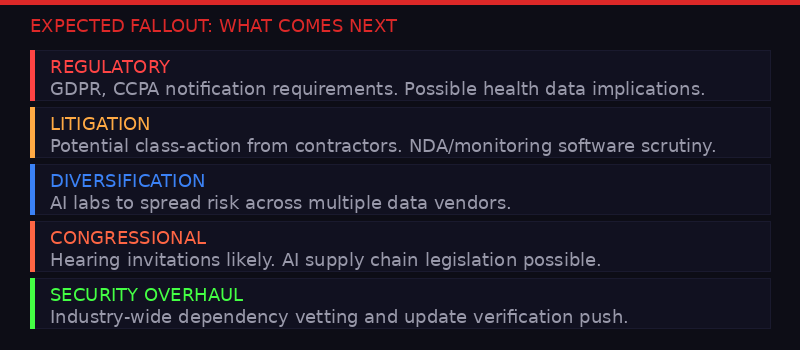

The Mercor breach is still in its early stages. The forensic investigation is ongoing, the full scope of data exposure is unknown, and the downstream consequences are still materializing. But based on the pattern of similar incidents, several outcomes are likely.

First, expect regulatory attention. The breach involves the personal data of thousands of contractors - their identities, employment records, and potentially their work product. Many contractors are based in Europe and Southeast Asia, so depending on the jurisdictions involved, Mercor may face notification requirements under GDPR for its European contractors, CCPA in California, and other national data-protection frameworks. If the breach exposed health-related training data - which is plausible given that Mercor trains models for medical applications - additional regulatory frameworks may apply.

Second, expect litigation. The AI training data industry operates in a legal gray zone where contractors sign broad NDAs and monitoring agreements but receive few protections in return. A breach that exposes their work product and personal information could trigger class-action lawsuits, particularly if contractors can demonstrate financial harm from lost employment or identity theft.

Third, expect the AI labs to diversify their data-sourcing relationships. The Mercor breach demonstrates the risk of concentration - when too much critical data flows through a single vendor, a breach at that vendor becomes a systemic event. Meta, OpenAI, and Anthropic will likely accelerate efforts to build redundant data-sourcing capabilities, either through alternative vendors (Scale AI, Surge AI, Handshake) or through in-house operations. This diversification will take time and money, potentially slowing the pace of model development in the short term.

Fourth, expect increased scrutiny of the entire AI data supply chain. The Mercor breach is a wake-up call for an industry that has treated training data as a competitive secret while failing to invest adequately in the security of the infrastructure that produces it. Congressional interest in AI safety has been growing. A breach that potentially exposed America's AI training methodologies to foreign adversaries is exactly the kind of incident that generates hearing invitations and legislative proposals.

Timeline: The Mercor Breach

- Late March 2026 - TeamPCP compromises two versions of LiteLLM, an open-source AI proxy tool used by thousands of organizations

- March 31, 2026 - Mercor confirms breach to employees via internal email, citing "a recent security incident that affected our systems along with thousands of other organizations worldwide"

- March 31-April 1 - Meta pauses all work with Mercor indefinitely. Contractors on Meta projects told they cannot log hours

- April 1 - Mercor's LinkedIn statement confirms LiteLLM supply chain connection and engagement of forensic experts

- April 2 - Actor using Lapsus$ name offers alleged Mercor data for sale: 200+ GB database, ~1 TB source code, 3 TB video/data

- April 3 - WIRED reports Meta freeze and OpenAI investigation. OpenAI confirms incident doesn't affect user data. Anthropic doesn't respond

- April 3 - Mercor employee tells contractors on Meta's "Chordus" project that scope is being "reassessed"

- Ongoing - Forensic investigation continues. Full scope of data exposure unknown

Finally, the Mercor breach should prompt a fundamental question about how the AI industry structures its most critical supply chains. The current model concentrates enormous value - proprietary training methodologies, expert-generated data, competitive intelligence about rival AI labs - in a small number of startup vendors that lack the security resources of the companies they serve. Mercor has 51-200 employees, according to LinkedIn. It's responsible for securing training data worth billions to companies worth hundreds of billions. That's a security mismatch that virtually guarantees future breaches.

The AI industry likes to talk about alignment - making sure AI systems behave in ways that serve human interests. The Mercor breach reveals that the industry hasn't even aligned its own supply chain security with the value of the assets flowing through it. Until it does, the question isn't whether there will be another breach of this magnitude. It's when.

Sources: WIRED (Maxwell Zeff, Zoe Schiffer, Lily Hay Newman), The Verge (Jay Peters, Hayden Field), Mercor LinkedIn, Recorded Future (Allan Liska), court filings, internal Mercor communications viewed by WIRED.
