Meta's Great Purge: AI Takes Over Content Moderation and Nobody Voted For It

Meta is replacing its human content moderation workforce with AI systems - right as the company faces closing arguments in a landmark child safety trial. At the same time, Signal's creator is building encrypted AI infrastructure into Meta's backend. Three overlapping crises, one company, zero public accountability.

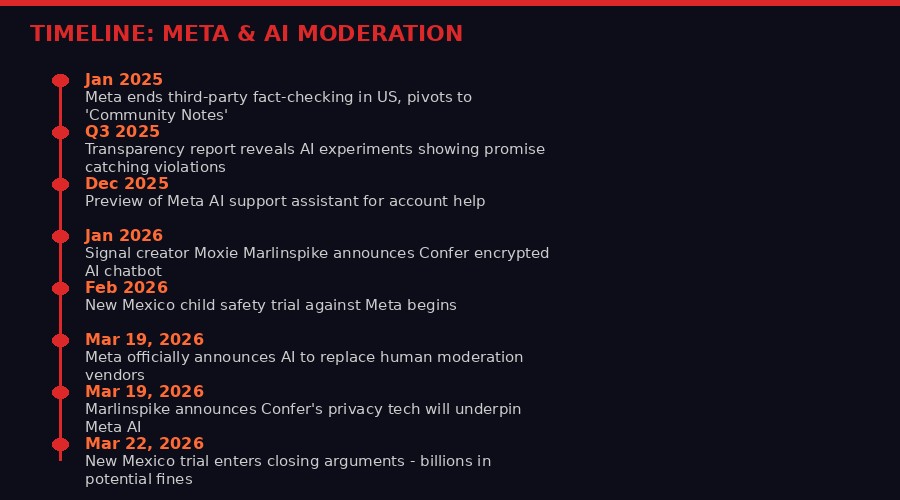

Three things happened in a single week that, read together, tell you exactly where the internet is headed - and who controls it. On March 19, 2026, Meta announced its AI content enforcement systems will gradually replace the third-party human vendor workforce that has been moderating Facebook and Instagram for years. The same day, Moxie Marlinspike - the cryptographer who invented Signal - announced he is working with Meta to bring end-to-end encrypted AI to the company's entire product ecosystem. And in a courtroom in Santa Fe, New Mexico, prosecutors were preparing closing arguments in a case that could cost Meta billions over its failure to protect children on those same platforms.

These three storylines are not separate. They are the same story. Meta is betting that AI can do what its human workforce could not - keep its platforms safe enough to survive the coming legal reckoning - while simultaneously being trusted with the intimate details of billions of users' lives. That is an enormous gamble. And users are not the ones making it.

The Announcement: What Meta Actually Said

Meta's announcement was careful, corporate, and designed to bury the most important news in paragraphs five through eight. The company framed everything in terms of capability uplift: its new AI systems can catch 5,000 scam attempts per day that existing systems miss, they reduced reports of celebrity impersonation by over 80 percent, they catch twice as much adult sexual solicitation content as human review teams, and they make 60 percent fewer mistakes in enforcement.

Those are impressive numbers. But the buried lede was this sentence: "As we do this, we'll reduce our reliance on third-party vendors for content enforcement."

Third-party vendors. That is the polite corporate phrase for the armies of contract workers - mostly located in the Philippines, Kenya, Colombia, and parts of Eastern Europe - who have been making the actual judgment calls on whether a post gets removed, whether an account gets banned, whether a photo crosses the line into child exploitation. These workers have been documented suffering from severe psychological trauma, including PTSD, from sustained exposure to violent and sexually abusive content. They are paid a fraction of what Meta's own engineers earn. And now, they are being replaced by machines.

"While we'll still have people who review content, these systems will be able to take on work that's better-suited to technology, like repetitive reviews of graphic content or areas where adversarial actors are constantly changing their tactics." - Meta official statement, March 19, 2026

The phrase "repetitive reviews of graphic content" is a remarkable piece of euphemism. What it describes is a human being looking at child sexual abuse material, beheading videos, or torture images, making a judgment call, clicking a button, and then doing it again. Thousands of times per shift. For years. The job has been described by multiple former moderators as permanently damaging to their mental health. Meta is now saying that AI will do this instead.

The AI systems Meta describes are genuinely sophisticated. They operate in languages covering 98 percent of people online - compared to the roughly 80 languages covered by previous systems. They understand "cultural nuance - including niche subcultures - rapidly changing and regionally specific code words, emoji meanings, and slang." That last capability is significant: adversarial actors have long exploited linguistic and cultural gaps in moderation systems to run scams, trafficking operations, and extremist recruitment campaigns in less-monitored languages.

Meta also describes a multi-layer capability that goes beyond simple keyword detection. The account-takeover example is illustrative: the AI noticed a pattern - new location login, password change, profile edits - that looked innocent in isolation but together signaled a compromise. That is genuine behavioral analysis, not just content filtering.
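To make the idea of combining individually innocent signals concrete, here is a minimal sketch. It is not Meta's system: the event names, the one-hour window, and the rule that all three signals must co-occur are assumptions chosen for illustration, since Meta has not published how its detection works.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical event names; Meta has not published its actual signals or thresholds.
RISK_SIGNALS = {"new_location_login", "password_change", "profile_edit"}


@dataclass
class AccountEvent:
    kind: str
    at: datetime


def looks_compromised(events, window=timedelta(hours=1)):
    """Return True if every risk signal occurs within a single time window."""
    relevant = sorted((e for e in events if e.kind in RISK_SIGNALS), key=lambda e: e.at)
    for i, first in enumerate(relevant):
        seen = set()
        for later in relevant[i:]:
            if later.at - first.at > window:
                break  # events are sorted, so nothing later can fit the window
            seen.add(later.kind)
        if seen == RISK_SIGNALS:
            return True
    return False


start = datetime(2026, 3, 19, 9, 0)

# All three signals inside twelve minutes: the suspicious pattern.
burst = [
    AccountEvent("new_location_login", start),
    AccountEvent("password_change", start + timedelta(minutes=5)),
    AccountEvent("profile_edit", start + timedelta(minutes=12)),
]

# One signal alone: looks innocent.
lone_event = [AccountEvent("password_change", start)]

# The same three signals spread over nine days: also looks innocent.
spread_out = [
    AccountEvent("new_location_login", start),
    AccountEvent("password_change", start + timedelta(days=3)),
    AccountEvent("profile_edit", start + timedelta(days=9)),
]
```

Under these assumptions, only `burst` is flagged. A production system would presumably weigh many more signals probabilistically rather than require an exact match, but the principle is the same: the pattern carries information that no single event does.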

The Trial: What the Courts Are Already Saying

The timing of Meta's AI moderation announcement is not a coincidence. On March 22, 2026, the New Mexico child safety trial entered closing arguments after six weeks of testimony that exposed, in granular detail, the failures of Meta's existing content moderation systems.

The case, filed in 2023 by New Mexico Attorney General Raul Torrez, is built on an unusually solid foundation. State investigators created undercover accounts posing as children and documented the speed and consistency with which they received sexual solicitations on Meta's platforms. They gathered internal Meta documents - thousands of pages of correspondence and reports - showing that company executives understood the risks to children and continued to minimize public disclosure of those risks.

The jury is weighing two counts of violating New Mexico's Unfair Trade Practices Act. If they find in the state's favor, civil penalties could reach $5,000 per violation - and with the number of social media views and users in New Mexico, prosecutors say that could add up to billions of dollars. A separate second phase, decided by a judge alone, would determine whether Meta created a "public nuisance" on the scale that would require court-ordered remediation.
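The arithmetic behind "billions" is simple enough to check. The sketch below takes only the $5,000 per-violation ceiling from the trial coverage; how the court would actually count a violation (per user, per view, or per misleading statement) is not settled here, so the targets are illustrative assumptions.

```python
PENALTY_PER_VIOLATION = 5_000  # dollars; the per-violation ceiling cited at trial


def violations_needed(target_dollars, penalty=PENALTY_PER_VIOLATION):
    """Smallest number of violations whose maximum penalties reach the target."""
    return -(-target_dollars // penalty)  # integer ceiling division


def max_exposure(violations, penalty=PENALTY_PER_VIOLATION):
    """Maximum civil penalty if every violation draws the full amount."""
    return violations * penalty


# Illustrative targets, not figures from the case.
for target in (1_000_000_000, 5_000_000_000):
    print(f"${target:,} requires {violations_needed(target):,} violations")
# prints:
# $1,000,000,000 requires 200,000 violations
# $5,000,000,000 requires 1,000,000 violations
```

In other words, 200,000 counted violations at the maximum penalty is enough to reach $1 billion, which is why the method of counting matters as much as the per-violation figure.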

"Safety is extremely important for the service and having it be something that people trust and want to use over time." - Meta CEO Mark Zuckerberg, video deposition shown at trial

The New Mexico case is not alone. A California jury was simultaneously deliberating on separate social media addiction cases, part of a series of bellwether trials that could determine the liability exposure for thousands of pending lawsuits. The New Mexico case is potentially the most dangerous for Meta because it sidesteps the Section 230 immunity shield that has protected tech companies from liability for user-posted content - by focusing instead on the company's own deceptive business practices.

The second-order implication is significant: Meta's AI moderation announcement could be read as evidence in future proceedings. If the company is now claiming AI can catch violations its human reviewers missed - including the kinds of child exploitation content at the heart of the New Mexico case - then plaintiffs in future cases have a straightforward argument: Meta knew better systems were possible and chose not to implement them sooner.

KEY LEGAL EXPOSURE - WHAT META IS FACING

- New Mexico trial: billions in potential civil penalties for deceptive practices related to child safety

- California bellwether cases: social media addiction liability for thousands of pending suits

- Section 230 challenge: New Mexico case may bypass the traditional immunity shield

- Class action from xAI/Grok CSAM lawsuit: separate but related pressure on AI image generation

- Pentagon vs Anthropic precedent: AI companies increasingly defined as national security infrastructure

The Encryption Paradox: Signal's Creator Goes to Work for Meta

At precisely the moment Meta is asking users to trust AI with the moderation of their content, the company is also bringing in the person most associated with the argument that you should never trust a tech company with your data.

Moxie Marlinspike created Signal, the gold-standard encrypted messaging platform, and the underlying Signal Protocol that now encrypts WhatsApp. In January 2026, he launched Confer, an AI chatbot with end-to-end encryption - the platform's developers cannot access user data, cannot train on it, cannot share it. On March 19, 2026, he announced he is working to integrate Confer's privacy technology into Meta AI.

"Already, AI chat apps have become some of the largest centralized data lakes in history, containing more sensitive data than anything ever before. We are using LLMs for the kind of unfiltered thinking that we might do in a private journal - except this journal is an API endpoint to a data pipeline specifically designed for extracting meaning and context." - Moxie Marlinspike, Confer blog post, March 19, 2026

Marlinspike is not wrong about the risk. AI chat systems are accumulating intimate data at a scale and depth that no previous technology has matched. People tell their AI assistants things they would not tell their therapists, their partners, or their lawyers. They upload medical records, financial documents, drafts of sensitive communications. And most AI systems, including Meta AI, currently store that data in cleartext on corporate servers - accessible to employees, governments via subpoena, and hackers.

The Confer solution is technically elegant. It uses client-side encryption so that the AI inference happens - somehow - without the server being able to see the plaintext of the conversation. The technical implementation involves what Marlinspike calls "private inference" and passkey-based encryption. The details are published on Confer's blog and have not been publicly broken by cryptographers.

But here is the paradox at the core of this deal: end-to-end encryption rules out server-side AI moderation of whatever it protects. You cannot simultaneously claim that AI systems are catching 5,000 scam attempts per day by analyzing content, and also claim that the same content is end-to-end encrypted so no one but the sender and recipient can see it. These two properties are in fundamental tension.

Meta's announcement threads this needle by saying the privacy technology would underpin "Meta AI" - the company's consumer chatbot - while the moderation AI applies to content posted to Facebook and Instagram. These are different products. But they share infrastructure, they share user identity, and they are moving toward deeper integration. The distinction between "your private AI conversation" and "your social media activity" is one Meta has every incentive to blur over time.
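The tension between encryption and server-side scanning can be shown with a toy example. This is a sketch under loud assumptions: the XOR one-time pad stands in for real end-to-end encryption (it is not the Signal Protocol or Confer's design), and the phrase matcher is a hypothetical stand-in for a content classifier.

```python
import secrets

# Hypothetical stand-in for a server-side classifier; real systems are far richer.
SCAM_PHRASES = (b"wire money", b"gift card")


def server_side_flag(payload: bytes) -> bool:
    """Flag a payload if it visibly contains a known scam phrase."""
    lowered = payload.lower()
    return any(phrase in lowered for phrase in SCAM_PHRASES)


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """Toy one-time-pad cipher: XOR each byte with a same-length random key."""
    return bytes(d ^ k for d, k in zip(data, key))


message = b"Please wire money to this account today"
key = secrets.token_bytes(len(message))  # held only by the two endpoints
ciphertext = xor_bytes(message, key)     # all the server ever receives

flag_plain = server_side_flag(message)       # the classifier sees the phrase
flag_cipher = server_side_flag(ciphertext)   # the classifier sees random-looking bytes
recovered = xor_bytes(ciphertext, key)       # only a key holder gets the text back
```

The classifier flags the plaintext, but the ciphertext gives it nothing to match against. Any moderation of encrypted content therefore has to happen on the user's device or not at all, which is a different architecture from the server-side enforcement Meta describes for Facebook and Instagram.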

What the Human Moderators Actually Did - and What Gets Lost

To understand what is being automated away, you need to understand what human content moderators actually did. The job was not simply pattern-matching against a list of prohibited content. It was contextual judgment across enormous cultural variation, at high speed, with severe psychological cost.

A post that shows a graphic image of violence might be documentation of a war crime - crucial evidence that should stay up - or it might be trauma porn being circulated for shock value. A seemingly innocuous conversation between an adult and a teenager might be grooming, or it might be a mentorship relationship. A meme using offensive language might be in-group reclamation from a marginalized community, or it might be targeted harassment. These distinctions require cultural knowledge, contextual reading, and sometimes professional-grade psychology.

Former Facebook moderators who spoke to journalists described making hundreds of these decisions per shift, under time pressure, with inadequate psychological support. Many reported developing PTSD symptoms - intrusive thoughts, sleep disruption, hypervigilance - from sustained exposure to the worst content humans produce. In 2020, Facebook settled a class action lawsuit from moderators for $52 million, acknowledging the psychological harm but not the structural conditions that caused it.

Meta's claim that AI "can take on work that's better-suited to technology, like repetitive reviews of graphic content" frames the psychological damage of the job as an inefficiency to be optimized away, rather than evidence of a structural problem with the volume of harmful content on its platforms.

The more honest framing would be: Meta built platforms that attract billions of users and generate billions in advertising revenue, and those platforms also attract predators, scammers, and people who post horrific content. Managing that harmful content required paying contractors to be psychologically damaged by it. Now Meta is offloading that function to machines, which do not experience psychological damage but also cannot experience moral weight, professional accountability, or the kind of situational empathy that sometimes prevents a judgment call from destroying an innocent person's account.

The Grok Lawsuit: What Happens When AI Is Given No Red Lines

The same week Meta announced its AI moderation expansion, three Tennessee high school students filed a federal lawsuit against Elon Musk's xAI, alleging that Grok's image generation tools were used to transform real photos of them - taken from yearbooks and homecoming pictures - into sexually explicit material.

The case illustrates, with brutal clarity, what happens when an AI company does the opposite of what Meta claims to be doing: instead of using AI to enforce content standards, xAI deliberately chose to use AI to lower them. Musk publicly promoted Grok's ability to generate "spicy" content as a competitive differentiator - a way to attract users who found other AI systems too restrictive.

The lawsuit alleges that xAI knew its image generation systems would be capable of producing sexually explicit images of minors but released them anyway. It claims there is currently no technical way to prevent the generation of explicit images of adults while completely blocking the generation of explicit images of children - a claim that, if accurate, means the product was deployed with a known child safety flaw baked in.

"She has difficulty eating and sleeping and suffers from recurring nightmares. Jane Doe 2 has begun self-isolating and avoiding being on her school campus, and even dreads attending her own graduation." - Federal lawsuit filed by three Tennessee teenagers against xAI, March 2026

The perpetrator in this case was not xAI - he was a classmate who used the tool. He has been arrested. But the lawsuit's central argument is that xAI is the proximate cause because it built and deployed a tool whose misuse for child sexual abuse imagery was foreseeable and preventable. This is the same argument structure being used against Meta in New Mexico - the company built something dangerous, knew it was dangerous, and deployed it anyway.

The contrast between Meta's current position - announcing ambitious AI safety improvements - and xAI's position - defending a product marketed partly on its ability to bypass safety restrictions - represents the two poles of the current AI content debate. One pole says AI is the solution to content moderation failures. The other says AI is the cause of content moderation failures. Both are true, depending on how the technology is configured and what incentives the company is responding to.

The Second-Order Effects: What This Architecture Actually Means

Beyond the immediate story, there are structural shifts happening that will take years to fully understand. Here are the ones worth watching.

The accountability gap. When a human moderator makes a wrong call - deleting legitimate journalism, banning a whistleblower account, missing a death threat - there is at least a theoretical chain of accountability. A human made a decision. That decision can be appealed. The human can be retrained or let go. When an AI system makes the same wrong call, the accountability chain dissolves into statistical distributions and training data choices made years earlier. Meta says it is keeping human oversight for "highest risk and most critical decisions" - but the definition of what counts as highest risk will be determined by Meta, not by regulators or users.

The language advantage and its limits. Meta's claim that its AI now operates in languages covering 98 percent of people online - up from roughly 80 languages previously - is genuinely significant for global safety. Trafficking operations and extremist recruitment have historically flourished in less-monitored languages. But language coverage is not the same as cultural understanding. A model that correctly parses Tigrinya syntax may still completely misread the social context of a Tigrinya-language post about a disputed election. The gap between linguistic capability and cultural competence is where the most consequential moderation failures will continue to happen.

The adversarial arms race. Every time a company announces new AI moderation capabilities, adversarial actors begin optimizing against them. The cat-and-mouse dynamic between platform safety teams and bad actors has been documented extensively - scammers and spammers have consistently found ways to evade automated detection within weeks of new systems deploying. Meta specifically notes this as a strength of AI - it can adapt faster to "constantly changing tactics." But adversarial actors can also use AI to generate evasion strategies. The race is accelerating in both directions.

The privatization of speech governance. The most important structural effect of replacing human moderators with AI is that decisions about what speech is permissible on the world's largest communication platforms become embedded in machine learning models that are trained, updated, and controlled by a single private company with no democratic accountability. This was already true when humans were making the calls - they were following Meta's policies. But human judgment introduces friction, inconsistency, and occasional acts of conscience that create pressure for policy review. AI systems apply policy with perfect consistency and zero friction. That makes them more efficient and more dangerous, simultaneously.

The Bigger Picture: AI Moderation as Infrastructure

What Meta is building is not simply a more efficient moderation system. It is digital governance infrastructure - a set of systems that will determine what three billion people can say to each other, what businesses can advertise, what news can spread, and what political speech is permitted on the largest communication network in human history.

The comparison to physical infrastructure is not hyperbole. When a city builds a water treatment system, the technical choices about what contaminants to filter and what levels are acceptable are not made by the company that builds the plant - they are set by public health regulators, informed by scientific consensus, subject to legal challenge and democratic revision. When Meta builds a content moderation system, those equivalent choices - what speech is harmful, how confident do we need to be before removing something, who bears the cost of false positives - are made internally, protected as trade secrets, and deployed at global scale before anyone outside the company can evaluate them.

The ongoing legal pressure - New Mexico, California, the xAI suits, the Anthropic-Pentagon case playing out in parallel - represents an early and still-primitive attempt by legal systems to impose some external accountability on these decisions. Courts are not the right mechanism for this. They are slow, expensive, reactive, and limited by jurisdiction. But they are what exists right now, and the cases being heard in 2026 will set precedents that shape how AI content systems are governed for the next decade.

Moxie Marlinspike's involvement with Meta is the most philosophically interesting thread in all of this. Marlinspike built his career on the principle that privacy is a structural property of systems, not a corporate promise - that encryption means the company cannot access your data even if it wants to, even under subpoena, even under national security letters. His decision to work with Meta implies he believes the urgency of bringing real privacy to AI chat outweighs the reputational cost of partnering with a company currently on trial for harming children.

That is not necessarily a wrong calculation. WhatsApp is genuinely end-to-end encrypted thanks to Marlinspike's earlier collaboration with Meta. Billions of people have better privacy than they would have had otherwise. If he can do the same for AI chat, the privacy benefit could be enormous. But the integration of encrypted AI chat with a platform that simultaneously uses AI to surveil and moderate public content creates a set of architectural tensions that have not been publicly worked through.

The question is not whether Meta is telling the truth when it says AI makes its platforms safer. Based on the numbers it has published, it probably does. The question is whether a single private company should be the one making those safety decisions for three billion people, using systems whose internal logic is not publicly auditable, whose failure modes are not publicly disclosed, and whose deployment is not subject to any meaningful external governance. That question is not being asked in any of the current courtrooms. It should be.

What Comes Next

The New Mexico trial will reach a verdict sometime in the coming weeks. If prosecutors win, the penalties will be significant and the precedent will be more significant. Future plaintiffs will have evidence that Meta's own executives acknowledged risks they did not disclose, that internal documents showed awareness of child exploitation patterns, and that better technical systems existed but were not deployed sooner. The AI announcement made this week will not help Meta's legal position in cases where the harm happened before the AI was deployed.

The xAI/Grok lawsuit will take longer to resolve. Its core technical claim - that there is no way to permit explicit adult content while blocking CSAM - is a factual assertion that will require expert testimony and technical discovery. If it holds up, it would effectively mean that any AI image generation system that permits explicit adult content is inherently producing a tool that can be weaponized against children. That would be a significant finding with consequences across the entire generative AI industry.

Marlinspike's Meta collaboration will be watched closely by cryptographers and privacy researchers. The technical implementation of private inference at Meta's scale has never been done before. If it works, it would be a genuine advance in applied privacy engineering. If the privacy claims turn out to be weaker than advertised - if there are exceptions for law enforcement access, if the encryption does not cover all conversation data, if the implementation is not independently audited - the backlash will be severe and Marlinspike's credibility, carefully built over two decades, will take a significant hit.

And the human moderator workforce that Meta is phasing out? There are no official numbers for how many people will be displaced. The contractor workforce is distributed across dozens of countries through multiple layers of vendor relationships, making it deliberately difficult to count. Based on industry estimates, the number is in the tens of thousands globally. Those jobs paid poorly, caused documented psychological harm, and are now being automated away - but they were jobs, with income, and the communities where they were concentrated have no obvious alternative.

The machine that replaces them does not need healthcare. It does not develop PTSD. It does not organize unions or file lawsuits about working conditions. From a corporate finance perspective, it is a better solution in almost every measurable way. From a governance perspective, it represents the largest transfer of speech authority from humans to machines in the history of the internet. And it happened in a week that most people spent watching basketball.

SOURCES

- Meta: Boosting Your Support and Safety With AI, March 19, 2026

- AP News: Landmark trial against Meta highlights mental health risks for children, March 22, 2026

- AP News: Tennessee teens sue xAI over deepfake sexual images, March 2026

- Moxie Marlinspike: Confer is bringing foundational AI privacy to Meta, March 19, 2026

- Meta Transparency Report Q3 2025

- The Verge: Meta AI moderation and content enforcement coverage, March 2026