Big Tech on Trial: Meta Faces Landmark Reckoning Over Children's Safety

March 23, 2026 | Los Angeles / Santa Fe

Two simultaneous trials are testing whether social media platforms can be held liable for engineering addiction in children. (Pexels)

In two courtrooms in two different states, juries are deciding whether Instagram built a machine that deliberately broke children's minds - and whether any amount of corporate disclaimers can paper over that.

Closing arguments began this week in what may prove to be the decade's most consequential tech accountability trials. In Los Angeles, 12 jurors are deliberating whether Meta's Instagram and Google's YouTube are liable for addicting a young woman to their platforms from early childhood, exacerbating depression and suicidal thoughts that followed her into adulthood. Simultaneously, in Santa Fe, New Mexico, a separate jury is weighing the state's claims that Meta knowingly created a "breeding ground" for predators who target children for sexual exploitation.

Both cases are heading toward verdicts at the same moment. Both draw on a trove of internal Meta documents that paint a picture of a company that understood exactly what it was doing to its youngest users - and kept going anyway.

The legal exposure is staggering. If plaintiffs prevail, the California case alone could shape thousands of similar lawsuits, including an estimated 13,000 individual cases consolidated in a federal multidistrict litigation (MDL). The New Mexico case carries potential civil penalties that prosecutors say could reach into the billions of dollars. And a third trial - brought by school districts rather than individuals - is scheduled for June in Oakland, California.

Silicon Valley is watching. So are the 40-plus state attorneys general who have already filed their own suits against Meta.

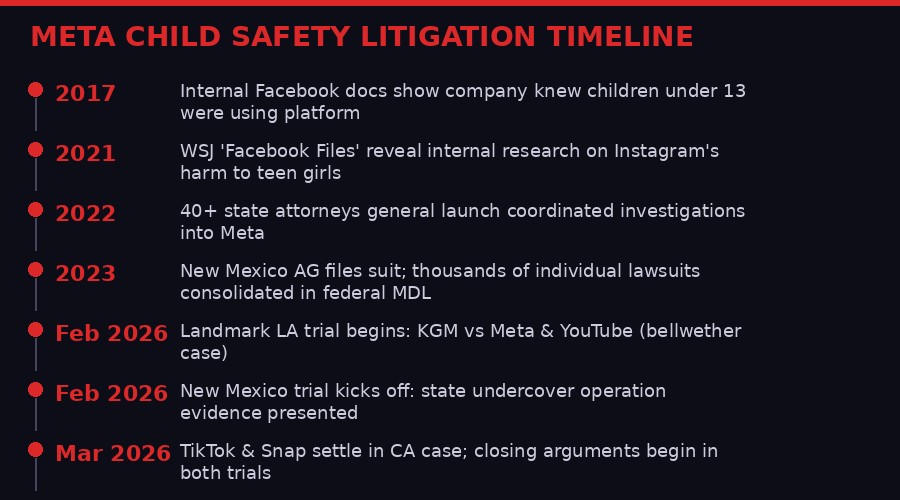

A decade of warnings, internal documents, and escalating legal pressure culminating in two simultaneous trials. (BLACKWIRE)

The California Bellwether: Engineering Addiction

The Los Angeles trial centers on how social media platforms engineered engagement for children who were not equipped to resist it. (Pexels)

The Los Angeles case is technically about one person - identified in court documents as KGM, and called "Kaley" by her attorneys. She is now 20 years old. She started using YouTube at age 6 and Instagram at age 9. Before she finished elementary school, she had posted 284 videos to YouTube.

Her lawsuit claims that the social media companies' deliberate product design choices - infinite scroll, notification dopamine loops, algorithmic amplification of emotionally charged content - addicted her to the platforms, worsened pre-existing mental health struggles, deepened depression, and contributed to suicidal ideation. It is a lawsuit about childhood, engineering, and corporate responsibility.

Plaintiff attorney Mark Lanier opened the trial with a framing that immediately set the stakes: he described Meta and Google as two of the richest corporations in history that "engineered addiction in children's brains." He cited internal Meta documents, including an email chain in which a Meta employee wrote that Instagram is "like a drug" and that the company's employees are "basically pushers." He also cited Project Myst, an internal Meta survey of 1,000 teenagers and their parents, which found that children who had experienced trauma or adverse life events were especially vulnerable to addictive usage patterns - and that parental supervision controls had almost no impact on reducing usage.

"Borrowing heavily from the behavioral and neurobiological techniques used by slot machines and exploited by the cigarette industry, Defendants deliberately embedded in their products an array of design features aimed at maximizing youth engagement to drive advertising revenue."

- KGM lawsuit filing, Los Angeles Superior Court

Meta's legal team countered with a more familiar argument: that Kaley had a troubled home life before she ever downloaded Instagram, and that the company cannot be responsible for mental health struggles rooted in family conflict, bullying, and circumstances entirely outside Meta's control. "Not one of her therapists identified social media as the cause," a Meta spokesperson said during the trial.

The tension at the heart of the case is one of causation, and Meta has leaned into it heavily: can any plaintiff prove that social media was a "substantial factor" in their harm, rather than just one of many hardships in a difficult childhood? The judge instructed jurors that social media does not have to be the only factor - just a substantial one. Lanier illustrated the point with a cupcake: baking soda is a small ingredient, he said, but it is still a substantial factor in whether the cupcake rises.

TikTok and Snap both settled before the trial reached a jury, for undisclosed sums. Meta and Google's YouTube are the remaining defendants. The jury deliberates on each defendant's liability separately.

New Mexico: The Undercover Operation That Exposed Meta

New Mexico investigators created fake child accounts on Meta platforms to document sexual solicitations - capturing evidence of predatory contact within hours of account creation. (Pexels)

While Los Angeles wrestles with the philosophical question of whether notifications are addictive, New Mexico is working through something more visceral: an undercover state investigation in which agents created social media accounts posing as children and documented sexual solicitation attempts in real time.

Attorney General Raul Torrez filed the New Mexico lawsuit in 2023, and the case has spent more than two years excavating internal Meta correspondence about child sexual exploitation. The prosecution argues that Meta's platforms function as a marketplace for predators - one the company designed, profits from, and has never adequately policed.

The most damning figure presented at trial: Meta's own internal estimate that approximately 100,000 children are subjected to sexual harassment on its platforms every single day. That number, extracted from internal documents during discovery, became a central piece of prosecution evidence. Meta executives acknowledged at trial that enforcement is imperfect, but said the company continuously improves safety systems and reports child sexual abuse material to the National Center for Missing and Exploited Children.

"We're going to have meaningful investments in targeted strategic programming around how you use the internet and how you use social media in ways that are responsible and healthy."

- New Mexico AG Raul Torrez, on requested court-ordered relief

Instagram head Adam Mosseri testified at the New Mexico trial, saying the company's philosophy is to "disclose the risks in a consistent and rigorous way" through blog posts, service agreements, and public communications. In a separate video deposition played at trial, Meta CEO Mark Zuckerberg described safety as "extremely important for the service" and noted that in 2017, Meta stopped linking business performance goals to the amount of time users spend on its platforms.

That 2017 date is telling. A year later, the company was still building features that critics say were designed to maximize time-on-platform for teenagers. The gap between Meta's stated values and what its internal documents show is the space prosecutors have been working in throughout six weeks of testimony.

Closing arguments in Santa Fe are scheduled for Monday. After that, the jury will weigh two counts of violating New Mexico's Unfair Trade Practices Act. A second phase, decided by a judge rather than a jury, will determine whether Meta created a public nuisance and should fund public programs to repair the damage - a potential financial obligation that legal observers describe as open-ended and difficult to cap.

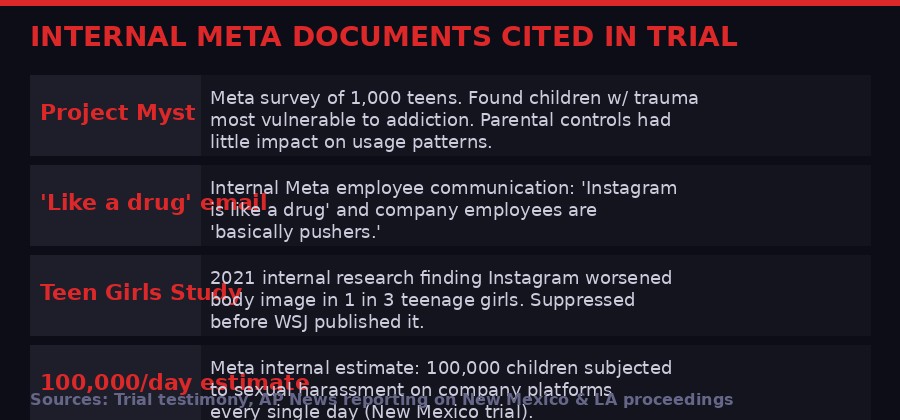

The documents that changed the case: internal Meta research that prosecutors say proves the company knew exactly what it was doing. (BLACKWIRE)

The Section 230 Problem - and How Plaintiffs Are Going Around It

The legal strategy in both cases deliberately avoids attacking Section 230 head-on - instead targeting product design and consumer protection law. (Pexels)

For decades, Section 230 of the 1996 Communications Decency Act has been the near-impenetrable shield that protects social media companies from liability for what their users post. The law was written to encourage the growth of the early internet, and it worked - spectacularly. It also created a legal structure that made it almost impossible to hold platforms accountable for harm that flowed through their systems.

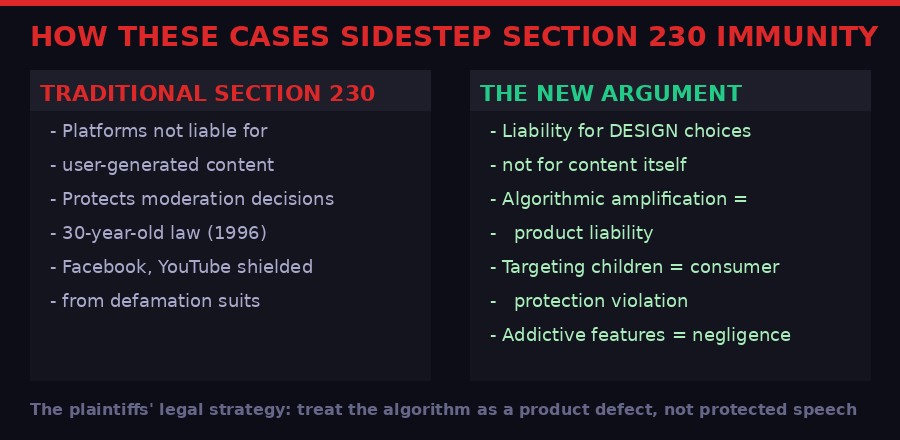

Both the California and New Mexico cases are constructed to sidestep Section 230 entirely, and the legal architecture is worth understanding because it is likely to become a template for every social media accountability case that follows.

The California case argues that Meta and YouTube are not being sued for content - they are being sued for the design of their products. Notification systems, infinite scroll, like counters, algorithmic recommendation engines - these are software engineering choices made by the companies themselves. They are not "content." They are products. And if a product is negligently designed in a way that causes harm, product liability law may apply regardless of Section 230 protections.

The New Mexico case argues from consumer protection law rather than tort law. The state is not claiming Meta is responsible for what predators said to children on its platform - it is claiming Meta made false and misleading representations about how safe its platform was for children, in violation of state consumer protection statutes. That is a different legal theory, and one that has historically been applied to physical products, pharmaceutical companies, and - crucially - the tobacco industry.

Clay Calvert, a nonresident senior fellow in technology policy studies at the American Enterprise Institute, described the bellwether approach as essentially a pressure test: "Both sides want to see how these arguments play out before a jury" before the full weight of thousands of similar cases comes to bear.

The legal theory underpinning both trials: treat the algorithm as a product defect, not as third-party content shielded by Section 230. (BLACKWIRE)

The Tobacco Parallel - and Why It Terrifies Big Tech

Legal observers are drawing direct comparisons to the 1998 Big Tobacco Master Settlement Agreement - a $246 billion reckoning that reshaped an entire industry. (Pexels)

Plaintiff attorney Mark Lanier has explicitly invoked the Big Tobacco comparison multiple times throughout the California trial. Legal experts have picked it up. And the parallel is not merely rhetorical - it maps onto the structural dynamics of both cases with uncomfortable precision.

In the tobacco cases of the 1990s, the decisive turn came when internal industry documents were produced in discovery showing that cigarette companies had conducted internal research for decades demonstrating that nicotine was addictive and that they had deliberately targeted children with marketing. The gap between what the companies said publicly and what they knew internally became the centerpiece of a fraud and consumer protection case that ultimately resulted in the 1998 Master Settlement Agreement - roughly $206 billion over its first 25 years (about $246 billion when combined with four earlier individual state settlements) and sweeping restrictions on marketing to minors.

The Meta documents that have emerged in these trials tell a structurally similar story. Project Myst identified children with trauma histories as the most vulnerable users - and the most valuable to retain. The "basically pushers" email suggests internal awareness of the addictive mechanics at play. The 100,000-children-per-day figure suggests internal awareness of exploitation that was never disclosed in any material way to users or regulators.

The legal test for "unconscionable" trade practices in New Mexico - which the prosecution has invoked - requires showing conduct that is "grossly unfair." Prosecutors argue that knowing about mass child sexual harassment, building features that maximize child engagement anyway, and then publicly claiming the platforms are safe for children meets that threshold.

Meta's attorneys have pushed back hard on the tobacco comparison, arguing that the science on social media addiction is far less settled than nicotine addiction, and that mental health is a complex, multi-factor phenomenon that cannot be reduced to a single platform. Several of Kaley's own therapists, Meta noted, never diagnosed her with social media addiction specifically - even those who believed such a diagnosis was theoretically possible.

Where the tobacco parallel falls short is scale. The tobacco litigation was fought largely over harm to individual smokers and the health-care costs states bore on their behalf. In the Meta cases, damages are potentially tied to the number of people who used the platforms while the company allegedly concealed risks - and in New Mexico alone, prosecutors say penalties could be calculated as multiple violations per user, multiplying into billions of dollars in civil penalties.

The Scale of the Coming Legal Wave

Whatever verdicts emerge from LA and Santa Fe will shape the trajectory of thousands of cases filed across the country. (Pexels)

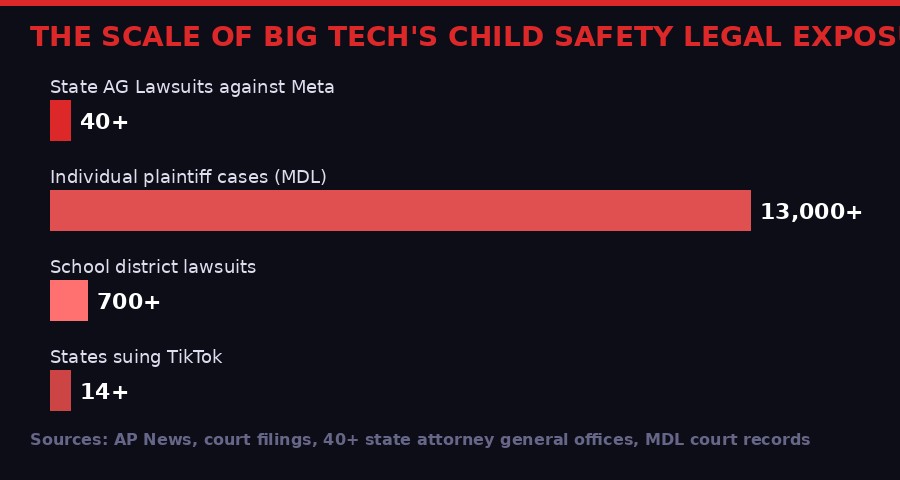

The scope of litigation facing social media companies right now is difficult to fully comprehend without a map.

In the federal multidistrict litigation consolidated in the Northern District of California, an estimated 13,000 or more individual lawsuits have been filed against Meta, TikTok, YouTube, and Snapchat. The LA and Santa Fe cases are not part of that MDL - they are state-level proceedings that have reached trial first because of procedural differences. But their outcomes will reverberate into the federal docket regardless.

A separate bellwether trial scheduled for June in Oakland will focus on claims brought by school districts - a different type of plaintiff with a different theory of harm, centered on the disruption to educational environments caused by smartphone and social media addiction among students. School districts have sued over costs they argue they have had to bear: counselors, mental health staff, disciplinary proceedings, and curriculum disruption, all of which they attribute to what students do on platforms before, during, and after school.

More than 40 state attorneys general have filed suits against Meta specifically, arguing that the company deliberately designed Instagram and Facebook to addict children. These cases span politically diverse states, suggesting the child safety argument cuts across the usual partisan divisions on tech regulation. Fourteen states have sued TikTok for similar reasons.

Sacha Haworth of the Tech Oversight Project, a nonprofit focused on platform accountability, described the LA trial's opening as the beginning of a reckoning that has been years in the making: "This was only the first case. There are hundreds of parents and school districts in the social media addiction trials that start today, and sadly, new families every day who are speaking out and bringing Big Tech to court for its deliberately harmful products."

The legal exposure facing social media companies extends far beyond these two trials - with thousands of cases waiting behind them. (BLACKWIRE)

What the Verdicts Actually Mean - Win, Lose, or Settle

Whatever happens in these two courtrooms, the structural reality of 13,000+ pending cases means Meta cannot simply litigate its way out of this reckoning. (Pexels)

The second-order effects of these verdicts will matter more than the first-order outcomes. Here is why.

If plaintiffs win in California, Meta faces a potentially significant damages award in a single case. But the more important consequence is that it validates the legal theory - that addictive product design can be treated as a product liability question rather than as third-party content shielded by Section 230 - across the thousands of cases waiting behind it. It also dramatically increases Meta's incentive to settle the federal MDL, potentially for eye-watering sums, rather than risk 13,000 individual jury trials.

If Meta wins in California, the inverse applies: a defense verdict significantly weakens the plaintiffs' position in the cases queued behind it, gives the company a powerful precedent to cite in the federal MDL, and likely prompts a wave of dispositive motions from defendants in other cases. It would not end the litigation - the New Mexico case and the attorney general suits operate on different legal theories - but it would slow the momentum considerably.

The New Mexico case adds a different dimension. If the jury finds that Meta violated consumer protection law and a judge subsequently rules Meta created a public nuisance, the court-ordered relief could include mandated changes to how Meta operates its platforms in the state - or potentially nationwide, depending on how broadly the injunction is written. That structural relief, rather than monetary damages, is what regulators in Europe have been trying to impose through legislation. A state court order could achieve similar ends through a different route.

There is also a middle path that is increasingly plausible: Meta settles both cases before verdicts come in, as TikTok and Snap did in the California case. Settlement would let the company avoid a formal legal precedent, control the narrative around damages, and insert non-disclosure agreements that limit how the evidence is used in future cases. The tobacco industry also tried to contain its exposure case by case, until coordinated suits by dozens of state attorneys general made piecemeal settlement impractical and pushed the industry toward a single nationwide deal.

The coordination factor is underappreciated. More than 40 state AGs filing suits simultaneously is not coincidence - it is an attempt to replicate the structural logic of the Big Tobacco settlement by ensuring no single settlement can resolve the full liability picture. If any of the bellwether trials produce plaintiff victories, those AGs are positioned to accelerate toward their own trials or negotiate from a position of established legal precedent.

For Meta specifically, the financial exposure is almost certainly manageable in the short term - the company's annual revenue now exceeds $160 billion, and it carries enormous legal reserves. The existential threat is not a single verdict. It is what structural court-ordered relief might require the company to change about its products. Features that monetize engagement at the expense of user wellbeing are baked deep into the business model. A court order to eliminate infinite scroll, cap notification frequency for users under 18, or disable algorithmic recommendations for teenagers would have revenue implications that dwarf any settlement figure.

"What is a lost childhood worth?"

- Mark Lanier, plaintiff attorney, in closing arguments to the LA jury

The Surveillance Architecture Underneath the Story

One aspect of these trials that has received less coverage than it deserves: the level of detail these cases have revealed about how social media companies actually track and model their users - including children.

The discovery process for thousands of lawsuits has forced Meta to produce internal documents at a scale that regulators have never previously achieved. Those documents reveal a level of behavioral modeling that goes far beyond what users consent to when they click "agree" on terms of service. Internal Meta research segments users by psychological vulnerability. Algorithms identify users who are in emotionally destabilized states and increase content delivery accordingly. Notification timing is calibrated to moments of maximum receptiveness.

This is not conjecture from plaintiffs' attorneys. It is described in Meta's own internal research, produced in discovery and entered into evidence. Project Myst's finding that children experiencing trauma were most vulnerable to addictive use patterns was not an academic observation - it was a targeting insight. The question the juries must answer is whether knowing that and building for it anyway constitutes negligence or fraud.

A story out of the Washington State Department of Licensing surfaced the same week: Spanish-speaking callers who pressed option 2 were routed to an AI voice speaking English with a strong Spanish accent, apparently because AWS's Polly service defaulted to a "Lucia" voice configured for Castilian Spanish. In this context, it reads as a reminder of how casually government and corporate systems deploy AI without adequate oversight or testing. The Meta cases are not about AI specifically, but the underlying dynamic is similar: systems designed without adequate thought for their human impact, catching vulnerable people in their gears.

Whatever juries decide in Los Angeles and Santa Fe, the documents that have now been made public will not be unread. State legislators, regulators in the European Union, and the Federal Trade Commission are all watching. The UK's Online Safety Act already imposes binding requirements on platforms to protect children. Australia's ban on social media accounts for children under 16, passed in late 2024, took effect in December 2025. The American legal cases are operating in a global context where the direction of travel is clear, even if the pace remains contested.

The era of social media companies setting their own terms for how they interact with children may be ending. Whether it ends through court verdicts, legislative action, or settled liability is still being decided - in real time, in two courtrooms in two states.

Key sources: AP News reporting by Cedar Attanasio, Barbara Ortutay, Morgan Lee (February-March 2026); New Mexico v. Meta Platforms, Inc., trial testimony and court filings; KGM v. Meta Platforms Inc. et al., Los Angeles Superior Court; American Enterprise Institute (Clay Calvert); Tech Oversight Project (Sacha Haworth)
