The two most powerful media companies in the world just lost the same argument in two different courtrooms within 48 hours of each other.

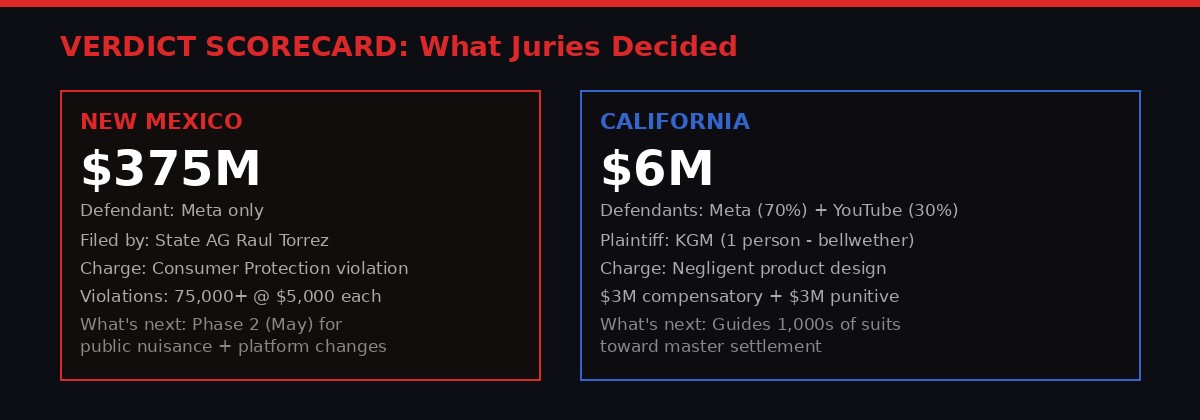

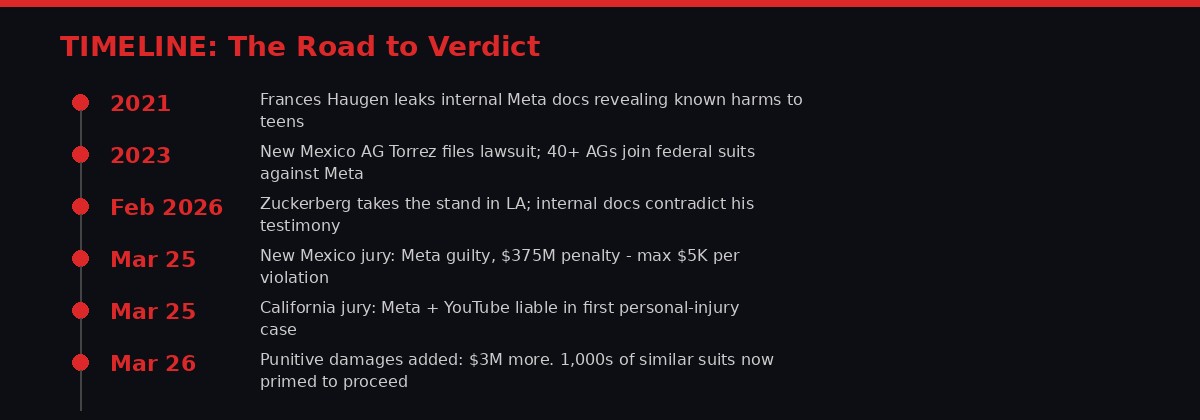

On Tuesday, March 25, a New Mexico jury ruled that Meta - the parent company of Instagram, Facebook, and WhatsApp - knowingly harmed children's mental health, concealed what it knew about child sexual exploitation on its platforms, and violated the state's consumer protection law. Penalty: $375 million. (AP, March 25, 2026)

The next day, a California jury in Los Angeles found both Meta and Google's YouTube liable for designing their platforms in ways that deliberately hook young users - and held them responsible for the resulting mental health damage to a now-20-year-old woman who grew up on their products. Additional penalty: $6 million, split 70-30 between Meta and YouTube.

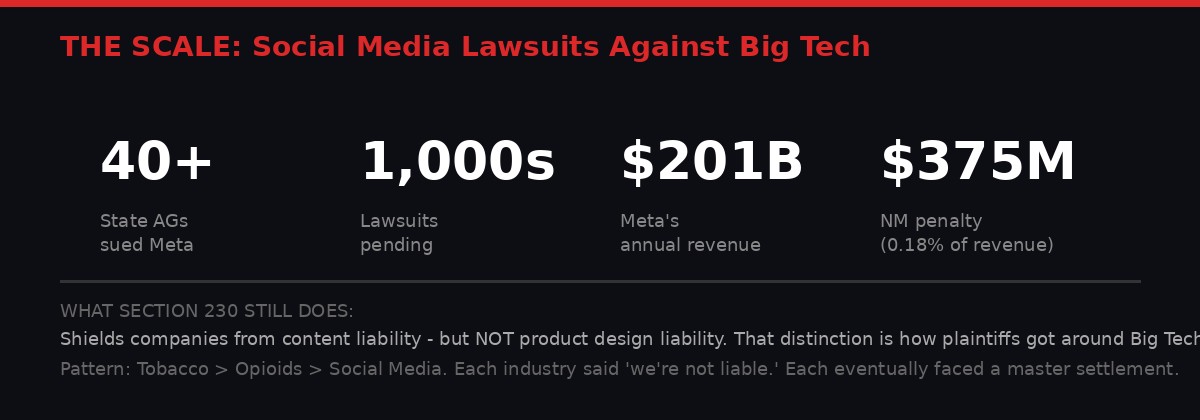

Combined, the two verdicts total $381 million. For Meta, a company valued at $1.5 trillion with $201 billion in annual revenue, that's less than a single day of sales. But the financial number isn't the story. The story is what comes next: thousands of lawsuits now primed to move forward, 40+ state attorneys general circling, and the first cracks in a legal shield that Big Tech has leaned on for three decades.
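For readers who want to check that comparison, here is a rough back-of-the-envelope sketch. The dollar figures are the ones quoted above; the 365-day year and the even daily spread of revenue are simplifying assumptions, and the variable names are purely illustrative.

```python
# Figures quoted in the article. Spreading revenue evenly over
# 365 days is a simplifying assumption, not how sales actually land.
NEW_MEXICO_PENALTY = 375_000_000
CALIFORNIA_DAMAGES = 6_000_000
META_ANNUAL_REVENUE = 201_000_000_000

combined = NEW_MEXICO_PENALTY + CALIFORNIA_DAMAGES
daily_revenue = META_ANNUAL_REVENUE / 365
days_of_sales = combined / daily_revenue

print(f"Combined verdicts: ${combined:,}")
print(f"Average daily revenue: ${daily_revenue:,.0f}")
print(f"Verdicts as days of revenue: {days_of_sales:.2f}")
```

The combined figure includes YouTube's share of the California award, so Meta's own exposure is slightly lower than the total used here.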

What the New Mexico Jury Decided

The Santa Fe verdict was the product of a nearly seven-week trial built on a quiet, methodical undercover operation. New Mexico state investigators created social media accounts posing as children. Then they waited. Sexual solicitations came quickly. Meta's response, the jury heard, was inadequate.

The jury agreed that Meta made false or misleading statements about platform safety - including public assurances from CEO Mark Zuckerberg, Instagram head Adam Mosseri, and Meta's global head of safety Antigone Davis. They also agreed that Meta engaged in "unconscionable" trade practices that deliberately took advantage of the vulnerabilities and inexperience of children.

"Meta's house of cards is beginning to fall. For years, it's been glaringly obvious that Meta has failed to stop sexual predators from turning online interactions into real-world harm." - Sacha Haworth, The Tech Oversight Project

The penalty came down to math: thousands of individual violations, each carrying a maximum $5,000 fine under New Mexico's Unfair Practices Act. Juror Linda Payton, 38, explained the jury's reasoning directly: each child affected was worth the maximum amount. Jurors settled on approximately 75,000 violations to reach $375 million - less than the $1.9 billion prosecutors had sought.
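The jury's arithmetic can be reproduced in a short sketch. It uses only the figures reported above and assumes every violation was assessed at the $5,000 maximum, as the juror described; the variable names are illustrative.

```python
# Figures reported in the article; assumes each violation
# was assessed at the statutory maximum fine.
MAX_FINE_PER_VIOLATION = 5_000
TOTAL_PENALTY = 375_000_000
SOUGHT_BY_PROSECUTORS = 1_900_000_000

implied_violations = TOTAL_PENALTY // MAX_FINE_PER_VIOLATION
share_of_request = TOTAL_PENALTY / SOUGHT_BY_PROSECUTORS

print(f"Implied violations: {implied_violations:,}")
print(f"Share of the amount sought: {share_of_request:.1%}")
```

On these numbers the penalty works out to roughly a fifth of what prosecutors asked for.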

Meta disagrees with the verdict and will appeal. The company says it "works hard to keep people safe" and maintains it has "a strong record of protecting teens online." (Meta spokesperson, AP, March 25)

What Meta cannot appeal away is Phase 2, scheduled for May. A judge - not a jury - will then determine whether Meta's platforms constitute a public nuisance. If so, the judge could mandate specific operational changes to how Meta's platforms function in New Mexico and potentially beyond.

The California Bellwether: One Woman, Billions of Dollars Riding on Her Case

The Los Angeles verdict carries different weight, but in some ways more dangerous weight for the tech industry.

The plaintiff, known by her initials KGM, was selected as a "bellwether" - a test case designed to see how a social media addiction argument plays with a jury before the industry faces thousands of similar lawsuits. Bellwether trials in tobacco and opioid litigation paved the way for master settlements worth billions. The same logic applies here.

KGM began using YouTube at age 6 and Instagram at age 9. By the time she testified, now 20, she described being on social media "all day long" as a child. Her lawyers argued that Meta and YouTube deliberately designed features to hook young users: infinite scroll feeds with no stopping cues, autoplay functions that eliminate natural exit points, algorithmic notifications calibrated to maximize return visits at psychologically vulnerable moments.

The jury deliberated for more than 40 hours. They came back with a verdict holding both companies negligent. They also decided both companies had adequate warning their platforms could be dangerous for minors and failed to warn users appropriately. Meta bore 70% of the responsibility. YouTube bore 30%.

"We wanted them to feel it." - Juror who asked not to be named, after the California verdict

The $3 million in compensatory damages was followed the next day by a recommendation for $3 million in punitive damages - an additional finding that Meta and YouTube had acted with "malice, oppression, or fraud." The judge has final say over the punitive amount.
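As a rough illustration of what the 70-30 allocation means in dollars, the sketch below applies the jury's split to the full $6 million. It assumes the same percentages apply to both the compensatory award and the recommended punitive amount, and that the judge leaves the punitive figure unchanged; neither is guaranteed.

```python
# Assumes the 70-30 fault split applies to both components and
# that the judge accepts the $3M punitive recommendation as-is.
COMPENSATORY = 3_000_000
PUNITIVE_RECOMMENDED = 3_000_000
FAULT_SHARE_PERCENT = {"Meta": 70, "YouTube": 30}

total_award = COMPENSATORY + PUNITIVE_RECOMMENDED
allocation = {
    company: total_award * percent // 100
    for company, percent in FAULT_SHARE_PERCENT.items()
}

for company, amount in allocation.items():
    print(f"{company}: ${amount:,}")
```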

Attorney Mark Lanier, who led the plaintiff's case, said the verdict sends a signal beyond the courtroom. TikTok and Snap had already settled before the trial began - reading the room, effectively. Meta and Google chose to fight. They lost.

How They Got Around Section 230

For three decades, Section 230 of the Communications Decency Act has been the tech industry's legal armor. The 1996 law shields platforms from liability for content posted by users. It's the reason Facebook can't be sued for what someone posts on it. It's the reason YouTube isn't liable for extremist videos it didn't film.

The social media addiction lawyers found the gap in the shield.

Section 230 protects platforms from content liability. It says nothing about product design liability. The lawsuits didn't argue that Meta was responsible for any specific post or video. They argued that Meta built a machine - with infinite scroll, with algorithmically-tuned notifications, with autoplay, with no effective age verification - that was designed to maximize engagement at the expense of user welfare. Especially young users.

That's a product defect argument. The same argument used against tobacco companies for designing cigarettes with higher nicotine delivery. The same argument used against opioid manufacturers for designing pills that were harder to titrate safely. Product design is not protected by Section 230.

Meta argued that KGM's mental health problems had other causes unrelated to social media. The jury disagreed, finding social media a "substantial factor" in causing harm. That finding is the key: once a jury says the product caused the harm, the platform's Section 230 argument becomes irrelevant.

The Witnesses Who Made the Case

The courtroom proceedings in both states produced testimony that will define how historians write about this era of the internet.

Mark Zuckerberg took the stand in Los Angeles. The exchange between him and plaintiff attorney Mark Lanier was electric. Lanier put three options to Zuckerberg for how a company can treat vulnerable users: help them, ignore them, or prey on them. Zuckerberg agreed the third option was unacceptable for a reasonable company. Lanier then spent days demonstrating that Meta chose it anyway.

When asked whether people use a product more if it's addictive, Zuckerberg said: "I'm not sure what to say to that. I don't think that applies here." (AP, court transcript)

Internal documents told a different story. Lanier produced files showing Meta had set explicit goals around time-on-platform before Zuckerberg claimed the company had moved away from such targets. Whistleblower Arturo Bejar, a former Meta engineering director who raised internal alarms for years before testifying to Congress in 2023, gave evidence that Meta's own researchers had documented harm to teen users and the company buried the findings.

"All Mark Zuckerberg accomplished with his testimony today was to prove yet again that he cannot be trusted, especially when it comes to kids' safety." - Josh Golin, Fairplay

In New Mexico, educators from local schools testified about sextortion schemes targeting students through social media platforms. One undercover investigator described creating a child account and receiving sexual solicitations within hours. Meta's safety systems failed to catch any of it.

Adam Mosseri, Instagram's head, also testified in Los Angeles. He faced particularly pointed questions about Instagram's beauty filter features - Lanier pointed out that all 18 external experts Meta had consulted raised concerns about the psychological harm of filters on teen body image. Mosseri said the company had a "high bar" for blocking expression tools. The jury interpreted that bar as set far too high.

The Families Behind the Numbers

The verdicts carry a dollar figure. They also carry names.

Walker Montgomery was 16 years old when someone posing as a teenage girl contacted him through Instagram and manipulated him into cybersex. Within hours, he was the target of a sextortion scheme. He killed himself on December 1, 2022.

His father, Brian Montgomery, a farmer and crop insurance salesman in Mississippi, was watching the verdicts come in. He said he felt a mix of joy and devastation simultaneously. Joy because something was finally happening. Devastation because Walker isn't coming back.

"We're talking about the most financially sound business that the planet has ever known. This will set an expectation." - Brian Montgomery, Walker's father

Deb Schmill's daughter Becca was 18 in September 2020 when she died of fentanyl poisoning from drugs she bought through a social media platform. The overdose followed a sexual assault by someone she had met online, and a subsequent campaign of revenge porn against her.

"That's the painful part of all of this," Schmill said after the verdicts. "If this could have been done five years ago, ten years ago. Things would be so different." (AP, March 26, 2026)

Neither Montgomery nor Schmill was a plaintiff in either lawsuit. They are parents who lost their children and turned that loss into advocacy. For the Parents SOS coalition - families who have watched children die in circumstances linked to social media - the verdicts represent a "watershed moment" they have been fighting toward for years.

What Changes Now - And What Doesn't

Meta's stock closed slightly up the day of the New Mexico verdict. Slightly down the week overall. Investors have not panicked. And there's a hard-edged reason for that: nothing changes immediately.

The California verdict doesn't mandate any specific platform changes. The New Mexico $375 million doesn't force Instagram to redesign its algorithm. Meta and YouTube are appealing. Appellate courts could reduce or overturn the penalties. The process will take years.

But legal precedent doesn't care about years. The California bellwether is now established: a jury said these platforms are negligently designed. That precedent follows every one of the thousands of pending cases into settlement negotiations. The benchmark has been set.

Peter Ormerod, an associate professor of law at Villanova University, called the California verdict "a momentous development" but cautioned it's "one step in a much longer saga." He noted it will take multiple additional bellwether verdicts, or unsuccessful appeals by the companies, before the industry faces anything like the multi-billion-dollar tobacco or opioid master settlements. (AP, March 25, 2026)

Arturo Bejar, the former Meta engineering director turned whistleblower, said the most effective path to actual platform change is regulatory, not litigation alone. "One thing that I saw working inside the company that effectively led to behavior change was when an attorney general or the FTC stepped in and required things of the company," he told AP. The 40-plus state AGs now pursuing federal suits have "an extraordinary opportunity and the ability to ask for meaningful change."

The Phase 2 trial in New Mexico, scheduled for May, is the most immediate mechanism for forcing tangible platform changes. A judge - unbound by jury dynamics - will determine whether Instagram and Facebook constitute a public nuisance in New Mexico. If so, that judge can issue injunctive relief: specific operational orders about how the platforms must function for users in that state. In practice, platform changes rarely stay state-specific. What Meta builds for New Mexico, Meta builds everywhere.

The Tobacco Playbook - And Why This Time Is Different

The comparison to tobacco litigation has been made so often it has become shorthand. But it holds up. And the current moment resembles, with uncomfortable precision, the early 1990s - before the 1998 master settlement that ultimately extracted $206 billion from the tobacco industry and rewired how cigarettes were marketed and sold in the United States.

For decades, tobacco companies denied that nicotine was addictive. Internal documents showed they knew otherwise. Whistleblowers testified. State attorneys general filed lawsuits. Early verdicts were overturned on appeal. Then the dam broke.

The opioid litigation followed the same arc: industry denial, internal documents proving otherwise, whistleblowers, state AG suits, years of appeals, then a reckoning.

Social media is following the script. The internal Facebook documents Frances Haugen leaked in 2021 revealed that Meta's own research showed Instagram worsened body image issues in teenage girls - and that Meta buried those findings. The Haugen disclosures were the social media equivalent of the tobacco industry's "Cigarette Papers."

There is one critical difference this time. The tobacco and opioid industries were selling physical products that delivered chemical compounds into the human body. The addiction mechanism was pharmacological. Social media's addiction mechanism is behavioral and psychological - the platforms exploit cognitive vulnerabilities, not chemical ones. That makes causation harder to prove. It's also why the California jury's finding that social media was a "substantial factor" in causing harm matters so much: it establishes that psychological design is legally equivalent to pharmacological design when it comes to liability.

"The era of Big Tech invincibility is over." - Sacha Haworth, The Tech Oversight Project

The billion-dollar question is whether the Kids Online Safety Act can finally move through Congress. The bill has passed the U.S. Senate twice. It has stalled in the House both times. The verdicts put new wind behind it. But Big Tech's lobbying apparatus is not standing down, and the current political moment - with the Iran war dominating Washington bandwidth - does not favor legislative attention to long-term regulatory frameworks.

The Global Picture: Europe, Australia, and What's Coming

The American litigation is happening against a backdrop of international regulatory pressure that has already moved faster than U.S. courts.

Australia passed legislation in late 2024 banning children under 16 from social media platforms entirely. Australia's eSafety Commissioner has enforcement powers the U.S. lacks. The European Union's Digital Services Act subjects platforms with over 45 million EU users to mandatory algorithmic audits, risk assessments, and "mitigation measures" for systemic risks - including risks to minors. The EU can fine platforms up to 6% of global annual revenue for violations.

Indonesia passed a social media ban for children under 16 in early 2026. Similar legislation is pending or recently enacted in Canada, France, and the United Kingdom. The United States, home to the platforms themselves, remains the laggard in child online safety regulation.

That gap is narrowing. The 40-plus state attorneys general pursuing federal claims against Meta are not coordinating out of coincidence. They are running a parallel track to the litigation - building regulatory pressure that can force platform changes faster than a court case can. The New Mexico verdict strengthens their hand in settlement negotiations, even with states that haven't yet gone to trial.

The European and Australian models also provide a template for what Phase 2 in New Mexico could mandate. Age verification is now technically achievable - Australia's rollout is demonstrating that. Algorithmic transparency is already required in Europe. Both of those changes, if ordered by a New Mexico judge, would be extraordinarily difficult for Meta to implement only within New Mexico. The practical pressure toward national compliance is enormous.

What Comes Next

The immediate calendar: Phase 2 of the New Mexico trial begins in May. A judge will hear arguments on public nuisance claims and potential injunctive relief. Meta will file appeals in both states - likely seeking to stay enforcement of the penalties pending appeal. In California, the judge will issue final rulings on the punitive damages recommendation before that case formally closes.

In the broader litigation landscape, the California bellwether result will drive settlement conversations across the thousands of pending cases. Lawyers on both sides will now run their own math: how many more bellwether trials does Meta want to risk losing? At what point does a master settlement become cheaper than continued litigation? The tobacco industry held out for decades. The opioid industry took years. Meta's calculation will be influenced heavily by whether future bellwether juries follow the same pattern as Los Angeles.

On Capitol Hill, the verdicts have injected new urgency into Kids Online Safety Act advocacy. Senator Richard Blumenthal, one of the bill's champions, called the verdicts "a profound validation" and said Congress must "finish the job." Whether that translates to floor time in a Senate consumed by the Iran war authorization debate is a different question.

For the platforms themselves, the short-term posture is clear: appeal everything, change nothing mandatory until forced. Meta's stock movement tells that story. Investors don't believe this week's verdicts will meaningfully disrupt Meta's business model in the next two years.

They may be right about two years. They may be wrong about ten. The tobacco master settlement took until 1998 - fully two decades after the first major liability cases were filed. But it happened. Every industry that has ever said "our product isn't causing the harm you allege" and had internal documents proving otherwise has eventually reckoned with those documents.

Meta has the documents. The juries have now seen them. The parents have been right all along.
