Guilty: How a California Jury Just Rewrote the Rules for Big Tech

Two landmark verdicts in one week. Instagram and YouTube found liable for addicting a child. A jury found malice. Punitive damages are still coming. And 13,000 more cases are watching.

The apps that became a generation's daily reality are now facing their biggest legal reckoning. (Pexels)

For fifteen years, social media companies operated under a simple legal assumption: they were neutral platforms, not publishers, not product manufacturers, not dealers. They were the pipes, not the water. If the water was toxic, that was somebody else's problem.

That assumption just collapsed in a Los Angeles courtroom.

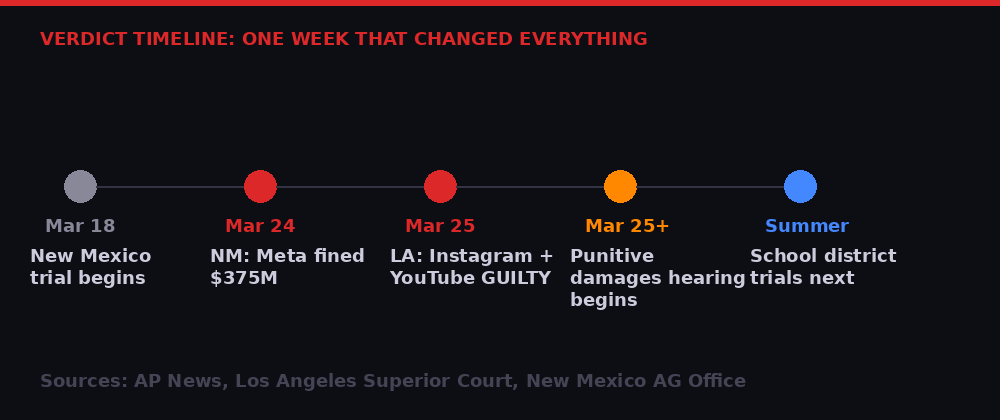

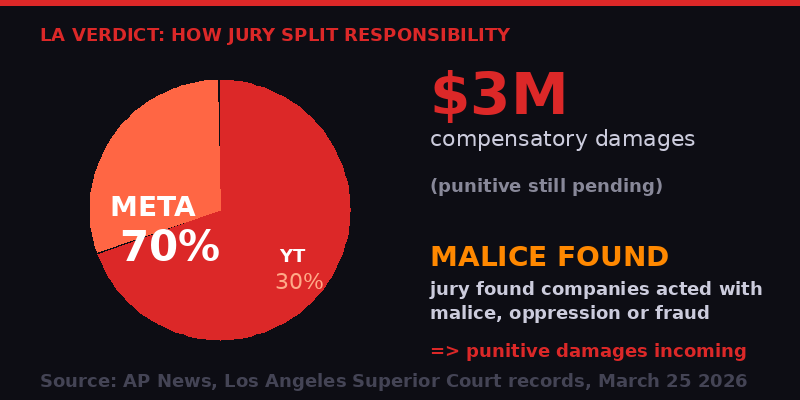

On Wednesday, March 25, 2026, a California jury found Meta's Instagram and Google's YouTube liable for negligence in the design and operation of their platforms, determining both companies knew their products were dangerous to minors and failed to warn of those dangers. The jury awarded $3 million in compensatory damages - and then found that both companies had acted with malice, oppression, or fraud. Punitive damages proceedings began the same day.

It was the second major verdict against Meta in two days. On Tuesday, a New Mexico jury hit the company with $375 million in penalties for child safety violations on its platforms. Two verdicts, two states, one week. And 13,000 more cases in the pipeline.

The social media industry's legal reckoning, years in the making, has finally arrived.

The Los Angeles verdict is a bellwether case - its outcome was designed to shape how thousands of similar lawsuits proceed. (Pexels)

The Case That Changed Everything

The Los Angeles plaintiff, identified in court documents as KGM - or "Kaley," as her attorneys called her throughout trial - is 20 years old. She began using YouTube at age 6 and Instagram at age 9. By middle school she was on social media, by her account, "all day long."

The resulting addiction, she testified, exacerbated depression and suicidal thoughts. She spent years in therapy. Meta argued, correctly, that social media was not the origin of her mental health struggles - she came from a turbulent home environment. But her lawyers weren't required to prove that social media was the sole cause. Under California law, they only had to prove that the platforms' negligent design was a "substantial factor" in causing her harm.

After nine days and more than 40 hours of deliberation, the jury agreed.

"I don't naysay the opportunity to make money, but when you're making money off of kids, you have to do it responsibly." - Mark Lanier, attorney for plaintiff, closing arguments

The jury determined that Meta held 70% of the responsibility for the plaintiff's harm; YouTube bore 30%. But what the dollar figures don't capture is the more significant finding: the jury found malice. That opens the door to punitive damages that could dwarf the compensatory amount - and the punitive hearing is happening now, this week.

Meta and Google both issued statements rejecting the verdict. Google's spokesperson argued that "the case misunderstands YouTube, which is a responsibly built streaming platform, not a social media site." Meta said it would appeal. Both companies suggested the litigation conflates the harms of life - troubled families, pre-existing mental health conditions - with product liability. Neither statement moved markets in a meaningful way. Both companies have lived with these lawsuits long enough that investors have priced them in.

What investors may not have priced in is the punitive damages phase - and what comes after it.

The verdict chronology: two landmark decisions in under 48 hours, with punitive damages and summer school district trials still ahead. (BLACKWIRE)

The Section 230 Problem - And Why It Didn't Apply Here

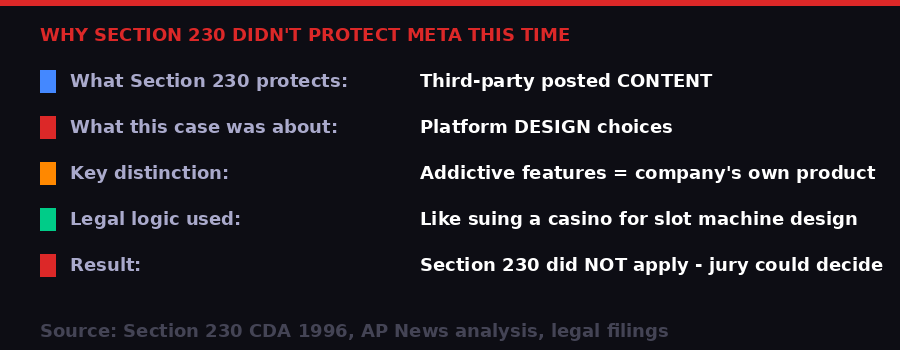

Section 230 of the 1996 Communications Decency Act is the single piece of legislation that made the modern internet possible. Its core protection is simple: online platforms are not legally responsible for content posted by their users. You cannot sue Facebook because a user posted something defamatory. You cannot sue YouTube because someone uploaded a terrorist recruitment video. The platform is the pipe, not the message.

That immunity has held up through decades of challenges. Courts have broadly interpreted Section 230 to protect platforms even when they algorithmically amplified harmful content. As recently as 2023, the Supreme Court refused to gut the law, though multiple justices signaled discomfort with its scope.

But the Los Angeles case was built around a crucial legal distinction: it was not about content. The jurors were explicitly instructed to ignore the content Kaley viewed. They were not evaluating whether Instagram showed her harmful posts. They were evaluating whether Instagram's product design itself - the infinite scroll, the notification system, the algorithmic feed tuned to maximize engagement - constituted a negligently designed product that harmed her.

That is a product liability claim, not a content liability claim. Section 230 does not apply.

The legal logic that cracked open Big Tech's immunity: platform design features are the company's own product, not third-party content. (BLACKWIRE)

This distinction is not new - scholars and plaintiff attorneys have argued it for years. But this is the first time it has produced a jury verdict. That matters enormously. Juries are harder to dismiss than academic papers. And when juries start finding that algorithmic design choices constitute actionable negligence, the entire architecture of how social media is built comes into question.

The infinite scroll - pioneered by Aza Raskin, who later became one of its most prominent critics - exists for one reason: to eliminate the natural stopping point that a paginated interface creates. There is no bottom. Autoplay does the same thing for video. The notification ping exploits variable-reward psychology identical to a slot machine's mechanism. These are not accidents or neutral design choices. Internal documents from both Meta and YouTube, introduced as trial evidence, showed engineers understood the addictive dynamics they were building.

"The facts don't allow that. The evidence has shown just the opposite." - Paul Schmidt, Meta attorney, rebutting the plaintiff's causation theory in closing arguments

The jury disagreed.

The infinite scroll - a design feature now at the center of product liability law. (Pexels)

The New Mexico Verdict: A Different Theory, a Bigger Fine

The New Mexico verdict the day before followed a different legal path to a similar destination. New Mexico Attorney General Raul Torrez sued Meta in 2023 under the state's consumer protection law rather than tort law. His team built the case by posing as children on Meta's platforms and documenting the sexual solicitations they received, then recording how the company responded (or failed to).

The jury found Meta liable for thousands of violations of the state's Unfair Practices Act - each violation carrying a separate penalty, totaling $375 million. The jury found that Meta made false or misleading statements about platform safety and engaged in "unconscionable" trade practices that exploited the vulnerabilities of minors.

$375 million sounds large. Against Meta's $201 billion in revenue for 2025, it is 0.19% - a rounding error. But the signal is more important than the dollar amount. This was a state attorney general, not a private plaintiff, and the theory of the case was deception and consumer protection violation, not product liability. That means every other state AG in the country now has a blueprint.
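The percentage above can be checked with a few lines of arithmetic. This is a minimal sketch using only the two figures quoted in this article; the "days of revenue" framing is an added illustration that assumes revenue is spread evenly across the year.

```python
# Figures as quoted in the article; treat both as approximate.
penalty_usd = 375_000_000        # New Mexico penalty total
revenue_usd = 201_000_000_000    # Meta's 2025 revenue as stated above

# Penalty as a share of annual revenue.
share_pct = penalty_usd / revenue_usd * 100
print(f"{share_pct:.2f}% of annual revenue")  # prints "0.19% of annual revenue"

# Same comparison expressed in days of revenue, assuming an even
# 365-day spread (a simplification made for illustration only).
days_of_revenue = penalty_usd / (revenue_usd / 365)
print(f"about {days_of_revenue:.1f} days of revenue")  # prints "about 0.7 days of revenue"
```

On these numbers, the penalty is less than a single day of revenue.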

Forty-two state attorneys general have already joined multi-state actions against social media companies. The New Mexico verdict gives every one of them new ammunition. If the formula - pose as a child, document what happens, sue under consumer protection law - worked in New Mexico, it will be tried in Texas, Florida, Ohio, Pennsylvania, and elsewhere.

State attorney general offices are watching the New Mexico verdict closely. Forty-two states have already joined multi-state actions against social media firms. (Pexels)

The 13,000 Cases in the Shadows

What makes this week different from every previous legal challenge to social media companies is not the verdict dollar amounts. It is the bellwether structure.

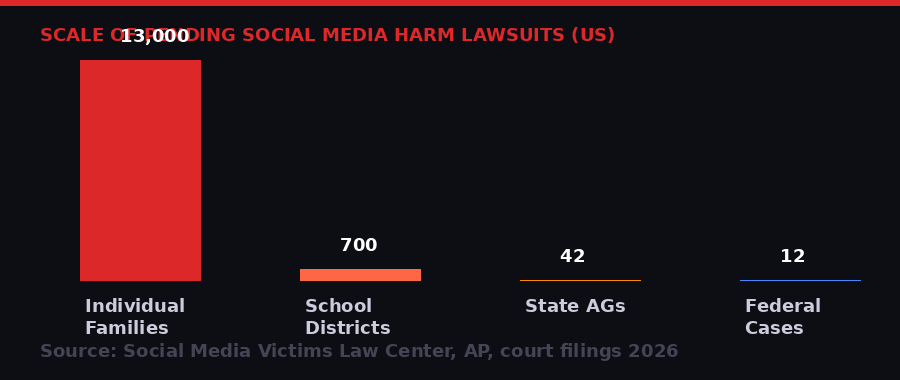

The Los Angeles case was specifically selected as a bellwether trial - a test case, representative of a broader docket, whose outcome signals how the larger mass of similar cases is likely to proceed. According to the Social Media Victims Law Center, which represents more than 1,000 plaintiffs, there are now more than 13,000 individual family lawsuits against social media companies pending in U.S. courts.

Those cases do not automatically resolve because Kaley won. But they are fundamentally changed. Settlement dynamics shift immediately when a defendant loses a bellwether. The risk calculus for Meta and Google just changed. Every attorney representing a family now has a verdict to point to. Every settlement negotiation just moved in favor of the plaintiff.

"This is a monumental inflection point in social media. When we started doing this four years ago, no one said we'd ever get to trial. And here we are trying our case in front of a fair and impartial jury." - Matthew Bergman, Social Media Victims Law Center

TikTok and Snap, the two other defendants originally named in the Los Angeles case, saw this coming. Both settled before trial began. Settlement terms were not disclosed, but the timing is telling - they bought their way out before a jury could rule on liability. That calculus will now look prescient to any company that hasn't yet settled and wrong to any company that chose to fight instead.

The scale of pending litigation against social media companies in the US - from individual families to school districts to state attorneys general. (BLACKWIRE)

Beyond individual families, a separate multidistrict litigation involving more than 700 school districts is scheduled for trial this summer before a federal judge in Oakland. School districts are suing on a different theory - they argue social media addiction has driven up mental health costs, increased counseling needs, and disrupted education. They want the companies to pay for those systemic harms.

The parallel to the opioid litigation is deliberate. Jayne Conroy, an attorney on the school district trial team who also worked opioid cases, described the logic explicitly: "The cornerstone of both cases is the same - addiction. These companies knew about the risks, they have disregarded the risks, they doubled down to get profits from advertisers over the safety of kids."

The opioid cases eventually extracted over $50 billion in settlements from pharmaceutical companies. Social media is bigger than opioids.

What the Dopamine Engineers Built

The technical mechanisms behind the addiction theory are not hypothetical. They are documented in academic literature, in internal company communications, and in testimony from former product engineers who built these systems and later regretted it.

The core mechanism is variable-ratio reinforcement - the same psychological principle that makes slot machines the most addictive form of gambling. Unlike fixed rewards (you pull the lever, you always get the same result), variable rewards create unpredictable patterns: sometimes the scroll reveals something interesting, sometimes it reveals nothing, sometimes it reveals something that triggers a social response. The unpredictability is the addiction. The brain's dopamine system evolved to respond intensely to uncertainty around rewards. Social media is an exploitation of that system at industrial scale.
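The difference between fixed and variable rewards can be shown with a toy simulation. This is only a sketch of the reward schedule itself, not of any platform's code or of how the brain responds. It models the variable case as a random-ratio schedule, one common way to approximate variable-ratio reinforcement, where each action pays off with a constant probability. The function names and the "one reward per five actions" rate are assumptions chosen for illustration.

```python
import random
import statistics

def gaps_fixed(n_rewards, every):
    """Fixed-ratio schedule: a reward arrives after exactly `every` actions."""
    return [every] * n_rewards

def gaps_variable(n_rewards, mean_every, rng):
    """Random-ratio schedule: each action pays off with probability
    1/mean_every, so the wait between rewards is unpredictable."""
    gaps = []
    for _ in range(n_rewards):
        count = 1
        while rng.random() >= 1 / mean_every:
            count += 1
        gaps.append(count)
    return gaps

rng = random.Random(0)  # seeded so the run is repeatable
fixed = gaps_fixed(1000, every=5)
variable = gaps_variable(1000, mean_every=5, rng=rng)

# Both schedules pay out about once per five actions on average,
# but only the variable one has any spread in the wait.
print("fixed    mean, spread:", statistics.mean(fixed), statistics.pstdev(fixed))
print("variable mean, spread:", round(statistics.mean(variable), 2),
      round(statistics.pstdev(variable), 2))
```

The average payoff rate is roughly the same in both cases. What differs is that under the variable schedule the next scroll might pay off immediately or not for a long while, which is the unpredictability the paragraph above describes.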

The infinite scroll eliminates the natural stopping point. Without pagination, the bottom of the feed never arrives. The next piece of content is always one thumb-flick away. Autoplay video does the same thing for YouTube - the decision to continue watching is replaced by the decision to stop, which is psychologically harder for humans to make than the decision to start.

Notification systems create artificial urgency. The ping is designed to be anxiety-producing. You do not know if it is a message, a like, a comment, or a tag until you look. The compulsion to look - to resolve the uncertainty - fires the same neural circuits as checking whether a trap has sprung.

The engineers who built these systems knew what they were building. Internal documents introduced at trial showed Meta and YouTube understood the addictive dynamics of their products. (Pexels)

Meta's internal research on Instagram's effects on teenage girls - leaked by whistleblower Frances Haugen in 2021 - showed the company's own teams found the platform worsened body image issues and depression in adolescent female users. The company knew. The question in this lawsuit was whether knowing, and continuing to deploy these features without adequate warnings, constituted negligence. The jury answered yes.

YouTube's defense was structurally different. The company argued it is not a social media platform but a video platform - more like television than Instagram. It pointed out that Kaley's YouTube use declined as she got older and that she spent only about one minute per day watching YouTube Shorts. That argument persuaded some jurors: YouTube only got 30% of the liability allocation, compared to Meta's 70%. But it did not spare the company from liability. The jury decided YouTube Shorts' infinite scroll and autoplay features still constituted a substantial factor in her harm.

The jury's responsibility allocation: Meta bears 70%, YouTube 30%. The finding of malice by both companies opens the door to punitive damages. (BLACKWIRE)

The Industry Reckoning: What Comes Next

The immediate financial exposure for Meta and Google from these specific cases is manageable. Meta has absorbed $375 million from New Mexico. The Los Angeles compensatory award of $3 million is trivial to a company with a $1.5 trillion market cap. Punitive damages are likely to be larger, but courts typically apply multipliers of 2-9x the compensatory amount in consumer harm cases, putting the LA punitive damages in the range of $6 million to $27 million. Still not material to Meta's financials.
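The punitive range quoted above follows directly from the multipliers. The sketch below reproduces it and compares the top of the range with the market cap cited in this article. The 2-9x range is this article's rule of thumb, not a legal formula, and the helper name is hypothetical.

```python
compensatory_usd = 3_000_000
low_multiplier, high_multiplier = 2, 9    # range quoted in the article
market_cap_usd = 1_500_000_000_000        # market cap as stated above

def punitive_range(compensatory, low, high):
    """Return the (low, high) punitive estimate for a multiplier range."""
    return compensatory * low, compensatory * high

low_usd, high_usd = punitive_range(compensatory_usd, low_multiplier, high_multiplier)
print(f"${low_usd:,} to ${high_usd:,}")  # prints "$6,000,000 to $27,000,000"

# Top of the range as a share of market cap.
high_share_pct = high_usd / market_cap_usd * 100
print(f"{high_share_pct:.4f}% of market cap")  # prints "0.0018% of market cap"
```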

The second-order effects are where the real damage accumulates:

Settlement pressure on 13,000+ cases. Even if Meta wins 90% of remaining lawsuits on appeal, settling the rest at modest amounts across thousands of families adds up quickly. More importantly, the reputational and operational cost of perpetual litigation at this scale changes how the company operates.

State AG actions in 42 states. The New Mexico blueprint is now proven. Each state that sues gets its own shot at Meta's revenue. Some will settle quickly. Some will demand operational changes - better age verification, mandatory parental controls, restrictions on algorithmic recommendation for minors - as conditions of settlement. These behavioral remedies are more costly than fines because they affect the revenue engine itself.

Congressional action re-energized. The Kids Online Safety Act (KOSA) has stalled multiple times in the Senate. Advocacy groups will use these verdicts as ammunition to push it over the line. KOSA would impose a duty of care on platforms to act in minors' best interests and limit certain algorithmic features for users under 17. The industry has lobbied against it successfully. Those arguments just got harder to make.

Product liability precedent expanding. If algorithmic feed design constitutes a negligently designed product, what else does? The recommendation system for autoplay? The engagement-optimizing ranking algorithm? Targeted advertising directed at minors? Each of these is a design choice made by engineers, not content posted by users. Section 230 protects none of them.

The Opioid Parallel

The opioid litigation began with individual personal injury cases, moved to state attorney general actions, and culminated in $50+ billion in settlements that forced structural changes in how opioids were manufactured, distributed, and prescribed. Social media companies are following the same trajectory, with three key differences: their revenue base is far larger, their products are used by far more people, and the addiction mechanism is delivered to users at a younger age than any opioid ever was.

Google and Meta are also watching what happens to TikTok, which settled before the LA verdict but now faces renewed scrutiny. TikTok's short-form vertical video format is specifically designed around the dopamine loop that is now being litigated. Its settlement may look like a bargain if punitive damages in the LA case are substantial - or it may reflect sophisticated legal judgment about what was actually at stake.

Snap, which also settled, is in a precarious position. Snapchat's streaks feature - which counts consecutive days of message exchange and displays a flame emoji that disappears if you break the streak - is one of the more nakedly manipulative psychological tools in social media. You do not need an expert to explain why a 13-year-old feels compelled to maintain a streak. The fact that Snap settled rather than defend that design choice speaks clearly.

The plaintiff in the Los Angeles case began using YouTube at age 6 and Instagram at age 9. Her experience - documented over a month-long trial - became the legal lens through which a jury examined Silicon Valley's design choices. (Pexels)

The Design Choices on Trial

Understanding what exactly was adjudicated in Los Angeles requires understanding what "platform design" actually means as a legal object. This is relatively new conceptual territory for courts, and the Los Angeles verdict is likely to be studied in law schools for years.

The plaintiff's attorneys identified several specific design features as the proximate cause of harm. The infinite scroll was central - a deliberate decision to remove the natural endpoint from content consumption. Users of paginated sites, studies show, stop scrolling when they reach the bottom of a page. An endless feed never offers that stopping signal. The brain, wired to seek completion, never gets it.

Notification systems were the second major feature. Push notifications are engineered to create anxiety-based compulsion - the fear that something social is happening without you. Instagram's notification design evolved toward maximizing opens, not toward user wellbeing. Internal documents showed the company tested notification frequency and adjusted it based on engagement metrics, not on user-reported satisfaction or mental health outcomes.

The algorithmic feed - which replaced chronological feeds on both Instagram and YouTube - was the third element. Chronological feeds are ordered by time; you see what the people you follow posted, in the order they posted it. Algorithmic feeds are ordered by predicted engagement - you see what a machine thinks will keep you watching longest. That machine is optimizing for advertising impressions, not for your mental health. Showing a depressed teenager content related to depression is not a side effect of an algorithmic feed - it is a feature, because depressed teenagers engage intensely with content that mirrors their emotional state.
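The contrast between the two orderings is easy to see in code. This is a deliberately simplified sketch, not either company's ranking system: the `Post` fields, the sample data, and the single `predicted_engagement` score are all hypothetical stand-ins for what is in practice a far more complex model.

```python
from dataclasses import dataclass

@dataclass
class Post:
    author: str
    posted_at: int               # hypothetical timestamp, larger = newer
    predicted_engagement: float  # hypothetical model score in [0, 1]

posts = [
    Post("friend_a", posted_at=300, predicted_engagement=0.10),
    Post("friend_b", posted_at=200, predicted_engagement=0.85),
    Post("friend_c", posted_at=100, predicted_engagement=0.40),
]

def chronological_feed(items):
    """Newest first: the order depends only on when something was posted."""
    return sorted(items, key=lambda p: p.posted_at, reverse=True)

def engagement_feed(items):
    """Highest predicted engagement first: the order depends on a model's guess."""
    return sorted(items, key=lambda p: p.predicted_engagement, reverse=True)

print([p.author for p in chronological_feed(posts)])  # ['friend_a', 'friend_b', 'friend_c']
print([p.author for p in engagement_feed(posts)])     # ['friend_b', 'friend_c', 'friend_a']
```

The same three posts produce two different feeds. In the second, what the user sees first is determined entirely by whatever the scoring model is built to maximize.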

"With the social media case, we're focused primarily on children and their developing brains and how addiction is such a threat to their well-being. The medical science is not really all that different from an opioid or a heroin addiction. We are all talking about the dopamine reaction." - Jayne Conroy, plaintiff attorney in school district litigation, AP News

YouTube's defense that it is a television platform rather than a social media platform almost worked. It convinced two of the twelve jurors. But the existence of YouTube Shorts - vertical, autoplay, infinite scroll short-form video launched in 2020 - undermined the television analogy. Television does not auto-advance. It does not know you are sad. It does not serve you an algorithmically curated feed of increasingly extreme content based on your watch history. YouTube Shorts does all of those things. The jury noticed.

What Zuckerberg Testified - And What It Revealed

Mark Zuckerberg's courtroom appearance was unusual by any measure. CEOs of $1.5 trillion companies rarely appear in person at civil trials. Meta calculated, apparently, that Zuckerberg's presence would humanize the company and deflect the narrative that Meta was an uncaring corporation that knowingly harmed children. The calculation may have backfired.

Zuckerberg testified that Meta had invested "billions" in safety features and that the company took youth mental health seriously. He described parental supervision tools, content moderation efforts, and age-verification attempts. Under cross-examination, however, he was confronted with internal communications showing that safety teams had flagged specific concerns about teenage users that were then deprioritized in favor of engagement-focused product updates.

The gap between what Meta says publicly about safety and what its internal teams documented about known harms was the core of the plaintiff's case. Zuckerberg's testimony highlighted that gap rather than closing it. The jury's finding that Meta acted with malice, oppression, or fraud suggests jurors did not find his humanization effort persuasive.

Instagram chief Adam Mosseri also testified. Mosseri has been Instagram's public face on teen safety issues for years, testifying before Congress and giving media interviews about the platform's safety investments. His courtroom testimony covered similar ground. Internal documents contradicting his public statements were introduced by the plaintiff's team. The jury had access to both versions of Instagram's story - the public-facing one and the internal one - and chose to believe the internal one.

Mark Zuckerberg and Instagram chief Adam Mosseri both testified during the month-long Los Angeles trial. The jury ultimately found Meta acted with malice. (Pexels)

The Regulatory Vacuum - And Who Might Fill It

The United States does not have a comprehensive federal children's online safety law. The Children's Online Privacy Protection Act (COPPA), passed in 1998, prohibits collecting data from children under 13 without parental consent. It was a meaningful law for its era. It has not kept up with the architecture of algorithmic social media, where the harm is not data collection but behavioral manipulation.

The Kids Online Safety Act (KOSA) has been introduced in multiple sessions of Congress. Its core provisions would establish a "duty of care" for platforms to act in the best interests of minors, require parental controls, and limit certain algorithmic features for users under 17. The social media industry has spent heavily lobbying against it. Civil liberties groups have raised concerns about overreach. It has not passed.

The European Union moved faster. The EU's Digital Services Act, now in force, imposes systemic risk assessments and mitigation obligations on very large platforms. Meta and YouTube are already subject to EU investigations under the DSA specifically related to youth protection and addictive design. The Los Angeles verdict will accelerate European enforcement timelines.

In the absence of federal legislation, states are filling the void. Beyond New Mexico's consumer protection action, Utah passed the Social Media Regulation Act requiring age verification and parental consent for minors. Arkansas passed similar legislation. These laws are being challenged in court by industry groups, but the legal terrain just shifted. "We have safety features" is a harder argument to make after a jury has seen the internal documents.

The Federal Trade Commission has also shown renewed interest in social media and youth. Lina Khan, who chaired the FTC until the Trump administration's restructuring of the agency, built a record of investigating Meta's data practices. The Biden-era FTC proposed amending COPPA to add protections. That effort stalled under the current administration, which has generally moved away from consumer protection enforcement at the federal level. State AGs and private litigation are now carrying the load that federal regulation left behind.

The Second-Order Effects Nobody Is Talking About

The most consequential outcome of this week's verdicts may not come from Meta or YouTube at all. It may come from the next generation of social media entrepreneurs watching this case and making different design decisions because they understand the legal exposure that infinite scroll and algorithmic amplification now carry. The best regulation is sometimes the threat of regulation - and the best product safety incentive is sometimes a jury finding malice against the last company that built the same features.

What the Los Angeles and New Mexico verdicts establish, for the first time at the jury level, is that social media platforms are not immune from the basic rules of product liability that apply to every other industry. If you build something you know is dangerous to children and you fail to adequately warn of that danger, you are liable. The cigarette industry learned this. The opioid industry learned this. Social media is learning it now.

The punitive damages hearing will deliver its result before the end of this week. However large or small the number, it will be overshadowed by what happens next: 13,000 families, 700 school districts, and 42 state attorneys general all received the same message from a Los Angeles jury today.

You can win.

Sources: AP News (March 25, 2026), Los Angeles Superior Court trial records, New Mexico AG Office press statements, Social Media Victims Law Center, Frances Haugen whistleblower testimony (2021), Section 230 of the Communications Decency Act (1996), EU Digital Services Act enforcement records, Congressional Research Service analysis of KOSA.