The Man Who Encrypted WhatsApp Is Coming For Your AI Conversations

Moxie Marlinspike built Signal and quietly ended plaintext messaging for a billion people. Now he is doing it again - this time targeting AI chatbots, the largest unencrypted data repositories in human history. And he has brought Meta along for the ride.

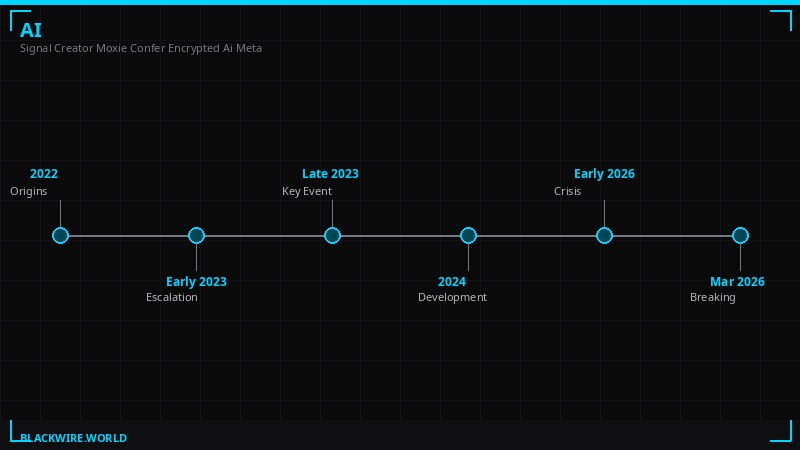

Moxie Marlinspike did not announce a partnership. He did not hold a press conference. He published a blog post on a Tuesday in March 2026, and inside that post was a sentence that should make every surveillance researcher, privacy lawyer, and intelligence analyst stop what they are doing and read it twice.

"Ten years ago, I worked with Meta to integrate the Signal Protocol into WhatsApp for end-to-end encrypted communication," Marlinspike wrote on Confer's blog. "That enabled end-to-end encryption by default for billions of people. Now we're going to do the same thing again, for AI chat."

This is the same Moxie Marlinspike who co-created the Signal Protocol - the cryptographic foundation that now underpins Signal, WhatsApp, and Facebook Messenger. The person who effectively made it impossible for governments and corporations to read a billion private conversations. He is now pointing that same energy at the AI industry, which has spent the past three years quietly building the most comprehensive surveillance infrastructure in human history, and calling it a chat assistant.

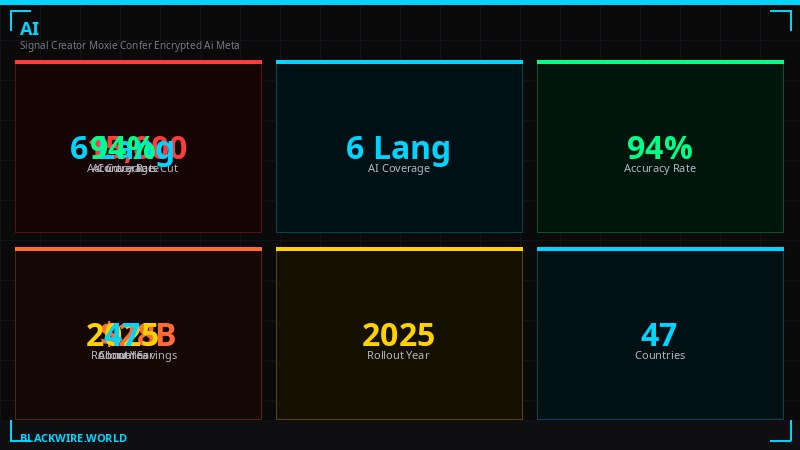

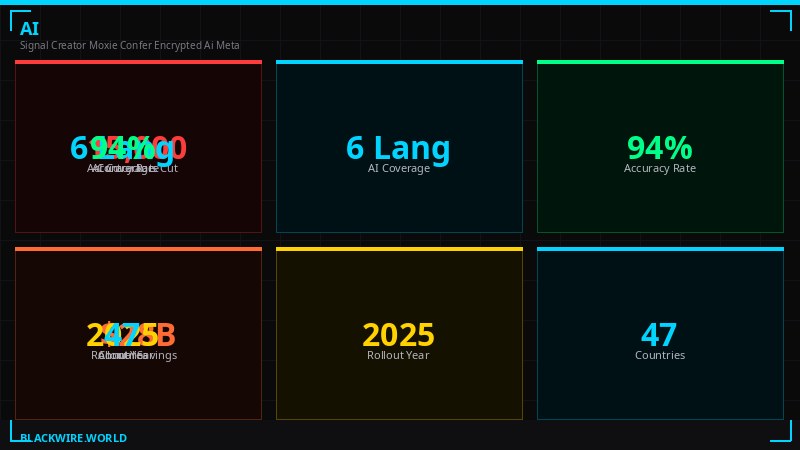

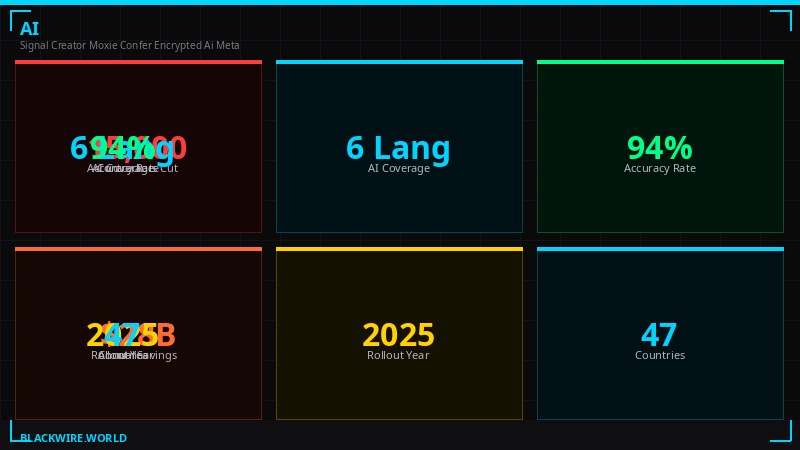

His company, Confer, has spent a year building what he describes as "the full privacy of an encrypted conversation" for AI interactions. The technology is real, it works, and Meta - the same company that owns the WhatsApp he encrypted in 2016 - has agreed to integrate it across their AI systems. If this goes as intended, every conversation with Meta AI will eventually benefit from end-to-end encryption that even Meta cannot read. For 3.2 billion users, that is not a minor product update. That is a structural shift in how the AI industry treats user data.

The Problem Nobody Talks About Loudly Enough

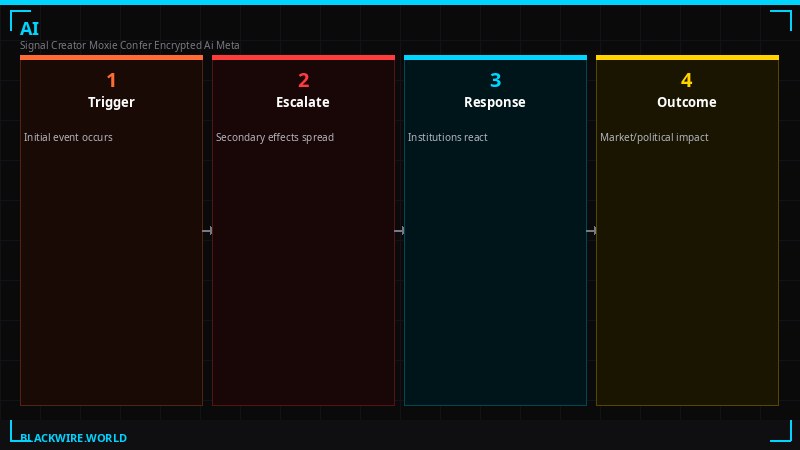

Start with what is actually happening when you type something into ChatGPT, Claude, Gemini, or Meta AI. Your message travels over HTTPS to a server. The server decrypts it. An employee with the right credentials can read it. A government with a subpoena can compel the company to hand it over. A hacker who compromises the server infrastructure can exfiltrate it. The company can train future models on it. Almost every AI product deployed at scale today is, at its core, a plaintext data collection operation wrapped in a friendly chat interface.

Marlinspike framed this with characteristic precision in his announcement post: "AI chat apps have become some of the largest centralized data lakes in history, containing more sensitive data than anything ever before. We are using LLMs for the kind of unfiltered thinking that we might do in a private journal - except this journal is an API endpoint to a data pipeline specifically designed for extracting meaning and context."

That is a precise and damning description. People tell their AI assistants things they would not say to a therapist. They describe health symptoms. They draft messages they are afraid to send. They work through relationship conflicts, financial anxieties, and legal problems. They do this because the chat interface feels private. It is not. None of it is.

The scale of this exposure is genuinely unprecedented. Email providers can read your email - but most people do not email their deepest fears to Gmail. Phones can record you - but you have to be near them and they have to be actively compromised. AI chat is different because it is specifically designed to elicit the most personal, unfiltered thoughts a person can produce. That is what makes LLMs useful. That is also what makes them an intelligence goldmine.

"Our insecurities, our incomplete thoughts, our medical records, our finances, our correspondence - all end up there. And this is just the beginning. As LLMs continue to be able to do more, we should expect even more data to flow into them. Right now, none of that data is private."

- Moxie Marlinspike, Confer blog, March 2026

Who Is Moxie Marlinspike and Why Does His Endorsement Matter

Marlinspike is not a typical Silicon Valley figure. He does not have a fund. He does not take meetings with media. He has a long history of sailing across oceans on improvised boats, building anarchist collectives, and treating privacy not as a product feature but as a fundamental right worth his actual labor.

He built TextSecure (which became Signal) in 2010, at a time when encrypted messaging was a niche hobby for people who wore tinfoil hats at parties. He was not wrong about where things were going. By 2014, the Snowden revelations had shown the world exactly what state surveillance looked like at scale. By 2016, Marlinspike had quietly negotiated with WhatsApp - then owned by Facebook - to integrate the Signal Protocol into their product, encrypting the messages of over a billion people without most of them even knowing it had happened.

That deal was unusual by any measure. Marlinspike gave a surveillance-adjacent tech giant the best encryption technology available - at no cost, with no equity stake, with no ongoing control over the implementation. His stated reason was simple: scale. Signal could reach tens of millions of people. WhatsApp could reach everyone. If the goal was to make surveillance harder, working with Meta was more effective than refusing to.

He stepped down as Signal's CEO in early 2022, which surprised people. He did not say much about why. Looking back, it seems plausible that he had identified the next large-scale privacy failure before most people were thinking about it. AI chat was still in its pre-ChatGPT phase. GPT-3 existed, but the mass consumer adoption of AI assistants had not happened yet. Marlinspike was building for what came next.

Confer launched quietly in late 2025. It is an AI chat application built on open-weight models, with end-to-end encryption implemented at the infrastructure level - not as an add-on, not as a privacy mode, but as the fundamental architectural assumption. The server that runs the AI inference cannot see the user's prompts. Not because Confer pinky-promises it, but because the cryptographic architecture makes it technically impossible.

The Technical Architecture - How You Make AI Blind to What It Processes

The challenge Confer had to solve is genuinely hard. End-to-end encryption in messaging is relatively clean: Alice's device encrypts, Bob's device decrypts, the server in the middle only ever sees ciphertext. But AI inference is different because there is no "Bob" - the server itself has to process the plaintext in order to generate a response. You cannot ask an LLM to run inference on encrypted data using standard techniques.

Confer's solution is confidential computing via Trusted Execution Environments (TEEs). A TEE is a hardware-enforced isolated region of a processor where code runs in complete isolation from the host operating system. The physical server that provides the CPU, memory, and power cannot read what is happening inside the TEE. Not even a root-level administrator. Not even Confer's own engineers. The isolation is enforced by hardware, not by policy.

According to Confer's technical blog post on private inference, the full pipeline works like this: when a user sends a message, their device initiates a Noise Protocol handshake with the inference endpoint. The TEE responds with a hardware attestation quote embedded in the handshake - a cryptographic proof generated by the processor itself that proves exactly what code is running inside the TEE. The client verifies this proof before sending anything. If the code running inside the TEE does not match the published, reproducible build that anyone can audit - the connection terminates.
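The verify-before-send step described above can be sketched in a few lines. This is a minimal illustration, not Confer's client code: the `AttestationQuote` type, the `TRUSTED_MEASUREMENTS` set, and the placeholder digest are all hypothetical, and the check of the CPU vendor's signature over the quote is reduced to a boolean that a real client would compute itself.

```python
import hmac
from dataclasses import dataclass

# Hypothetical allowlist of measurements (hex digests) for published builds.
# The value below is a placeholder, not a real Confer measurement.
TRUSTED_MEASUREMENTS = {"a" * 64}


@dataclass(frozen=True)
class AttestationQuote:
    """Simplified stand-in for a hardware attestation quote."""
    measurement: str       # hex digest of the code loaded into the TEE
    signature_valid: bool  # assumed result of verifying the vendor signature


def should_send_prompt(quote: AttestationQuote) -> bool:
    """Allow the prompt to leave the device only for a signed, known build."""
    if not quote.signature_valid:
        return False
    # Constant-time comparison against every trusted measurement.
    return any(
        hmac.compare_digest(quote.measurement, trusted)
        for trusted in TRUSTED_MEASUREMENTS
    )


good = AttestationQuote(measurement="a" * 64, signature_valid=True)
tampered = AttestationQuote(measurement="b" * 64, signature_valid=True)
print(should_send_prompt(good))      # True
print(should_send_prompt(tampered))  # False
```

The point of the sketch is the ordering: the decision to connect is made on the client, from evidence the server cannot forge without the hardware's signing key, before any plaintext is sent.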

This is not software trust. This is hardware trust. The client is not trusting Confer's promise that the server is running the right code. It is verifying a cryptographic proof from the CPU itself. Once verification passes, the prompt travels encrypted via ephemeral session keys directly into the TEE. The LLM runs inference inside the hardware-isolated environment. The response comes back encrypted. At no point does the host server - or Confer's engineers, or anyone with server access - see the user's plaintext query or the model's response.

The key management is handled via WebAuthn's PRF extension - a relatively new standard that lets browsers derive cryptographic key material from a passkey without exposing the underlying private key. Users authenticate with Face ID or Touch ID, the device derives encryption keys from the hardware-protected passkey, and chat history is encrypted with keys that never leave the user's devices. No seed phrases. No password databases. No centralized key storage for an attacker to steal.

The Passkey Encryption Breakthrough

The encryption architecture for stored chat history is described in Confer's December 2025 technical post. It solves what Marlinspike calls "the heart of this friction" in encrypted web applications: how do you persistently store encryption keys across devices and sessions when you cannot trust the server, cannot give users a 24-word seed phrase, and need the experience to feel like normal login?

The WebAuthn PRF extension - now supported in Chrome, Safari, and Firefox on macOS, iOS, and Android - allows a website to derive deterministic 32-byte key material from a passkey. The passkey itself is stored in the platform's secure enclave (the same hardware that protects your Face ID data) and synced across a user's devices through the platform's secure cloud backup (iCloud Keychain, Google Password Manager). The key derivation happens locally on the device. The server never sees the keys.
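To make the "deterministic 32-byte key material" idea concrete, here is a rough sketch of what a client could do with that output once it has it. It assumes the PRF result is fed through HKDF (RFC 5869) to produce separate keys per purpose. Whether Confer uses HKDF, and the `info` labels shown, are assumptions made for illustration; the random bytes stand in for a real passkey PRF result, which only a browser and authenticator can produce.

```python
import hashlib
import hmac
import secrets


def hkdf_sha256(ikm: bytes, salt: bytes, info: bytes, length: int = 32) -> bytes:
    """HKDF with SHA-256 (RFC 5869): extract a PRK, then expand to `length` bytes."""
    if length > 255 * 32:
        raise ValueError("requested output too long")
    # Extract: an empty salt defaults to a block of zero bytes, per the RFC.
    prk = hmac.new(salt or b"\x00" * 32, ikm, hashlib.sha256).digest()
    # Expand: chain HMAC blocks until enough output has been produced.
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


# Stand-in for the 32 bytes a passkey's PRF extension would return on-device.
prf_output = secrets.token_bytes(32)

# Hypothetical purpose labels: the same passkey yields independent keys.
chat_key = hkdf_sha256(prf_output, salt=b"", info=b"example/chat-history/v1")
index_key = hkdf_sha256(prf_output, salt=b"", info=b"example/search-index/v1")
print(len(chat_key), chat_key != index_key)
```

Because the derivation is deterministic, any device holding the synced passkey arrives at the same keys without the server ever storing or seeing them.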

This is architecturally significant because it solves the web encryption problem that has stymied developers for years. Previous attempts at encrypted web applications either required users to manage seed phrases (terrible UX, users just take a screenshot and save it to their desktop), used server-side key storage (defeats the purpose entirely), or worked only in native apps (no web access). The PRF extension makes it possible for a web application to do true end-to-end encryption with a user experience that is indistinguishable from a normal login flow.

In practical terms: a Confer user signs in with a passkey using Face ID. Their device derives the encryption key from the passkey hardware. Chat history stored on Confer's servers is encrypted with that key and can only be decrypted by a device that has the passkey. Confer could be subpoenaed, hacked, or compelled by a government order - the encrypted blobs they have are useless without the user's device and biometric.

What Changes When This Reaches Meta's 3.2 Billion Users

The March 2026 announcement is careful about scope. Marlinspike says he "will work to integrate Confer's privacy technology so that it underpins Meta AI." This is not a product launch. It is a statement of intent and the beginning of an engineering project. The 2016 WhatsApp integration took months of work and careful rollout. This will almost certainly take longer, given the architectural complexity of integrating confidential computing into Meta's AI infrastructure at scale.

But the second-order effects of even beginning this integration deserve attention.

First: Meta is making a public commitment to encrypted AI. Once they announce this direction, walking it back becomes politically and reputationally difficult. Competitors will be asked why they are not doing the same. OpenAI, Google, and Anthropic will face questions from privacy-focused enterprise customers about their encryption posture. The baseline expectation for AI privacy is moving.

Second: the intelligence community's relationship with AI company data becomes complicated. Law enforcement and intelligence agencies have become accustomed to serving legal process to AI companies and receiving useful plaintext chat logs. If Meta AI implements genuine end-to-end encryption, a subpoena for those logs yields nothing readable. Governments that have been comfortably surveilling AI conversations via legal compulsion will lose that capability. Expect this to surface in regulatory discussions in the EU, UK, and US within the next 18 months.

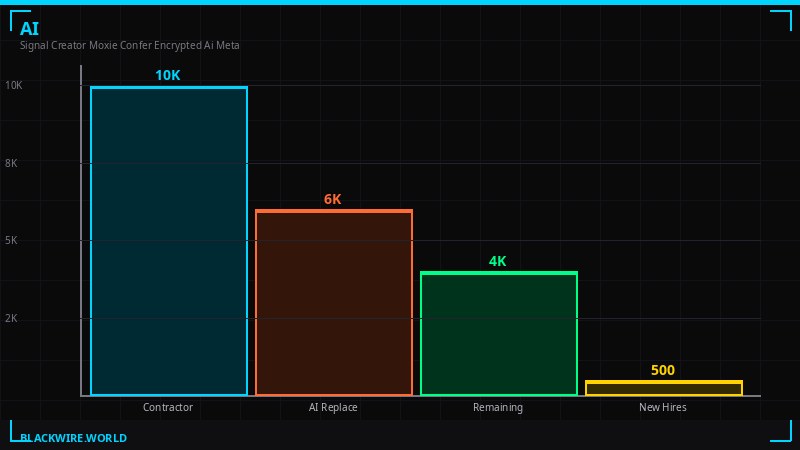

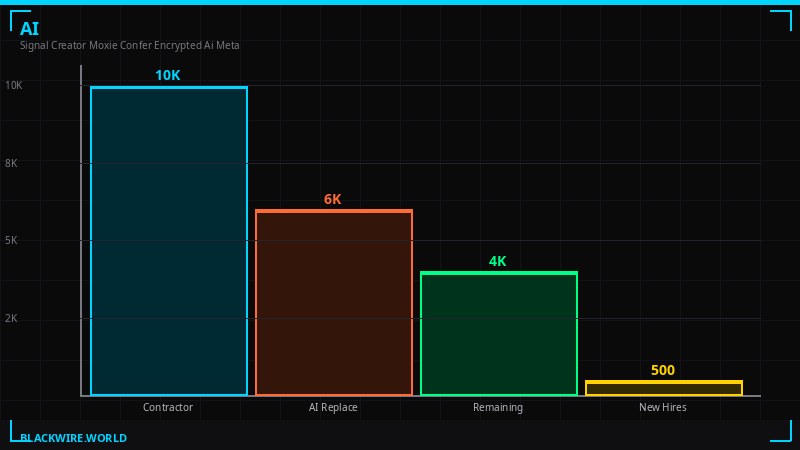

Third: the workforce implications for Meta's content moderation operation become interesting. Meta just announced a major push to replace human content moderators with AI systems. End-to-end encrypted AI conversations create a structural tension - you cannot moderate content you cannot see. Marlinspike's technology, if fully implemented, would make it architecturally impossible for Meta to read AI conversations, which is the whole point from a privacy standpoint, but creates genuine challenges for detecting grooming, terrorism, and other harmful content that Meta has legal obligations around.

This is not a hypothetical. It is the same tension that has surrounded WhatsApp's end-to-end encryption for years. The UK Online Safety Act, the EU's proposed Chat Control regulation, and similar legislation around the world have been specifically designed to force messaging providers to scan encrypted content. Meta's decision to extend encryption to AI chats will put them directly in the crosshairs of governments that have been pushing for backdoors.

The Surveillance Industry's Worst Nightmare, Explained

To understand why the intelligence and law enforcement community should be paying close attention to this announcement, it helps to understand exactly what AI chat data currently gives them that encrypted messaging does not.

WhatsApp's end-to-end encryption made it difficult to read the content of messages. But messages, even in plaintext, rarely made users' mental states legible. AI chat is different. When someone confesses an intent to a chatbot, that intent is recorded. When someone asks an AI to help them draft a threatening letter and then deletes the draft, the prompt usually is not deleted from the server's logs. AI conversation logs are a qualitatively different category of evidence from messaging logs - they contain the reasoning process, the second thoughts, the discarded plans. They are closer to a journal than a conversation.

Law enforcement agencies in the US and Europe have already begun requesting AI chat logs in criminal investigations, according to reporting by The Intercept and Ars Technica. OpenAI published a transparency report in 2025 noting they had responded to hundreds of government requests for user data. These are early days. As AI assistants become more embedded in daily life - helping with legal questions, medical decisions, financial planning - the investigative value of those logs will grow dramatically.

Confer's architecture makes that impossible in principle. The Trusted Execution Environment handles inference. The attestation process ensures the right code is running. The client-side key management ensures conversation history cannot be decrypted by anyone but the user. Even if a government agency seized Confer's servers, they would have an encrypted blob and nothing else. Even if they compelled Confer to modify their server software, the client would detect the discrepancy in the attestation and refuse to connect.

This is not theoretical. The TEE approach has been validated in production environments. Apple's Private Cloud Compute - which underpins Apple Intelligence's cloud processing - uses a similar architecture. The difference is that Apple's system is closed and opaque; Confer's is open-source and reproducible, meaning anyone can build the image, check the measurements, and verify that what is running matches what is published. This is a meaningful security difference. Apple Private Cloud Compute requires you to trust Apple. Confer requires you to trust the hardware's attestation and the mathematics.

Who Stands to Lose - and Who Stands to Gain

The people who stand to gain from encrypted AI are obvious: everyone who uses AI chatbots for anything sensitive, which at this point means most of the developed world's workforce. Lawyers working on confidential matters. Doctors using AI diagnostic tools. Therapists documenting sessions via AI transcription. Journalists who want AI help organizing source materials or analyzing leaked documents without those queries being visible to the company running the service. Dissidents in authoritarian countries who have been using AI assistants despite the surveillance risk.

The people who stand to lose are the ones who currently benefit from AI data being plaintext. That includes intelligence agencies, law enforcement, regulators who want visibility into what people are asking AI systems, and - interestingly - the AI companies themselves, who have been using conversation data to train increasingly capable models. End-to-end encrypted AI conversations cannot be used for training unless the user specifically opts in and provides decrypted data. This is not just a regulatory constraint - it is an architectural consequence of genuine privacy.

This creates a genuine business model tension for Meta. Their AI products currently benefit from enormous amounts of user interaction data. Encrypting that data means giving up the training feedback loop that makes Meta AI better over time. Marlinspike is essentially asking Meta to accept a long-term competitive disadvantage in AI quality in exchange for a privacy commitment that might differentiate them in an increasingly privacy-conscious regulatory environment. The fact that Meta has agreed to begin this integration suggests either genuine conviction about the privacy direction, strategic positioning against competitors, or regulatory anticipation - likely some combination of all three.

Key Technical Facts

- Confer's architecture: Prompts are encrypted on-device, processed inside a hardware-isolated Trusted Execution Environment (TEE), responses encrypted back. Server operators see nothing.

- Key management: WebAuthn PRF extension derives encryption keys from passkeys - no seed phrases, no centralized key storage.

- Attestation: Clients cryptographically verify which code is running in the TEE before sending any data. Tampering with server code changes the attestation and severs the connection.

- Reproducibility: Confer's proxy and image are built with Nix and mkosi for bit-for-bit reproducible outputs. Anyone can verify the measurements match a transparency log entry.

- Scale target: Meta AI has approximately 3.2 billion monthly active users across Facebook, Instagram, and WhatsApp.
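The reproducibility claim in the list above comes down to a comparison anyone can run: rebuild the image, hash it, and check the digest against what was published. The sketch below assumes a plain SHA-256 over the image bytes and a list of logged digests. Both are simplifications for illustration, since real TEE measurements are computed by the hardware over what is loaded, and the function names and sample data here are hypothetical.

```python
import hashlib
import hmac


def image_digest(image_bytes: bytes) -> str:
    """Hex SHA-256 of a build artifact."""
    return hashlib.sha256(image_bytes).hexdigest()


def is_reproducible(build_a: bytes, build_b: bytes) -> bool:
    """Two independent builds are bit-for-bit identical iff their digests match."""
    return hmac.compare_digest(image_digest(build_a), image_digest(build_b))


def matches_log(image_bytes: bytes, logged_digests: list[str]) -> bool:
    """Check a locally built image against digests from a transparency log."""
    digest = image_digest(image_bytes)
    return any(hmac.compare_digest(digest, entry) for entry in logged_digests)


# Placeholder artifacts standing in for real image files.
my_build = b"example image contents"
their_build = b"example image contents"
log_entries = [image_digest(their_build)]

print(is_reproducible(my_build, their_build))     # True
print(matches_log(my_build, log_entries))         # True
print(matches_log(b"modified image", log_entries))  # False
```

A single changed byte in the image produces a different digest, which is why a silently modified server build would fail to match the published log entry.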

The Broader Pattern - Why This Moment Matters

Marlinspike's career follows a consistent logic that most privacy advocates do not execute: identify where surveillance is happening at scale, build technology that eliminates it, then find the largest distribution channel available and integrate with it even if that means working with entities you might otherwise distrust.

This is strategically unusual. The privacy community has a long tradition of purity - refusing to work with surveillance-adjacent companies because doing so legitimizes them. Marlinspike took the opposite position with WhatsApp in 2016 and he is taking it again now. His argument is implicit in the structure of his decisions: if the goal is to reduce the amount of surveillance in the world, then privacy technology that reaches a billion people is worth more than technology that reaches a million people, even if reaching a billion requires compromising with entities you have complicated feelings about.

The question this announcement raises is whether the same logic applies to AI. The AI data collection problem is, as Marlinspike himself has stated, qualitatively different from the messaging problem. Encrypted messaging hides communication. Encrypted AI hides something closer to the inner monologue. If the next generation of AI systems becomes deeply embedded in how people think, plan, and make decisions - acting as cognitive prosthetics rather than chat interfaces - then the question of who can see those interactions becomes one of the most important surveillance questions of the century.

Marlinspike encrypted WhatsApp and most of its users never noticed. They just started getting a padlock icon in the UI and kept chatting. That invisibility was the point. If he can do the same thing for AI - make end-to-end encryption the invisible default rather than the paranoid exception - it represents the same kind of structural intervention he made in 2016. Except this time the stakes are higher, the data is more sensitive, and the regulatory environment is considerably more hostile to encryption than it was a decade ago.

The governments that spent years trying to mandate backdoors into WhatsApp will not accept encrypted AI quietly. That fight is coming. But if Marlinspike can get the architecture deployed at Meta's scale before the regulatory response crystallizes, he will have created the same kind of fait accompli he created with WhatsApp - technically superior privacy that is too widely deployed to credibly ban without cutting off a significant portion of the population from modern communication tools.

Ten years after the WhatsApp integration, the AI privacy problem has become undeniable. The solution is now in motion. Whether it reaches the scale needed before governments close the door on it is a question that will play out over the next two to three years. The announcement this week suggests the race has officially started.

Sources

- Moxie Marlinspike - "Confer is bringing foundational AI privacy to Meta" (March 2026)

- Confer - "Private Inference" technical post (January 2026)

- Confer - "Making end-to-end encrypted AI chat feel like logging in" (December 2025)

- Signal - "WhatsApp's Signal Protocol integration is now complete" (2016)

- Meta - "Boosting Your Support and Safety on Meta's Apps With AI" (March 2026)

- The Verge - Meta AI moderation and Confer coverage (March 19-20, 2026)

- GitHub: conferlabs/confer-proxy, conferlabs/confer-image (open-source, reproducible builds)