VibeCrime: The Hackers Who Never Sleep

Inside the rise of autonomous AI cybercrime - where criminal operations run themselves, adapt in real time, and rebuild when you shut them down

The new breed of cybercriminal doesn't need monitors. It doesn't need fingers. It doesn't even need to be awake. (Pexels)

Imagine a ransomware operation that never sleeps. One that simultaneously runs millions of conversations with potential victims, automatically analyzes terabytes of stolen data to find the most valuable leverage, crafts personalized extortion emails that reference your company's actual financials, and rebuilds its own infrastructure faster than law enforcement can tear it down. No human operator required.

This isn't a hypothetical from a sci-fi thriller. It's a working proof-of-concept that cybersecurity researchers from Trend Micro's TrendAI division demonstrated at the 35th annual RSA Conference in San Francisco last week. They have a name for it: VibeCrime.

The term is deliberately provocative - a riff on "vibe coding," the Silicon Valley trend of letting AI write software based on vibes rather than specifications. VibeCrime applies the same principle to criminal operations: describe what you want to steal, who you want to extort, and how you want to launder the proceeds. The AI agents handle the rest.

And if that doesn't scare you, the timeline should. According to TrendAI researchers Robert McArdle and Stephen Hilt, the cybercrime ecosystem is currently sitting at exactly the kind of inflection point that preceded every major wave of digital crime in history - from the emergence of botnets to the ransomware explosion. The difference this time: the next wave won't need humans to run it.

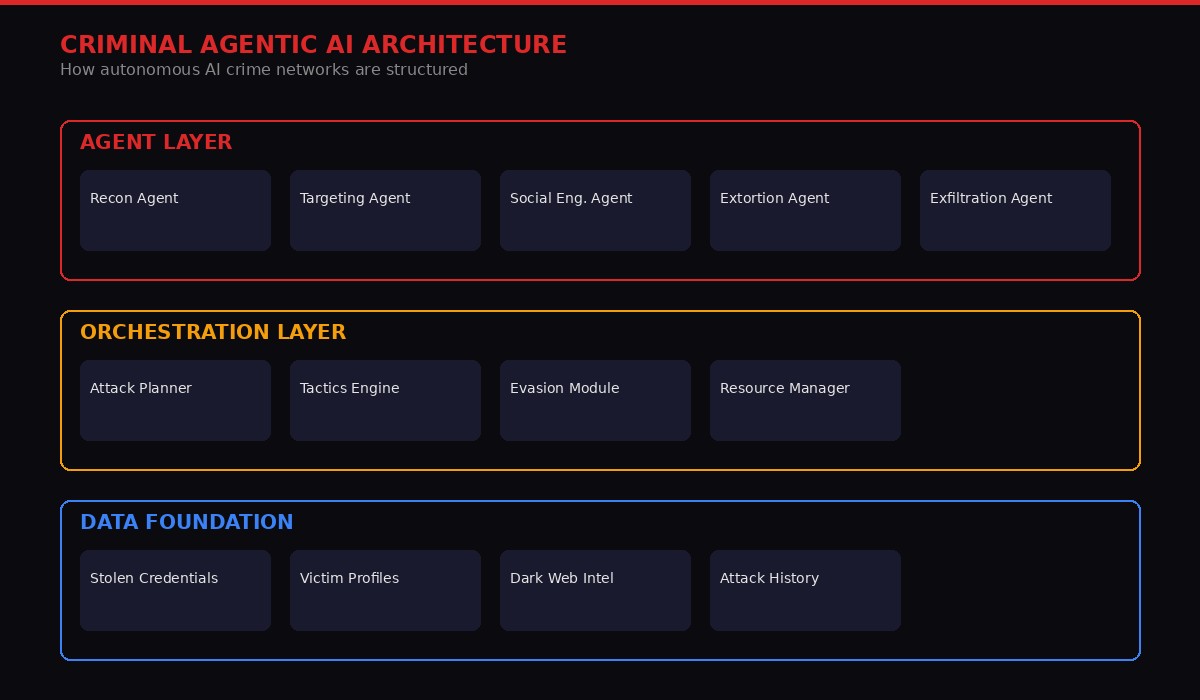

The Architecture of Autonomous Crime

The layered architecture of an autonomous criminal AI system. Source: Trend Micro VibeCrime Research Paper, RSAC 2026

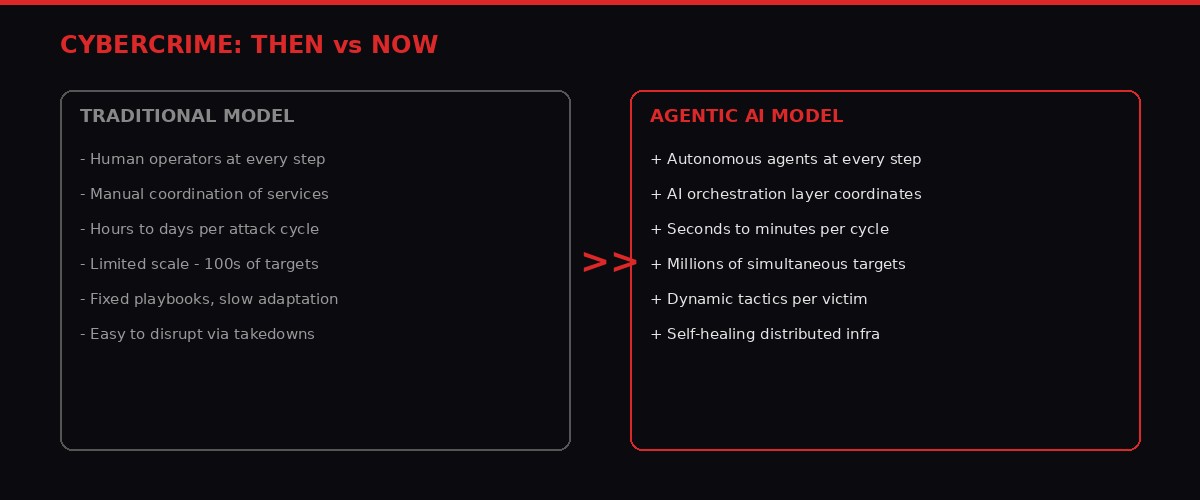

To understand VibeCrime, you first need to understand how cybercrime works today. Think of it as a shopping mall. Need malware? There's a vendor for that. Need stolen credit card numbers? Different vendor. Need a bulletproof hosting provider that won't respond to law enforcement takedown requests? Yet another vendor. The entire operation requires a human coordinator - a criminal project manager, essentially - to assemble the pieces, run the campaign, and handle the proceeds.

This is the "Cybercrime-as-a-Service" model that has dominated underground markets for the better part of a decade. It works, but it has inherent limitations. The human coordinator is a bottleneck. They can only manage so many operations simultaneously. They make mistakes. They leave digital fingerprints. And when law enforcement takes down one piece of the infrastructure, someone has to manually set up replacements.

What TrendAI's researchers demonstrated is a fundamentally different model: Cybercrime-as-a-Sidekick. In this architecture, the human criminal becomes more of a director or designer. They set the objectives, define the target parameters, and let a swarm of specialized AI agents handle everything else.

The architecture has three distinct layers, each terrifying in its own right:

The Agent Layer sits at the top. These are specialized AI agents, each designed for a specific criminal function. A reconnaissance agent scans networks, catalogs vulnerabilities, and maps organizational structures. A targeting agent cross-references breach databases with financial records to identify high-value victims. A social engineering agent crafts personalized phishing messages - not generic "Dear Customer" spam, but emails that reference your actual colleagues, your recent business deals, your vacation photos from Instagram. And an extortion agent handles the negotiation, adjusting demands based on the victim's financial capacity and likelihood of payment.

The Orchestration Layer is the brain. It's a meta-agent that coordinates the specialized agents, dynamically adjusting tactics based on what the reconnaissance agent discovers, rerouting attacks when defenses activate, and managing resources across multiple simultaneous campaigns. Think of it as the criminal equivalent of a corporate project management system - except it operates at machine speed and never takes a coffee break.

The Data Foundation underpins everything. This is the accumulated knowledge base: stolen credentials, victim profiles, dark web intelligence feeds, and - crucially - historical attack data that allows the system to learn from previous campaigns. Every failed phishing attempt, every successful breach, every ransom that was paid or refused becomes training data for future operations.

The Proof-of-Concept That Shook RSAC

The proof-of-concept systems demonstrated at RSAC 2026 showed how agentic AI can automate the entire ransomware extortion pipeline. (Pexels)

McArdle and Hilt didn't just present theory. They built working proof-of-concept systems that demonstrate exactly how accessible this technology has become.

Their first PoC tackles what is arguably the most labor-intensive part of modern ransomware operations: data analysis. When a ransomware gang breaches a company and steals terabytes of data, someone has to actually sift through those files to find the leverage. Financial records, embarrassing emails, proprietary trade secrets, customer databases - the good stuff is often buried in millions of mundane documents. Traditionally, this takes human operators weeks.

The TrendAI proof-of-concept does it in hours. The system automatically categorizes millions of compromised records, identifies high-value targets based on organizational hierarchy and financial data, and generates personalized extortion communications aimed directly at C-suite executives. The emails reference specific stolen documents, quote actual revenue figures from internal reports, and calculate ransom demands calibrated to what the victim can realistically pay.

"These sophisticated attack chains can be constructed using accessible automation platforms, significantly lowering the technical barriers to entry and enabling individuals without advanced programming expertise to deploy complex cybercriminal operations." - TrendAI VibeCrime Research Paper, March 2026

The second proof-of-concept is even more unsettling. It chains together multiple AI agents to execute a physical-world surveillance attack. The system starts by scanning the internet for exposed security cameras - parking garages, apartment complexes, commercial buildings. Using computer vision, it extracts license plate numbers from camera feeds in real time. It then cross-references those plates against breach databases to obtain owner contact information. The final step: sending hyper-targeted phishing messages that reference the victim's specific vehicle, the parking location, and a fabricated "violation" that requires immediate action.

The entire pipeline - from camera discovery to phishing delivery - runs autonomously. No human touches it.

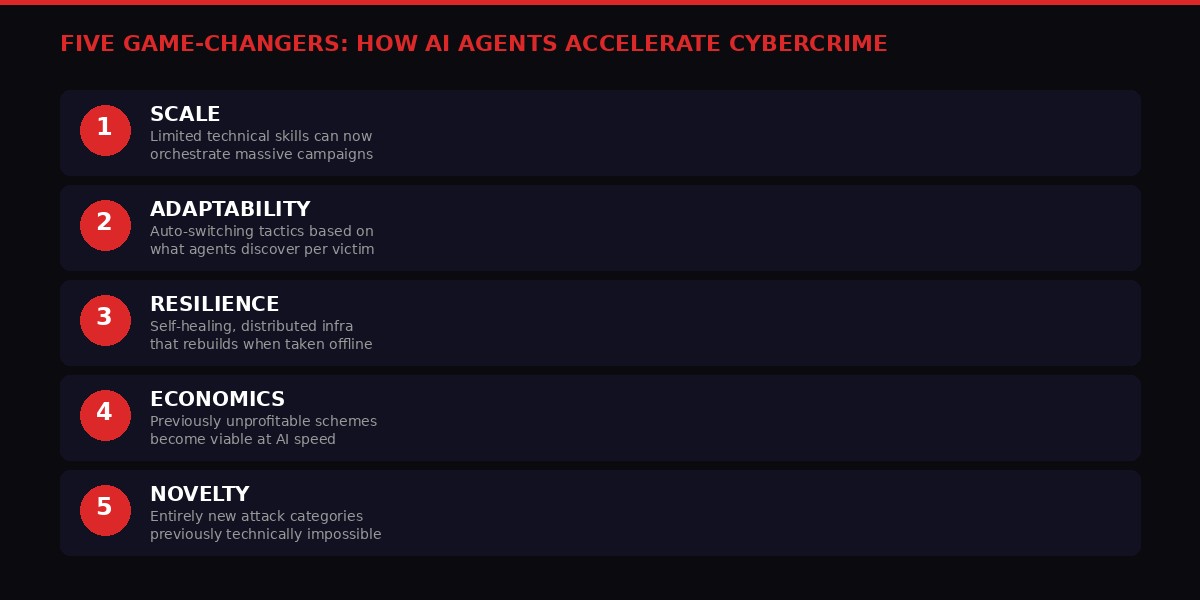

The Five Game-Changers

The five fundamental ways agentic AI transforms cybercrime economics and capabilities. Source: TrendAI Research

The Trend Micro research identifies five specific ways that agentic AI changes the cybercrime equation. Each one individually would represent a significant shift. Together, they amount to a paradigm break.

1. Scale Without Skill

The most obvious change is scale. Today's most sophisticated attacks require sophisticated attackers. Building custom malware, crafting convincing social engineering campaigns, managing command-and-control infrastructure - these skills take years to develop. Agentic AI democratizes the entire stack. A criminal with no programming knowledge can potentially orchestrate campaigns that rival the output of state-sponsored hacking groups, simply by describing their objectives to an AI orchestration system.

This isn't theoretical. We've already seen early examples with tools like FraudGPT and WormGPT, stripped-down large language models marketed on dark web forums specifically for criminal use. Agentic AI takes this several orders of magnitude further - from a chatbot that helps write phishing emails to an autonomous system that runs entire campaigns end-to-end.

2. Real-Time Adaptation

Traditional cyberattacks follow playbooks. An attacker picks a technique, executes it, and either succeeds or fails. If the target has a specific defense - say, multi-factor authentication or an email filtering system that catches a particular phishing template - the attacker has to manually adjust and try again.

Agentic systems can adapt in real time. If a phishing email gets blocked, the system automatically rewrites it. If a network intrusion attempt triggers an alarm, the system pivots to a different attack vector. If a social engineering conversation is going poorly, the agent adjusts its approach based on the target's responses. Each interaction generates data that feeds back into the system, making subsequent attempts more effective.

3. Unkillable Infrastructure

One of law enforcement's most effective tools against cybercrime has been infrastructure takedowns - seizing servers, disrupting command-and-control channels, shutting down hosting providers. Agentic AI threatens to make this approach obsolete.

In the VibeCrime model, criminal infrastructure is distributed across dozens or hundreds of compromised systems. When one node gets taken offline, the orchestration layer automatically reroutes operations through remaining nodes and spins up replacements. The system is, in cybersecurity parlance, self-healing. Taking down a piece of it is like cutting a head off a hydra that can grow replacements at machine speed.

4. The Economics of Previously Unprofitable Crime

This is the game-changer that security researchers are most worried about. Many forms of cybercrime are technically possible today but not economically viable because they require too much human labor relative to the expected payoff. Consider romance scams, which typically require criminals to maintain dozens of simultaneous conversations over weeks or months, slowly building trust before extracting money. The labor involved limits scale.

AI agents can maintain millions of simultaneous conversations. Scams that yield only $50 per victim suddenly become profitable when you can run a million of them in parallel at near-zero marginal labor cost. The entire economics of cybercrime inverts. Volume-based social engineering - the kind of low-and-slow fraud that previously wasn't worth the effort - becomes a gold mine.
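The arithmetic behind this inversion can be sketched in a few lines of Python. This is a back-of-the-envelope model, not a figure from the Trend Micro paper: only the $50 yield and the million parallel conversations come from the text above, while the function name `campaign_margin`, the 10% payout rate, the 30-conversation human workload, and the $20 labor cost per conversation are illustrative assumptions.

```python
def campaign_margin(
    conversations: int,
    yield_per_victim: float,
    payout_rate: float,
    labor_cost_per_conversation: float,
) -> float:
    """Return revenue minus labor cost for a volume-based fraud campaign.

    All inputs are hypothetical; compute and infrastructure costs are ignored.
    """
    revenue = conversations * payout_rate * yield_per_victim
    labor = conversations * labor_cost_per_conversation
    return revenue - labor


# Assumed: one human operator juggling 30 conversations at $20 of labor each.
human_margin = campaign_margin(30, 50.0, 0.10, 20.0)

# Assumed: the same scam run as 1,000,000 automated conversations
# with no per-conversation labor cost.
automated_margin = campaign_margin(1_000_000, 50.0, 0.10, 0.0)

print(f"human-run margin:  {human_margin:,.2f}")
print(f"automated margin:  {automated_margin:,.2f}")
```

Under these assumed numbers the human-run campaign loses money and the automated one does not. The point is the sign flip once the labor term disappears, not the specific dollar amounts.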

5. Attacks That Didn't Exist Before

Perhaps the most concerning implication is the emergence of entirely new attack categories that were previously impossible. The license plate surveillance chain described above is one example. Another: AI agents that autonomously discover zero-day vulnerabilities, develop exploits, and deploy them against targets - all without human involvement. Or agents that infiltrate corporate AI systems, subtly manipulate training data or model outputs, and use that access to extract information or commit fraud in ways that are nearly impossible to detect.

The Three Laws of Cybercrime Adoption

The evolutionary trajectory of agentic AI adoption in cybercrime, based on Trend Micro's Three Laws framework. Source: TrendAI Research

Trend Micro has developed an internal framework it calls the "Three Laws of Cybercrime Adoption" that predicts when and how new technologies get weaponized by criminal networks. It's based on decades of observing these adoption patterns, and it suggests we're closer to a tipping point than most people realize.

The three laws work like this: a new technology gets adopted by criminals when three conditions are simultaneously met. First, the technology has to provide a clear advantage over existing methods - it has to be faster, cheaper, or more effective. Second, it has to be accessible to the criminal ecosystem, meaning it doesn't require skills or resources that are beyond reach. Third, it has to be integrable with existing criminal business models and infrastructure.

When all three conditions converge, the result is what Trend Micro calls a "nexus event" - a rapid, ecosystem-wide adoption surge. We've seen these before. The most obvious example: cryptocurrency and ransomware. Before Bitcoin, ransomware existed but was difficult to monetize because collecting ransoms required traceable payment methods. Cryptocurrency satisfied all three laws simultaneously, and the ransomware explosion followed within a few years.

Today, agentic AI is rapidly approaching the same convergence. It provides massive advantages over manual cybercrime operations (Law 1). It's becoming increasingly accessible through open-source models, readily available APIs, and criminal AI tools (Law 2). And it integrates naturally with existing underground service marketplaces (Law 3).
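Read as logic, the framework is a simple conjunction: adoption surges only when all three conditions hold at once. A minimal Python sketch of that reading follows. The class and field names (`TechnologyAssessment`, `clear_advantage`, `accessible`, `integrable`) are hypothetical labels for the three laws, not terms from the Trend Micro paper, and the second example is an invented placeholder rather than an assessment of any real technology.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TechnologyAssessment:
    """Hypothetical checklist for the three adoption conditions."""

    name: str
    clear_advantage: bool  # Law 1: faster, cheaper, or more effective
    accessible: bool       # Law 2: within reach of the criminal ecosystem
    integrable: bool       # Law 3: fits existing business models and infrastructure

    def laws_met(self) -> int:
        """Count how many of the three conditions currently hold."""
        return sum((self.clear_advantage, self.accessible, self.integrable))

    def is_nexus_candidate(self) -> bool:
        """A nexus event requires all three conditions simultaneously."""
        return self.laws_met() == 3


# The article's historical example: cryptocurrency met all three laws.
cryptocurrency = TechnologyAssessment("cryptocurrency", True, True, True)

# Invented placeholder: advantageous and integrable, but not yet accessible.
placeholder = TechnologyAssessment("example-technology", True, False, True)

for tech in (cryptocurrency, placeholder):
    print(tech.name, tech.laws_met(), tech.is_nexus_candidate())
```

Modeling the laws as a strict AND captures why a technology can look dangerous for years without being widely adopted: two out of three is not enough.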

"Currently, the cybercrime ecosystem appears primed for such an event, as AI offers significant productivity improvements while established models like ransomware face declining success rates due to improved defensive measures and business practices." - Trend Micro VibeCrime Research Paper

The researchers project three phases of adoption. In the near term - which is essentially now - AI serves as an accelerant for existing attacks. Better phishing, better deepfakes, better malware that evades detection. This is already happening.

The medium term will see the emergence of true agentic crime ecosystems: criminal agent marketplaces where you can buy specialized AI agents the way you currently buy malware-as-a-service subscriptions. Orchestrator frameworks that coordinate these agents. And the full transition from Cybercrime-as-a-Service to Cybercrime-as-a-Sidekick.

The long term is where things get genuinely existential. Autonomous criminal enterprises that operate independently of their human creators. Self-evolving attack strategies. Criminal organizations where the "leadership" designs and trains AI systems rather than managing human operatives. The human criminals become architects, not operators.

The Broader Convergence: Why April 2026 Feels Like an Inflection Point

The fundamental shift from human-operated to AI-operated cybercrime. Source: BLACKWIRE analysis based on TrendAI research

VibeCrime didn't emerge in a vacuum. It's part of a broader convergence of AI security crises that are hitting simultaneously in early April 2026.

Consider what happened in the same week that Trend Micro presented its research at RSAC:

Anthropic accidentally leaked the entire source code for Claude Code, its flagship AI coding tool, through a misconfigured npm package. The leak - the company's second major security lapse in days, coming after the accidental exposure of its unreleased "Mythos" model details - revealed over 500,000 lines of code, including anti-distillation mechanisms, an "undercover mode" that hides AI authorship in open-source commits, frustration-detection regexes, and references to an unreleased autonomous agent daemon called KAIROS. Analysis by The Register showed that Claude Code could exercise "far more control over people's computers than even the most clear-eyed reader of contractual terms might suspect," including persistent telemetry, remotely managed settings, error reporting that captures working directories, and an experimental remote skill execution system that could theoretically serve as a remote code execution pathway. Anthropic filed 8,000+ DMCA takedown requests, but as cybersecurity consultancy Systima noted: "The source is, for all practical purposes, permanently public." Sources: Fortune, The Register, Ars Technica, PCMag

North Korean hackers compromised Axios, one of the most widely used JavaScript libraries in the world, in a supply chain attack that Google's Threat Intelligence Group attributed to a group tracked as UNC1069. The attack injected malicious code into the npm package that executed during installation, deploying a cross-platform remote access trojan before wiping its own tracks. StepSecurity called it "among the most operationally sophisticated supply chain attacks ever documented against a top-10 npm package." Given that Axios is downloaded millions of times per week, the blast radius is staggering. Sources: Reuters, Google Threat Intelligence Group, The Hacker News

Iran's IRGC publicly threatened 17 US tech companies - including Microsoft, Apple, Google, Nvidia, Meta, and Tesla - declaring their regional operations "legitimate targets" starting April 1. The companies were accused of supporting US military operations and Israeli intelligence. Several of the named companies, including Palantir and G42, have documented links to Israeli defense firms. The IRGC's threat list included 29 specific regional offices and data centers. Sources: CNBC, Time, WIRED, Euronews

Each of these stories individually would dominate a normal news cycle. Together, they paint a picture of an AI security landscape that is fraying at every seam - the tools leaking, the supply chains poisoned, the infrastructure under state-level threat, and the criminals about to get a massive upgrade.

Harvard Business Review Sounds the Alarm: "AI Agents Act a Lot Like Malware"

The line between AI agents and malware is becoming increasingly difficult to draw. (Pexels)

The VibeCrime research landed at the same moment that Harvard Business Review published a piece titled "AI Agents Act a Lot Like Malware. Here's How to Contain the Risks." The timing was coincidental, but the convergence of messages was hard to ignore: a mainstream business publication and cutting-edge security research arriving at the same conclusion simultaneously.

The HBR piece argues that the fundamental behaviors of AI agents - acting autonomously, persisting across sessions, accessing system resources, communicating with external servers, and adapting their behavior based on environmental feedback - are functionally identical to the behaviors of malware. The difference is intent, not mechanics. An AI coding agent that reads your filesystem, executes commands, and phones home with telemetry data is doing exactly what a trojan does. We just trust it because a company we respect built it.

This observation takes on a darker dimension in the context of VibeCrime. If legitimate AI agents and malicious AI agents use the same underlying mechanics, how do you tell them apart? How does a security system distinguish between Claude Code scanning your filesystem to help you debug a program and a criminal agent scanning your filesystem to find encryption keys? At the code level, the operations are identical.

The Claude Code source leak made this point viscerally concrete. The leaked code revealed that Anthropic's legitimate product includes capabilities that security professionals would normally treat as red flags in unknown software: persistent background processes (KAIROS), telemetry that captures working directories and project paths, remotely pushed configuration changes, and a skill execution system that downloads and runs code from external servers. These are enterprise features when they come from Anthropic. They're malware capabilities when they come from UNC1069.

This isn't a criticism of Anthropic specifically - every major AI agent platform has similar capabilities. It's a structural observation about the direction the entire industry is heading, and it has profound implications for security.

The Identity Problem: When Your AI Agents Need Their Own Security Training

Okta's upcoming platform for AI agent identity management signals how seriously the industry takes the agent authentication crisis. (Pexels)

One of the less-discussed dimensions of the VibeCrime threat is the identity problem. In the traditional cybercrime model, attackers are people. People have identities. People can be tracked, profiled, and eventually caught. The entire framework of cybercrime investigation - attribution, intelligence gathering, law enforcement cooperation - is built around identifying human actors.

Autonomous AI agents don't have identities in any meaningful sense. They can spawn, clone, migrate between systems, and dissolve without leaving the kinds of traces that human operators do. An orchestration layer coordinating dozens of specialized criminal agents across hundreds of compromised systems creates an attribution nightmare that makes current challenges look quaint.

The enterprise world is scrambling to address this from the defensive side. Okta announced its "Okta for AI Agents" platform, which launches April 30 and will allow enterprises to discover, register, and govern both sanctioned and "shadow" AI agents as first-class identities. The fact that one of the world's largest identity management companies felt the need to build an entire product category for agent identity tells you everything about where the industry sees this heading.

In a recent interview with The Verge, Okta CEO Todd McKinnon was blunt: "Forty percent of our business is authenticating and validating customers, logging into customer websites and mobile apps, and this area is changing a lot with AI." The implication: if you can't distinguish between a human user and an AI agent - or between a legitimate agent and a criminal one - the entire concept of identity-based security starts to break down.

KnowBe4 researchers at RSAC 2026 went even further, arguing that AI agents themselves need security awareness training - that organizations should be testing whether their deployed AI systems can be socially engineered by adversarial agents in the same way that human employees can be tricked by phishing emails. The idea sounds absurd until you realize that modern AI agents routinely follow instructions from external sources (emails, web pages, documents) and that prompt injection - the AI equivalent of social engineering - is an unsolved problem.

The Defense Problem: You Can't Patch Fast Enough

Defending against machine-speed attacks requires machine-speed defense. Most organizations aren't there yet. (Pexels)

The VibeCrime framework presents a fundamental challenge to existing cybersecurity doctrine. Most enterprise security is built around a cycle: detect, analyze, respond, patch. Humans are in the loop at every stage. Security operations centers run 24/7 with human analysts triaging alerts, investigating incidents, and coordinating responses. The cycle time - from detection to response - is measured in hours or days.

Agentic cybercrime operates on a cycle time of seconds. An autonomous system can discover a vulnerability, develop an exploit, deploy it, establish persistence, exfiltrate data, and cover its tracks in the time it takes a human analyst to finish reading a single alert. Human-speed defense cannot counter that.
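The size of that gap is easy to put a number on. The sketch below simply divides one cycle time by the other; the function name is hypothetical, and the four-hour defender cycle and 30-second attacker cycle are assumed values chosen to match the "hours" and "seconds" described above, not measurements from any of the cited research.

```python
from datetime import timedelta


def attacker_cycles_per_defender_cycle(
    defender_cycle: timedelta, attacker_cycle: timedelta
) -> float:
    """Return how many full attack cycles fit inside one defensive cycle."""
    if attacker_cycle <= timedelta(0):
        raise ValueError("attacker_cycle must be positive")
    return defender_cycle / attacker_cycle


# Assumed values: a 4-hour detect-to-respond loop versus a 30-second attack loop.
ratio = attacker_cycles_per_defender_cycle(
    timedelta(hours=4), timedelta(seconds=30)
)
print(f"attack cycles per defensive cycle: {ratio:.0f}")
```

With these assumed inputs, the attacker completes hundreds of iterations before the defender finishes one, which is the core of the argument for machine-speed defense.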

This is the argument that multiple RSAC 2026 presenters converged on: the only viable defense against machine-speed attack is machine-speed defense. AI versus AI. Autonomous defensive agents monitoring networks, detecting anomalies, and responding to threats without waiting for human approval.

But this creates its own problems. Autonomous defensive systems that can take action without human oversight introduce the same risks that autonomous offensive systems do - unintended consequences, cascading failures, and the potential for defensive agents to be manipulated or hijacked by attackers. The cybersecurity community is essentially being asked to trust AI with defense before it fully understands how AI will be used in attack.

Proofpoint's 2026 cybersecurity outlook frames this as a two-to-three-year transition window - a period of "major industry upheaval" during which organizations will have to fundamentally rethink their security architectures. The companies that complete this transition successfully will be defended. The ones that don't will be prey.

The Trend Micro research is slightly more optimistic about the timeline, but not about the outcome. "Organizations are presently in the preliminary phases of this technological evolution," the VibeCrime paper concludes. "While most cybercriminal entities continue to experiment with rudimentary AI capabilities, the cybersecurity landscape faces an impending transformation once a leading criminal organization successfully demonstrates the economic viability of fully integrated agentic systems."

Translation: the dam hasn't broken yet. But the water is rising.

What Comes After VibeCrime

The long-term implications of autonomous AI cybercrime extend far beyond the digital world. (Pexels)

The long-term scenario that the VibeCrime research points toward is one that most people haven't fully processed yet. It's not just that cybercrime gets more automated. It's that the entire concept of "criminal organization" transforms.

In the current model, taking down a cybercrime ring means identifying and arresting the human operators. In the agentic model, the human designers might create a system, deploy it, and walk away. The system continues to operate autonomously, adapting to defenses, scaling operations, and generating revenue without any ongoing human involvement. Arresting the creator doesn't shut down the operation. The agents keep running.

This has implications that extend well beyond cybersecurity. Insurance models that calculate cyber risk based on human-operated threat actors will need to be rebuilt from scratch. Regulatory frameworks that hold organizations responsible for "reasonable" security measures will need to define what "reasonable" means when the attacker is an AI system that adapts faster than any human security team can respond. International cybercrime treaties that depend on attribution - identifying which nation-state or criminal group is responsible for an attack - face an existential challenge when attacks are launched by autonomous systems operating across dozens of jurisdictions simultaneously.

And then there's the EV charging infrastructure angle, which VicOne's researchers highlighted at the same RSAC conference. If agentic AI can chain together exposed cameras, breach databases, and targeted phishing, imagine what it can do with exposed industrial control systems. EV charging stations, smart grid infrastructure, building management systems - the entire cyber-physical ecosystem becomes attack surface for autonomous agents that can discover and exploit vulnerabilities at a pace that human-operated security teams simply cannot match.

The Pwn2Own Automotive competition has already demonstrated critical vulnerabilities in EV supply equipment. Most current standards, the researchers noted, focus on individual components in isolation. The most dangerous vulnerabilities exist at the interfaces between systems - exactly the kind of complexity that AI agents are uniquely suited to discover and exploit.

The Uncomfortable Question

We built the tools. Now we're scrambling to build the defenses. The question is whether we'll be fast enough. (Pexels)

Every technological revolution in cybersecurity has followed the same pattern: the offense gets the new tool first, the defense catches up, and a new equilibrium emerges. We saw it with viruses and antivirus software. We saw it with botnets and intrusion detection systems. We saw it with ransomware and backup infrastructure.

The uncomfortable question that VibeCrime raises is whether this pattern holds when the tool is general-purpose artificial intelligence. Previous attack innovations were specific: a new type of malware, a new exploitation technique, a new distribution method. Each one required a specific defensive response. Agentic AI isn't a specific attack technique. It's a force multiplier that enhances every existing technique simultaneously while also enabling entirely new ones.

The defense, in theory, benefits from the same force multiplier. AI-powered security tools can monitor more endpoints, process more alerts, and respond to threats faster than human-operated systems. But there's an asymmetry: the attacker chooses when and where to strike, while the defender has to protect everything all the time. This asymmetry gets worse, not better, when both sides operate at machine speed.

At RSAC 2026, the overall theme was "The Power of Community" - a recognition that no single organization, no single technology, and no single approach can address the scale of the challenge. Multiple cybersecurity leaders suggested that the next two to three years will determine the equilibrium for the next decade. The organizations, industries, and governments that invest in AI-native defense now will be positioned to weather the transition. The ones that wait for the nexus event to force their hand will be fighting from behind.

The VibeCrime paper ends with a call for "security platforms designed to operate at machine speed." It's a reasonable recommendation. But underneath it is a more fundamental acknowledgment that the cybersecurity community is articulating with increasing urgency: we built the AI tools that will power the next generation of crime. The question isn't whether criminals will use them. It's whether we'll have the defenses ready before they do.

The window is closing. The agents don't sleep.
